Musings is an informal newsletter mainly highlighting recent science. It is intended as both fun and instructive. Items are posted a few times each week. See the Introduction, listed below, for more information.

If you got here from a search engine... Do a simple text search of this page to find your topic. Searches for a single word (or root) are most likely to work.

Introduction (separate page).

This page:

2018 (September-December)

December 12

December 5

November 28

November 14

November 7

October 31

October 24

October 17

October 10

October 3

September 26

September 19

September 12

September 5

Also see the complete listing of Musings pages, immediately below.

All pages:

Most recent posts

2026

2025

2024

2023:

January-April

May-December

2022:

January-April

May-August

September-December

2021:

January-April

May-August

September-December

2020:

January-April

May-August

September-December

2019:

January-April

May-August

September-December

2018:

January-April

May-August

September-December: this page, see detail above

2017:

January-April

May-August

September-December

2016:

January-April

May-August

September-December

2015:

January-April

May-August

September-December

2014:

January-April

May-August

September-December

2013:

January-April

May-August

September-December

2012:

January-April

May-August

September-December

2011:

January-April

May-August

September-December

2010:

January-June

July-December

2009

2008

Links to external sites will open in a new window.

Archive items may be edited, to condense them a bit or to update links. Some links may require a subscription for full access, but I try to provide at least one useful open source for most items.

Please let me know of any broken links you find -- on my Musings pages or any of my web pages. Personal reports are often the first way I find out about such a problem.

December 12, 2018

A record we noted earlier this year has already been broken. It's about the longest known bond between two carbon atoms.

* News story: World record for longest carbon-carbon bond broken. (D Bradley, Chemistry World, December 5, 2018.) Links to the article. The news story raises an interesting question about what kind of bond should "count". The molecule is complex, and it is not easy to see what is going on.

* Background post: The longest C-C bond (April 17, 2018). I have added a note about the new finding to that post.

December 11, 2018

Here is the basic finding, as reported in a recent article...

|

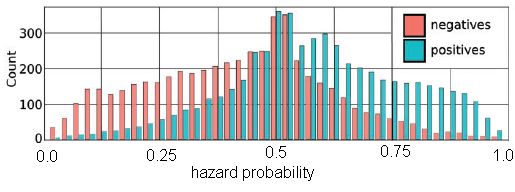

The graph shows the incidence of Parkinson's disease (PD) over time, for two groups of people.

The red curve is for people who have had their appendix removed. The black curve is for a matched control group. The people who had an appendectomy had about a 20% lower rate of PD. For the appendectomy group, the time scale is time since the operation. The controls are matched by age, sex, and location. This is Figure 1A from the article. |

It's a striking finding. What more did the scientists learn about this?

The results above are based on a huge database in Sweden. An analysis of a second group is consistent with the main finding.

Further analysis showed that the effect is greater for people in rural areas than in urban areas. If this finding holds up, it could be an interesting clue. Rural PD is more often associated with external effects such as pesticides.

The finding led the scientists to explore the human appendix. PD is associated with an increased brain level of an aggregated form of a protein called α-synuclein. The authors find that α-synuclein is abundant in the appendix -- of nearly everyone, including young people. Processing of the protein occurs there, and the aggregated form is found.

That is, apparently we all have -- in our appendix -- significant levels of the protein considered a key to PD. Removal of the appendix seems to reduce the chance of certain types of PD.

What's the connection? It's too early to say, but one must wonder whether the appendix is a source of the protein form that causes PD. Clearly, PD is a more complex disease than we used to think; involvement of non-brain regions, including the GI tract, is now accepted. The work here may make that connection even more important. Further work is needed!

We also note that other work has not found an association between the appendix and PD. The authors here suggest that their study is the best yet, because of the nature of the database, including its long time span. The disagreement between studies needs to be resolved.

News stories:

* Appendix identified as a potential starting point for Parkinson's disease -- Appendix acts as a reservoir for disease-associated proteins; appendectomy lowers the risk of developing Parkinson's. (Science Daily, October 31, 2018.)

* The potential benefits of missing an appendix. (The Science of Parkinson's, November 1, 2018.) Long, but very good. It explores many aspects of the topic, and tries to present it at a level suitable for the general audience.

The article, which may be freely available: The vermiform appendix impacts the risk of developing Parkinson's disease. (B A Killinger et al, Science Translational Medicine 10:eaar5280, October 31, 2018.)

More about the appendix: Appendix. Yours. (December 11, 2009)

More about PD:

* Metabolism of the Parkinson's disease drug L-DOPA by the gut microbiota (July 26, 2019).

* Possible role of gut bacteria in Parkinson's disease? (March 17, 2017).

Is there a connection between the work in the two posts just noted and the current work? We can only note the question for now.

Also see... Involvement of the non-pregnant uterus in brain function? (February 11, 2019).

My page for Biotechnology in the News (BITN) -- Other topics includes a section on Brain. It includes a list of related posts.

December 10, 2018

There are various ways to organize a post. Commonly, one does things in a simple order, such as: a problem, a test, conclusions. But sometimes it seems good to work backwards, starting at the end: what are the conclusions, then how did we get to them. For the current topic, we start at the back end.

Look at the first movie file at the following site (which is also listed below as a news story): Image of the Day: Swish Swish. (It's a large file; be patient. And don't close that tab when done; we'll make further use of the page in a moment.) Now archived.

If you have trouble with that site, or just want a quick preview, here is a series of stills from the movie file [link opens in new window]. The three stills there were taken 0.33 second apart. This is Figure 1F from the article.

What is that thing? The authors, who are engineers, found similar devices on a variety of animals at their local zoo. They developed a mathematical model to describe the motion of such a device. It behaves as a double pendulum. To see what this means, see the second movie file on that page introduced above.

What does it do? It is likely a device to repel mosquitoes (and other flying insects). Sometimes, it may directly hit one -- the swat phase of its action. Beyond that, its motion, at about the same speed as the bugs, will severely disrupt the local environment, effectively shooing the bugs away; that's the swish phase. The role of that gentle breeze is the big finding here.

The work includes recording data from nature (e.g., from the wilds of Zoo Atlanta), as seen in the top movie. The authors report that they "were harassed by many insects while filming" (first paragraph of Results section). Theoretical analysis (presumably in the comfort of an air-conditioned lab free of flying insects) led to the construction of an insect-repelling device based on the mammalian tail. It works -- and is more energy-efficient than a commercial wind-based mosquito-repelling device.

News stories:

* Image of the Day: Swish Swish. (K Grens, The Scientist, October 16, 2018. Now archived.) This is the site used above as the source for the two movie files. There is only a brief text beyond those movies.

* Swishing tails guard against voracious insects with curtain of breeze. (EurekAlert!, October 15, 2018.) This appears to be a freely-available version of the news story from the journal, listed below.

* How Animals Use Their Tails to Swish and Swat Away Insects -- Findings could help engineers build better devices to repel mosquitoes. (J Maderer, Georgia Tech, October 16, 2018.) From the University.

* News story accompanying the article: Tails guard against voracious insects with curtain of breeze. (K Knight, Journal of Experimental Biology 221:jeb188888, October 2018.) See the EurekAlert! news story, above, for a freely available version of this item.

* The article: Mammals repel mosquitoes with their tails. (M E Matherne et al, Journal of Experimental Biology 221:jeb178905, October 2018.)

More from the same lab:

* How a cat tongue works (March 19, 2019).

* What is the proper length for eyelashes -- and why? (March 16, 2015).

More about tail functions: An animal that walks on five legs (February 3, 2015).

Previous post about elephants: Carbon-14 dating of confiscated ivory: what does it tell us about elephant poaching? (February 10, 2017).

Other posts about repelling mosquitoes include:

* What is the purpose of the cat response to silver vine or catnip? (March 22, 2021).

* Can chickens prevent malaria? (August 12, 2016).

* A laser-based missile-defense system to bring down mosquitoes (May 18, 2010).

More about dealing with mosquitoes... Blocking eggshell formation in mosquitoes? (February 8, 2019).

There is a section of my page Biotechnology in the News (BITN) -- Other topics on Malaria. It includes a list of related Musings posts, including posts more generally about mosquitoes.

December 7, 2018

Making vaccines against influenza (flu) is a messy issue. It is a guessing game each year for vaccine makers to choose a small number of strains to target in the new vaccine.

A recent article reports a new approach to making a "universal" flu vaccine. The work was done with the help of some llamas.

The following figure shows some information about the antibodies used here. It's a complex figure, but we will focus on one part of it, which is rather clear.

The graph shows the effectiveness of several antibodies against various flu virus strains in a simple lab test.

Effectiveness is shown on the y-axis as the value for IC50. IC stands for inhibitory concentration; the 50 means it is the concentration that inhibits the virus by 50%. Lower is better; a low value of IC means that less antibody is needed to inhibit the virus.

Look at the right half of the graph -- the part with reddish symbols. Those symbols are for five strains of type B flu virus. (They are listed at the right, but don't worry about that.) Three antibodies are tested against the red-symbol flu viruses; they are shown along the x-axis (SD83...). The main observation is that the third (right-hand) antibody is the best. What is that third antibody? It is a combination of the first two; look at the names.

The left side of the graph shows the same kind of experiment for antibodies against a collection of type A flu viruses. The big picture is about the same, though the data set is obviously more complex.

This is Figure 1 from the article.
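To make the IC50 idea concrete, here is a minimal Python sketch. It assumes a simple Hill-type dose-response curve, which is a common textbook model but not necessarily how the authors fit their data. The function name and the IC50 values are hypothetical, chosen only for illustration.

```python
def fraction_inhibited(concentration, ic50, hill_slope=1.0):
    """Fraction of virus activity inhibited at a given antibody concentration.

    Assumes a Hill-type dose-response curve. This is an illustrative model,
    not the fitting procedure used in the article.
    """
    if concentration <= 0:
        return 0.0
    ratio = (concentration / ic50) ** hill_slope
    return ratio / (1.0 + ratio)


# Hypothetical IC50 values (arbitrary units), for illustration only.
weak_antibody_ic50 = 100.0
strong_antibody_ic50 = 1.0

# At a concentration equal to its own IC50, an antibody inhibits by 50%.
print(fraction_inhibited(weak_antibody_ic50, weak_antibody_ic50))
print(fraction_inhibited(strong_antibody_ic50, strong_antibody_ic50))

# At the same dose, the antibody with the lower IC50 inhibits more.
dose = 10.0
print(fraction_inhibited(dose, weak_antibody_ic50))
print(fraction_inhibited(dose, strong_antibody_ic50))
```

The last two lines show why "lower is better": at one and the same dose, the antibody with the lower IC50 blocks much more of the virus.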

What's going on? And what did the llamas do?

The llamas made the antibodies. More specifically, the llamas made the first two antibodies. The third, the combination antibody, was made in the lab by fusing the genes for the two individual antibodies. The new gene made a double-length antibody that is effectively the two single antibodies joined end-to-end into a single long protein.

In the work above, the scientists made two of these double-length antibodies: one combining two antibodies against type A flu strains (left) and one combining two antibodies against type B flu strains (right).

If combining two antibodies into a single long chain is good, as the graph above suggests, why not combine all four of them into one extra-long protein? They did.

Here is a test of the effectiveness of such an antibody...

In this work, a 4-part combination antibody was tested against several flu strains -- in mice. It's a model system for real flu infections. The scientists measured the survival of the flu-infected mice over time, after various antibody treatments.

Results for two flu strains are shown here. For both viruses, the highest two antibody doses protected the mice completely, or nearly so. The low dose provided poor protection, with survival not much different from the "vehicle" treatment.

What's important here is that the two viruses are very distinct, and yet we have a "single" antibody that is protecting against both of them about equally well. There are more viruses in the full figure. The general pattern holds. This 4-part antibody is effective against a wide range of flu strains.

In the tests shown here, the mice were given the antibody directly, by injection. In other tests, they were given a constructed virus that carried the gene for the new antibody.

This is part of Figure 4 from the article.

The results suggest that the scientists have made progress toward a universal flu vaccine: a "single" antibody that is widely effective against a variety of flu strains.

The work was done starting with antibodies from llamas, as we noted earlier. Why llamas? Those who recall the structure of common antibodies, such as those from humans and mice, may find this work extraordinary. Antibodies are complicated. Each antibody has four chains, including two types of chains. The active site is formed by multiple chains. Given that complexity, making a composite antibody with multiple active sites would be challenging.

But llamas don't make antibodies like that. Llamas (and more broadly, the camel family) make single-chain antibodies -- a single chain that folds up to make the active site. Making an extra-long gene that codes for two llama antibodies end-to-end leads to a long protein that contains two active sites. The two antibody parts, or domains, act more or less independently. That allows the construction of composite antibodies that carry domains against a variety of flu viruses.

The starting llama antibodies had rather broad activity (top figure). In fact, llama antibodies against flu virus tend to be of broader specificity (than the usual antibodies), probably because of their smaller size.

The antibody parts of the composite antibody actually act better than independently. As the top figure shows, the composite antibody is not just as good as its components, but better. The components act synergistically.

No one knows why llamas make single-chain antibodies. But it certainly makes combining them genetically much easier. And it seems to offer a novel pathway to making a universal flu vaccine.

News stories:

* Llama antibodies could be key to universal flu vaccine. (T Puiu, ZME Science, November 2, 2018.)

* Tethered antibodies present a potential new approach to prevent influenza virus infections. (Science Daily, November 5, 2018.)

* Researches develop new protein for prevention of influenza virus infection. (L Heilesen, Aarhus University, November 2, 2018.) From the current institution of the lead author. Excellent overview.

The article: Universal protection against influenza infection by a multidomain antibody to influenza hemagglutinin. (N S Laursen et al, Science 362:598, November 2, 2018.)

Recent posts about flu vaccines or drugs:

* Baloxavir marboxil: a new type of anti-influenza drug (September 14, 2018).

* What's wrong with the flu vaccine? (February 16, 2018).

More...

* A treatment for botulism -- using a modified botulinum toxin (February 8, 2021).

* A universal flu vaccine: phase I trial (February 1, 2021).

* Why the flu vaccine wanes: role of the bone marrow plasma cells (January 2, 2021).

Many posts on various flu issues are listed on the supplementary page: Musings: Influenza.

A post about a similar problem with HIV: Should we make antibodies to HIV in cows? (November 14, 2017).

There are no previous posts about llamas, or about single-chain antibodies.

Most recent post about camels: Prions in camels? (June 18, 2018). Links to more.

December 5, 2018

1. Light pollution. A news feature, with a good overview...

* News story: The Vanishing Night: Light Pollution Threatens Ecosystems -- The loss of darkness can harm individual organisms and perturb interspecies interactions, potentially causing lasting damage to life on our planet. (D Kwon, The Scientist, October 2018, page 36.) Now archived.

* Background post: A world atlas of darkness (July 29, 2016). I have noted the current article there.

2. High lead content in spices, herbal remedies, and ceremonial powders. An intriguing little article, stimulated by finding that in one (US) county the blood levels of lead in children were not following the usual decreasing trend. Some of the products studied here are not intended for internal consumption, but people -- especially children -- may ingest them anyway.

* News story: Some spices may be a source of lead exposure in kids, study finds. (M A Schaefer, Medical Xpress, November 28, 2018.) Oddly, it does not link to the article, which is freely available: Lead in Spices, Herbal Remedies, and Ceremonial Powders Sampled from Home Investigations for Children with Elevated Blood Lead Levels - North Carolina, 2011-2018. (K A Angelon-Gaetz et al, Morbidity and Mortality Weekly Report (MMWR) 67:1290, November 23, 2018.)

December 4, 2018

A new article suggests that one feature of a restaurant inspection system has resulted in reduced levels of Salmonella cases.

Part of the analysis seems questionable, but it is still interesting and worth noting.

The article deals with two features of the restaurant inspection system in New York City (NYC). A scoring system itself was implemented in 2005. Then, starting in 2010, the results of the scoring system were displayed at the restaurant entrance in the form of a letter grade.

The scoring and posting features are specifically for the city. Thus the authors compare data for NYC with the rest of the state (NYS, New York state).

The main analysis in the article is summarized in the following figure...

The graph shows the number of reported cases of Salmonella (per 100,000 population; y-axis) vs year (x-axis). Data is shown for two regions: NYC (solid symbols and lines), and NYS (open symbols and dashed lines).

The vertical dotted lines break the graph into three time periods. The break points correspond to the start of the scoring and posting steps.

To start, compare the results for time periods 2 and 3 on the graph. For both regions, the rate of Salmonella cases was approximately constant during time period 2. The rate was significantly higher in NYC. Now look at time period 3, when the posting of letter grades was begun in NYC. You can see that the rate in NYC starts to decline. In fact, by the end of the period shown, the Salmonella rate in the city is about as low as in the rest of the state.

This is slightly modified from the Figure in the article. I added the numbers 1-3 to make it easier to refer to the three time periods on the graph.

The comments above might suggest that the new policy, of posting the letter grades from restaurant inspections, led to a decline in the rate of Salmonella cases. That is the point the authors want to make.

However, the full graph is more complex. Look at time period 1. There is a jump -- a discontinuity in the curves -- between time periods 1 and 2. Now, that can happen. Perhaps there were changes in inspection or reporting procedures. Further, in time period 1 the rate was declining faster in NYC than in the rest of the state. Then, the rates were not only higher but also constant for 5 years in period 2. Period 2 is when the inspection scoring was begun; that was in NYC, but the curves change for both regions.

It is hard to know what to make of all this. It may be that the change between periods 2 and 3 is indeed what is important, and that posting of inspection grades in NYC led to a decline in Salmonella cases. That's a useful hypothesis, but the case here is not convincing. Not yet.

The authors briefly address the concern I have raised. Their explanations are not convincing; it may not even be possible to resolve the questions, which involve old data from public health records. My point is to raise the concern, and make clear that the article is interesting, but not necessarily the last word.

News stories:

* Letter grades for restaurants helped reduce Salmonella illnesses in New York City. (Food Safety News, November 23, 2018.)

* Letter Grade Program Linked to Declines in Salmonella Infections in New York City. (C Plain, University of Minnesota School of Public Health, November 21, 2018.) From the university.

The article, which is freely available: Restaurant Inspection Letter Grades and Salmonella Infections, New York, New York, USA. (M J Firestone & C W Hedberg, Emerging Infectious Diseases 24:2164, December 2018.)

Also see:

* Tracking food poisoning through online reviews (July 7, 2014).

* Are government safety inspections worthwhile? (June 12, 2012).

My page Internet resources: Biology - Miscellaneous contains a section on Nutrition; Food safety. It includes a list of related Musings posts.

December 3, 2018

Children acquire language skills from the environment -- from those around them. Exactly how they do this is not at all clear.

It is a common observation that the language skills of children correlate with socioeconomic status (SES). The nature of the connection is not clear.

A recent article offers a new clue to how this may work. It provides evidence that conversation plays a key role in brain development.

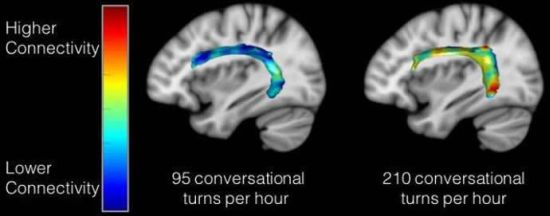

The following figure shows some data...

Each graph shows a measure of brain function (y-axis) plotted against a measure of the child's conversational experience (x-axis). The children in this work were ages 4-6.

Each graph shows a small but significant correlation. Importantly, this is the strongest correlation seen in the analyses. It holds independently of SES and the simple amount of adult speech. Remember, language is a complex trait; finding a factor that is one significant contributor can be a useful step.

So what are these graphs showing? The x-axis is "conv[ersational] turns". It is based on recordings made in the natural environment at home; the recordings were then analyzed to find how many times the child and adult switched roles as speaker and listener; that is a conversational turn. The y-axes report two brain scan measurements, each showing connectivity in certain regions.

This is Figure 2b from the article.
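The idea of counting conversational turns can be sketched in a few lines of Python. This is only a toy version, assuming we already have a time-ordered list of who spoke each utterance. It is not the LENA software's actual method, which works from audio and would also have to deal with timing and speaker identification. The function name and the sample data are hypothetical.

```python
def count_conversational_turns(speakers):
    """Count switches between speakers in a time-ordered list of utterances.

    `speakers` holds one label per utterance, e.g. "adult" or "child".
    Consecutive utterances by the same speaker do not add a turn.
    Toy illustration only; timing between utterances is ignored.
    """
    turns = 0
    for previous, current in zip(speakers, speakers[1:]):
        if previous != current:
            turns += 1
    return turns


# Hypothetical snippet of a home recording, already labeled by speaker.
utterances = ["adult", "child", "child", "adult", "adult", "child"]
print(count_conversational_turns(utterances))  # prints 3
```

Note that an adult who talks a lot while the child stays silent scores zero here. That is the point of the measure: it tracks back-and-forth exchange, not the sheer amount of adult speech.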

The following figure maps the results onto the brain...

The colored band across each brain shows the degree of connectivity -- between two regions associated with language.

The brain on the left is for a child who showed a relatively low degree of conversation; the one on the right is for a child with a high degree of conversation. The band on the left is mostly blue, showing low connectivity; see the color key at the left. The band on the right has various "warmer" colors, showing a higher degree of connectivity.

This is the figure from the Neuroscience News story listed below. It seems to be the same information as in Figure 2c of the article. Assuming that is so, the two samples shown here are those circled in the top graphs.

That's it. That's the basic finding.

The authors also note that there was a modest but significant correlation between the child's conversational turns score and their score on a standard test of verbal skill.

There is no experimental manipulation or intervention in this work. But there could be; the work could lead to an intervention intended to facilitate a child's development of language skills. Take a group of children and increase their "conversation". Does that affect their language development? Seems practical to try, and it could be worthwhile.

Reading to the kids may be good; engaging them in lively conversation may be even better.

News stories:

* Adult-Child Conversations Strengthen Language Regions of Developing Brain. (Neuroscience News, August 14, 2018.)

* First paper published linking conversational turns with brain structure. (LENA, August 13, 2018.) LENA = Language Environmental Analysis; LENA software was used in the work.

The article: Language Exposure Relates to Structural Neural Connectivity in Childhood. (R R Romeo et al, Journal of Neuroscience 38:7870, September 5, 2018.)

Also see:

* Right-hemisphere processing of language in children (October 3, 2020).

* If you are talking with someone, how can you tell if they are paying attention? (May 8, 2017).

* Speech: Taking turns (August 17, 2011).

December 1, 2018

A recent article reports a set of measurements of the size of atomic nuclei; the purpose is to test a new model that allows prediction of the size.

Specifically, the scientists measured the size of the nucleus for 31 isotopes of a single element -- 31 different atomic nuclei, differing only by one neutron from one step to the next.

Cadmium (Cd; element #48). In this work, the scientists measured the size of the nucleus for 31 isotopes of Cd, from mass number 100 (52 neutrons) to 130 (82 neutrons).
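The numbers in that paragraph are easy to check: the neutron count is the mass number minus the number of protons. Here is a minimal Python sketch of the arithmetic; the function and variable names are just for illustration.

```python
CADMIUM_PROTONS = 48  # atomic number of Cd, as given in the post


def neutron_count(mass_number, protons=CADMIUM_PROTONS):
    """Neutrons = mass number (protons + neutrons) minus protons."""
    return mass_number - protons


mass_numbers = list(range(100, 131))  # A = 100 through 130, inclusive
neutrons = [neutron_count(a) for a in mass_numbers]

print(len(mass_numbers))          # prints 31
print(neutrons[0], neutrons[-1])  # prints 52 82
```

So the 31 isotopes run from 52 to 82 neutrons, each step adding a single neutron.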

The results...

The graph shows the nuclear radius (Rc -- we'll explain the subscript later) on the y-axis and the mass number (A) on the x-axis. Note that the units for radius are femtometers (1 fm = 10⁻¹⁵ m). You might also note that their measurements are to the hundredth of a fm (though no error bars are shown here).

Look at the black line, with dots. The dots are the data points for the measurements. You can see that the nuclear radius increases, fairly smoothly, from about 4.45 fm to nearly 4.7 fm as we add 30 neutrons, going from A = 100 to 130. If you look carefully you can see a zig-zag; there is a regular effect of odd vs even mass numbers. We won't go into that further, but it is a well-known effect; that they can measure it is a testament to the quality of these measurements.

Cadmium has lots of isotopes. The Wikipedia page lists isotopes for all mass numbers from 95 to 132. There are two isomers for some mass numbers, even three isomers in one case. Natural Cd contains eight isotopes at an abundance of (about) 1% or more. Six of those are stable; two are ultra-long-lived radioactive isotopes.

This is slightly modified from Figure 2 from the article. I have removed an inset. (The vertical line, labeled N = 82, should go to A = 130 on the x-axis.)

There's more. Three more lines -- for three theoretical predictions of the radius. All of them roughly agree with the data. But the green dashed line ("Skyrme") is clearly the worst. It's more subtle, but the blue line [Fy(Δr)] is the best.

That blue line model [Fy(Δr)] is fairly new. The authors published it not long ago. They worked it out, building on earlier models, but carefully developing it to explain the size of nuclei for calcium isotopes.

Having developed the new model using one data set, it's time to test it on something different. That's the point of the Cd work. And the conclusion here is that the new model passes this new test quite well: it is the best fit to the data.

We won't try to explain the model here. The development of the model is mainly in earlier articles. The article here is mainly about the experimental measurements.

What are those measurements? How does one measure the nuclear radius? It is based on measuring something familiar: electronic transitions, the kind that one measures with an ordinary spectrophotometer. The point is that the exact energy of an electronic transition depends on other charges that may be nearby. In particular, it depends on the nuclear charge. The magnitude of the nuclear charge is the same for all isotopes of a single element. However, the charge density is lower for bigger nuclei (heavier isotopes). That's what they measure here: the effect of nuclear charge density, reflecting the nuclear radius, on electronic transitions. This is a special high-tech spectrophotometer, capable of extremely precise measurements. But the idea is relatively simple.

The method measures the size of the nucleus as reflected by its charge distribution. How this relates to the "physical" size of the nucleus overall is a separate question. We noted above that the graph axis is labeled Rc -- for the "charge radius".

Look carefully at that graph again, and there is a little teaser. The blue line is for the new model. Look what that line does just past the last data point. There is a steep rise in the predicted values for R. Why? It has to do with the shell structure of the nucleus. Nuclear particles have a shell system of energy levels, rather like that for electrons (s, p and so forth). At A = 130, a shell is filled. Going further opens a new shell; that's why there is an abrupt change in the slope of the curve. Will the scientists be able to measure this? There are two heavier isotopes; they have half-lives shorter than any measured so far.

It's an interesting article just based on what they did. It may also lead to a better understanding of atomic structure, even if that part is hard for us to appreciate.

News story: Towards a global model of the nuclear structure -- Researchers confirm theory by measuring nuclear radii of cadmium isotopes. (Technische Universität Darmstadt, September 5, 2018. Now archived.) From one of the (many) institutions involved.

The article, which is freely available: From Calcium to Cadmium: Testing the Pairing Functional through Charge Radii Measurements of ¹⁰⁰⁻¹³⁰Cd. (M Hammen et al, Physical Review Letters 121:102501, September 4, 2018.)

Even smaller radii: The proton -- and a 40 attometer mystery (March 17, 2013).

More cadmium: Unusual synthesis of cadmium telluride quantum dots (February 15, 2013).

My page of Introductory Chemistry Internet resources includes a section on Nuclei; Isotopes; Atomic weights. It includes a list of related Musings posts.

* * * * *

Update September 2023...

Scientists have made the heaviest known isotope of oxygen: O-28, with 20 neutrons. It's not very stable; lifetime is about 10⁻²¹ seconds - similar to that for O-27, also made in the new work. Its extreme instability was somewhat surprising. The combination of 8 protons and 20 neutrons is thought to be "doubly magic", which should lead to greater stability. Once again, new measurements illustrate that our understanding of nuclear structure is incomplete. (How does one make O-28? Make F-29, and kick out a proton. F-29 is relatively stable, with a half-life of about a millisecond.)

* News story: Exploring Light Neutron-Rich Nuclei: First Observation of Oxygen-28. (Tokyo Institute of Technology ("Tokyo Tech"), August 31, 2023.)

* News story accompanying the article: Nuclear physics: Heaviest oxygen isotope is found to be unbound -- The isotope oxygen-28 is expected to be 'doubly magic', with strongly bound neutrons and protons in its nucleus. Experiments now reveal that it exists in an unbound state - casting doubt on its magic status. (Rituparna Kanungo, Nature 620:958, August 31, 2023.)

* The article: First observation of ²⁸O. (Y Kondo et al, Nature 620:965, August 31, 2023.)

November 28, 2018

Fungal confusion. What's the difference between Candida krusei and Pichia kudriavzevii? None, according to a recent article; just two different names for the same organism. Such confusion is not unusual with the fungi. Organisms -- and names -- have accumulated over the ages. If the same organism is isolated and characterized by different people in different contexts, the connection may not be noticed. But in this case, we have a common industrial fermentation organism, usually regarded as safe, that is actually a pathogen -- of some importance.

* News story: Two Fungal Species - One Pathogenic, One Benign - Are Actually the Same. (S Charuchandra, The Scientist, July 19, 2018.) Now archived.

* The article, which is open access: Population genomics shows no distinction between pathogenic Candida krusei and environmental Pichia kudriavzevii: One species, four names. (Alexander P Douglass et al, PLoS Pathogens 14:e1007138, July 19, 2018.)

November 27, 2018

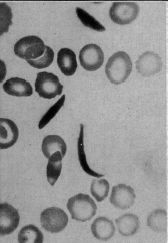

A blood sample showing some unusually shaped cells.

Magnification not stated. Human red blood cells are typically 6-8 micrometers diameter. This is the upper left part of the figure from the article. (The figure is not numbered in the original article. It is labeled as Figure 1 in the reprinted version.)

That's from a 1910 article. The actual work, on Walter Clement Noel's blood, was done in 1904. The article described the unusual cells, as part of the patient's examination. But it offered no diagnosis or explanation.

The article is now recognized as the first report of what we now call sickle-cell anemia (SCA) or sickle-cell disease -- just over a century ago.

SCA is now well known. It was the first genetic disease to be characterized at the molecular level: it is due to a mutation that results in a single amino acid change in one of the hemoglobin subunits. The gene for SCA is prevalent in certain populations with a high incidence of malaria, such as parts of Africa. (Noel, from the Caribbean island of Grenada, was of African descent.) Having a single copy of the SCA allele protects against malaria; having two copies leads to a serious anemia. As much as we understand what is behind SCA, there is still no good treatment.

The news magazine "The Scientist" recently noted this 1910 article in their historical section "Foundations". Their story is worth a look. The article itself is freely available, in a reprinted form.

"News" story: Charting Crescents, 1910 -- James Herrick, a Chicago doctor, was the first to describe sickled red blood cells in a patient of African descent. (S Charuchandra, The Scientist, October 2018, page 68.) Now archived.

The article: Peculiar elongated and sickle-shaped red blood corpuscles in a case of severe anemia. (J B Herrick, Archives of Internal Medicine 6:517, November 1910.) A caution... Some of the language in the article reflects 1910 culture.

The article was reprinted in the Yale Journal of Biology and Medicine 74:179, May 2001. That reprint is freely available at PubMed Central: YJBM reprint of the article, freely available. The first page contains a note about the reprint, and also includes references to other historic articles about SCA.

An article about the article: Herrick's 1910 Case Report of Sickle Cell Anemia -- The Rest of the Story. (T L Savitt & M F Goldberg, JAMA 261:266, January 13, 1989.) The original article does not identify the patient, following common medical practice. However, given the historical importance of the article, others have uncovered the story. This article puts the original article into both medical and social context.

More about sickle-cell disease:

* Reactivating fetal hemoglobin production to treat β-hemoglobin problems - I (February 15, 2021).

* Sickle cell disease: a step toward treatment by activation of fetal hemoglobin (October 29, 2011).

A post that connects hemoglobin and malaria: Malaria and bone loss (September 10, 2017).

More about blood cells: Progress toward a universal source for red blood cells, avoiding the need to match blood type (February 23, 2021).

There is a section of my page Biotechnology in the News (BITN) -- Other topics on Malaria. It includes a list of related Musings posts.

My page Internet resources: Miscellaneous contains a section on Science: history. It includes a list of related Musings posts.

November 26, 2018

The effects of climate change can seem rather distant and abstract. Now, an international team of scientists, from institutions including Peking University, University of Cambridge, and University of California, offer an analysis that tries to make climate change more relevant to the average person: the effect on the price of beer.

Not interested in the beer? Take it as an example of a specific consumer product, one from the "luxury" category.

Here is the connection...

- Climate change will lead to more extreme weather.

- That will reduce the yield of barley, a key ingredient of beer.

- That will make beer more expensive.

- That will reduce consumption.

Computers already know a lot about climate change models and the effect on weather. Give them a few more numbers, and they figure out the rest. The article reports the impact of climate change on beer price and consumption, for various standard climate change models.

Here are some examples of the findings...

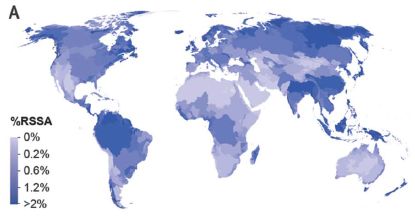

The figure shows two measures of the effect of climate change during this century. One is the change in the price of beer (part g; left side); the other is the resulting change in consumption (part k; right side).

The scientists focused on 26 countries, chosen as important in the beer or barley trades; the results are shown above for the top ten countries by each criterion. (Data for other countries was lumped into regional groups, and the results are shown in the Supplementary Information.)

The numbers on the graph are changes. Compared to what? Figure 5 of the article gives some baseline data. For example, the price of beer in Ireland was (US) $2.51 (in 2016). And the consumption (per capita) in the Czech Republic was 274 bottles (2011).

The numbers at the left are x-values for the country. For example, +1.70 at the upper left is the x-value for Ireland; read the bar length against the x-axis.

The unit of beer here is the 500 mL bottle. That is about one pint (depending on the country), or 16 fluid ounces.

The world's #1 country for amount of beer consumed? China, at 48.8 billion liters (2011). But there are a lot of people there, and it is not in the top-10 by per capita consumption.

The graphs shown here are for one specific climate change model, called rcp6.0. (That's the model that predicts a global temperature increase of ~5 °C by 2100.) The article includes similar analyses for other models; the general message is the same.

This is part of Figure 4 from the article. I modified the header for part k, to make it clear that the numbers are per capita.
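For readers who want to play with the numbers quoted for the figure, here is a minimal sketch in Python. It converts the 500 mL bottle into other units, turns the Czech per capita figure into liters, and adds the Ireland price change to its baseline. The function names are made up for this illustration. The last step assumes that the +1.70 value is in US dollars per 500 mL bottle; the text above does not state the unit, so check the figure in the article before relying on it.

```python
# Standard volume conversion factors (mL per unit).
ML_PER_US_FL_OZ = 29.5735       # one US fluid ounce
ML_PER_US_PINT = 473.176        # one US pint (16 US fluid ounces)
ML_PER_IMPERIAL_PINT = 568.261  # one imperial pint (UK, Ireland)

BOTTLE_ML = 500.0  # the unit of beer used in the article's figure


def bottle_in_us_fl_oz(bottle_ml=BOTTLE_ML):
    """Size of one bottle, in US fluid ounces."""
    return bottle_ml / ML_PER_US_FL_OZ


def bottle_in_pints(bottle_ml=BOTTLE_ML):
    """Size of one bottle, as (US pints, imperial pints)."""
    return bottle_ml / ML_PER_US_PINT, bottle_ml / ML_PER_IMPERIAL_PINT


def bottles_to_liters(bottles, bottle_ml=BOTTLE_ML):
    """Convert a count of bottles into liters."""
    return bottles * bottle_ml / 1000.0


def projected_price(baseline_usd, change_usd):
    """Baseline price plus the plotted change.

    ASSUMPTION: the plotted change is in USD per 500 mL bottle.
    """
    return baseline_usd + change_usd


if __name__ == "__main__":
    us_pints, imperial_pints = bottle_in_pints()
    print(f"500 mL = {bottle_in_us_fl_oz():.1f} US fl oz")
    print(f"500 mL = {us_pints:.2f} US pints = {imperial_pints:.2f} imperial pints")
    print(f"Czech Republic: 274 bottles = {bottles_to_liters(274):.0f} L per person")
    print(f"Ireland: ${projected_price(2.51, 1.70):.2f} per bottle (if the assumption holds)")
```

The pint conversions show why "about one pint" depends on the country: the bottle is slightly more than a US pint, but noticeably less than an imperial pint.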

As we noted at the start -- and as the authors emphasize -- the point here is to do an analysis of the effect of climate change for something that people can relate to. Climate change is predicted to lead to more extreme weather events. That will lead to more extreme wildfires. That's serious, but as presented in articles, it's rather abstract. Even with our recent devastating wildfires here in California, it can be hard to make the connection between the big issue of climate and a specific fire. The price of beer is simple, and visible every day.

Will consumers make the connection between the increased beer prices they see and climate change? That's not at all obvious. After all, price increases are common. What the article does is to describe the effects of climate change using a common item of commerce, where people can appreciate the prediction.

The project is not as simple as it may sound. Barley is a complicated issue. It is grown in selected regions; the article includes maps. It is used to make beer, and for animal feed and human food. The relative roles of those uses vary widely. As examples, the leading countries for specific uses are... South Africa uses 94% of its barley for beer. France uses 91% for animal feed. India uses 77% for human food. (Those numbers are from Table SI-1, from the Supplementary Information file accompanying the article.) The modeling here takes into account all those local differences in production and use, and makes assumptions about the future.

News stories:

* Climate change is about to make your beer more expensive -- Extreme weather events are expected to reduce global barley production. (M Warren, Nature News, October 15, 2018.) In print, with a different title: Nature 562:319, October 18, 2018.

* Beer shortages? Study reveals climate change could affect global beer supply. (FoodIngredientsFirst, October 16, 2018.)

The article: Decreases in global beer supply due to extreme drought and heat. (W Xie et al, Nature Plants 4:964, November 2018.)

A recent post about the effects of climate change: Climate change and food insecurity (November 11, 2018).

More... A thermotolerant coffee plant, with high quality beans (June 8, 2021).

More about beer: The history of brewing yeasts (October 28, 2016).

November 16, 2018

The Hox family of genes code for body patterns. Perhaps most famously, one Hox mutation in fruit flies leads to there being an extra pair of legs -- up on the head where the antennae should be. Good legs, wrong place. Hox genes are widespread, occurring in nearly all higher animals, both vertebrate and invertebrate.

A recent article reports a new example of Hox genes providing body plan information.

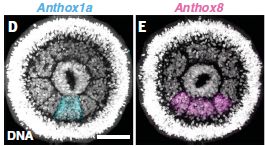

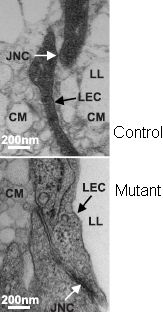

The first figure provides some background. It shows where two of the Hox genes are expressed.

In part D (left), you can see that there is a signal (blue) in the bottom segment.

In part E (right), there is a signal (pink) in the bottom three segments.

What does this mean? It may not be very clear, but there is a ring of eight segments. In the experiment for Part D, the sample was stained to show the expression (messenger RNA) of the Hox gene anthox1a. Part E is the same idea, but for the gene anthox8.

The scale bar is 50 µm.

This is part of Figure 1 from the article.

That tells us something about where the genes are expressed, but doesn't say anything about what they do. Now...

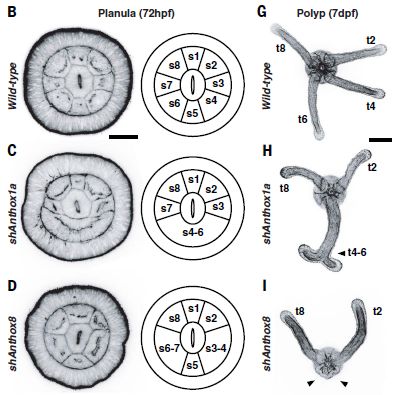

This figure shows three rows of information. The top row is for the wild type animal. The next two rows are for experiments in which the function of one Hox gene has been disrupted; the two rows examine the two Hox genes discussed in the first figure.

Let's start with the wild type, as the reference point. Top row (parts B and G). The first image (left) shows the same structure seen above. Next to it is a diagram, showing the eight segments -- numbered. At the right is a photo of another stage of the animal. There are four tentacles; they are associated with the four even-numbered segments.

The second row (parts C and H) is for a case where the anthox1a gene function has been disrupted. This is the gene shown above to be active in the bottom segment (which we now call s5). You can see that segment s5 is disrupted; s4-s6 now all seem to be one big segment. At the right, you can see that we no longer have the expected two tentacles from this area. Instead, there is one tentacle, with an unusual terminal doubling.

The third row (parts D and I) shows the effect of disrupting anthox8 function. This Hox gene is normally expressed in segments s4-s6. Disrupting this gene causes loss of segment boundaries -- again right at the edges of the segments where it should be expressed. And the tentacles that should develop from segments s4 and s6 are both missing.

The scale bar is 50 µm on the left, and 100 µm on the right.

The labels hpf and dpf (at the top) mean hours or days post fertilization.

In these experiments, gene function was disrupted by adding an RNA that interfered with messenger RNA function. It is called short hairpin RNA. The "sh" on the labels (at the left) stands for short hairpin.

This is part of Figure 2 from the article.

Summarizing... Two Hox genes are shown to be expressed in specific locations; they affect both segment and tentacle formation in the region. The article contains such analyses for two additional Hox genes; they fit the general picture discussed here.

Hox genes affecting the body pattern. Just as we said at the top. Why, then, is this of special interest? The animal here is a sea anemone. Phylum Cnidaria; the jellyfish and corals are among its more famous members. The simplest animal in which Hox genes have been shown to affect body plan. In fact, Cnidaria are one of the simplest animal groups there is. Hox genes, with a role in specifying the body plan, have been in the animal kingdom since almost the beginning.

The animal here is the sea anemone Nematostella vectensis.

The life cycle is shown in Figure 1A of the article. Shown above are the larval stage, called a planula, and the polyp stage. The news story listed below has a nice picture of the adults.

* News story: Ancient past of a body plan code probed -- Researchers identify the function of Hox genes in non-bilaterally symmetrical animals. (Science Daily, September 27, 2018.)

* News story accompanying the article: Development: Hox genes and body segmentation -- An ancient gene cluster controls the formation of repetitive body parts in a sea anemone. (D Arendt, Science 361:1310, September 28, 2018.)

* The article: An axial Hox code controls tissue segmentation and body patterning in Nematostella vectensis. (S He et al, Science 361:1377, September 28, 2018.)

More about sea anemones...

* The immune response of cnidarians (e.g., corals) (November 1, 2021).

* Can a sea anemone make you smile? (June 21, 2021).

* Restoring lost hearing: lessons from the sea anemone (November 15, 2016).

Another story about simple animals: A novel nervous system? (July 20, 2014).

November 14, 2018

1. Bitcoin and climate change. Bitcoin requires extensive computer processing -- so much that it could become a major contributor to global warming. That's the claim of a new article, based on extrapolating current trends.

* News story: Study Warns Bitcoin to Push Global Warming Above 2C Threshold by 2033. (L Papadopoulos, Interesting Engineering, October 31, 2018.) Links to the article. The main criticism of the article seems to be their extrapolations; critics argue that the Bitcoin system will become more efficient. A reminder... A good way to find multiple -- and diverse -- news stories is to put the article title into your search engine.

2. The carbon-intensity of producing oil. How much does it cost to produce oil -- in terms of greenhouse gas emissions? It varies. A lot.

* News story, from the lead institution: Stanford study finds stark differences in the carbon-intensity of global oil fields -- Stanford researchers' comprehensive new assessment of climate emissions from crude oil production suggests avoiding the most carbon-intensive reservoirs and better management of natural gas could dramatically slash emissions. (J Garthwaite, Stanford News, August 30, 2018.)

* The article: Global carbon intensity of crude oil production. (Mohammad S Masnadi et al, Science 361:851, August 31, 2018.)

* There is more data in the Supplement; it is about 100 pages, including 40 pages of references. However, the news story gives a good overview of the key issues.

* For more about energy issues, see my page Internet Resources for Organic and Biochemistry under Energy resources.

November 13, 2018

Solar and wind energies are attractive sources of renewable energy; both are gaining market share. A fundamental problem with both of them is that they are intermittent. The supply is not controllable and may well not match the demand. As these energy sources become a larger fraction of the total energy supply, their intermittency becomes more and more important.

A solution to the problem of intermittent energy sources is to store the energy for later use. There is nothing new about the idea. Gasoline is a storage system for energy (as is sugar for us). One cannot store sunshine or wind, but one can interconvert energy from one form to another. Storage batteries are just one example.

A new article shows progress with a novel form of energy storage, designed for storing solar energy. Designed for using summer's sunshine to heat the house in winter.

Here's the scheme...

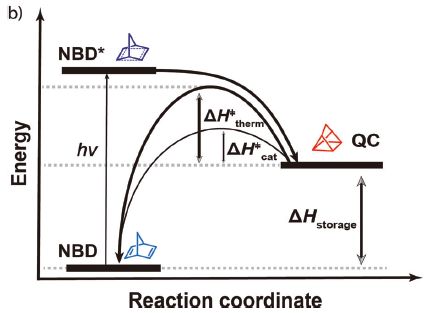

|

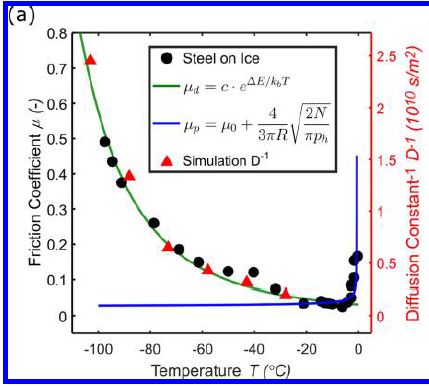

The graph shows the relative energies of three chemicals -- and some paths between them.

Start with the chemical at the lower left, labeled NBD. Low energy. It absorbs a photon, shown as hν. That "excites" the NBD; it is now in a high-energy ("excited") state, shown as NBD*. NBD* is not stable. It decays, not to the original NBD, but to the chemical shown at the right as QC. QC is a medium-energy chemical. The net result is that the low energy chemical NBD has been converted to the higher energy chemical QC, using light energy. Or, from another viewpoint, some of the light energy has been stored in the chemical QC. That's what is shown by ΔHstorage. So we have stored some of the solar (light) energy in QC. Now what? We want to use that energy. QC is higher energy than NBD; we just need a way to release that energy. The article reports development of a catalytic system that allows QC to degrade. It returns to being NBD, releasing energy as it does so; that energy can be used to heat water. Why doesn't QC just spontaneously degrade to NBD, releasing its excess energy? As is common, there is an energy barrier to that reaction; chemists call it activation energy. The graph shows that activation energy as ΔH‡therm and ΔH‡cat. The first of those is the "natural" activation energy, without catalyst. The second is the activation energy with catalyst. The second is low enough that the reaction now proceeds. The chemical structures shown are for the part of the molecules involved in the structural change; they are not the actual complete structures. NBD = norbornadiene; QC = quadricyclane. Again, those names refer to that part of the chemicals. NBD itself does not absorb light well. A key step was to develop a derivative that absorbed light, but otherwise retained the merits of NBD. This is Figure 1b from the article. |

There are no numbers on the energy scale above. That is a diagram, showing the scheme. The article reports development of a practical system, with specific chemicals and operating parameters.

In one experiment, the scientists showed that their system released heat and heated some water from 20 to 83 °C -- in less than three minutes. That's usefully hot water.
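To get a feel for what that temperature rise means in energy terms, here is a minimal sketch using the standard relation q = m × c × ΔT for liquid water. The amount of water is not given above, so the 1 gram used here is a hypothetical value chosen only for illustration; the specific heat is the usual textbook approximation, and heat losses are ignored.

```python
# Approximate specific heat of liquid water, in joules per gram per degree Celsius.
SPECIFIC_HEAT_WATER = 4.18


def heat_absorbed_joules(mass_g, t_start_c, t_end_c, c=SPECIFIC_HEAT_WATER):
    """Heat needed to warm a mass of water: q = m * c * (T_end - T_start)."""
    return mass_g * c * (t_end_c - t_start_c)


if __name__ == "__main__":
    mass_g = 1.0  # HYPOTHETICAL amount of water; not a value from the article
    q = heat_absorbed_joules(mass_g, 20.0, 83.0)
    print(f"Warming {mass_g} g of water from 20 to 83 °C takes about {q:.0f} J")
```

Scale the mass to whatever quantity of water you have in mind; the energy needed scales in direct proportion.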

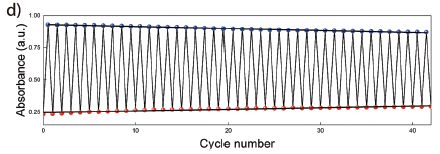

In another experiment, they showed that the system could be used over and over. Here are some results from that test...

In this test, the system was repeatedly cycled between the NBD and QC states. Those two chemicals absorb light differently, so a simple measurement of light absorption describes the conversion.

You can see that the A values are not constant from one cycle to the next, but they are not far from it. The system is quite stable over the 40 cycles shown here.

This is Figure 2d from the article.

You might have many questions about the system. As usual, the post presents only some of the information from the current article. Further, parts of the system need further development. Nevertheless, it is an interesting approach that deserves consideration as one possible alternative for how to store solar energy.

And the name of the system? MOST. That's molecular solar thermal energy storage.

Is this a battery? No. Batteries involve electricity. But more broadly, there is a logical similarity. Both batteries and the current device involve interconverting energy from one form to another, and storing it as chemical energy.

News stories:

* Newly-developed fuel can store solar energy for up to 18 years. (A Micu, ZME Science, November 6, 2018.)

* Emissions-free energy system saves heat from the summer sun for winter. (Chalmers University of Technology, October 3, 2018. Now archived.) From the lead institution. It is an overview of the project, noting four articles published this year. The current article is #4 on the list here. #3 on that list offers a clue to the 18-year number, which is not from the current article.

The article, which is freely available: Macroscopic heat release in a molecular solar thermal energy storage system. (Z Wang et al, Energy & Environmental Science 12:187, January 2019.) Very readable.

Among other posts about storing energy:

* A solar cell that generates electricity at night (April 12, 2022).

* Storing energy from an intermittent source -- as compressed air under the sea (March 3, 2019).

* Flow battery (January 4, 2016).

Among recent posts on solar energy...

* Solar energy: What if the Moon got in the way? (August 16, 2017).

* Using your sunglasses to generate electricity (August 14, 2017).

* Is solar energy a good idea, given the energy cost of making solar cells? (March 24, 2017).

There is more about energy issues on my page Internet Resources for Organic and Biochemistry under Energy resources. It includes a list of some related Musings posts.

November 11, 2018

In an effort to reduce global warming, we may choose to take some mitigating steps. Is it possible that these mitigation steps could be worse, in some ways, than the warming they mitigate? That's the issue explored by a recent article.

The following graph summarizes some of the findings...

You might notice right away that the red bars are bigger than the green bars. The key says that the green bars are climate effects; the red bars are mitigation effects. That's the message: mitigation effects can be worse than the climate effects they mitigate. At least sometimes.

The graph is actually rather complex, with a lot of cryptic abbreviations. We'll try to sort through some of that, but that first impression stated above is the main idea; don't despair if you get lost in the detail.

The y-axis is a measure of food insecurity: changes in food calories available to people. Negative values are food deficits, and thus are "bad". All the green bars are negative; the climate effects are bad for food, in general.

The two halves of the figure are labeled with RCP numbers. The right side is for a climate scenario that would lead to a global temperature (T) increase of 2.7 °C. The left side is the result of mitigation to reduce the global T increase to 2 °C. The climate effects (green) are indeed smaller, but now there are mitigation effects (red). They are negative, too. And they are larger than the gains due to reduced climate effects. That is, the total bars after mitigation are worse than before the mitigation.

There are three green bars on each side. They are for three SSPs: shared socioeconomic pathways. Since the conclusions are similar for all three, we need not worry about them for now.

RCP? Representative Concentration Pathways.

The "models"? Different models for calculating the effects; they vary -- a lot.

This is Figure 1d from the article.

That's the idea... Mitigation may have negative effects, too. Large negative effects.

What are we to do? The answer is not to give up, but to try to better understand the effects. It's all complicated. Climate effects are complicated. Some parts of the world may benefit in some ways from global warming. Some organisms will adapt just fine, at least if given enough time. There are many kinds of mitigation possible. The results shown above are for certain mitigations, and are not to be taken as "the answer" for what mitigation does.

Just as climate effects may not be uniform around the world, mitigation effects may not be uniform either. The authors explore this, and show that mitigation effects reducing food will be most severe in regions such as sub-Saharan Africa and South Asia -- where food shortage is already a concern.

What's the problem? The focus here is on a commonly proposed tool for mitigation: a carbon tax (a tax on emissions of CO2). A C-tax will hit agriculture hard -- and lead to reduced food. The authors suggest that the food story needs to be an explicit part of plans for dealing with climate change. They do not suggest that we avoid mitigation, but that we do it wisely; that means understanding how one or another mitigation policy works. The article is a step towards understanding the complexity of climate change mitigation.

News stories:

* Climate Change Mitigation Policy Risks Increased Food Insecurity -Study. (DevelopNig (Development in Nigeria), August 3, 2018. Now archived.)

* A blanket carbon tax could heighten food insecurity. (E Bryce, Anthropocene, August 3, 2018.)

* Global carbon tax in isolation could 'exacerbate food insecurity by 2050'. (Carbon Brief, July 30, 2018.)

The article: Risk of increased food insecurity under stringent global climate change mitigation policy. (T Hasegawa et al, Nature Climate Change 8:699, August 2018.)

Also see:

* A thermotolerant coffee plant, with high quality beans (June 8, 2021).

* Climate change and the price of beer (November 26, 2018).

* Predicting the "side-effects" of geoengineering? (September 23, 2018).

November 9, 2018

Tuberculosis (TB) is a major challenge. It is one of the world's great killers. Among features that make it difficult to fight...

- the causal bacteria grow very slowly;

- the bacteria tend to go into a latent phase in the body;

- antibiotic-resistant strains are becoming an increasing problem.

Vaccines against TB have always been questionable. Thus an article with some promising results from a clinical trial of a new vaccine candidate is attracting attention.

The following graph summarizes the key findings from the trial...

The graph shows the fraction of trial participants remaining TB-free vs time for the two groups: those given the candidate vaccine (M72/AS01E) and those given a placebo.

You can see that the fraction of people disease-free by the end of the trial is about 0.98 for the placebo group, but is a little over 0.99 for the vaccine group. Put that way, it doesn't sound like much. But the disease incidence dropped from about 2% to about 1% -- a reduction in TB incidence of about 50%.

The observations noted above are based on what may seem to be the main graph, but which is actually an inset. The same results are plotted "full-scale" in the "outer" graph. At that scale, one can see essentially nothing. I wonder why they bothered to show that graph.

This is Figure 2 from the article.

The nature of the vaccine is of some interest. It is a subunit vaccine. That means it is based on using genes for specific antigens (rather than some form of the natural organism). Further, it uses an adjuvant, to enhance the immune response.

In the broad field of vaccines, 50% efficacy is not particularly good. However, TB is a difficult target, and 50% efficacy, if real, would be welcomed.

What are the reservations? First, that entire graph above is based on 32 cases of TB: 22 in the control group, 10 in the vaccine group. That's why the result is only marginally significant.

Second, the nature of the test group is perhaps distinctive. All of those in the current trial had latent TB infections. It is interesting that the vaccine was effective in preventing active disease in those who were already infected. But the latent infection may itself be promoting immunity.

Further, most of the trial participants had previously been vaccinated against TB using a common vaccine called BCG. A common but controversial vaccine. There is actually little evidence that it has much effect past infancy -- especially in areas with high levels of TB and presumably high levels of latent infection. Does it matter? We don't know. The role of prior infection, whether with BCG vaccine or natural TB, needs to be sorted out.

Interesting results for an interesting vaccine. It will take further experience before we are able to evaluate its significance. The current article is a progress report based on preliminary data from a Phase 2 trial. There will be more information from this trial. That will include analyses of blood samples from trial participants, which can explore the immune response.
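The efficacy arithmetic discussed above can be sketched in a few lines of Python. Efficacy is taken here as the relative reduction in risk: 1 minus the ratio of risk in the vaccine group to risk in the placebo group. The function names are made up for this illustration. The case-count version assumes the two groups were the same size, which is a simplification on my part; the group sizes are not given above, and the article's own analysis may use a different statistical method.

```python
def relative_risk_reduction(risk_control, risk_treated):
    """Fractional drop in risk: 1 - (treated risk / control risk)."""
    return 1.0 - risk_treated / risk_control


def efficacy_from_cases(cases_control, cases_vaccine, n_control=1.0, n_vaccine=1.0):
    """Estimate efficacy from case counts.

    ASSUMPTION: with the default arguments, the two groups are treated as
    equal in size, so the ratio of cases stands in for the ratio of risks.
    Pass real group sizes if you have them.
    """
    risk_control = cases_control / n_control
    risk_vaccine = cases_vaccine / n_vaccine
    return relative_risk_reduction(risk_control, risk_vaccine)


if __name__ == "__main__":
    # Read off the graph: about 2% incidence with placebo, about 1% with vaccine.
    print(f"From the graph: {relative_risk_reduction(0.02, 0.01):.0%}")
    # From the case counts: 22 in the control group, 10 in the vaccine group.
    print(f"From the case counts: {efficacy_from_cases(22, 10):.1%}")
```

Under the equal-group assumption, the case counts give a little over 54%, in line with the figure quoted in one of the news stories listed below. With only 32 cases in total, a shift of a few cases either way would change that number considerably, which is the point of the first reservation.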

News stories:

* Another New Promise for Tuberculosis Vaccines -- GlaxoSmithKline's tuberculosis vaccine candidate M72/AS01E produced 54% efficacy rate in adults. (D W Hackett, Precision Vaccinations, September 26, 2018.)

* GSK's Investigational Vaccine Candidate M72/AS01E shows promise for prevention of TB disease in a Phase 2b trial conducted in Kenya, South Africa and Zambia. (WHO, September 25, 2018.) Links to considerable related information.

* GSK candidate vaccine helps prevent active pulmonary tuberculosis in HIV negative adults in phase II study. (GSK, September 25, 2018.) From the company that developed the vaccine, and sponsored the trial. GSK = GlaxoSmithKline.

* Editorial accompanying the article: New Promise for Vaccines against Tuberculosis. (B R Bloom, New England Journal of Medicine 379:1672, October 25, 2018.)

* The article: Phase 2b Controlled Trial of M72/AS01E Vaccine to Prevent Tuberculosis. (O Van Der Meeren et al, New England Journal of Medicine 379:1621, October 25, 2018.) A copy of the article is available through PubMed Central.

Other posts that mention tuberculosis include...

* An improved procedure for vaccination against tuberculosis? (March 13, 2020).

* A look at Chopin's heart (January 9, 2018).

* How did tuberculosis get to the Americas? (January 24, 2015).

* Rats, bananas, and tuberculosis (March 11, 2011).

More on vaccines is on my page Biotechnology in the News (BITN) -- Other topics under Vaccines (general).

November 7, 2018

Two extended "news" articles, consecutive in a recent issue of Nature. Both are useful overviews of interesting topics.

1. GWAS. That's genome-wide association studies -- looking for statistical correlations between genome sequences and characteristics such as disease prevalence. Useful but confusing, and prone to false leads. It's getting better, as both the data and experience increase.

* News feature: The approach to predictive medicine that is taking genomics research by storm. (M Warren, Nature, October 10, 2018.) In print, with a different title: Nature 562:181, October 11, 2018.

* A recent GWAS post: Ear lobe genetics: more complicated than you thought (March 23, 2018).

2. CubeSats. Discussion of developments in space technology, with an emphasis on the increasing role of tiny -- and relatively simple and inexpensive -- satellites.

* "Comment" story, written by scientists in the field: Explore space using swarms of tiny satellites. (I Levchenko et al, Nature, October 8, 2018.) In print: Nature 562:185, October 11, 2018.

* More about small satellites: The effect of Starlink satellites on astronomical observations (January 29, 2022).

November 6, 2018

Flores Island in Indonesia is the site where some unusual hominin fossils were found. Fossils of very small people, now usually classified as the species Homo floresiensis, and often referred to as "hobbits". Understanding the significance of these fossils has been a continuing challenge, and has been discussed in several Musings posts [link at the end].

In fact, there are small people living on Flores Island now. They are the Rampasasa pygmies; they are not as small as the hobbits, but they are distinctly small. Their home is actually very close to the site where the hobbit fossils were found.

Study of the hobbits has been hampered by the inability -- so far -- to find any DNA for them. However, the Flores pygmies are a living people. With arrangements, a team of scientists has collected DNA from some of the pygmies, and sequenced their genomes. The work, as reported in a recent article, provides some insight into the pygmy population, and by inference perhaps into the hobbits.

As so often with genome articles, there is massive data, analyzed by computers. We just look at some of the conclusions.

One issue the scientists examined was genetic variants that are associated with short stature. Using knowledge from accumulated human genomes, they find that the pygmy genomes are quite enriched for genes for shortness. They make a genetic prediction about the height of the pygmies.

The following graph shows how the actual height compared to the genetic predictor for height in their sample of the pygmy population.

You can see that there is a general trend of agreement between actual and predicted heights. It's not perfect, of course. The environment, including nutrition, affects height. Further, the understanding of how the height genes interact is limited. The point is that the general trend suggests that the genes being studied here are in fact relevant to height, and that this population has undergone selection for genes for short stature.

One part of that deserves emphasis... The pygmies are enriched for short-stature variants that already existed (and are known in other populations). That is, their short stature is based on selection of appropriate alleles from the gene pool. (Whether there are also new mutations for shortness in the population is unclear.)

For reference: 4 feet 6 inches = 137 centimeters; 5 ft = 152 cm. This is Figure 4C from the article.
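The height conversions quoted for reference can be checked with a couple of lines. This is just a convenience sketch; the function name is made up, and the only facts used are 12 inches per foot and 2.54 cm per inch.

```python
CM_PER_INCH = 2.54
INCHES_PER_FOOT = 12

def feet_inches_to_cm(feet, inches=0):
    """Convert a height in feet and inches to centimeters."""
    return (feet * INCHES_PER_FOOT + inches) * CM_PER_INCH

# The two reference heights quoted with the graph, rounded to whole cm.
print(round(feet_inches_to_cm(4, 6)))  # 4 ft 6 in
print(round(feet_inches_to_cm(5)))     # 5 ft
```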

In another part of the work, the authors show that the pygmy population contains Neandertal and Denisovan DNA sequences, as expected. However, there is no significant amount of sequence of unknown origin. This leads them to suggest that there is no connection between the modern pygmy population and the earlier hobbits.

If indeed the pygmies and hobbits are unrelated, it means that populations of small people have arisen on Flores Island twice.

News stories:

* No evidence of 'hobbit' ancestry in genomes of Flores Island pygmies. (EurekAlert!, August 2, 2018.)

* The modern pygmies of Flores are not related to Homo floresiensis -- Modern people's stature evolved separately millennia after hobbits' extinction. (K N Smith, Ars Technica, August 2, 2018.)

* News story accompanying the article: Human evolution: How islands shrink people -- Evolutionary dwarfing affected living people on the island of Flores, and may explain the stature of the extinct hobbit. (A Gibbons, Science 361:439, August 3, 2018.)

* The article: Evolutionary history and adaptation of a human pygmy population of Flores Island, Indonesia. (S Tucci et al, Science 361:511, August 3, 2018.)

Background post about the hobbits: The little people of Indonesia (May 14, 2009). Links to more, perhaps a complete list of related posts.

Another unusual human group in Indonesia: Bigger spleens for a bigger oxygen supply in Sea Nomad people with unusual ability to hold their breath (July 2, 2018).

More from Indonesia... Oldest known picture of a pig (February 21, 2021).

More about human height: Is being tall bad for your health? (July 12, 2022).

There is more about genomes on my page Biotechnology in the News (BITN) - DNA and the genome. It includes an extensive list of related Musings posts.

November 5, 2018

Perhaps not literally, but the work in a new article evokes the idea. It's an interesting story.

The following graph shows a key result...

A quick glance... The title of the graph suggests this has something to do with hair. And one bar is distinctly high.

The bars show two types of hair follicle cells: active and inactive. The bright bars are for the active type. The y-axis is unhelpfully labeled "arbitrary unit". But I think we can take it as percentage. Each bar has two parts, totaling 100. Take the bottom (brighter) part as the active cells, the top (lighter) part as the inactive cells. Or just look at the main, bright bar, and think of this as an ordinary bar graph; that works fine.

What are the bars for? The high bar is for skin samples treated with Sandalore; that gives the highest percentage of active cells (about 70). The other bars are all much lower, about 40. The most important of those is the bar at the right: Sandalore + Phenirat. The Phenirat inhibits the action of the Sandalore. The other bars are for controls: the "vehicle" alone, and the inhibitor alone; neither of those has any effect on its own.

This is part of Figure 1b from the article. A similar graph, part of Figure 1e, is labeled in percentage. That suggests that the labeling of the graph above may be an error, and that it really is in percentage.

So, Sandalore promotes active hair follicles. There is a lot of evidence in the article on that point.

What is Sandalore? It is a synthetic chemical that mimics the odor of sandalwood. It's used in cosmetics; real sandalwood is an expensive material, as is its oil.

What's really interesting is how Sandalore acts. It acts via a protein called OR2AT4. OR? That stands for olfactory receptor. A receptor for detecting odors. An odor receptor in your skin -- your hair follicles. Doing something interesting and perhaps useful.

In fact, there are many examples of "olfactory receptors" in various odd places in the body. Physiological functions for some of them have been worked out. Beware your biases based on terminology. Olfactory receptors are receptors for specific chemicals. We have named one big family of such receptors after one common role. It might be better to think of them, broadly, as chemosensory receptors.

How do the scientists know that Sandalore is acting through this odor receptor? There are various lines of evidence, some of it in an earlier article. For example, the inhibitor used is known to be specific for that receptor. The ultimate test: the scientists removed this receptor genetically; that eliminated the effect of Sandalore.

Back to the piece of wood, alluded to in the title of this post. Would smelling a piece of wood -- or rubbing it on the skin -- elicit the hair-growth effect? Apparently not. The effect occurs with the synthetic sandalwood mimic, but not with any natural sandalwood ingredient.

The reason for the discrepancy between natural and synthetic materials may be investigated further, but it may be as basic as that they are different chemicals, and they do different things -- even though they have similar odors. In any case, Sandalore is a commercial cosmetic product, and it seems to have an effect that had not been anticipated.

Overall, we have here a story of an olfactory receptor, which one might have expected to be found in the olfactory system, in the hair-growing system. It responds to a cosmetic product that is on the market. What are the implications? A clinical trial of the product for hair growth is in progress.

There are also questions about the natural system. What is the natural role of the OR? What stimulates it naturally? The authors have some hint that it may be related to the microbiome of the hair follicles.

News stories:

* Synthetic sandalwood found to prolong human hair growth. (B Yirka, Medical Xpress, September 19, 2018.)

* Hair follicles Engage in Chemosensation - Olfactory Receptor OR2AT4 Regulates Human Hair Growth. (Monasterium Laboratory, September 18, 2018.) From the company that is the lead affiliation. (You might want to check the "Competing interests" statement in the article.)

The article, which is freely available: Olfactory receptor OR2AT4 regulates human hair growth. (J Chéret et al, Nature Communications 9:3624, September 18, 2018.)

Previous post about (fake) wood: Artificial wood (November 3, 2018). The previous post, immediately below.

More about hair growth: A treatment for senescence? (June 4, 2017).

Other posts about hair include:

* Why do many tarantulas have blue hair? (March 7, 2016).

* Cryptozoology meets DNA: No evidence for Bigfoot or Yeti or such (September 13, 2014).

Posts on the complexity of olfaction itself:

* Is it possible to have a normal sense of smell without olfactory bulbs? (January 28, 2020).

* The chemistry of a tasty tomato (June 18, 2012).

November 3, 2018

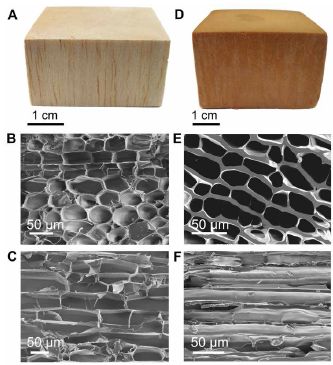

Here is what an artificial wood looks like, along with a natural wood...

Each column is for one type of wood.

The top row shows a macroscopic view. Below that are two scanning electron micrographs of each wood, showing the cellular structure. One is perpendicular to the grain; one is parallel to it.

Perhaps you have guessed that the one on the left is the natural wood; it is balsa. But the big picture is that they aren't very different. The new stuff looks passably like wood.

This is part of Figure 2 from the article.

Properties? Strength?

The graph shows strength (y-axis) vs density (x-axis) for numerous materials. Both are log scales.

There is a lot of information there; let's get some pieces of it. Towards the right are two small bluish ovals, labeled "Woods". Two? Parallel or perpendicular to the grain, as shown with the symbols under the word. Then there are two long narrow tannish ovals (or bands), labeled "Polymeric woods", awkwardly referring to the artificial woods that the scientists have developed. (Natural wood is polymeric, too.) Again, the two ovals are for the two directions.

Perhaps the most striking finding is that they have materials over a very wide range of densities, with a consistent trend of strength vs density. When the materials are tested "parallel", the natural and artificial woods substantially follow the same pattern. When the materials are tested "perpendicular", the artificial woods are a little better, maybe about 4-fold stronger for the same density. That is, there is less difference in the strengths between directions for the artificial woods than for the natural woods. (Visually... the two ovals for artificial woods are closer together than for natural woods.)

This is Figure 3C from the article.
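A note on reading such a graph: because both axes are log scales, a constant fold-difference in strength shows up as a constant vertical gap, whatever the density. The small sketch below (function names are made up; the 4-fold figure is the rough estimate given above) converts between a fold-difference and the gap measured in decades, that is, in powers of ten on the axis.

```python
import math

def log_offset(fold):
    """Vertical gap on a log10 axis, in decades, for a given fold-difference."""
    return math.log10(fold)

def strength_ratio(offset_in_decades):
    """Fold-difference corresponding to a vertical gap on a log10 axis."""
    return 10 ** offset_in_decades

gap = log_offset(4)  # the "about 4-fold" estimate from the text
print(f"4-fold difference = {gap:.2f} decades on the log axis")
print(f"and back again: {strength_ratio(gap):.1f}-fold")
```

So "about 4-fold stronger" corresponds to a gap of a bit more than half the distance between adjacent powers of ten on the strength axis.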

Overall, the scientists have made a range of wood-like materials. Perhaps a little stronger, especially in the direction where wood is weaker.

Other properties of the new stuff, compared to wood? More resistant to fire, and to some chemicals that attack wood, including water. Better as thermal insulation. Easier to make; you don't need to wait for it to grow for a few years. (Hmmm.)

How did they make these products? They used common materials, such as melamine and phenolic resins. They tried to use these familiar materials in novel ways, to make wood-like products.

The artificial wood may be tree-free, but it does have some shrimp in it. Chitosan, derived from the shells of shrimp (or other arthropods).

Why did they make these products? It might seem odd to make synthetic wood. The work is from China, a country that is a major importer of wood. That provides them a motivation. What makes the article noteworthy scientifically is that it enhances our toolkit for making diverse materials. Time will tell what is useful.

News stories:

* Making Wood Out Of Synthetic Resin -- Researchers in China have developed a family of bioinspired artificial wood from phenolic and melamine resin. (Asian Scientist, August 20, 2018.)

* Synthetic wood is fire and water resistant. (T Puiu, ZME Science, October 22, 2018.)

* Team develops a family of bioinspired artificial woods from traditional resins. (Phys.org, August 13, 2018.)

The article, which is freely available: Bioinspired polymeric woods. (Z-L Yu et al, Science Advances 4:eaat7223, August 10, 2018.)

More about wood and other building materials:

* Growing wood in lab culture (March 20, 2021).

* Transparent wood (March 6, 2021).

* Staying warm -- polar-bear style (July 23, 2019).

* Using old clothes as building materials? (February 5, 2019).

* Could smelling a piece of wood improve the growth of your hair? (November 5, 2018). The next post, immediately above.

* Making wood stronger (March 19, 2018). The article of this post is reference #8 of the current article.

* Stone age human violence: the Thames Beater (February 5, 2018).

* Building with wood: might it replace steel and concrete? (June 14, 2017).

More about melamine: Melamine toxicity: possible role of gut microbiota (April 21, 2013). This relates to incidents where melamine was used to adulterate food products (leading to a false high value for protein content). Is melamine toxicity relevant to the current work? Using plastics based on melamine is well established. That's bound melamine, as in the current material. Whether the new material has any significant amount of free melamine, which might be of concern, remains to be tested.

October 31, 2018

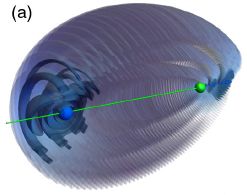

In ordinary chemical bonds, the bonding electrons are localized between the two atoms being bonded. Would it be possible to artificially cause the electrons to be localized, so that they look like they are in a bond, even though there is nothing there to bond to?

A new article proposes that it may be possible -- and describes how to do it. A caution... it's all theoretical at this point, but the authors think that what they propose is experimentally testable.

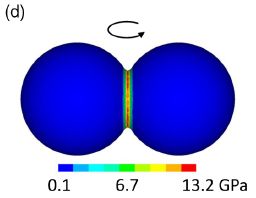

The following figure gives an idea of what they are trying to do...

The green dot near the right shows the nucleus of an atom.

The blue region, mainly at the far left, shows the probability distribution for the bonding electron. That the electron is so asymmetrically distributed might suggest that it is involved in bonding to something out there.

This is Figure 2a from the article.

But there is no "something out there". There is no other atom at the left. What we have here is a ghost bond -- a bond to nothing.

How did the electron get out there? It was manipulated, by carefully designed magnetic and electric fields.

Or so it is proposed. Remember, the article is all theoretical; the picture is a computer image of what the scientists calculate will happen. However, they think that it is practical, that the confused atom might hang around long enough to detect (perhaps 200 microseconds), and that they know how to detect it.