Musings: January-April 2013 (archive)

Musings is an informal newsletter mainly highlighting recent science. It is intended as both fun and instructive. Items are posted a few times each week. See the Introduction, listed below, for more information.

If you got here from a search engine... Do a simple text search of this page to find your topic. Searches for a single word (or root) are most likely to work.

Introduction (separate page).

This page:

2013 (January-April)

April 30

April 24

April 17

April 10

April 3

March 27

March 20

March 13

March 6

February 27

February 20

February 13

February 6

January 30

January 23

January 16

January 9

January 3

Links to external sites will open in a new window.

Archive items may be edited, to condense them a bit or to update links. Some links may require a subscription for full access, but I try to provide at least one useful open source for most items.

Please let me know of any broken links you find -- on my Musings pages or any of my regular web pages. Personal reports are often the first way I find out about such a problem.

April 30, 2013

Using your brain waves to log on to the computer

April 29, 2013

That is UC Berkeley professor John Chuang, wearing a headset that may allow him to log on to his computer simply by thinking of his password -- or "pass-thought". The key part is the arm of the headset that is pressed against a specific region of his forehead: a sensor to capture brain waves from his frontal cortex.

This is from the first news story, below.

A news story about this work reminded me of a recent post, in which brain waves were sent from one animal to another [link at the end]. The news story is based on a talk given recently by Chuang's group. Even though the information available is limited, it seemed of interest to briefly note this new work.

Of course, it isn't the idea here that is novel, but the implementation. There are a couple of main themes in this work:

1) One is that they use an inexpensive, commercially available device to collect brain waves. It is a single-channel electroencephalogram (EEG) device, with a sensor worn on the forehead, as shown in the picture above. The signal -- the brain waves from the person trying to log on -- is compared to what the person has previously recorded; in a sense, it is just like a regular password system, but what is checked is what the person thinks, not what the person types.

This device is much simpler than the complex EEG sensors used when the goal is to assist people with disabilities. In the current work, the goal is relatively simple: they need only associate the brain waves with the individual. When brain waves are being used to perform tasks, a more refined signal is needed.

In fact, one of their conclusions is that they now get results with the simple EEG that are as good as previous results with the more complex "clinical" EEGs.

2) The other theme is what we might call consumer acceptance. Much of the talk is about what kinds of mental tasks people might find appropriate for this computer operation. They find that people vary in what they consider easy or boring. Their general conclusion is that it is probably best to allow users to select their own type of task.

Does it work? With their best system, they get an error rate of about 1% -- mostly due to false rejection of the proper user (rather than to acceptance of an improper user). As noted, an important point for them is that they achieve this with a simple device. They do not claim it is ready for real world use, but rather that it is worth studying further.
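As a rough illustration of the comparison step and the two kinds of error mentioned above, here is a minimal sketch in Python. It is not the method from the Chuang paper: the feature vectors, the cosine-similarity measure, the threshold value, and all function names are assumptions made up for illustration. It shows how a stored "pass-thought" template might be compared with a new recording, and how false rejections and false acceptances are counted separately.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def authenticate(template, sample, threshold=0.9):
    """Accept the attempt if the sample is close enough to the stored template.

    The threshold of 0.9 is an arbitrary illustrative value.
    """
    return cosine_similarity(template, sample) >= threshold

def error_rates(template, genuine_samples, impostor_samples, threshold=0.9):
    """Return (false_rejection_rate, false_acceptance_rate)."""
    false_rejects = sum(not authenticate(template, s, threshold)
                        for s in genuine_samples)
    false_accepts = sum(authenticate(template, s, threshold)
                        for s in impostor_samples)
    return (false_rejects / len(genuine_samples),
            false_accepts / len(impostor_samples))

# Made-up feature vectors standing in for processed EEG signals.
stored = [1.0, 0.5, 0.2]
genuine = [[1.0, 0.5, 0.2], [0.9, 0.6, 0.1], [0.2, 0.9, 0.9]]  # last one: a poor recording
impostors = [[0.1, 0.2, 1.0], [0.0, 1.0, 0.0]]

frr, far = error_rates(stored, genuine, impostors)
```

In this toy data set the proper user is rejected once in three attempts and no impostor is accepted, which is the same pattern of errors (false rejection rather than false acceptance) that the authors report, though of course not the same numbers.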

News stories:

* New Research: Computers That Can Identify You by Your Thoughts. (UC Berkeley School of Information, April 3, 2013.) Good overview.

* Thoughts could be future of security. (Daily Cal (UC Berkeley student newspaper), April 10, 2013. Now archived.) A more informal overview.

Chuang's group gave a talk about this work recently at a meeting; here is the paper they presented. It is freely available; the link here is to a copy from the authors: I Think, Therefore I Am: Usability and Security of Authentication Using Brainwaves. (J Chuang et al, 2013 Workshop on Usable Security, Seventeenth International Conference on Financial Cryptography and Data Security, April 2013.) Remember, this is a meeting talk, not a published paper. We note it here briefly as fun and interesting -- and potentially useful. And it is, for the most part, a quite readable paper. Formal publication of a peer-reviewed paper will presumably follow at some point.

Background post: Can one rat know what another rat is thinking? (April 8, 2013). A brain signal is sent from one rat to another; the receiving rat acts on the signal.

Examples of the use of brain-computer interface to assist the disabled.

* Brain-computer interface -- without invasive electrodes (December 28, 2016).

* Brain-computer interface: Paralyzed patients control robotic arm by their thoughts (June 16, 2012).

More about brains is on my page Biotechnology in the News (BITN) -- Other topics under Brain (autism, schizophrenia).

More about computer security:

* Computer security: web-based password managers (September 29, 2014).

* Can computers talk to each other? Could it be a new type of security threat? (June 18, 2014).

Loudspeakers: From gold-coated pig intestine to graphene

April 27, 2013

Audio devices transmit sound to us via vibrating membranes, driven electrically or magnetically. We refer to the membrane devices with terms such as loudspeakers or headphones. Desired characteristics of such devices include a flat frequency response over the range of human hearing, say from 20 to 20,000 Hertz (Hz), and good power efficiency.

Mankind has been making such devices for decades. Early electrically-driven phones, using gold-coated pig intestine as the material for the vibrating membrane, are long forgotten. Now, UC Berkeley physicists announce that they can do better than high-quality commercial headphones almost on their first try using graphene membranes only 30 nanometers (nm) thick. Why graphene? First, it is naturally electrically conducting. Second, it is incredibly strong, allowing use of a very thin membrane.

Above are frequency response curves for two headphone devices.

The x-axis is the sound frequency, from 20 to 20,000 Hz -- on a log scale. The y-axis shows the headphone response, shown as the relative sound pressure level (SPL), in decibels (dB).

Part a (upper) is for the electrostatically driven graphene speaker (EDGS) -- the device they made. Part b (lower) is for a high-quality commercial headphone.

You can see that the two devices perform similarly for much of the frequency range. At high frequencies, the graphene speaker outperforms the commercial headphone. (The tail-off at low frequencies is probably an artifact of their measurements.) Their subjective observations are in agreement with these data.

This is part of Figure 3 from the article.

Their general conclusion is that, with little development effort, they have made headphones of quality similar to if not better than those commonly used. In addition to the good frequency response, their graphene headphones are efficient -- probably 10-fold more efficient than the commercial phones; that's an issue in these days of battery-operated devices.
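For readers unfamiliar with the units in the frequency response curves above, here is a small Python sketch of the two scales involved. The function names and sample numbers are assumptions for illustration, not values from the article. It uses the standard definition that a sound pressure ratio r corresponds to 20*log10(r) decibels, and it spaces frequencies evenly on a log scale from 20 to 20,000 Hz.

```python
import math

def pressure_ratio_to_db(ratio):
    """Relative sound pressure level in dB for a given pressure ratio."""
    return 20.0 * math.log10(ratio)

def log_spaced_frequencies(f_low=20.0, f_high=20000.0, points=4):
    """Frequencies evenly spaced on a log axis, as on the x-axis of such a plot."""
    step = (math.log10(f_high) - math.log10(f_low)) / (points - 1)
    return [10 ** (math.log10(f_low) + i * step) for i in range(points)]

# Doubling the sound pressure adds about 6 dB; a tenfold increase adds 20 dB.
db_double = pressure_ratio_to_db(2.0)
db_tenfold = pressure_ratio_to_db(10.0)

# With 4 points, the range 20 Hz to 20,000 Hz is covered one decade at a time.
freqs = log_spaced_frequencies()
```

On a log axis, each step from 20 to 200 to 2,000 to 20,000 Hz takes up the same distance, which is why the plot gives equal space to each decade of the hearing range.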

As noted above, the secret is the strength of graphene, allowing use of a very thin membrane. This simplifies the design. In particular, a thin membrane is damped simply by the surrounding air, without needing a separate damping system. In addition to being simpler, this allows more of the energy to go directly into producing sound. (Damping refers to stopping a vibration. If damping did not occur, one sound would pile upon the previous one.)

Is this going to make it to market? I don't know. There is always a gap between lab-scale and commercial development. But it does sound like it may be worth exploring. Remember, graphene is a rather new material, and people are still learning how to use it.

News stories:

* First Graphene Audio Speaker Easily Outperforms Traditional Designs. (Physics arXiv Blog (MIT Technology Review), March 13, 2013.)

* Experimental graphene earphones outperform most commercial headsets. (Nanowerk, March 21, 2013.) (Caution... The title of this item notes "most" commercial headphones. In fact, they seem to have tested only one. This is a problem of headline writing; the article itself seems quite on-target.)

* UC Berkeley researchers develop first graphene-based headphones. (Daily Cal (UC Berkeley student newspaper), March 18, 2013. Now archived.) Starts with a funny story.

The article: Electrostatic graphene loudspeaker. (Q Zhou & A Zettl, Applied Physics Letters 102:223109, June 5, 2013.) It is also available from Zettl's web site: Zettl publication list -- scroll down to the item.

More on graphene:

* A simple way to make a supercapacitor with high energy storage? (January 6, 2014).

* Graphene bubbles: tiny adjustable lenses? (January 15, 2012).

A post about carbon nanotubes, which are closely related to graphene: Characterization of carbon nanotubes (December 3, 2013).

More about sound: The golden ear: A nano-ear based on optical tweezers (July 13, 2012).

More about "listening": A rapid test for antibiotic sensitivity? (July 19, 2013).

More from Alex Zettl: CITRIS: Zettl; new energy series (November 1, 2009).

This post is listed on my page Introduction to Organic and Biochemistry -- Internet resources in the section on Aromatic compounds.

Hard seeds or soft seeds?

April 26, 2013

Interesting little story. Some plants make hard seeds, some make soft seeds; some make both kinds. Why?

The common view has been that seed hardness provides a physical basis for dormancy, extending the lifetime of the seeds. A new article suggests another advantage of hard seeds: they may be less attractive to animals -- because they give off fewer of the odor molecules that attract predators.

Their experimental approach is simple and fun. The scientists provide hamsters with an array of little dishes filled with various little things... soft seeds, hard seeds, and gravel. (In a given test, the hard and soft seeds are from the same type of plant.) They measure what the hamsters find.

A hamster looking for seeds.

This is trimmed and reduced from a figure in the news story. Figure 1 of the paper shows several similar scenes, as well as the general test set-up.

In some experiments, the hamsters could see the seeds. In that case, hard vs soft made little difference. However, in some experiments, the seeds were under the gravel, and could be detected only by odor. In that case, the hamsters removed many more soft seeds than hard ones.

The simple conclusion, then, is that hard seeds are harder for the hamsters to detect by odor.

That is, the hard seed is an anti-predator trait.

The authors speculate about the importance of various reasons for the seed types, but there is not much to go on. One particularly interesting speculation is about why a plant might make both hard and soft seeds. The soft seeds attract animals, and the hard ones may go straight through the digestive system. Thus the mixture serves to promote dispersal of the seeds.

A bit more explanation... A key feature of "hard" seeds is that they are impermeable to water. They do not easily germinate -- and start to metabolize -- when exposed to water. Thus the general feature of impermeability, which promotes dormancy, also reduces release of the volatiles that the hamsters detect, probably by preventing their production by metabolism. The key experiments, in which odor detection was important, used seeds that were not only buried but also wetted, to promote metabolism.

News story: Physical dormancy in seeds: a game of hide and seek? (New Phytologist Trust, March 8, 2013.) A useful brief overview.

The article: Physical dormancy in seeds: a game of hide and seek? (T R Paulsen et al, New Phytologist 198:496, April 2013.)

More about seeds: Miniature helicopters -- and botany (July 6, 2009).

More about detecting food by odor:

* Malaria-infected mosquitoes have greater attraction for people (May 28, 2013).

* What happens if you block the left nostril of a mole's nose? (April 19, 2013).

More, May 7, 2013... Comment. A reader questioned a word usage in this post. Is it proper to use the term predator to describe an animal that eats seeds? I had wondered, too, but gone along with the usage by the authors of the paper. Turns out that seed predation is a well-accepted usage; put the term in a search engine or Wikipedia, if you want.

April 24, 2013

It's a dog-eat-starch world

April 23, 2013

That's the idea.

The picture is actually a fake -- a composite. Still, it's cute.

This is from the Broad Institute news story.

The point, however, is important. Dogs do digest starch -- and that is noteworthy. Dogs belong to the order Carnivora, and are descended from wolves. Wolves are rather strict carnivores.

A new article examines the genomes of wolves and dogs. Many of the characteristic differences are in brain genes. That finding is expected, since there are numerous behavioral differences between dogs and wolves. However, another major group of differences is in genes for digestion; several of these differences seem to promote the expansion of the digestive abilities of the dog. For example... One key enzyme for breaking down starch is amylase; dogs contain several times more amylase than wolves do.

There are two broad reasons why this is of interest...

* One is for its insight into the evolution of dogs. It is well accepted that dogs descended from wolves. They adapted to become more sociable; the changes in brain genes presumably relate to this. And they adapted to our diet, the diet of agricultural man. Perhaps genetic adaptation to digesting starch was an early event, as some wolves began to explore the garbage piles left by humans. However, we should stress that the genome data yield little information at this point on the timing of any of these changes; much of what you read about the steps in the evolution of the dog is speculation.

* The other is that the change in dog digestion mirrors a change in human digestion. Humans, too, changed to a more starchy diet with the dawn of agriculture. This change was noted in a recent post [link at end].

News stories:

* Dogs Adapted to Agriculture -- As wolves became domesticated, their genes adapted to a starch-rich diet of human leftovers. (The Scientist, January 23, 2013.)

* In divergence from wolves, doggie diet made a difference. (Broad Institute (MIT & Harvard), January 23, 2013.)

* News story accompanying the article: Evolutionary genomics: Detecting selection. (G S Barsh & L Andersson, Nature 495:325, March 21, 2013.)

* The article: The genomic signature of dog domestication reveals adaptation to a starch-rich diet. (E Axelsson et al, Nature 495:360, March 21, 2013.)

Background post on human digestion: Bacteria on human teeth -- through the ages (March 24, 2013).

More about dogs (and wolves):

* Will a wolf puppy play ball with you? (February 7, 2020).

* The oldest known dog leash? (January 23, 2018).

* Doggy bags and the food waste problem (January 4, 2017).

* Sharing microbes within the family: kids and dogs (May 14, 2013).

* Dog fMRI (June 8, 2012).

* Pet Diary (September 25, 2009).

More about carnivores:

* Loss of ability to taste "sweet" in carnivores (April 6, 2012).

* Carnivorous plants: A blue glow (March 16, 2013).

More about domestication... Atmospheric CO2 and the origin of domesticated corn (February 14, 2014).

There is more about genomes on my page Biotechnology in the News (BITN) - DNA and the genome.

Life in an Antarctic lake

April 22, 2013

Briefly noted...

Lake Vida is a lake in Antarctica. It is covered with ice, and has probably been isolated from external input for nearly 3,000 years. We would consider the conditions in Lake Vida to be rather extreme. The temperature is about -13 °C, and it is quite salty but devoid of oxygen. In a recent article, a team of scientists report that Lake Vida is teeming with life -- microbes.

Exploring the sub-surface lakes of Antarctica is a new and active field. It's also a difficult and controversial field. The idea is to isolate water from these underground lakes -- without contaminating them with anything from the outside world. It involves drilling a hole -- while maintaining absolute biological sterility. And doing it under some of the most inhospitable conditions on Earth.

In recent weeks there have been news stories about possible isolation of novel microbes from an Antarctic lake thought to be isolated for millions of years. The initial news report was followed by denials, and counter-denials. Unfortunately, at this point there are no real facts on that story.

The current article is a small step in the study of Antarctica's underground lakes: a published paper about a younger lake. It opens the subject of finding microbial life in such lakes. Published papers do not always turn out to be right, but at least a formal publication gives us something solid to look at.

The article is largely a description of what they found, both for chemicals and microbes. As examples... The lake water contains 20% salt. It is supersaturated with N2O (nitrous oxide, laughing gas). There is a diverse collection of microbes (about 32 species across eight phyla, based on analyzing DNA), at around 10^6/mL. It is presumed that the energy source for the microbes is inorganic chemicals, deriving from the rocks in contact with the lake.

News story: Hearty Organisms Discovered in Bitter-Cold Antarctic Brine. (Science Daily, November 26, 2012.) (One might guess that the first word of the title should be "hardy". The "error" apparently derives from a press release from one of the lead institutions involved in the work.) A useful overview.

The article, which is freely available: Microbial life at -13 °C in the brine of an ice-sealed Antarctic lake. (A E Murray et al, PNAS 109:20626, December 11, 2012.)

More from Antarctica:

* What do microbes eat when there is nothing to eat in Antarctica? (April 2, 2018).

* A quasi-quiz: The fate of bone and wood on the Antarctic seafloor -- and the discovery of new bone-eating worms (August 20, 2013).

* How were the Gamburtsevs formed? (December 7, 2011).

* How an octopus adapts to the cold -- by RNA editing (March 5, 2012).

Underground water on Mars? ... A lake on Mars? (August 24, 2018).

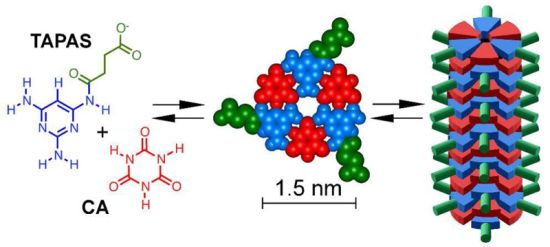

Melamine toxicity: possible role of gut microbiota

April 21, 2013

A few years ago, there were two well-publicized incidents of poisoning due to the chemical melamine. One involved pet food, and the other involved milk used for infants. These incidents probably involved deliberate adulteration of the food products with melamine. Why? Because melamine is counted as "protein" by common analyses, and it is cheaper than real protein.
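The arithmetic behind that "protein" reading can be sketched in a few lines of Python. The post does not say which analysis is meant; this sketch assumes a nitrogen-based method such as the Kjeldahl test, which estimates protein by multiplying the measured nitrogen by a conventional factor of 6.25. The function names are made up for illustration.

```python
# Standard atomic masses (g/mol).
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "N": 14.007}

# Melamine is C3H6N6.
MELAMINE = {"C": 3, "H": 6, "N": 6}

# Conventional nitrogen-to-protein factor (an assumption about the test used).
NITROGEN_TO_PROTEIN = 6.25

def nitrogen_fraction(formula):
    """Mass fraction of nitrogen in a compound given as {element: count}."""
    total = sum(ATOMIC_MASS[el] * n for el, n in formula.items())
    return ATOMIC_MASS["N"] * formula.get("N", 0) / total

def apparent_protein_grams(grams_of_compound, formula):
    """Grams of 'protein' a nitrogen-based test would report for the compound."""
    return grams_of_compound * nitrogen_fraction(formula) * NITROGEN_TO_PROTEIN

melamine_n = nitrogen_fraction(MELAMINE)               # about 0.67
fake_protein = apparent_protein_grams(1.0, MELAMINE)   # about 4.2 g per gram of melamine
```

On these assumptions, one gram of melamine would read as roughly four grams of protein, which is the economic incentive for adulteration.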

But if the incentive was economic, why would anyone adulterate a food with a poison? Because melamine is not poisonous. And thus we begin to see the melamine mystery. Not only were these cases of fraud, but they were also cases of poisoning by something that was not poisonous. There must be more to the story.

Scientists immediately investigated, and soon came to the conclusion that the melamine was toxic by interacting with another chemical, called cyanuric acid (CA); the combination of melamine plus CA led to kidney stones. With CA, melamine was toxic; without CA, melamine was not toxic. It was plausible that the melamine used for adulteration contained some CA (the two chemicals are actually related).

A recent article introduces a new twist to the melamine story. A team of scientists now suggests that animals consuming melamine may convert some of it to CA. More specifically, they suggest -- and show -- that the conversion is done by bacteria in the gut of the animals, that is, by the gut microbiota. If this is correct, then consumption of "pure" melamine might lead to kidney stones of melamine plus CA, because the animals, via their gut bacteria, make the CA.

It was already known that some bacteria can convert melamine to CA. With the increased understanding of the importance of the gut microbiota, scientists wondered if this might be relevant to the melamine poisoning. In their first experiment, they simply tested whether giving rats an antibiotic affected melamine toxicity. The antibiotic treatment reduced the melamine toxicity! That alone is an interesting result, and would seem to implicate bacteria -- somehow -- in melamine toxicity.

The scientists then went on to isolate bacteria from the rat feces that could convert melamine to CA. They ended up focusing attention on Klebsiella bacteria, particularly an isolate of Klebsiella terrigena. Here is an example of what they found using this strain...

In this experiment, rats were given melamine; some were also given the Klebsiella bacteria. The bars are labeled "Mel" for melamine alone, or "K+Mel" for Klebsiella bacteria + melamine.

The bars show the chemicals found in the kidneys -- presumably in the form of kidney stones. The left hand graph (A) is for melamine in the kidneys; the right hand graph (B) is for cyanuric acid (CA).

You can see that the levels of both chemicals were enhanced by adding the bacteria. The interpretation is that the bacteria convert some of the melamine to CA, and that promotes kidney stone formation.

This is Figure 5 parts A & B from the article.

Overall, we have evidence that bacteria can promote the formation of CA from melamine, and that they can promote kidney stone formation -- presumably by that conversion. This offers one more clue as to how melamine can be toxic.

The story is incomplete, however. If normal gut microbiota make melamine toxic by converting some of it to CA, why then does melamine test as non-toxic? A possible answer is that the prevalence of CA-forming bacteria varies widely. The authors even speculate that the level of such bacteria was one factor determining why some children were more affected by the melamine-containing milk.

Here is another "loose end"... Some work showed that the children who were poisoned by the melamine formed kidney stones with the melamine combined with uric acid, not CA. Interestingly, the experiment described above also showed increased levels of uric acid in the kidneys (part C of the figure, not shown here). The significance is not clear. It is possible that this simply reflects greater stone formation, with uric acid being included in the stones. There is no evidence about whether the bacteria might be stimulating uric acid production. The point for now is that CA may be only part of the story. It is plausible that clinically relevant kidney stones are due to melamine interacting with both CA and uric acid.

News story: Gut Microbes Could Determine the Severity of Melamine-Induced Kidney Disease. (Science Daily, February 14, 2013.)

The article: Melamine-Induced Renal Toxicity Is Mediated by the Gut Microbiota. (X Zheng et al, Science Translational Medicine 5:172ra22, February 13, 2013.)

For more on the melamine story, see my page of Internet resources for Introduction to Organic and Biochemistry, in the section on Amines, amides. That page includes structures of melamine and CA.

For more about the gut microbiota:

* A phage treatment for inflammatory bowel disease (August 13, 2022).

* Malnutrition: is more (or better) food the answer? (March 8, 2013).

* Sharing microbes within the family: kids and dogs (May 14, 2013). A broader perspective.

* Red meat and heart disease: carnitine, your gut bacteria, and TMAO (May 21, 2013).

A post about the bacteria associated with a sponge: Theonella's secret: Entotheonella (March 18, 2014).

More melamine: Artificial wood (November 3, 2018).

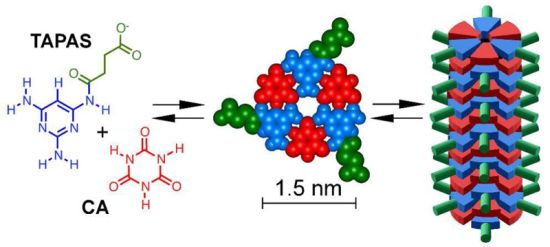

For another story about melamine-related chemicals, see A novel type of polymer -- and its possible relevance to the origin of life (March 15, 2013).

More about adulteration: Purity of dietary supplements? (October 23, 2018).

What happens if you block the left nostril of a mole's nose?

April 19, 2013

It veers to the right.

Scalopus aquaticus, the common American mole.

Focus on the nose.

This picture is from the Science Daily news story.

Humans have two ears, spaced some distance apart. Each ear sends a signal to the brain, which then integrates the two signals to obtain information about the direction and distance of the sound source. This is an example of stereo sensing.

Little is known about smelling in stereo. A new article shows that one animal seems able to smell in stereo naturally. It is the mole, shown above. This little burrower depends on smell as its primary sense for finding food.

The work began by noticing that the moles shifted their head back and forth while sniffing out a food source. This suggests that they are processing repeated sniffs to gain information about the source. This observation led a scientist to set up controlled conditions for studying how the moles respond to odor cues. The testing included tests not only for serial sniffing but for stereo sniffing: integration of the distinct information from the two nostrils.

A key piece of evidence came from experiments of the type noted in the title of this post. Here is an example of what they found in such a blocked-nostril test with one particular mole.

In this test, the mole was offered a piece of food, and its path to get to the food was measured. We'll look at exactly what was measured in a moment, but for now simply note that a shorter time -- a lower bar -- is "better". There were three test conditions: normal, and with one or the other nostril blocked.

You can see that the results for "normal" were the lowest. With one nostril blocked, the results show one high bar and one low bar. For example... the red bars are for the case where the left nostril is blocked. The result for "left" is about 0.1 seconds; the result for "right" is nearly 2 seconds. Those times are the amount of time the animal spent to that side of the food source. That is, with the left nostril blocked, it spent much of its extended search time to the right of the food -- on the side of the open nostril.

This is Figure 3c from the article.

The overall observation is that blocking one nostril causes the mole to veer to the other side -- toward the side of its open nostril. This result suggests that the mole is using the information from the two nostrils separately to determine the location of the food reward.
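The logic of that interpretation can be illustrated with a toy model in Python. This is not the analysis from the article; the steering rule, the odor function, and all names and numbers are assumptions chosen for illustration. A simulated animal compares the odor reading at its two nostrils and sidesteps toward the stronger one as it walks forward; blocking a nostril sets its reading to zero.

```python
def odor(position):
    """Odor strength at a lateral position; the food sits at position 0."""
    return 1.0 / (1.0 + position ** 2)

def final_offset(blocked=None, steps=10, nostril_gap=0.1, sidestep=0.05, start=0.0):
    """Lateral offset from the food after walking forward `steps` times.

    Positive offsets are to the animal's right, negative to its left.
    `blocked` may be None, "left", or "right".
    """
    x = start
    for _ in range(steps):
        left = 0.0 if blocked == "left" else odor(x - nostril_gap)
        right = 0.0 if blocked == "right" else odor(x + nostril_gap)
        if right > left:
            x += sidestep   # stronger signal on the right: step right
        elif left > right:
            x -= sidestep   # stronger signal on the left: step left
    return x

normal = final_offset()                         # stays on course
left_blocked = final_offset(blocked="left")     # drifts to the right
right_blocked = final_offset(blocked="right")   # drifts to the left
```

With both nostrils open and the food straight ahead, the two readings balance and the simulated animal holds its course. With the left nostril blocked, the right reading always wins, so it drifts to the right, toward the side of the open nostril, as the moles did.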

News stories:

* Moles Smell in Stereo to Find Food, Dodge Predators. (National Geographic, February 5, 2013. Now archived.)

* Evidence Moles Can Smell in Stereo. (Science Daily, February 5, 2013.)

The article, which is freely available: Stereo and serial sniffing guide navigation to an odour source in a mammal. (K C Catania, Nature Communications, 4:1441, February 5, 2013.)

More about moles: The basis of intersexuality in moles (November 28, 2020).

Other posts about smelling include:

* Copper ions in your nose: a key to smelling sulfur compounds (October 10, 2016).

* What does blue light smell like? (July 18, 2010).

* How do you tell if bees are pessimistic? (August 5, 2011).

* Hard seeds or soft seeds? (April 26, 2013).

April 17, 2013

An ancient navigation device?

April 16, 2013

A team of scientists has reported taking a beautiful natural crystal and making a mess of it -- in two weeks. It's an interesting story.

Start with the middle picture -- part b. It shows a crystal of calcite, a form of calcium carbonate, CaCO3. It is about 5 cm (2 inches) long; see scale bar at lower right of the entire figure. Beautiful, isn't it?

Part c (bottom) shows this same crystal (from part b) after treatment.

Why is this change of interest? Look at part a (top). This is a photo of a "stone" recovered from a 16th century shipwreck. The scientists think it was originally a nice crystal of calcite (like that of part b), and that it has been degraded by being on the sea bottom for four centuries. The appearance seen in part c is a hint of what prolonged treatment with sea water can do to a crystal of calcite.

Oh, what was that treatment that got us from part b to part c? Sand abrasion, plus two weeks immersion in sea water. As a touch of authenticity, they even used water from the English Channel, the site of the shipwreck.

This is reduced from Figure 1 from the article.

It's that old object in part a that is the real story here. It's in bad shape, but it might well have been a single beautiful calcite crystal -- 400 years ago. And if it was, it might have been a navigation device used onboard the ship. Calcite crystals have interesting optical properties; looking through such a crystal allows one to find the sun, even through clouds or when the sun is somewhat below the horizon.

There are references in ancient writings about the Vikings using "sunstones" for navigation. By the time of this ship, magnetic compasses were coming into use, but were still somewhat mysterious. (You thought a compass pointed north? Try telling that to someone at high northern latitudes.) Sunstones may have been still in use, alongside the modern tools. Perhaps this one is an example. If so, it would be the oldest known navigation sunstone recovered from a ship.

News story: First Evidence of Viking-Like 'Sunstone' Found. (M Gannon, Live Science, March 6, 2013.) (This is a replacement news story. The one listed originally is no longer available.)

The article: The sixteenth century Alderney crystal: a calcite as an efficient reference optical compass? (A Le Floch et al, Proc R Soc A 469:20120651, May 8, 2013.)

Here is another news story, about an earlier paper on this work. You may find it useful as background. The Viking Sunstone Revealed? (Science Now, November 1, 2011.)

Musings posts on calcium carbonate include:

* A see-shell story (February 21, 2016).

* Bending a rigid rod (May 17, 2013).

* Quiz: What is it? (March 6, 2012). See the answer.

More about shipwrecks...

* Should physicists be allowed to use lead from ancient Roman shipwrecks? (December 2, 2013).

* A quasi-quiz: The fate of bone and wood on the Antarctic seafloor -- and the discovery of new bone-eating worms (August 20, 2013).

* The Antikythera device: a 2000-year-old computer (August 31, 2011).

Infant cured of HIV?

April 15, 2013

It was a big news story in the popular media last month: an infant apparently cured of HIV. It is a potentially important story, so let's look at it. A big caution... It is also an incomplete story. At this point, what we have is a report at a meeting; no scientific paper has been published. If we take the basic facts presented at the meeting as correct, we must remember that this is one case. We think we know what happened, but cannot be sure. The only way to know whether the result holds more broadly is to test it more broadly. It is an exciting enough result that such testing will undoubtedly be done.

Here is the basic story... A child was born to an HIV-infected mother, who had received no treatment. The child was put on HIV-therapy 30 hours after birth -- even before test results were available; the testing showed that the child was indeed positive for the virus. Treatment continued for 18 months, and was then stopped. The child has been off therapy for about a year, and appears to be free of HIV. Thus, if we accept the test results showing that the child was indeed infected and is no longer infected, this seems to be a "cure".

What's novel here? Normally, withdrawing treatment of an HIV-infected person leads to a rebound of the virus. The implication is that the virus is "hiding" in the body, in some latent form not susceptible to the ordinary treatment. Treatment works fine, but relax the treatment and the virus rebounds. In the new case, the treatment was relaxed, and no rebound occurred. One possible interpretation is that the treatment began so early after infection that the virus never established its latent or hidden infection. That would be an exciting point, if it really holds.

As we noted at the outset, neither the facts nor the implications are entirely clear. This is a case report, not a controlled experiment. Was the child truly infected? Is it indeed disease-free, or at least virus-free?

If the story is correct as presented, we do not know for sure why it worked here. Perhaps this child was, for some reason, a special case. Nevertheless, we learn from single reports; we at least ask good questions. In this case, the implication is that we should treat immediately after exposure. This might apply to babies born to mothers who were infected but untreated. However, the number of such cases is small in the developed world, and acting on this in the developing world will be challenging.

News stories:

* Toddler 'functionally cured' of HIV infection, NIH-supported investigators report. (NIH, March 4, 2013.) From a funding source.

* Doctors Cure Baby Born With HIV For First Time. (Medical News Today, March 4, 2013.)

* In Medical First, a Baby With H.I.V. Is Deemed Cured. (New York Times, March 3, 2013. Link is now to Internet Archive.) If you read this, try to read it through to the end. Some things are a bit mixed up along the way.

Here is the abstract of the presentation given at the meeting: Functional HIV Cure after Very Early ART of an Infected Infant. (D Persaud et al, 20th Conference on Retroviruses and Opportunistic Infections, March 4, 2013. Now archived.)

More July 21, 2014 (1)...

Here is the article that was later published about that initial report: Absence of Detectable HIV-1 Viremia after Treatment Cessation in an Infant. (D Persaud et al, New England Journal of Medicine 369:1828, November 7, 2013.) Check Google Scholar for an available copy, including an author manuscript at PubMed Central.

More July 21, 2014 (2)...

The follow-up news is not good. Now, a year later, the child is clearly infected.

We had limited information at the time of the initial post, and we have even less at this point on the update. Nevertheless, we should note the setback.

News story: HIV Returns in "Cured" Child -- A Mississippi girl who was thought to have been "functionally cured" of HIV as an infant once again harbors detectable levels of the virus. (The Scientist, July 11, 2014.)

* Previous post on HIV... A simpler assay for detecting low levels of HIV, using gold nanoparticles (January 3, 2013).

* Next... How HIV destroys the immune system (March 3, 2014).

My page for Biotechnology in the News (BITN) -- Other topics includes a section on HIV.

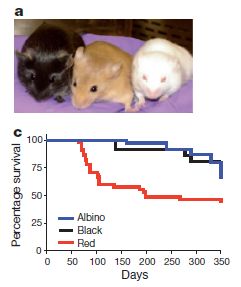

Mice with human brain cells

April 13, 2013

Adding human brain cells to mice makes the mice smarter.

That is a true statement about what was reported in a recent article. However, as so often, the attention-getting one-liner -- and indeed the article -- are just small parts of a big story. We want to look at some specifics, but also provide some perspective for the big story. You should go away with more than just the provocative point that mice with human brain cells are smarter.

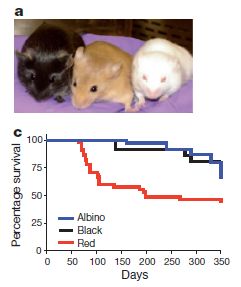

|

Let's start with one of the results. Here is an example of what they found...

Without going into the details for the moment... Three groups of mice were given a behavioral test. You can see that one group, labeled "Chimeric" (red curve), did the best. This is the group of mice with added human brain cells; the other two groups are controls. (The word chimeric refers to the mice being hybrid: part mouse, part human.)

This is Figure 6a from the article.

|

Those results should whet your appetite, so let's look further. What does it mean to say that these mice have added human brain cells?

There are various types of brain cells; the ones added to the mice were astrocytes. Neurons get the most attention, but there is increasing appreciation that astrocytes may be particularly important. One clue is that one of the greatest differences between the brains of man and other animals is in how well human astrocytes are developed. Astrocytes are involved with neural signal transmission, but their role is unclear.

The way the scientists added human astrocytes was to graft human astrocyte precursor cells into the developing brains of newborn mice. Thus the mice were allowed to develop their brains in the presence of the human cells. In fact, the human astrocytes integrated into the mouse brains, but appeared to be the well-developed astrocytes typical of humans. Further, the human astrocytes resulted in improved performance of the mice on certain tests, as illustrated with the graph above.

One of the controls was engrafted using the same procedure but with mouse cells; this control is labeled "Allografted". It is something of a sham control; the animals underwent all the steps of the procedure, but did not get the treatment itself. The other control, "Unengrafted", did not undergo the grafting procedure; that is, these are just normal mice.

Here is one possible interpretation of these results... Astrocytes play a supporting role in the brain, and the extensive development of human astrocytes was an important part of humans developing more advanced brains. When the human astrocytes are in mice, they provide better support there, too, thus enhancing at least some mouse brain functions. It's important to take this as a "possible interpretation", something that can help you see where the results might fit. It is certainly not proven; much more work is needed.

So what's the bigger story? They have an experimental system to study astrocytes. These cells are not well understood. One aspect of the work is that they are able to develop the precursor cells from people with various neurological conditions. They can then add astrocytes reflecting various diseases into the mice, and study the defects experimentally. There is much more to come from this experimental system -- and it is not about making mice smarter.

News stories:

* Using Human Brain Cells to Make Mice Smarter. (Science Daily, March 7, 2013.)

* Using human brain cells to make mice smarter. (Medical Xpress, March 7, 2013.)

* News story accompanying the article: Do Your Glial Cells Make You Clever? (R J M Franklin & T J Bussey, Cell Stem Cell 12:266, March 7, 2013.)

* The article: Forebrain Engraftment by Human Glial Progenitor Cells Enhances Synaptic Plasticity and Learning in Adult Mice. (X Han et al, Cell Stem Cell 12:342, March 7, 2013.)

Video. There is a 5-minute promotional video with two of the senior authors describing the work. It is linked to various stories, and is also available on YouTube.

Also see...

* As we add human cells to the mouse brain, at what point ... (August 3, 2015).

* A drug that delays neurodegeneration? (June 14, 2013).

* Fish with bigger brains may be smarter, but ... (January 25, 2013).

* The smartest chimpanzee? (September 29, 2012).

* Making smarter flies (July 18, 2012).

* Smart dust: A central nervous system for the earth (July 20, 2010).

More about brains is on my page Biotechnology in the News (BITN) -- Other topics under Brain (autism, schizophrenia).

Caffeine boosts memory -- in bees

April 12, 2013

Who would try feeding caffeine to bees? Coffee plants. (And some citrus plants.) The nectar of coffee flowers contains caffeine -- just a little, not enough to taste too bitter. Perhaps enough to make the bees come back.

A new article looks at how bees respond to caffeine. The basic experimental design is familiar. Bees are offered choices. A scent leads to a reward -- of sugar. The question is whether the bees learn to associate the scent with the reward. In this case, one variable was the level of caffeine.

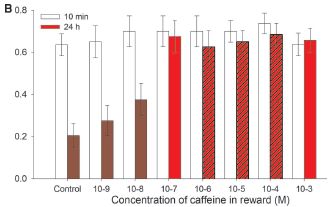

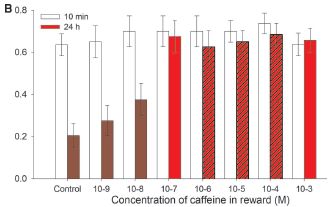

The following graph shows how the bees responded.

|

The y-axis is the fraction of "correct" responses. The x-axis is the concentration of caffeine (log scale).

The open bars show how the bees respond 10 minutes after learning. They get it right about 60-70% of the time, regardless of the caffeine level.

The colored bars (all of them, regardless of the color markings) are for 24 hours after the learning. At 24 hr, only about 20% of the responses are right for the control -- with no caffeine. As the caffeine level rises, so does the performance of the bees in this learning test.

|

At the higher caffeine levels, the bees do as well at 24 hr after learning as they had done at 10 min. At 24 hr, their "score" here has improved about three-fold by having caffeine.

Three of the red bars are "hatched" (marked with diagonal lines). These three show the levels of caffeine in the natural nectars the scientists examined (from coffee and citrus flowers). The level of caffeine that affects the bees' performance is in the range found in natural nectars.

This is Figure 2B from the article.

|

We see, then, that caffeine can enhance the ability of bees to remember what they learned, and that the level of caffeine required is about what is found in the nectar of some flowers. Thus the scientists suggest that what they studied here, under lab conditions, is likely to be relevant "in the field". They suggest that the plant benefits from providing some caffeine in its nectar, by getting more pollination. It's already known that caffeine at high levels is an insect repellent; finding a beneficial effect of low caffeine would be an interesting development. The results here are consistent with that, but certainly not sufficient. For example... Would it be possible to do field studies and show that increased caffeine leads to more pollination?
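As a rough check on the "about three-fold" improvement noted with the graph, here is a minimal sketch of the arithmetic. The percentages are approximate values taken from the description above (about 20% for the no-caffeine control at 24 hr, and about 60-70% at the higher caffeine levels, matching the 10-minute score); they are not exact data from the article, and the function name is just for illustration.

```python
# Approximate percentages of "correct" responses, taken from the
# description of the graph above; these are rough readings, not exact data.
CONTROL_24H = 20         # no caffeine, 24 hr after learning
CAFFEINE_24H = (60, 70)  # higher caffeine levels, 24 hr after learning


def fold_improvement(treated_pct, control_pct):
    """Return how many times higher the treated score is than the control."""
    return treated_pct / control_pct


low, high = (fold_improvement(p, CONTROL_24H) for p in CAFFEINE_24H)

if __name__ == "__main__":
    print(f"Improvement at 24 hr: about {low:.1f}- to {high:.1f}-fold")
```

With these rough numbers the improvement comes out at about 3 to 3.5 times the control score, in line with the "about three-fold" estimate.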

News stories:

* Bees Get a Buzz from Flower Nectar Containing Caffeine. (Science Daily, March 7, 2013.)

* Coffee and Citrus Plants Boost Bee Memory With Caffeine. (C Wilcox, Science Sushi (Discover blog), March 7, 2013.)

* News story accompanying the article: Neuroscience: Caffeine Boosts Bees' Memories -- Caffeine in floral nectar enhances the memory of bees for the flowers' scent by altering response properties of neurons in the bee brain. (L Chittka & F Peng, Science 339:1157, March 8, 2013.)

* The article: Caffeine in Floral Nectar Enhances a Pollinator's Memory of Reward. (G A Wright et al, Science 339:1202, March 8, 2013.)

Posts about caffeine or coffee include...

* Added February 25, 2026. Are coffee stains useful -- in electron microscopy? (February 25, 2026).

* Chocolate: 1200 years old (February 18, 2013).

* Your desire for caffeine: It may be in your genes (May 31, 2011).

More on bees:

* What should a plant do if it hears bees coming? (December 10, 2019).

* Bees and flowers: A 30-volt story (June 21, 2013).

* Novelty-seeking behavior (May 26, 2012).

* The traveling bumblebee problem (January 11, 2011).

More about pollination:

* What if there weren't enough bees to pollinate the crops? (March 27, 2017).

* A "flower" that bites -- and eats -- its pollinator (December 27, 2013).

More about memory: Near-death experiences: are the memories real? (August 11, 2013).

Also see: Why some citrus fruits are so sour (April 22, 2019).

April 10, 2013

SO2 reduces global warming; where does it come from?

April 9, 2013

A major problem in studying "global warming" is that the effects are quite small over short time scales. Not only small, but also variable. A 100-year trend of major warming may include years, or even decades, with little or no warming.

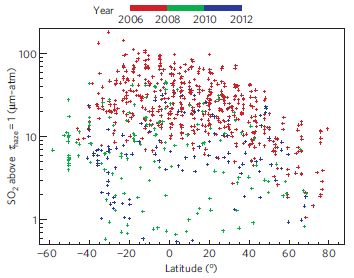

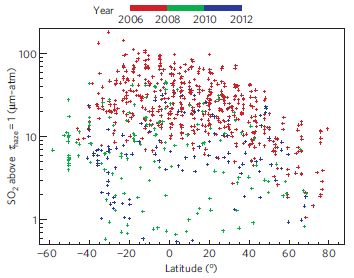

The decade of the 2000s was such a decade, with little change in global temperature. We noted this in an earlier post, which showed that increased sulfur emissions were likely responsible [link at the end]. What was not clear was the major source of the sulfur emissions. Some work suggested that volcanoes were the major source and some suggested that increased burning of coal (especially in Asia) was the major source. Both emit sulfur, in the form of sulfur dioxide, SO2. Atmospheric SO2 leads to aerosols, which cool the planet.

A new article offers some resolution to that issue. The authors show that, for the decade of the 2000s, the sulfur emissions from small to medium-sized volcanoes were the major sulfur source. This is an important finding, since the smaller events were often neglected in earlier work; they may be small, but there are many of them. (The contribution of occasional large volcanic eruptions to cooling has long been recognized.)

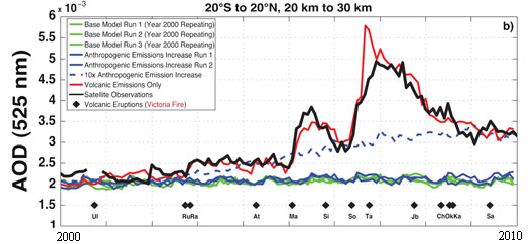

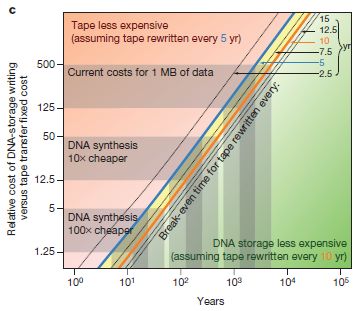

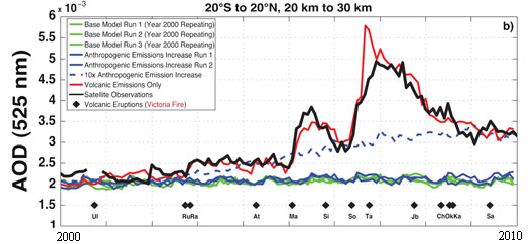

The following graph is an example of their findings.

|

The graph shows a measure of atmospheric aerosols over time, for various situations. The y-axis is the aerosol optical depth (AOD), a measure that largely reflects the sulfur emissions in the atmosphere. The x-axis is time, from the year 2000 to 2010. This particular graph is for the equatorial region, between 20° S and N.

There are several curves. Let's look at some of them. The black curve (labeled "Satellite observations") is the observed result: how much aerosol was found by actual measurement. The other curves are all based on modeling done in the new article. The red curve is their model result if they include only "volcanic emissions". The blue curves are their model results if they include only "anthropogenic emissions", that is, human-caused emissions, such as from burning coal. The solid blue curve is for their best estimate of these emissions; the dashed blue curve shows what their model predicts if the anthropogenic emissions were 10-fold higher than their estimates.

This is Figure 1b from the article.

|

You can see that the aerosol levels predicted based on volcanic emissions (red curve) agree rather well with the aerosols observed (black). Aerosols predicted from anthropogenic emissions, even elevated 10-fold (dashed blue curve), do not match the actual record.

A caution... The graph above is for the equatorial region. The full figure in the article also includes north and south temperate regions. The results for those regions are much less clear than for the equatorial region, although the volcanic contributions seem most important.

The article is a useful contribution, with more advanced modeling. It contributes to our understanding of short-term fluctuations in climate. It shows an important role for emissions from smaller volcanoes.

News story: Volcanic aerosols, not pollutants, tamped down recent Earth warming. (American Geophysical Union, March 1, 2013.)

The article: Recent anthropogenic increases in SO2 from Asia have minimal impact on stratospheric aerosol. (R R Neely et al, Geophysical Research Letters 40:999, March 16, 2013.)

Background post: Why isn't the temperature rising? (September 12, 2011). I do encourage you to go back to this post to fill in the story.

Also see:

* Predicting the "side-effects" of geoengineering? (September 23, 2018).

* Geoengineering: the advantage of putting limestone in the atmosphere (January 20, 2017).

* National contributions to global warming (June 25, 2014).

* When does global warming occur: day or night? (October 28, 2013).

* Climate change and hare color (May 10, 2013).

* Sulfur dioxide in the atmosphere of Venus (February 16, 2013).

Can one rat know what another rat is thinking?

April 8, 2013

Sure. Send the brain signal from one rat to the other.

A new article reports doing just that. We note it briefly...

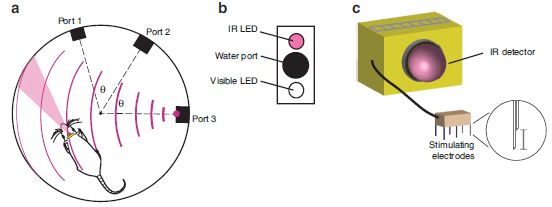

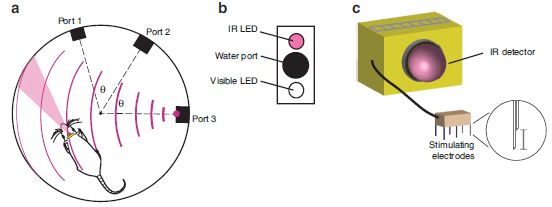

A recent post showed that a rat could respond to an infrared (IR) sensor that was connected to its brain [link at end]. As a result, the rat gained the ability to respond to IR light, effectively adding a new sense to its sensory repertoire. The new work is from the same group of scientists; in some ways, the new work is similar to that reported in the earlier post. Each is a small step in a big field involving learning about brain signals.

The authors refer to the new work as establishing a brain-to-brain interface (BTBI). The general plan of an experiment is as follows... One rat is given a behavioral test, and makes a decision. Electrodes implanted in the brain of this "encoder" rat record its brain signals. Those signals are then transmitted, in real time, to a "decoder" rat, whose actions are then observed. Both rats face the same situation; it turns out that the decoder rat does what the encoder rat had chosen -- with a significant probability.

In one test, just to emphasize the point, the scientists connected two rats on different continents. The encoder rat, making the original decision, was in Brazil; its brain signal was connected to a decoder rat in the United States, who acted on the decision made by the rat in Brazil. How did the signal get from one to the other? Via the Internet, of course. The decoder (receiver) rat did just fine at making use of the information.

This work has something of a "stunt" aspect, and some of the discussion of it includes hype about what might be done in the future. Ok, but the point is that this is all part of the big story of learning about brain signaling. In the IR post, rats learned to use the signal from an external electronic device. In the current post, brain signals are obtained from one rat and transmitted to another. In other work, we have already noted examples of humans benefiting from such applications, even though they are quite primitive at this time. As to developing computers based on such interconnected animals... Well, that illustrates a line of work the authors want to pursue. It's good that they are enthusiastic about their work. Let's see what they learn from it.

News stories:

* Intercontinental mind-meld unites two rats -- But critics are skeptical about predicted organic computer. (Nature News, February 28, 2013.)

* First direct brain-to-brain interface between two animals. (Kurzweil, March 1, 2013.)

The article, which is freely available: A Brain-to-Brain Interface for Real-Time Sharing of Sensorimotor Information. (M Pais-Vieira et al, Scientific Reports 3:1319, February 28, 2013.)

Movies. There are two movie files for this work. One shows a couple of test sequences. The second is an interview with the lab head about the work. You can find them at the lab web page: First brain-to-brain interface allows transmission of tactile and motor information between rats. (Nicolelis lab, Duke University.) (The first is also available with the article, at the journal web site.)

Background post: Can rats touch infrared light? (February 25, 2013). As noted, this work and the current work are from the same lab. This post includes links to other relevant Musings posts.

More...

* The brain-machine interface -- at the World Cup (July 2, 2014).

* Using your brain waves to log on to the computer (April 29, 2013).

And more... Computer scientist thinks; psychologist moves finger (September 24, 2013).

More about enhancing rat brain function... Can blind rats learn to use a geomagnetic compass? (June 29, 2015).

More about brains is on my page Biotechnology in the News (BITN) -- Other topics under Brain (autism, schizophrenia).

Thanks to Borislav for alerting me to this work.

Bacteria can make mouse stem cells

April 6, 2013

Briefly noted...

Last year's Nobel Prize in Physiology or Medicine was awarded, in part, to Shinya Yamanaka for discovering how to reprogram adult cells from the mammalian body back to a stem-cell-like state, known as induced pluripotent stem cells (iPSC). Turns out that some bacteria do something very similar. Leprosy bacteria.

Leprosy bacteria infect nerve cells -- a specific type of nerve cell known as Schwann cells. In the new work, scientists found that the bacteria converted some of these nerve cells to a stem-cell-like state; these stem cells could migrate, thus spreading the bacteria. Overall, it seems that the bacteria-induced stem cells promoted both the dispersal of the bacteria and some protection from the host immune system. Further, the loss of the nerve cells probably contributed to the nervous system damage that is a characteristic of leprosy. The work here is in mice; we presume, for now, that the infection follows a similar course in humans, though that has not yet been examined.

That bacteria can do what we can do should not be surprising. The lesson from Yamanaka with iPSC is that a small number of specific proteins can induce such changes in the state of differentiation. Bacteria can change the levels of host proteins; it is not surprising that bacteria can also induce changes in differentiation state. It is not surprising that they can, but it is new to find that they do.

An odd story, perhaps, but also potentially important. The new work shows that bacteria make stem-cell-like cells, but we know little about how they do it at this point. Clearly, further work will be done to learn the details of the process. This is potentially important, in two ways. First, it represents an improved understanding of the leprosy bacteria; perhaps over time it will lead to improved treatment. Second, we want to pursue this to see what the implications might be for us making stem cells.

News story: Bacteria's hidden skill could pave way for stem cell treatments. (Phys.org, January 17, 2013.)

The article: Reprogramming Adult Schwann Cells to Stem Cell-like Cells by Leprosy Bacilli Promotes Dissemination of Infection. (T Masaki et al, Cell 152:51, January 17, 2013.)

Recent post on stem cells: The role of the immune system in making stem cells (February 8, 2013).

I have more on stem cells on my page Biotechnology in the News (BITN) - Cloning and stem cells.

Why the facial tumor of the Tasmanian devil is transmissible: a new clue

April 5, 2013

Briefly noted...

We previously noted the case of the facial tumor of the Tasmanian devil [link at the end]. An unusual feature of Devil facial tumor disease (DFTD) is that it is transmissible. The animals bite each other -- and transmit the cancer; the population of Tasmanian devils has become endangered.

Transmissible cancers are extremely rare, and not understood. Usually, the immune system serves as a barrier to cancer transmission to a new host, even of the same species. Obviously that barrier is not effective with DFTD. A new article offers some explanation of what is going on with this transmissible tumor.

Briefly, the scientists find that the tumor is failing to display tumor antigens, thus making the tumor invisible to a new host. That differs from the earlier expectation that the devil tumor might not have any distinctive tumor antigens. Further, they think that the failure to display the tumor antigens is not due to a mutation in the display system, but to some operational problem. The display system is there, but is turned off. In fact, in the lab they can get the tumor to display antigens that could be used to target it.

What are the implications? It's too early to know, but at least it represents a better understanding of a novel disease. Knowing a more specific cause of the problem allows work to proceed focusing on that specific cause. There are tumor antigens and maybe they can be expressed. Further, knowing that poor antigen display is a key issue raises some hope that a vaccine might be useful.

News story: Hope for Threatened Tasmanian Devils. (Science Daily, March 11, 2013.)

The article, which is freely available: Reversible epigenetic down-regulation of MHC molecules by devil facial tumour disease illustrates immune escape by a contagious cancer. (H V Siddle et al, PNAS 110:5103, March 26, 2013.)

Background post: The devil has cancer -- and it is contagious (June 6, 2011). Includes pictures.

An important follow-up: Immunization of devils: a treatment for a transmissible cancer? (April 24, 2017).

Another transmissible cancer? ... Is clam cancer contagious? (April 21, 2015).

More about immune systems: Bach and the immune system (August 26, 2013).

More about epigenetic marks: A DNA test that can distinguish identical twins (July 17, 2015).

April 3, 2013

Propionibacterium acnes bacteria: good strains, bad strains?

April 1, 2013

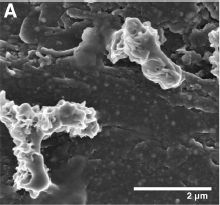

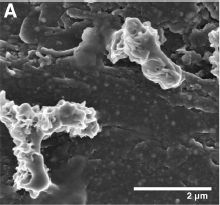

Propionibacterium acnes (P acnes) bacteria have long been associated with acne. However, the nature of the relationship is not clear. A new article offers some insight. By using more refined analysis, the scientists show that there are various kinds of P acnes bacteria -- with different relationships to the disease.

In the new work, scientists sampled the skin of 101 people, about half of whom had acne. They analyzed the bacteria found on the skin. They determined the prevalence of P acnes, but they also went further and characterized the type of P acnes.

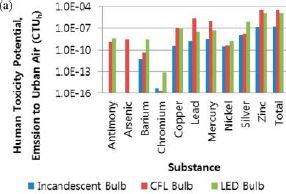

The overall prevalence of P acnes was similar in people who did or did not have acne. However, the results for specific strains were quite different. Here is a brief summary of the results for three strains.

| Strain | % of isolates, people with acne | % of isolates, people with clear skin |
| --- | --- | --- |
| RT1 | 48% | 52% |
| RT5 | 99% | 1% |
| RT6 | 1% | 99% |

As an example of how to read the table... The first row lists the results for a strain called RT1. Of all the isolates of this strain, 48% were found in people with acne, and 52% in people who did not have acne.

|

This is a condensed version of Table 1 from the article.

|

The table lists three strains of P acnes. As noted, strain RT1 is found about equally in people with or without acne. But the other two strains give quite different results. Strain RT5 is found almost entirely in people with acne, and RT6 is found almost entirely in people without acne.
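To make the reading of the table concrete, here is a minimal sketch that labels each strain by where most of its isolates were found. The percentages are copied from the condensed table above; the 90% cutoff and the function names are assumptions chosen only for illustration, and are not from the article. The labels describe an association only; they say nothing about cause.

```python
# Percentages copied from the condensed table above:
# strain -> (% of isolates from people with acne, % from people with clear skin)
ISOLATE_SPLIT = {
    "RT1": (48, 52),
    "RT5": (99, 1),
    "RT6": (1, 99),
}

# Hypothetical cutoff, for illustration only; the article does not define one.
SKEW_CUTOFF = 90


def describe_strain(pct_acne, pct_clear, cutoff=SKEW_CUTOFF):
    """Label a strain by where most of its isolates were found."""
    if pct_acne >= cutoff:
        return "mostly acne"
    if pct_clear >= cutoff:
        return "mostly clear skin"
    return "about equal"


def summarize(split=ISOLATE_SPLIT):
    """Return a strain -> label mapping for every strain in the table."""
    return {strain: describe_strain(*pcts) for strain, pcts in split.items()}


if __name__ == "__main__":
    for strain, label in summarize().items():
        print(f"{strain}: {label}")
```

Any reasonable cutoff gives the same picture for these three strains, since RT5 and RT6 are so strongly skewed and RT1 is so close to an even split.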

Not all P acnes are equal! Recognizing this may be an important step toward understanding the relationship of these bacteria to the disease. However, we must once again emphasize that we do not know what that relationship is. For example, the results here are consistent with two quite different models...

* It is possible that strain RT6 is a "good" strain, which helps prevent the growth of a "bad" strain such as RT5. In this case, providing people with the good strain might be beneficial.

* However, it is also possible that acne creates conditions on the skin where strains such as RT5 can grow; in this model, the bacteria are a result of the disease, not a cause.

These alternative models are not new. And there are more possibilities. What's new is that we now know it is not sufficient to just say P acnes; we need to look at specific strains. Thus the new article does not solve the acne problem, but it allows a new type of testing to proceed.

News story: Got Pimples? You May Need Better Bacteria. (Science Now, February 27, 2013.)

The article: Propionibacterium acnes Strain Populations in the Human Skin Microbiome Associated with Acne. (S Fitz-Gibbon et al, Journal of Investigative Dermatology 133:2152, September 2013.)

More about the bacteria associated with acne:

* Acne, grapevines, and Frank Zappa (August 1, 2014).

* A virus that could treat acne? (October 21, 2012).

More competition between skin bacteria... Staph fighting Staph: a small clinical trial (April 8, 2017).

A broader view of our microbes: Sharing microbes within the family: kids and dogs (May 14, 2013).

The benefit of providing alcohol to the eggs

March 30, 2013

Given a choice, fruit flies may choose to lay their eggs where there is a high concentration of alcohol (ethanol). Why? To protect their offspring from parasitic wasps, which would lay their eggs in the larval flies. The wasps cannot tolerate the alcohol. On the other hand, the flies, whose natural environment is fermented fruit, can tolerate alcohol.

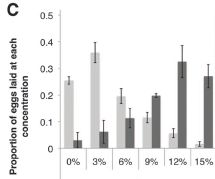

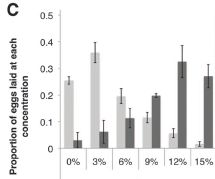

|

This figure shows the basic observation.

Female fruit flies were offered dishes with various concentrations of ethanol, from 0% to 15%. Some were exposed to female parasitic wasps, which could threaten their offspring; some were not.

The bar height shows the fraction of the flies that laid their eggs at the indicated ethanol concentration.

|

The dark bars are for flies exposed to a female wasp. These flies tended to lay their eggs in dishes with high levels of ethanol. About 80% of them chose one of the three highest levels.

In contrast, flies that were not exposed to a female wasp (light bars) tended to lay their eggs in dishes with low levels of ethanol. About 80% of them chose one of the three lowest levels.

This is Figure 1C from the article.

|

Thus it is clear that the flies respond to the presence of the female wasps in a way that benefits the fly offspring. (The flies do not respond to male wasps -- just to the females, the ones that lay eggs in their offspring.) The authors consider this something of an immune response. More specifically, they call it a behavioral immune response, an interesting idea. They also refer to it as medication; the article title uses this terminology.

How do the flies know there is a female wasp around? The scientists do additional experiments in which they manipulate the sensory responses of the flies. Flies with their olfactory (smell) system disrupted respond normally in these tests. However, flies with their visual system disrupted fail to respond to the wasps. Thus the scientists conclude that the flies detect the wasps -- and distinguish male and female -- visually.

News stories:

* Fruit flies defend eggs using alcohol. (Naked Scientists, February 21, 2013.)

* Fruit flies medicate their larvae with alcohol. (Phys.org, February 22, 2013.)

The article: Fruit Flies Medicate Offspring After Seeing Parasites. (B Z Kacsoh et al, Science 339:947, February 22, 2013.)

More about parasitic wasps: Cockroach should be disinfected before eating it (February 12, 2013).

More on fruit flies:

* "Moonwalkers" -- flies that walk backwards (May 28, 2014).

* Making smarter flies (July 18, 2012).

Bat meets spider

March 29, 2013

|

Sometimes, the spider wins.

"Dead bat (Rhinolophus cornutus orii) caught in the web of a female Nephila pilipes on Amami-Oshima Island, Japan (photo by Yasunori Maezono, Kyoto University, Japan; report # 35)." That's from the figure legend; this is Figure 2 part I from the article.

|

A new article is about spiders catching bats. Apparently, not much was known about the topic. That led a scientific team to do an extensive search to see how many incidents they could find. The article is a compilation of all the reports they found: 52 of them.

The article lists the incidents, and has separate tables for the types of spiders and types of bats involved; the tables include the weights of the predator and prey, as best they know them. And the article includes a figure with 12 photos; one of those photos is shown above.

Most of the reported incidents involved a spider catching a bat in its web, a testament to the strength of spider silk. Some involved active predation by large spiders, such as tarantulas.

With only 52 cases found after an extensive search, it would seem that spiders capturing bats is still an uncommon occurrence. But the authors' point is that it is more common than appreciated, and we don't know how often it occurs in natural settings. Findings of giant spider webs across the entrances to bat caves are intriguing; of course, we don't know what is actually caught in those webs.

The article starts with an overview of some unusual feeding habits of spiders; that is worth a look.

News story: Spiders eat bats all the time, scientists reveal -- The capture and killing of small bats by spiders might be more common than previously thought, show recent studies of bat predation by spiders. (Christian Science Monitor, March 18, 2013.)

The article, which is freely available: Bat Predation by Spiders. (M Nyffeler & M Knörnschild, PLoS ONE 8(3):e58120, March 13, 2013.)

More about spiders and spider silk...

* Spider silk: Can you teach an old silkworm new tricks? -- Update (February 11, 2012).

* Tarantulas in the trees (November 11, 2012).

* Spiders in the sky (February 20, 2013).

More about bats...

* Little yellow-shouldered bats -- and the Guatemalan bat flu (March 30, 2012).

* Should you get a rabies vaccination before boarding an airliner? (May 7, 2012).

* Baseball and violins (May 15, 2012).

March 27, 2013

How many moons hath Pluto? Follow-up

March 26, 2013

Original post: How many moons hath Pluto? (July 20, 2012). That post, less than a year ago, presented the newly discovered fifth moon of Pluto. Aside from the fun of discovery, knowing the moons of Pluto is important because we have a spacecraft on its way to Pluto; the success of the New Horizons mission depends on the spacecraft not hitting the moons. We ended that post by wondering, at least implicitly, how many more, undiscovered, moons Pluto might have.

Here we have a new study with something of an answer to that question. Now, we need to be clear: the authors did not find anything; they did not even look. Rather, they ran computer simulations of how they think moon formation occurs around Pluto. The following figure summarizes one of their computer results.

|

Pluto and its moons: what the region might look like, based on computer simulation.

Pluto and its large moon Charon are in the center. The four other known moons are shown in white (above and to the right). Three possible new moons, not yet discovered, are shown in green (near bottom). Also shown, in blue, is the disk of rings of dust from which the smaller moons presumably have condensed.

This is from the Astrobites news story. It is also Figure 13 from the article.

|

That figure shows three more moons. But the authors don't know how many there might be. One estimate is that there might be ten more -- all too small to detect by current methodologies, but large enough to damage the New Horizons spacecraft. The message? New Horizons will have to fend for itself as it approaches Pluto, looking for unknown moons and adjusting its course as needed.

By the way, that disk around Pluto has never been seen either. It's for New Horizons to find and measure -- data that will feed back to the models of how the moons formed. That is, the computer simulations here and the upcoming observations by New Horizons will not only serve to protect the spacecraft, but also to enhance our understanding of moon formation.

The number of moons of Pluto is both fun and important. Stay tuned.

News story: The Many Moons of Pluto. (Astrobites, March 8, 2013.)

The article: The Formation of Pluto's Low Mass Satellites. (S J Kenyon & B C Bromley, Astronomical Journal 147:8, January 2014.) A copy of the manuscript, as accepted for publication, is freely available at the arXiv.

More about a dwarf planet: Ceres is leaking (February 18, 2014).

A virus with an immune system -- stolen from a host?

March 25, 2013

A virus with an immune system. Fascinating -- and a bit confusing at this point.

As background, we need to introduce the adaptive immune system of bacteria, which was recognized only a few years ago. This system, commonly known as CRISPR, learns from previous infections, and protects the bacteria against future infections by the same agent. It does this by incorporating a bit of the genome of the infecting agent into its own genome, and then making RNA from that copy to watch for and protect against new infections. This immune system is, in some ways, logically similar to our adaptive immune system: it learns (that is, it is adaptive), and it retains its immunological "memory" by changes in its own genome. Of course, the mechanisms of these two adaptive immune systems are quite different.

And now? A virus -- a bacteriophage, a virus that infects bacteria -- that has a CRISPR-type immune system, and uses it to defend against its host.

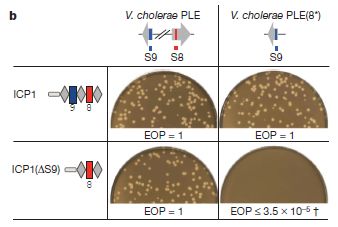

The story starts rather accidentally. The scientists sequenced the genome of the phage they were studying, and found something that looked like a bacterial CRISPR system. That's odd. Does it function? Here is a test...

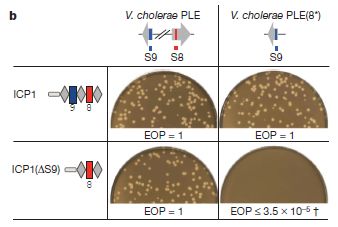

|

This figure shows that the CRISPR "immune system" in the phage is active, and helps the phage grow in its bacterial host.

It's complex, with a lot of jargon. But the key points can be made simply. Let's look. First, look at the results, as shown by the photos. Each photo shows whether the phage can grow on the bacteria under a specific set of conditions. The little light spots (holes, or "plaques" as they are called in virus work) are a positive result, showing that the phage grew. (The results are also summarized by a number shown immediately below each photo. The number is called efficiency of plating, or EOP. EOP = 1 means the phage grew well; a low EOP means it did not.)

|

You can see that the phage grew well in three of the four cases (lots of plaques, and EOP = 1), and very poorly in the fourth case (no plaques, low EOP). What's special about that case? They had modified the phage and the bacteria so that the phage CRISPR no longer targeted the host. With the phage no longer protected by its "immune system", it was unable to grow.
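For readers who like to see the arithmetic: EOP is conventionally a ratio, the number of plaques the phage makes on the test host divided by the number it makes on a reference host where it grows well. Here is a minimal Python sketch of that ratio. The function name and the plaque counts are made up for illustration; they are not data from the article, and which plate the authors used as their reference is not stated here.

```python
def efficiency_of_plating(plaques_test, plaques_reference):
    """Plaque count on the test host divided by the count on a reference host.

    Both counts are assumed to come from the same phage stock at the same dilution.
    """
    if plaques_reference <= 0:
        raise ValueError("the reference plate must have at least one plaque")
    return plaques_test / plaques_reference


# Hypothetical counts, for illustration only (not taken from the article).
print(efficiency_of_plating(98, 100))  # close to 1: the phage grows well
print(efficiency_of_plating(0, 100))   # zero: no plaques on the test host
```

A value near 1 corresponds to the "lots of plaques" photos; a value near zero corresponds to the photo with no plaques.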

You can skip down to below the figure explanation if you want; the key idea is above. But, if you'd like a bit more detail...

The experiment shown above involved two bacterial host strains and two phage strains. Two strains of the host bacteria are listed across the top. One (PLE, the wild type) contains two sequences that are relevant here: S8 and S9 (red and blue, respectively). These sequences are part of a host system that helps protect the bacteria against the phage. The bacteria on the right [PLE(8*)] are modified, so that S8 looks different; it still functions, but its specific gene sequence is different. (This involves making so-called silent or synonymous changes, which affect the gene, but not the protein it codes for.)

Two phage strains are shown at the left. The first (ICP1) is wild type; its immune system targets both regions 8 & 9. But the second phage [ICP1(ΔS9)] has been constructed with the response against 9 deleted. The second phage can target 8 but not 9.

Look at the upper left case: wild type phage growing in wild type bacteria. The phage can defend against both 8 and 9 -- and grows fine. This is the key control. Now look at the lower right case. The phage cannot target 8, because 8 has been modified in the bacteria. And the phage cannot target 9 because its response to 9 has been deleted. Thus the phage in the lower right case cannot target either 8 or 9 -- and cannot grow. This shows that its immune response is needed for growth.

(The other two cases involve partial responses. The results here are not easily predicted, and are not part of the key story.)

This is Figure 3b from the article.
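The logic of the four cases can be written out as a tiny Python sketch. This is a toy model, not the authors' analysis. It assumes one simple rule: the phage grows if its CRISPR can still target at least one of the host sequences. That rule reproduces the outcomes described above (three cases grow, one does not), but it says nothing about why the two partial cases behave as they do. The dictionary and function names are invented for illustration, and "ICP1(dS9)" is an ASCII stand-in for ICP1(ΔS9).

```python
# Toy model of the 2x2 experiment (a simplification, not the authors' method).

# Which host sequences each phage strain's CRISPR can target.
PHAGE_TARGETS = {
    "ICP1": {"S8", "S9"},   # wild type phage: targets both
    "ICP1(dS9)": {"S8"},    # response against S9 deleted
}

# Which sequences each host strain carries in a form the phage can recognize.
HOST_SEQUENCES = {
    "PLE": {"S8", "S9"},    # wild type host
    "PLE(8*)": {"S9"},      # S8 recoded with silent changes, no longer matched
}


def phage_grows(phage, host):
    """Assumed rule: growth needs at least one targetable host sequence."""
    return bool(PHAGE_TARGETS[phage] & HOST_SEQUENCES[host])


def outcome_table():
    """Return {(phage, host): grows} for all four combinations."""
    return {
        (phage, host): phage_grows(phage, host)
        for phage in PHAGE_TARGETS
        for host in HOST_SEQUENCES
    }


if __name__ == "__main__":
    for (phage, host), grows in outcome_table().items():
        print(f"{phage:10} on {host:8}: {'grows' if grows else 'no growth'}")
```

Only the combination of ICP1(dS9) with PLE(8*) leaves the phage with nothing to target, which is the lower right case in the figure.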

|

Overall, they make a good case that the CRISPR immune system is functioning in this virus, and is defending the virus against the host. It is a novel finding.

How did this virus acquire this "immune system"? We don't know. Given what we know about CRISPR, it seems plausible that this bacterial virus "stole" it from a bacterial host. There is a catch, however. The virus grows on Vibrio cholerae bacteria. To our knowledge, this species of bacteria does not contain a CRISPR system. Perhaps there are strains that do carry it, and we do not know about them yet. Or perhaps there is a more complex story somewhere back in history. In any case, this may well be an example of horizontal gene transfer. Perhaps one reason CRISPR is widespread among the bacteria is that viruses are helping to move it around. Are there other viruses with CRISPR systems? How is the CRISPR system maintained in the virus? More mysteries. The current paper is the first report of a phage with what we thought was a bacterial immune system.

News story: Viruses can have immune systems, new research shows. (Phys.org, February 27, 2013.) Too much hype, but it is also a useful overview. (The hype comes from the original news release from the university, and was repeated in many news stories. As an example, the item says that "The study lends credence to the controversial idea that viruses are living creatures...". It does no such thing. The study shows that a virus may have this particular function; it says nothing about the grand status of viruses.)

* News story accompanying the article: Virology: Phages hijack a host's defence. (M Villion & S Moineau, Nature 494:433, February 28, 2013.)

* The article: A bacteriophage encodes its own CRISPR/Cas adaptive response to evade host innate immunity. (K D Seed et al, Nature 494:489, February 28, 2013.)

More on horizontal gene transfer (HGT):

* An extremist alga -- and how it got that way (May 3, 2013).

* GEBA: B -- revisited or Horizontal gene transfer: the web of life? a challenge to evolutionary theory? (March 26, 2010).

More about CRISPR:

* CRISPR: an overview (February 15, 2015).

* CRISPR: What's it doing to help bacteria carry out infections? (September 8, 2013).

* Exploiting the bacterial immune system as a tool for genetic engineering: The Caribou approach (May 4, 2013).

More about cholera bacteria: Designing a probiotic that fights cholera (December 13, 2010).

Bacteria on human teeth -- through the ages

March 24, 2013

|

A human jaw, labeled as "prehistoric". With teeth. With tooth decay.

This is trimmed and reduced from a figure in the National Geographic news story.

|

What makes this interesting is that scientists were able to extract DNA from the decayed teeth. The calcified dental plaque seems to protect the bacterial DNA from being lost over time. They then identified the types of bacteria that were present. They did this for 34 early European skeletons, spanning several thousand years and various lifestyles. From their results, they concluded that the nature of the human oral microbiota -- the bacteria in our mouth -- changed at two key times in human history. One was the transition from hunter-gatherer to farmer, and the other was the industrial revolution. They further suggest that these changes are important for understanding modern oral disease.

It's interesting that they were able to do this. It is another testament to the revolution in sequencing DNA -- and in carefully handling ancient DNA. I think it's fair to take the conclusions here as preliminary. In the big scheme of things, they have a small data set at this point, only 34 individuals. What is important is that they have established the approach; more data -- a wider variety of samples -- is bound to come. We are just beginning a story of how the human oral microbiota has adapted to changing human lifestyles.

News stories:

* Calcified Bacteria Sheds Light on the Health Consequences of the Evolving Diet. (SciTech Daily, February 18, 2013.)

* Prehistoric Plaque and the Gentrification of Europe's Mouth. (E Yong, Not Exactly Rocket Science (National Geographic blog), February 17, 2013.) Now archived.

The article: Sequencing ancient calcified dental plaque shows changes in oral microbiota with dietary shifts of the Neolithic and Industrial revolutions. (C J Adler et al, Nature Genetics 45:450, April 2013.)

A recent post on the gut microbiota: Malnutrition: is more (or better) food the answer? (March 8, 2013).

A recent post on the skin microbiota: A virus that could treat acne? (October 21, 2012).

For a broader perspective on our microbiota: Sharing microbes within the family: kids and dogs (May 14, 2013).

More from dental plaque: Division by 14: a new mode of bacterial growth (November 13, 2024).

More from the analysis of old DNA: Tracking the pathogen of the Irish potato blight (June 25, 2013).

More from the study of old teeth:

* How to eat if your jaw looks like a circular saw -- a follow-up (March 8, 2015).

* The case of the missing incisors: what does it mean? (September 13, 2013).

More about tooth decay:

* How the teeth are sensitive to cold (June 14, 2021).

* Is fluoride neurotoxic to the human fetus? (December 13, 2017).

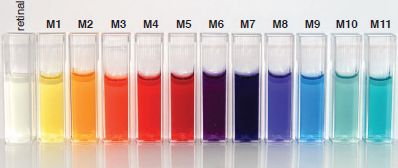

Also see: It's a dog-eat-starch world (April 23, 2013).