Musings: May-August 2017 (archive)

Musings is an informal newsletter mainly highlighting recent science. It is intended as both fun and instructive. Items are posted a few times each week. See the Introduction, listed below, for more information.

If you got here from a search engine... Do a simple text search of this page to find your topic. Searches for a single word (or root) are most likely to work.

Introduction (separate page).

This page:

2017 (May-August)

August 30

August 23

August 16

August 9

August 2

July 26

July 19

July 12

July 5

June 28

June 21

June 14

June 7

May 31

May 24

May 17

May 10

May 4

Also see the complete listing of Musings pages, immediately below.

All pages:

Most recent posts

2026

2025

2024

2023:

January-April

May-December

2022:

January-April

May-August

September-December

2021:

January-April

May-August

September-December

2020:

January-April

May-August

September-December

2019:

January-April

May-August

September-December

2018:

January-April

May-August

September-December

2017:

January-April

May-August: this page, see detail above

September-December

2016:

January-April

May-August

September-December

2015:

January-April

May-August

September-December

2014:

January-April

May-August

September-December

2013:

January-April

May-August

September-December

2012:

January-April

May-August

September-December

2011:

January-April

May-August

September-December

2010:

January-June

July-December

2009

2008

Links to external sites will open in a new window.

Archive items may be edited, to condense them a bit or to update links. Some links may require a subscription for full access, but I try to provide at least one useful open source for most items.

Please let me know of any broken links you find -- on my Musings pages or any of my regular web pages. Personal reports are often the first way I find out about such a problem.

August 30, 2017

Fixing nitrogen -- can U help?

August 29, 2017

Nitrogen is an important part of life. There is an abundant supply of nitrogen: about 80% of air is nitrogen. However, it is in the form of N2, which is very unreactive.

There are processes for "fixing" nitrogen: converting it from the N2 in the air to something useful, such as ammonia, NH3. Both the biological and industrial processes of nitrogen fixation require considerable energy, simply to break the triple bond in the raw material.

A recent article may open up a new approach. The scientists take steps toward developing a process for fixing nitrogen under mild conditions, at room temperature.

The following figure shows the idea. Caution... In discussing the figure we will see a lot of oxidation states (or charges) on individual atoms. They are not easy to figure out from the figure. They are based, in part, on additional evidence not presented here. It will be good if, at the end, you see how the oxidation states changed, but otherwise don't get bogged down with them.

Compound 1, at the top, is the central player. It's complicated; just focus on a few atoms, shown in color.

There are two uranium atoms, in green. They are labeled UIII, meaning that they are in oxidation state +3.

There is a nitrogen atom (dark blue) between the two U atoms. That N is -3.

There are three potassium atoms (light blue) around that N. They are common K cations, +1.

The rest is just scaffolding.

Now look at the reaction... Expose that compound 1 to nitrogen gas, at atmospheric pressure and room temperature. They react to form compound 2, shown at the bottom.

What's the difference? First, there are now three N in the product. The top two are bonded together. That's the N2 that reacted. The two N are still together, but they are also bonded to other things. At least, that stable triple bond of the N2 has been broken. Those two N are now -2 each.

And the two U are now +5 each, UV.

This is part of Figure 2 from the article.

Overall, the N2 from the air has been incorporated into the big molecule. The very stable N2 has been partially broken; that's a big step.

The two N atoms of the original N2 have been reduced from 0 to -2. That is, they gained a total of four electrons. From where? From the uranium atoms, each of which was oxidized from +3 to +5.
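The electron bookkeeping in the paragraph above can be checked with a few lines of arithmetic. This is only a sketch using the oxidation states quoted in this post; the variable names are mine, not from the article.

```python
# Oxidation states as quoted in the post (names are illustrative).
N_ATOMS = 2                 # the two N atoms of the original N2
N_BEFORE, N_AFTER = 0, -2   # oxidation state of each N, before and after
U_ATOMS = 2                 # the two uranium atoms in compound 1
U_BEFORE, U_AFTER = 3, 5    # oxidation state of each U, before and after

# Reduction lowers the oxidation state: each N gains (before - after) electrons.
electrons_gained_by_n = N_ATOMS * (N_BEFORE - N_AFTER)

# Oxidation raises the oxidation state: each U loses (after - before) electrons.
electrons_lost_by_u = U_ATOMS * (U_AFTER - U_BEFORE)

print(f"Electrons gained by N: {electrons_gained_by_n}")
print(f"Electrons lost by U:   {electrons_lost_by_u}")
print("Balanced:", electrons_gained_by_n == electrons_lost_by_u)
```

The two totals agree: the four electrons that the nitrogen atoms gain are the four that the uranium atoms give up.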

And it all happened under quite mild conditions. That's what makes this of interest.

What next? The scientists try various things, with limited success. They add hydrogen gas, and make NH3. That would be nice, but it really isn't very efficient. They try other things; the full Figure 2 shows some of them (often without all the details). It shows there are possibilities.

Using uranium to reduce atmospheric nitrogen gas isn't a totally new idea. Fritz Haber was aware of the possibility a century ago. The new work seems to be the first case where a specific well-defined U compound has been shown to reduce N2 under mild conditions. It's a start; the scientists think it is the basis for further development.

News story: A uranium-based compound improves manufacturing of nitrogen products. (Phys.org, July 19, 2017.)

The article: Nitrogen reduction and functionalization by a multimetallic uranium nitride complex. (M Falcone et al, Nature 547:332, July 20, 2017.)

More nitrogen fixation...

* A better way to make ammonia, using lithium? (July 19, 2021).

* Using light energy to power the reduction of atmospheric nitrogen to ammonia (May 20, 2016).

* How soybeans set up shop for fixing nitrogen -- and how we might do better (December 2, 2019).

* The downside of nitrogen fixation? (November 4, 2017).

A recent U post: Role of biological processing in the formation of a uranium ore (June 30, 2017).

A post about another triple bond... A new form of carbon: C18 (September 24, 2019).

This post is listed on my page Introductory Chemistry Internet resources in the section Lanthanoids and actinoids.

The Paris climate agreement: How are we all doing?

August 28, 2017

The two posts preceding this (immediately below) are about aspects of the science and economics of climate change. In both cases, the message may be that the story is more complex than we might have thought.

At least we have the Paris agreement. The countries of the world have come together and agreed to solve the problem, or at least to make good progress. Right. That story, too, may be more complex than we might have thought.

The "essay" (or "comment" story) listed below caught my attention in that spirit. It's a provocative discussion of the nature of the Paris agreement and the action following. I encourage you to read it.

Comment story, freely available: Prove Paris was more than paper promises -- All major industrialized countries are failing to meet the pledges they made to cut greenhouse-gas emissions. (D G Victor et al, Nature 548:25, August 3, 2017.) The authors are from the US, EU and Japan (as listed at the end). That explains their emphasis on those areas.

The two posts immediately below are on climate change issues.

More about climate change: Climate change and sea level (October 2, 2017).

Aerosols and clouds and cooling?

August 27, 2017

You've probably heard that aerosols help cool the Earth, in part by their effect on clouds. That may be, but there is considerable uncertainty about how much effect they have.

A recent article reports some interesting results that help scientists test the effect of aerosols on clouds. The article is based on measurements made during an eruption of a volcano in Iceland. For six months, Holuhraun emitted sulfur dioxide into the atmosphere at a rate about equal to the entire SO2 emissions of the European Union. This is a fissure eruption, with a steady, non-explosive release of material through cracks in the volcano wall.

The resulting SO2 plume was easily tracked. The scientists have good data about how much SO2 was released. The question is, what were its effects? Here are a couple of examples...

Frame b (top) shows the droplet size in the clouds for two time periods.

The y-axis is the fraction of droplets at various sizes, as shown on the x-axis. reff is the effective radius of a droplet.

The two time periods are

- 2014 (blue), much of which included the eruption;

- 2002-13 (green), prior to the eruption.

The pattern is clear: the droplets were smaller in 2014. One way to see this... The peak of the curve for 2014 is about 12 µm; for the preceding reference (or "control") years, it is about 14 µm.

Frame d (bottom) is similar, except that what is plotted on the x-axis is the LWP. That's the liquid water path. It is the amount of water in a vertical column of a given area. (That's why it is in grams per square meter.) Imagine a square meter area; the LWP is all the water in the column above that area, regardless of how high it is (near the top of the cloud, or near the bottom).

The LWP is about the same for both periods.

This is from Figure 2 of the article.

To summarize, the two graphs above show that 2014 led to smaller droplets in the clouds, but no significant change in the amount of water.

The first result was expected, due to the major injection of SO2-based aerosols from the volcano. The second result was contrary to some expectations; many models suggest that aerosols have effects on clouds beyond simply changing drop size. The current work shows no evidence for such additional effects.

(The smaller drop size leads to greater reflectance. That is, it leads to cooling by reflecting the incoming sunlight. The effect is small.)

There is much about the article that is confusing, apparently even to experts in the field. The authors note that they do not know how general their result is. Therefore, conclusions must be tentative. That's fine. That's how science works. There are various models of what might happen. People collect data. We now have one data set on this matter. Is it representative? The only way to know is to get more data.

For the sake of discussion, let's accept the result here. It says that clouds are less affected by aerosols than some thought. As noted, current models for the effects of climate change have different predictions on this point. The results will help refine climate change modeling.

The authors also note that it may really be ok to clean up aerosol pollution. There has been some concern that doing so might enhance warming; if the current results hold, that may not be important.

News stories:

* Role aerosols play in climate change unlocked by spectacular Icelandic volcanic eruption -- Cloud systems 'well buffered' against aerosol changes in the atmosphere, research shows. (Science Daily, June 21, 2017.)

* Volcano reveals simpler than expected cloud-climate response to tiny aerosol particles. (R Allan, Climate Lab Book, July 7, 2017.) By an author of the article. The comment section at the end of the page is fairly high quality; this seems to be a page for experts. But also note that the conclusions are not clear.

* News story accompanying the article: Climate science: Clouds unfazed by haze. (B Stevens, Nature 546:483, June 22, 2017.)

* The article: Strong constraints on aerosol-cloud interactions from volcanic eruptions. (F F Malavelle et al, Nature 546:485, June 22, 2017.)

A post about aerosols and climate change: Why isn't the temperature rising? (September 12, 2011).

More about aerosols...

* Atmospheric aerosols from passive (non-erupting) volcanoes -- a re-evaluation (February 13, 2023).

* Predicting the "side-effects" of geoengineering? (September 23, 2018).

* Reducing diesel emissions from ships (March 3, 2018).

More about sulfur pollutants: The importance of HPMTF in the atmosphere (June 9, 2020).

There are three consecutive posts in the broad area of climate change: this one, and the ones immediately above and below.

More from Iceland: How horses learned to walk (September 21, 2016).

More about volcanoes: How frequent are volcanic eruptions that are truly catastrophic? (April 10, 2018).

Economic analysis of the damages (and benefits) from climate change

August 26, 2017

Climate changes. What difference does it make? Well, various things may happen.

Does it really matter?

One way to address that question is to do an economic analysis. What are the economic consequences of the changes? Not just the effect on one group, but overall, considering all possible effects. That's a standard approach in economics.

A new article presents a new economic analysis of climate change. It's probably the most advanced economic modeling yet developed in this field. One feature is that it addresses a quite fine scale: counties in the United States.

Here is an example of the results...

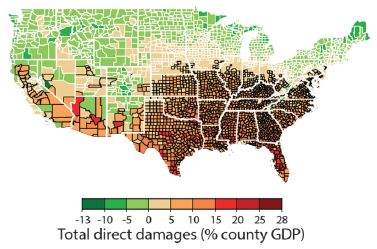

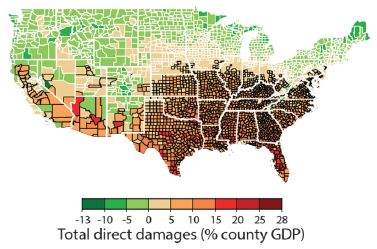

The map shows the economic effect of climate change from one model, projected to 2080-2099. The map is by county for the contiguous United States.

The effect is the projected change in gross domestic product (GDP). The magnitude is color-coded; see the key at the bottom. Reds show damage, greens show negative damage -- that is, benefit.

The big picture is clear: economic damage as high as 28% in the southeastern part of the country; economic benefit -- as high as 13%, but usually smaller -- in the north.

This is Figure 2I from the article. Parts A-H of the full figure each show a similar map for one type of effect. Those effects are agricultural yields, mortality rates, electricity demand, labor supply -- exposed to outdoor climate or not, coastal storms, property-crime rates and violent-crime rates. The map here, part I, shows the overall effect (with all the effects converted to economic terms, and weighted appropriately).

What's the model? It's complex, of course. If you want a sense of the design, here is a figure that gives an overall diagram of the model: The model [link opens in new window]. It shows the major modules in the model, and the types of data that go into the calculations.

This figure is from the news story by Pizer accompanying the article.

What do we make of this? Such models are one approach to seeing the consequences. But be careful. The model is intended to be comprehensive, or at least to show major effects. Maybe it is, or maybe not. The model makes assumptions, and uses data. Some of the assumptions may be wrong, or at least subject to differences in opinion. Outputs from such models are not objective facts. But they can be useful.

A simple example... The figure above shows that the economic damages vary with location. In fact, some places will benefit from a warmer climate. That's neither new nor surprising, but it is a point that sometimes gets lost in the rhetoric. The overall economic damage for the US is fairly small, according to their model: about 1.2% of GDP per degree (Celsius). But that small overall number hides huge effects, which are important.
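To get a feel for the scale of those numbers, here is a rough sketch. It uses only figures quoted in this post, and it assumes that national damage scales linearly with warming; that linear scaling and the function name are my simplifications for illustration, not the authors' model.

```python
# Figures quoted in the post.
DAMAGE_PCT_GDP_PER_DEGREE_C = 1.2   # national average, per degree Celsius
WORST_COUNTY_DAMAGE_PCT = 28.0      # southeastern counties on the 2080-2099 map
BEST_COUNTY_BENEFIT_PCT = 13.0      # northern counties on the same map

def national_damage_pct(warming_c):
    """Percent of US GDP lost, assuming damage is linear in warming.

    The linear form is an assumption made for this illustration only.
    """
    return DAMAGE_PCT_GDP_PER_DEGREE_C * warming_c

# Gap between the worst-hit and the most-benefited counties on the map.
county_spread_pct = WORST_COUNTY_DAMAGE_PCT + BEST_COUNTY_BENEFIT_PCT

for warming in (1.0, 2.0, 3.0):
    print(f"{warming:.0f} C of warming -> about {national_damage_pct(warming):.1f}% of GDP")
print(f"County-level spread on the map: {county_spread_pct:.0f} percentage points")
```

The national figure stays in the low single digits, while the gap between counties on the map is an order of magnitude larger. That is the sense in which the small overall number hides huge effects.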

One use of modeling is sensitivity testing. How do the results depend on various assumptions? For example, how much difference does it make whether the growing season in the north is extended by 5 days or 10 days? This kind of analysis is useful in understanding a complex system.
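The idea of a sensitivity test can be sketched in a few lines. Everything in the block below is hypothetical: the toy model, its linear form, and the 0.1% per day coefficient are invented purely to show the procedure, and have nothing to do with the numbers in the article.

```python
def toy_growing_season_gain(extra_days, gain_pct_per_day=0.1):
    """Hypothetical toy model, NOT from the article.

    Returns a percent gain in some northern output, assumed to be
    linear in the number of extra growing days.
    """
    return extra_days * gain_pct_per_day

def one_at_a_time_sensitivity(model, low, high):
    """Change in the model output when one input moves from low to high."""
    return model(high) - model(low)

# How much does the answer change if the season is extended by 10 days instead of 5?
change = one_at_a_time_sensitivity(toy_growing_season_gain, 5, 10)
print(f"Output changes by {change:.2f} percentage points")
```

In a real model one would repeat this for each uncertain input, holding the others fixed, and see which inputs move the result the most.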

Modeling such as this should be part of the dialog. It is one tool for helping us project the future. Models should be critiqued and developed. In fact, the authors note that their model is flexible, making it easy to add features and data.

News stories:

* The American South Will Bear the Worst of Climate Change's Costs -- Global warming will intensify regional inequality in the United States, according to a revolutionary new economic assessment of the phenomenon. (R Meyer, The Atlantic, June 29, 2017.)

* Study maps out dramatic costs of unmitigated climate change in the U.S.. (K Maclay, University of California Berkeley, June 29, 2017.) From one of the institutions involved in the work.

* News story accompanying the article: Economics: What's the damage from climate change? -- Improved damage models put social cost of carbon estimates on a firmer footing. (W A Pizer, Science 356:1330, June 30, 2017.) A good, very readable overview of the work, including its strengths and limitations.

* The article: Estimating economic damage from climate change in the United States. (S Hsiang et al, Science 356:1362, June 30, 2017.) Check Google Scholar for a freely available copy. The article, too, is quite readable, perhaps surprisingly so given the inherent complexity. The authors describe the big issues, and spend considerable time discussing the uncertainties. Of course, there is vast detail about the modeling that is beyond the content of the article itself.

Most recent post on climate change: Was there a significant slowdown in global warming in the previous decade? (May 30, 2017).

There are three consecutive posts in the broad area of climate change: this one, and the two immediately above (August 27 and 28).

Among many posts about global warming...

* Global warming trend? Independent evidence (March 22, 2013).

* Global warming (August 3, 2008). Winners and losers.

More maps: A mosquito map for the United States (October 3, 2017).

August 23, 2017

Evidence for brain damage in players of (American) football at the high school level

August 23, 2017

Musings has discussed the problem of long term implications of head injuries for those who play (American) football [link at the end].

A new article adds to the data, with some evidence that those who play only at the high school level may be at risk.

The heart of the new work was to examine 202 brains that had been donated to a brain bank. The criterion for inclusion in the current study was that the person had played football.

It's important to emphasize that this is not in any way a random sample. It is likely that the donation of a brain was influenced by the person having difficulties. (Most commonly, the brain is donated by the family after death. It is also possible for a person to register that their own brain be donated upon death.)

The brains were examined "neuropathologically" in the lab. Tissue slices were stained in various ways, and examined with a microscope. Standard procedures. The brains were scored for chronic traumatic encephalopathy (CTE).

Here is a summary of the findings.

| Highest level of play | Total | Mild CTE | Severe CTE |
|---|---|---|---|
| Youth | 2 | 0 | 0 |
| High school | 14 | 3 | 0 |
| College | 53 | 21 | 27 |
| Semi-pro | 14 | 4 | 5 |
| Canadian Football League | 8 | 1 | 6 |
| (US) National Football League | 111 | 15 | 95 |
| Total | 202 | 44 | 133 |

The table shows how many of the brains examined showed signs of mild or severe CTE.

To illustrate how to read the table... Look at the row for the (US) National Football League (NFL). 111 of the brains examined were from people who had played in the NFL. Of these, 15 were found to have mild CTE, and 95 were found to have severe CTE. That's a total of 110 out of 111 that showed some signs of CTE.

Now look at the row for high school. This means that the highest level of football for the person was high school. Out of 14 brains examined at this level, three had CTE, at the "mild" level.

This is largely from Table 1 of the article. The numbers for the "Total" column are taken from the text on the preceding page. The full table includes other information about the people, including standard demographics and what position they played. CTE is more prevalent in linemen than in kickers. No surprise there.

The big trend is that players who have gone on to higher levels of football have more CTE.
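The trend is easier to see as fractions. The sketch below simply recomputes them from the counts in the table above; the names are mine. Because the sample is not random, these are fractions of the donated brains, not estimates of how common CTE is among players.

```python
# (total, mild CTE, severe CTE) by highest level of play, copied from the table.
cte_counts = {
    "Youth": (2, 0, 0),
    "High school": (14, 3, 0),
    "College": (53, 21, 27),
    "Semi-pro": (14, 4, 5),
    "Canadian Football League": (8, 1, 6),
    "National Football League": (111, 15, 95),
}

def fraction_with_cte(total, mild, severe):
    """Fraction of the examined brains at one level showing any CTE."""
    return (mild + severe) / total

for level, (total, mild, severe) in cte_counts.items():
    pct = 100 * fraction_with_cte(total, mild, severe)
    print(f"{level}: {mild + severe}/{total} ({pct:.0f}%)")

# Column sums, for comparison with the table's "Total" row.
totals = tuple(sum(column) for column in zip(*cte_counts.values()))
print("Totals (brains, mild, severe):", totals)
```

The column sums reproduce the Total row of the table (202, 44, 133), which is a useful check that the rows were read correctly.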

Perhaps the most intriguing, or even most important, finding is that for the high school level. Even those who played football only through high school are at risk for CTE. As noted before, we can't make anything of the statistics because of the nature of the sample. Nevertheless, the results here suggest there is some association. This may be the first evidence that playing high school football can lead to long term brain problems.

News stories:

* High prevalence of evidence of CTE in brains of deceased football players. (Science Daily, July 25, 2017.)

* Will New CTE Findings Doom the NFL Concussion Settlement? (M McCann, Sports Illustrated, August 15, 2017.) This is a discussion of the implications of the new findings for a recent legal settlement between the NFL and the players regarding compensation for concussion injuries. The author of this page is a lawyer.

(The author byline on the page notes an upcoming symposium on the topic. It is September 13, and may be available on the web. I have not checked further, but a link is provided.)

* Editorial accompanying the article: Advances and Gaps in Understanding Chronic Traumatic Encephalopathy From Pugilists to American Football Players. (G D Rabinovici, JAMA 318:338, July 25, 2017.)

* The article: Clinicopathological Evaluation of Chronic Traumatic Encephalopathy in Players of American Football. (J Mez et al, JAMA 318:360, July 25, 2017.)

Background post: Early detection of brain damage in football players? A breakthrough, or not? (September 14, 2015).

More... Comparing the death rates of American football and baseball players (July 2, 2019).

More about trauma:

* Studying concussions in egg yolks (February 28, 2021).

* Head injuries in Neandertals: comparison with "modern" humans of the same era (February 22, 2019).

* Type O blood and survival after severe trauma? (July 7, 2018).

There is a section of my page Biotechnology in the News (BITN) -- Other topics on Brain. It includes a list of related Musings posts.

There is now an extensive list of sports-related Musings posts on my page Internet resources: Miscellaneous under Sports.

Sparing glucose for athletic endurance

August 21, 2017

If you do long-distance running, at some point you "run out of juice". Almost literally. You run out of glucose. Exercise training allows you to extend the time you can endure the activity; the body spares the glucose for its critical role. If you don't know what that critical role is, you may be surprised; more about that later.

A recent article explores the mechanism of how glucose can be spared, allowing extended endurance activity. It does this by using a drug that mimics the effect of the exercise training.

Some results... In the experiment here, mice were tested on a treadmill to see how long they could run. These were mice that had not trained for exercise; that is, they were considered "sedentary" mice.

The solid lines on the graph show blood level of glucose (y-axis; left-hand scale) vs time (x-axis) during the treadmill run for the mice.

The dashed lines, near the bottom, are for lactic acid; see the right-hand scale.

The general trend for all the glucose lines is that glucose was maintained near 140 mg/dL for some time. It then declined, eventually falling below 70 -- a critical level below which the mice could no longer run.

The red lines are for control mice, fed regular chow. The blue lines are for mice given a drug called GW (short for GW501516).

The general result is that the glucose decline occurred later for all the blue curves -- for the mice given GW. It looks like the GW allowed the mice to run about an hour longer, on average; that's about 30%.

The lactic acid data show that all the curves are similar. That is, lactic acid is not an issue here. It's not the muscles running out of fuel that causes the mice to stop.

This is Figure 2J from the article.

The experiment shows that the drug GW increases athletic endurance, as judged by this treadmill test. In that sense, the drug mimics exercise training.

What's happening? The key player is a protein called PPARδ. That's short for peroxisome proliferator-activated receptor delta. Don't worry about that archaic name; you can just say "p-par-delta". PPARδ is a transcription factor, which regulates certain genes. Of relevance here, it regulates metabolism in muscle cells. When PPARδ is activated, the muscle cells switch to using more fat and less glucose as fuel. The glucose remains available to fuel the brain, which uses only glucose (but not fat). It's the brain running out of glucose that limits endurance exercise; sparing glucose in the muscles allows the brain to endure longer.

Much of the story has been known. Exercise training leads to that shift in muscle metabolism. What's new here is finding a small molecule, a "drug", that can activate PPARδ and spare the glucose for the brain.

It's not quite so simple. Earlier work had shown that the GW drug led to some of the effects, but, by itself, did not increase endurance. The current work used a higher dose of the drug, fed over a longer time. That resulted in improved endurance, as shown above. How many pieces are there to this story?

GW may be a useful tool for probing what happens during exercise training.

Or maybe the drug can just replace the exercise.

News stories:

* "Exercise in a Pill" Boosts Athletic Endurance By 70 Percent. (Neuroscience News, May 5, 2017.) (The 70% number comes from Figure 2I. I see more like 30% from the figure above, but that is a rough estimate from the graph. The number doesn't really matter much for now. It's a substantial improvement.)

* The science of 'hitting the wall'. (EurekAlert!, May 2, 2017.)

The article: PPARδ Promotes Running Endurance by Preserving Glucose. (W Fan et al, Cell Metabolism 25:1186, May 2, 2017.)

Among posts on exercise:

* High-performing athletes: might they have performance-enhancing microbes in their gut? (June 28, 2019).

* Measuring the level of a non-existent hormone (April 10, 2015). Note that there is a rebuttal post for this.

* Would wild mice use an exercise wheel? (July 11, 2014).

* Why exercise is good for you, BAIBA (March 10, 2014).

* See cat run (March 14, 2012).

More about endurance running: Should you run barefoot? (February 22, 2010).

There is now an extensive list of sports-related Musings posts on my page Internet resources: Miscellaneous under Sports.

Largest field trials yet... Neonicotinoid pesticides may harm bees -- except in Germany; role of fungicide

August 20, 2017

Musings has discussed bee problems in numerous posts. A broad concern is population declines, sometimes called colony collapse disorder (CCD). A more specific issue is the use of a class of pesticide called neonicotinoids, nicknamed neonics ("neo-nics"). These pesticides are the subject of regulatory debates, because they may harm bees. The underlying biological data are mixed. Lab experiments show that they can harm bees; the issue is whether they actually do so under field conditions. There is a link to one background post on these issues at the end.

Two recent articles, published together, add to the evidence, and perhaps to the confusion. One of them may offer a glimmer of clarification. Let's look at them, in turn.

Article 1

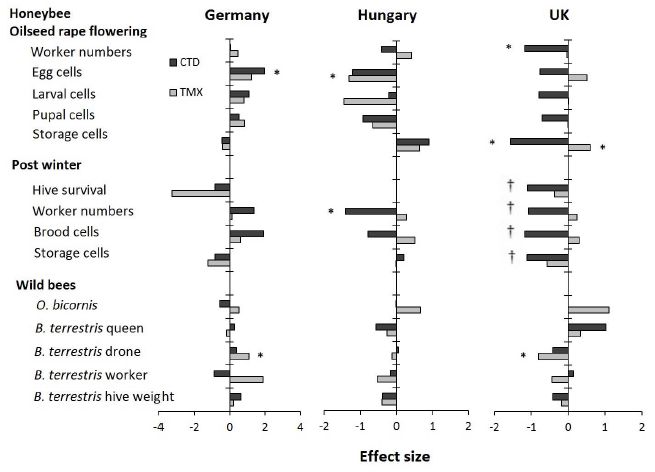

Article 1 reports what is probably the most extensive field trial of the effect of neonic pesticides on bees yet done... Two neonic pesticides, tested at multiple sites in three countries. Fourteen parameters measured.

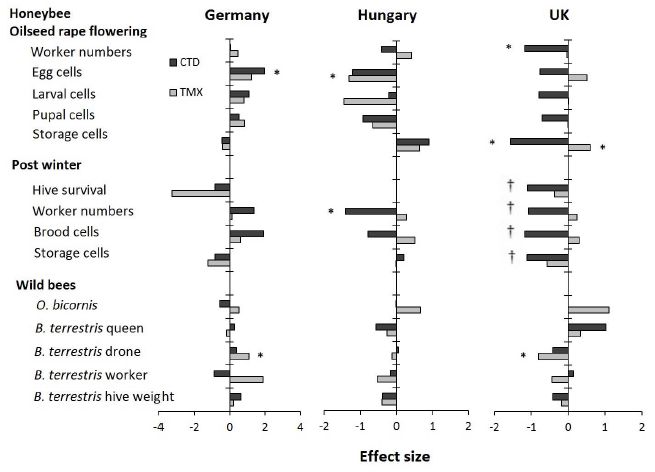

Here is a summary of the results...

The table lists fourteen parameters at the left. The first two sets of parameters relate to honeybees; the third set relates to two types of wild bees.

The remaining columns are for the results for the three countries. (There were 3-4 sets of sites per country, where a set of sites includes one for each of the three treatments: control and two neonic pesticides.)

There are two neonic pesticides: clothianidin (CTD; dark bars) and thiamethoxam (TMX; light bars).

For each parameter-country-pesticide combination there is a result. That is, there are 14 (parameters) * 3 (countries) * 2 (pesticides) = 84 results possible. The results are all shown normalized: effect size, in standard deviations.

The big picture? Look for the asterisks; they mark results that are found to be statistically significant (p < 0.05).

Eight results have an *. There are two * for Germany, both showing that the pesticide was beneficial to the bees. There are two * for Hungary, both showing that the pesticide was harmful to the bees. And there are four * for UK, three showing harm and one showing benefit.

That is, few of the results are significant, and those that are significant do not yield any consistent picture. Except that the pesticides are beneficial in Germany but harmful in Hungary (and probably in the UK).

One group of results, for "post winter" for the UK, is marked with daggers (†). Survival was so poor for all conditions that there is no meaningful analysis.

This is Figure 2 from article 1.
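One way to put the eight asterisks in perspective is to ask how many "significant" results would turn up by chance alone. The sketch below is a back-of-the-envelope calculation, not the authors' analysis. It assumes that all 84 possible results were tested (some could not be analyzed, so the real number is smaller), that the tests are independent, and that the pesticides have no real effect at all.

```python
from math import comb

N_TESTS = 14 * 3 * 2   # parameters * countries * pesticides, as counted above
ALPHA = 0.05           # significance threshold
OBSERVED = 8           # results marked with an asterisk

# Expected number of false positives if nothing real is going on.
expected_by_chance = N_TESTS * ALPHA

def prob_at_least(k, n, p):
    """Binomial probability of k or more successes in n independent trials."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))

# Chance of seeing at least OBSERVED significant results under those assumptions.
p_tail = prob_at_least(OBSERVED, N_TESTS, ALPHA)

print(f"Tests: {N_TESTS}; expected significant by chance: {expected_by_chance:.1f}")
print(f"Probability of {OBSERVED} or more by chance: {p_tail:.3f}")
```

Under these simplified assumptions, about four significant results would be expected by chance, so eight is not dramatically more. That fits the first interpretation offered below, though it does not rule out the others.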

What's going on? Remember, it is known that the pesticides can affect the bees. The question is whether the effect is significant "in the real world". This test was designed to be "real world", and the results are not simple.

Among plausible interpretations...

* It may be that little or nothing is really significant here.

* It may be that the test conditions are right at the edge of significance.

* It may be that there are hidden, or "confounding", variables. It seems unlikely that "country" affects how the pesticide acts on bees. But it may be that there are additional variables, not yet identified, that correlate here with country. If only we could identify those extra variables, it might lead to clarity. In fact, the authors emphasize this point. They also note that the bees in Germany were generally the most healthy; this supports the idea that the neonics may be an additional stress, which is most important when other stresses are also present.

Article 2

This article offers more field testing. But of most interest for us here is a lab experiment showing an interaction between the neonic pesticides and a fungicide that is sometimes used in the field. That is, this experiment may reveal one of the confounding variables.

The experiment measured the acute toxicity of the neonics, with the additional feature that two other chemicals were tested. Here are the results...

The y-axis shows the acute toxicity of the neonic as measured at 24 hours following an oral dose. The toxicity is shown as the LD50, the dose that kills half the bees (in 24 hours in this test). The two neonics used here are the same as those for article 1, above. The bars are for various conditions.

The first (left-hand) bar is for the neonic CTD. The bar height shows that its LD50 is about 0.005 µg per bee.

The next two bars are for the toxicity of the same neonic, but when a second chemical is also present. For the second (orange) bar, that extra chemical was the herbicide linuron, used at a dose considered typical of what the bees would encounter in the field. For the third (blue) bar, the extra chemical was the fungicide boscalid.

You can see that the second bar shows that the linuron had no significant effect on the toxicity of the CTD. However, the third bar shows that the boscalid made the bees much more sensitive to the CTD. (The boscalid alone did not affect the survival of the bees.)

The next group of three bars shows results for the same kind of experiments with a different neonic, TMX. The pattern is the same. In particular, the fungicide boscalid makes the bees more sensitive to the neonic.

This is Figure 3 from article 2.

The important result from article 2 is the identification of a specific factor that influences how the neonics affect bees. It is common for various chemicals to be added to crops, for various reasons. They are typically tested individually, but they act together. We now see an example of how a specific combination can have an effect that is not predictable from tests on the individual chemicals.

What's the big message from the two articles together? The effects of neonic pesticides on bees are complex and hard to predict -- even in realistic large scale field tests. We now see one specific example of why.

The issue of neonic pesticides has become political. It's important to understand that it needs to be addressed at two levels. One is the scientific level: what is going on, and why? The other is the political level. Regulatory decisions are made weighing various factors, including the best scientific information available. Sometimes, that "best scientific information" is incomplete or unclear, as in this case. Yet it is still necessary to make a political decision as to what is allowed; making no decision is not exactly an option, since it leads to the default, whatever that may be under the law. The regulatory process also weighs the benefits and alternatives, and may invoke societal values as well as objective facts. In this case, the scientific background has been confusing. The current articles don't change that, but perhaps offer a little hope that some clarity is possible. It is important to be clear which level is being discussed. The main goal with Musings is to discuss the scientific level.

News stories:

* First pan-European field study shows neonicotinoid pesticides harm honeybees and wild bees. (Phys.org, June 29, 2017.) Good overview of article 1.

* Exposure to neonics results in early death for honeybee workers and queens: study. (Phys.org, June 29, 2017.) Article 2. Note that we have discussed only one small piece of this article here. This page features a photograph of a bee with an RFID tag.

* Field Studies Confirm Neonicotinoids' Harm to Bees -- Two large studies find that, in real-world conditions, the insecticides are detrimental to honey bees and bumblebees. (A P Taylor, The Scientist, June 29, 2017. Link is now to Internet Archive.)

* Expert reaction to CEH study of the effects of neonics on honeybees and wild bees. (Science Media Centre, June 29, 2017.) A long and diverse set of comments! The comments focus on article 1, but some people also note article 2. There is a lengthy comment by a scientist from Syngenta, the manufacturer of the neonic pesticide TMX. Not surprisingly, he downplays the suggestion that the main effects are negative. Perhaps more importantly, he stresses the need for further work to understand what is going on.

* News story accompanying the articles: Agriculture: A cocktail of poisons -- The effects of sustained neonicotinoid exposure on bees depend on location, but are usually negative. (J T Kerr et al, Science 356:1331, June 30, 2017.)

* Two articles:

1) Country-specific effects of neonicotinoid pesticides on honey bees and wild bees. (B A Woodcock et al, Science 356:1393, June 30, 2017.) The work was funded by Syngenta and Bayer (another neonic supplier).

2) Chronic exposure to neonicotinoids reduces honey bee health near corn crops. (N Tsvetkov et al, Science 356:1395, June 30, 2017.)

Background post: Neonicotinoid pesticides and bee decline (July 12, 2014). Links to more.

Most recent post about bees... What if the caterpillars ate through the plastic grocery bag you put them in? (May 26, 2017).

More...

* Glyphosate and the gut microbiome of bees (October 16, 2018).

* The advantage of living in the city (July 27, 2018).

More about toxicity: Predicting the toxicity of chemicals (September 11, 2018).

More about fungicides/pesticides:

* Keanu Reeves and a broad-spectrum fungicide (March 6, 2023).

* What do we learn from the sulfur isotopes in the California vineyards? (June 28, 2022).

* A sticky pesticide (June 21, 2019).

August 16, 2017

Solar energy: What if the Moon got in the way?

August 16, 2017

Nothing hypothetical about it. We are having an eclipse of the Sun next Monday (August 21). The entire contiguous United States (except for the tips of Maine and Texas) will experience some time with at least 50% loss of sunlight. There will be a narrow band of totality from Oregon to South Carolina.

Nothing new about solar eclipses, but as our use of solar energy increases, the effect of the eclipse becomes greater. This will be the first time that managers of the electrical power grid in the US need to make significant adjustments because of an eclipse. There shouldn't be any big problems. California is the state that will have the biggest power loss, but it is still only a few percent of the total, and is manageable. North Carolina will lose about 90% of its solar power, but solar is an even smaller percent of the total there. Anyway, grid managers have had plenty of time to plan for the event.

But what about next time, when solar power is a much greater contributor to the total energy mix? The question is a sign of progress.

News stories:

* How Will the Eclipse Affect Solar Power? (J Prochnik, Natural Resources Defense Council, August 10, 2017.) General overview.

* California Prepares for Solar Power Loss During the Great Eclipse. (L Geggel, Live Science, June 8, 2017.)

* Solar eclipse on August 21 will affect photovoltaic generators across the country. (Today in Energy, from the US Energy Information Administration (EIA), August 7, 2017.) Includes a map of the eclipse, showing percent of totality across the US and major sites of solar power generation.

More about this eclipse: Why did many bees in the United States stop buzzing mid-day on August 21, 2017 (January 2, 2019).

More about solar energy:

* MOST: A novel device for storing solar energy (November 13, 2018).

* Using your sunglasses to generate electricity (August 14, 2017). The post immediately below.

There is more about energy issues on my page Internet Resources for Organic and Biochemistry under Energy resources. It includes a list of some related Musings posts.

Update October 2023...

This past weekend, we had an annular solar eclipse: the disc of the moon passed completely in front of the Sun, but was not big enough to block the entire solar disc. (In principle, the eclipse was visible where I am, but fog prevented actually seeing it.)

* Video of annular eclipse. Exploratorium video, posted at YouTube. It is just over 3 hours. Go to about 1 hr 30 min to get to the central event. Back off by three minutes, and then you can watch the final approach to the peak of the event.

* A couple of pages from the Exploratorium, with information and more videos. (I am not sure these are permanent pages.)

- Exploratorium: Watch Our Livestreams. Click on "Live Telescope View of Annular Solar Eclipse, Ely, NV" to get to the page listed next, which is devoted to this video.

- Live Telescope View of Annular Solar Eclipse, Ely, NV. This is the same video as listed above at YouTube.

Update November 2023...

* One more... Darkened by the Moon's Shadow. (Emily Cassidy, NASA Image of the Day, October 17, 2023.) Features a photo of North America during the eclipse -- as seen from a satellite in orbit above Earth.

Using your sunglasses to generate electricity

August 14, 2017

The first step in using solar energy to make electricity is to capture the sunlight. Sunglasses are a device to capture sunlight. So it would seem logical that sunglasses would be a good place to use solar energy.

A new article reports building a solar power generator into sunglasses.

|

Energy-harvesting sunglasses.

This is the first figure in the Phys.org news story. (Fig 7 of the article is similar.)

|

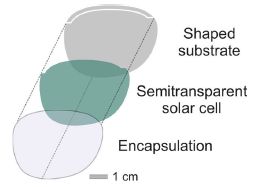

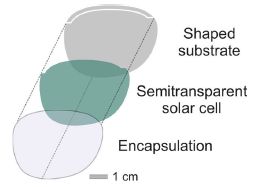

The figure at the right shows the basic design. Conceptually, it is simple: a solar cell is sandwiched between two structural layers.

The solar cell, of course, is transparent. It is an organic solar cell.

This is part of Figure 4a from the article.

|

|

The article contains a lot of data, with tests under various conditions: indoor and outdoor lighting, of various intensities. The cell is capable of generating nearly a milliwatt of power; 200 microwatts is an estimate of what it can reliably generate under a range of reasonable conditions. A lot of numbers. 400 µW (2 lenses each with 200 µW) is enough to operate a calculator, a hearing aid, or perhaps a watch. That is, it is a meaningful amount of power.
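A quick check of the power arithmetic, as a minimal sketch. The 200 µW per lens and the two lenses are from the text above; the variable names are just for illustration. It also shows how far apart microwatts and milliwatts are, since the two units are easy to mix up.

```python
# Power budget for the solar glasses, using the figures quoted in the post.
MICROWATTS_PER_MILLIWATT = 1000

per_lens_uw = 200   # reliable output per lens, in microwatts (from the post)
lenses = 2          # one solar cell per lens

total_uw = per_lens_uw * lenses
total_mw = total_uw / MICROWATTS_PER_MILLIWATT

# How large the error is if "200 microwatts" is misread as "200 milliwatts".
unit_mixup_factor = (200 * MICROWATTS_PER_MILLIWATT) / per_lens_uw

print(f"Total: {total_uw} microwatts = {total_mw} milliwatts")
print(f"Reading microwatts as milliwatts overstates the power {unit_mixup_factor:.0f}-fold")
```

That is, the pair of lenses delivers well under a milliwatt; a unit slip changes the story by three orders of magnitude.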

The authors emphasize that their process for making the power-generating lenses is straightforward, and can be scaled up. It should be possible to make sunglasses of various colors, and it should be possible to integrate the solar cells with corrective lenses. Cost? I don't see any cost estimates in the article.

The article establishes that using sunglasses to generate solar power is possible. It is an example of using organic solar cells. It is another step in the arena of wearable electronics. Whether this is a useful product remains to be seen.

A chemistry note... Two of the chemicals used to harvest light are derivatives of fullerene (buckyballs).

News story: Glasses generate power with flexible organic solar cells. (Phys.org, August 3, 2017.) (The version I have gives the power output as 200 milliwatts; it should be 200 microwatts.)

The article: Solar Glasses: A Case Study on Semitransparent Organic Solar Cells for Self-Powered, Smart, Wearable Devices. (D Landerer et al, Energy Technology 5:1936, November 2017.)

A recent post on solar energy... Is solar energy a good idea, given the energy cost of making solar cells? (March 24, 2017).

Next: Solar energy: What if the Moon got in the way? (August 16, 2017). Immediately above.

And more... MOST: A novel device for storing solar energy (November 13, 2018).

Posts about flexible electronics include:

* An air-conditioner you can wear? (August 19, 2019).

* eSkin: Developing better sense of touch for artificial skin (November 29, 2010). The topics of flexible electronics and wearable electronics often interact. With the sunglasses, flexibility of the solar cell material is an issue in the manufacture.

There is more about energy issues on my page Internet Resources for Organic and Biochemistry under Energy resources. It includes a list of some related Musings posts.

The major source of positrons (antimatter) in our galaxy?

August 13, 2017

The positron is the antimatter counterpart of the electron. It is just like an electron, except that it has the opposite charge.

It is estimated that about 10^43 positrons are destroyed every second in the Milky Way galaxy. Why? Because they collide with electrons; the matter-antimatter interaction leads to their mutual annihilation. A gamma ray is also given off, reflecting the conversion of the mass to energy. It's a distinctive γ-ray; astronomers have been aware of it in the galaxy since the 1970s.

What's not so clear is where all those positrons are coming from. A new article offers a solution.

There are many nuclear decays that give off positrons. An example that people may come across is fluorine-18, which is used in positron emission tomography, commonly called PET scans. The problem is figuring out which processes are important in explaining what the astronomers see.

The following figure summarizes part of the argument. Caution... Although the figure is useful, part of it may also be confusing.

The story starts with a supernova (SN), at the left. A supernova is an exploding star; there are various types of SN. In supernovae, there are many nuclear fusion reactions, leading to heavier and heavier nuclei.

Many of these new nuclei, of course, are unstable, and decay. Some decay with the production of positrons. The figure shows two examples: nickel-56 (upper part) and titanium-44 (lower).

The type of SN that makes Ni-56 is common. It ends up making Fe-56, the heaviest stable isotope made by these fusion reactions. The type that makes Ti-44 is less common; this type is known as SN 1991bg.

Now look a bit to the right, where the x-axis is labeled "~2 months". What happens at this time is about the same in both cases. Both decays produce positrons (red) and electrons (blue). A collision, shown in the circle to the right, annihilates the particles, and gives off a γ-ray. But that γ-ray doesn't do us (on Earth) any good. After only two months, the collision will most likely be so close to the core of the SN that the gamma ray never gets out.

Further to the right is the scene at "~70 years". The situation is now different for the two SN types. In the lower part, with Ti-44 decay, there are still positrons, which collide with electrons in the interstellar medium (ISM) -- producing γ-rays. In the upper part, there are no positrons, no collisions, and no γ-rays. Why? Because there is no more Ni-56. The half-life of the Ni-56 decay chain is only about 2 months. It may make lots of positrons, and lead to many γ-rays -- but no longer. And the ones it did make earlier didn't reach Earth.

The confusion I alluded to earlier is that the horizontal dimension is used both for time and space. It's labeled for time. But within each time segment, it seems to show a spatial diagram. That may be, but overall the figure is not spatial. In particular, you can't tell that the γ-rays at the 2-month time can't escape from the source SN -- and that is a key point. I suggest that you think of the x-axis not so much as a scale of any type, but simply a guide. The figure shows some little diagrams of what happens at three times: zero, 2 months, and 70 years.

This is Figure 1 from the news story accompanying the article in the journal.

|

The conclusion from this discussion is that the common SN, with Ni-56 decay, doesn't make the positrons that we see on Earth. However, a less common SN type, with Ti-44, is a good candidate for the positron signal that we see. As a bonus, the same process may also be the major source of Ca-44. That's the second most common isotope of calcium, but its source has not been clear.
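The half-life argument can be made concrete with a little arithmetic. The following is a rough sketch, not the authors' calculation. It treats the Ni-56 decay chain as a single decay with the "about 2 months" half-life quoted above, which is a simplification. The Ti-44 half-life of about 60 years is my assumption; it is not stated in this post.

```python
def fraction_remaining(elapsed_years: float, half_life_years: float) -> float:
    """Fraction of a radioactive sample still undecayed after elapsed_years."""
    return 0.5 ** (elapsed_years / half_life_years)

NI56_CHAIN_HALF_LIFE_YEARS = 2 / 12  # "about 2 months", from the post
TI44_HALF_LIFE_YEARS = 60.0          # assumption: approximate value, not from the post

# The two later times shown in the figure.
for label, years in [("~2 months", 2 / 12), ("~70 years", 70.0)]:
    ni = fraction_remaining(years, NI56_CHAIN_HALF_LIFE_YEARS)
    ti = fraction_remaining(years, TI44_HALF_LIFE_YEARS)
    print(f"{label}: Ni-56 chain remaining {ni:.3g}, Ti-44 remaining {ti:.3g}")
```

Seventy years is hundreds of half-lives for the Ni-56 chain, so essentially nothing is left. Under the assumed half-life, a substantial fraction of the Ti-44 is still around at that time, and can still supply positrons after the debris has thinned out.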

The type of supernova here involves the merger of two low-mass white dwarfs, followed by explosive "burning" (fusion) of helium. The article discusses more about the source, largely using computer modeling to show that the claim is plausible. However, questions remain, and the proposed source of galactic positrons can only be considered a promising hypothesis at this time.

News story: Astrophysicists Solve Mystery of How Most Antimatter in Milky Way Galaxy Forms. (Sci.News, May 30, 2017.)

* News story accompanying the article: High-energy astrophysics: A rare Galactic antimatter source? (N Prantzos, Nature Astronomy 1:0149, June 2, 2017.)

* The article: Diffuse Galactic antimatter from faint thermonuclear supernovae in old stellar populations. (R M Crocker et al, Nature Astronomy 1:0135, May 22, 2017.)

More positrons...

* Lightning and nuclear reactions? (January 28, 2018).

* Early detection of brain damage in football players? A breakthrough, or not? (September 14, 2015).

* What is the charge on atoms of anti-hydrogen? (July 15, 2014).

More about supernovae...

* How long does a supernova event last? (January 14, 2018).

* Could you find debris from a supernova in your backyard? (April 27, 2016).

Among posts about titanium...

* Titanium oxide in the atmosphere? (December 9, 2017).

* Photocatalytic paints: do they, on balance, reduce air pollution? (September 17, 2017).

* 3D printing for space: a titanium woov, and more (April 29, 2014).

* Titanium biology (September 29, 2008).

My page of Introductory Chemistry Internet resources includes a section on Nucleosynthesis; astrochemistry; nuclear energy; radioactivity. That section links to Musings posts on related topics.

Why does Zika virus affect brain development?

August 11, 2017

The Zika outbreak has peaked in some places, but is still important. Why? It isn't just the numbers. There are far more cases of Chikungunya than Zika. Early in the recent Brazilian Zika outbreak, we found that Zika was associated with microcephaly. Mothers infected with Zika during pregnancy were at increased risk of having children with microcephaly. Over time, it became clear that Zika causes a variety of brain development problems.

Why? A recent article uncovers one of the reasons. It's an interesting story.

Here is one key experiment...

|

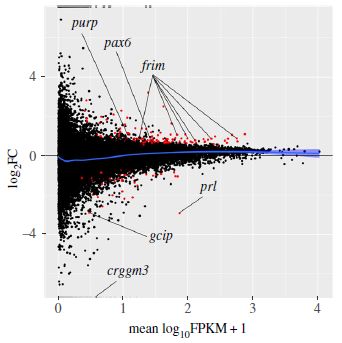

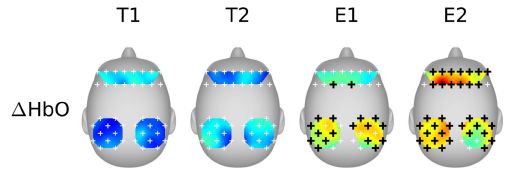

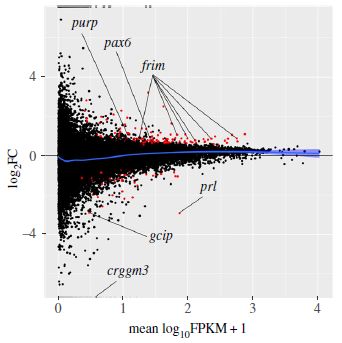

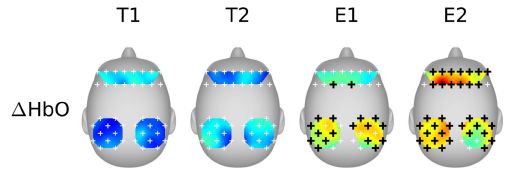

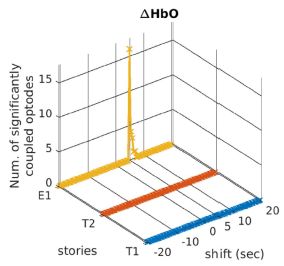

The graph shows the role of host protein Musashi-1, or MSI1, in Zika virus replication.

The y-axis shows the amount of viral RNA made. Log scale. Results are shown for four conditions.

There are two pairs of conditions; let's look at the first two sets (bars) of data for now. You can see that the first group of points (at the left) is fairly high (about 3x10^4 -- halfway between 10^4 and 10^5 on the log scale). The next group is about 10-fold lower. The first group is for a control; the second group is for the virus growing in cells in which the MSI1 protein level has been reduced. That is, reducing MSI1 reduces Zika viral RNA production. That is one of the key results: MSI1 is needed for good virus replication.

That's the first pair of conditions. The second pair shows the same pattern. It's the same experiment, now in a different type of host cell.

|

How did the scientists reduce the level of MSI1 protein? They used a small RNA molecule that targeted that gene, preventing its mRNA from functioning. That RNA is called siRNA, for small interfering RNA.

There is a timeline for the experiment at the top of the figure. The siRNA was added at time 0. The virus (PE243) was added 36 hours later, and viral RNA was measured 36 hours after that.

There were two kinds of siRNA, as labeled at the bottom for each data set: siCon as a control, and siMSI1 to knock down that gene.

Below the RNA labels are the labels for the two types of cell used. Both are neural cells, with high level expression of MSI1.

This is Figure 2A from the article.

|
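A note on reading the log scale in that graph. On a log axis, the point halfway between two tick marks is their geometric mean, not their average. Here is a small sketch of that; the function name is mine, and the numbers are the approximate readings given above, not data from the article.

```python
import math

def log_midpoint(low: float, high: float) -> float:
    """Value that sits halfway between low and high on a logarithmic axis."""
    return math.sqrt(low * high)

midpoint = log_midpoint(1e4, 1e5)   # halfway between the two tick marks
linear_midpoint = (1e4 + 1e5) / 2   # what "halfway" would mean on a linear axis

# A 10-fold drop is one full decade on the log axis.
control = 3e4                       # approximate reading for the control
knockdown = control / 10            # "about 10-fold lower"
decades_apart = math.log10(control / knockdown)

print(f"Log-scale midpoint: {midpoint:.0f}")
print(f"Linear midpoint: {linear_midpoint:.0f}")
print(f"Control vs knockdown: {decades_apart:.1f} decade(s) apart")
```

So a point that looks halfway up between the two ticks is a bit over 3x10^4, not the 5.5x10^4 one would guess from a linear scale.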

What is this MSI1 protein? It is an RNA-binding protein -- known to be abundant in neural stem cells and involved in brain development. In fact, mutations in MSI1 have been associated with a rare genetic form of microcephaly. Scientists had recognized that the Zika virus genome contained possible binding sites for MSI1; the present article shows that they are functional, and that they matter.

The model that emerges from the work is that Zika virus grows preferentially in cells of the developing brain, where it makes use of the abundant MSI1 protein. The use of that protein by Zika virus makes it less available to the developing brain, leading to neurological problems.

There is another piece to the story, but it is incomplete at this point. The finding that Zika was associated with microcephaly in Brazil came as a surprise. No such association had previously been made. There were various possible explanations for the discrepancy. One of them is that the Zika virus strain in Brazil is different from the one most common elsewhere. The new work supports that suggestion. Analysis of various Zika strains shows that the Brazilian strain binds MSI1 more strongly than the other strains. Beyond that, we don't know; further work along this line might be fruitful.

The authors caution that their work does not preclude that other factors may be relevant. They have found what appears to be one part of the story of why Zika grows preferentially in the developing brain and causes defects there, but it is not yet clear what the full story is.

News stories:

* New insights into how the Zika virus causes microcephaly. (Science Daily, June 1, 2017.)

* Why Zika might offer a brain cancer cure. (C Smith, Naked Scientists, June 1, 2017.) As the title might suggest, this page goes beyond the basics, with some speculations about how the finding might be useful. Be careful with the speculations, but they are intriguing.

* News story accompanying the article: Neurovirology: Why are neurons susceptible to Zika virus? (D E Griffin, Science 357:33, July 7, 2017.)

* The article: Neurodevelopmental protein Musashi-1 interacts with the Zika genome and promotes viral replication. (P L Chavali et al, Science 357:83, July 7, 2017.)

Previous Zika post: Can antibodies to dengue enhance Zika infection -- in vivo? (April 15, 2017).

More Zika...

* A recent genetic change that enhanced the neurotoxicity of the Zika virus (December 1, 2017).

* Zika fallout: Should pregnant women receive immunizations? (September 30, 2017).

A post about looking for host genes needed for Zika infection: Finding host genes that are required for growth of Zika virus (and related viruses) (August 8, 2016). The current work seems to be independent of the work from this earlier post.

More siRNA: Targeted degradation of the viral genome as a treatment for COVID? (March 13, 2022).

There is a section on my page Biotechnology in the News (BITN) -- Other topics on Zika. It includes a list of Musings posts on Zika.

A post about Chikungunya: Chikungunya in the Americas -- are vaccines near? (March 17, 2015).

August 9, 2017

Is there useful ancient DNA in the dirt?

August 8, 2017

We have a new article that makes a rather simple point -- one with potentially huge implications.

Studies of ancient organisms typically start with a visible piece of the organism -- a fossil, in the general sense. If we can isolate DNA from the specimen, then we can get some genome information about the ancient organism. As an example, we have considerable information about the genome of the type of human known as Denisovan. Yet all we have as a physical sample of Denisovan man is a piece of finger and a few teeth.

In some applications of genome sequencing, we don't need any particular physical sample of the organism. Forensics uses samples from crime scenes, sometimes free of any immediate biological context. And the emerging field of metagenomics looks at the DNA in the environment, and tries to infer what it is from.

Could we do that with ancient samples? Archeological metagenomics. That is what the new article tries -- and claims -- to do. The scientific team, one of the leading labs in ancient DNA work, analyzes cave sediments from seven well-characterized archeological sites in Eurasia.

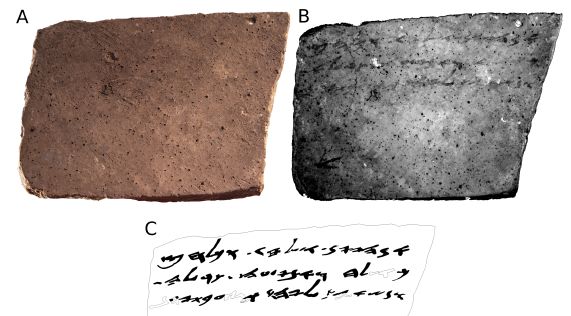

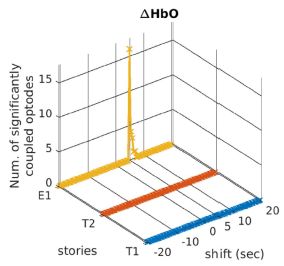

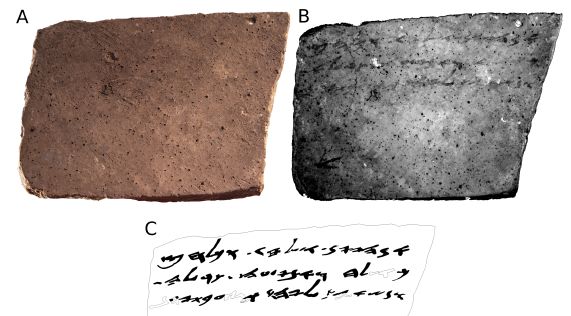

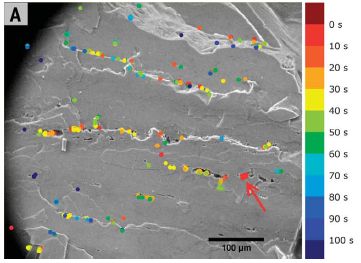

The following figure is a pictorial summary...

The figure is based on a map, showing the seven sites. For each site, there is a box of information.

For example, look at site 2, Trou Al'Wesse (in Belgium). The 2nd line of information says LP:5/5. LP means the site is dated as Late Pleistocene. 5/5 means that 5 of 5 samples tested yielded useful DNA -- DNA that could be identified as one or another animal group. The animals identified at the site are shown here by pictograms; there is a key at the bottom of the figure.

Look at the data labeled LP in the various information boxes. You will see that most LP samples yielded useful DNA sequences. These are all known sites, and the DNA data is consistent with what is known about them.

Three of the sites (3, 5, 7) also have some data labeled MP. That is Middle Pleistocene -- older samples. The success rate with MP samples was lower. Only at the Denisova Cave (site 7) did MP samples yield useful DNA.

LP is 12-126 thousand years ago. MP is 126-781 thousand years ago.

This is modified from Figure 1 of the article. I added numbers for the sites, for ease of reference. The numbers are at the left, usually upper left, of the information boxes.

|

One concern you might have... There might be plenty of DNA in cave sediments. How do we know that the DNA being analyzed isn't just from an early explorer, or from animals that frequent the cave? It's a good question, one that scientists who study ancient DNA have struggled with over the years. It turns out that DNA carries a "date of manufacture", and the scientists have learned how to read it. DNA collects damage; the chemistry of that damage is understood. The amount of damage in a DNA sample is a measure of its age. It is something those studying ancient DNA now pay attention to, helping them to avoid distraction from modern DNA.

Another experimental procedure helps the scientists find the rare pieces of useful DNA. They focus on the more abundant mitochondrial DNA (mtDNA), and use probes for various animal mtDNAs to capture those rare sequences from the bulk samples.

The conclusion is that there is useful ancient DNA in the dirt -- and that we have the tools to find it and sequence it. That will allow an additional level of study of archeological sites. Great care will be needed in the interpretation, and some false steps are probably inevitable. But the field of ancient DNA has dealt with these issues before. Caution, yes, but in the long run, this is likely to be a significant step.

News stories:

* Ancient human DNA found in Ice Age caves -- even when bones were missing. (A Potenza, Verge, April 27, 2017. Now archived.)

* Denisovan and Neanderthal DNA Uncovered in Caves without Skeletal Remains. (Sci.News, April 28, 2017.)

The article: Neandertal and Denisovan DNA from Pleistocene sediments. (V Slon et al, Science 356:605, May 12, 2017.)

The first Musings post about Denisovan man: The Siberian finger: a new human species? (April 27, 2010).

Here are examples of recent posts using metagenomic analysis. Each post notes that the conclusions are tentative because the genome information lacks any physical specimen at this point.

* More giant viruses, and some evidence about their origin (June 13, 2017).

* The Asgard superphylum: More progress toward understanding the origin of the eukaryotic cell (February 6, 2017).

More... What caused the extinction of the mammoths -- I (January 16, 2022).

Head injuries in Neandertals: comparison with "modern" humans of the same era (February 22, 2019).

There is more about genomes and sequencing on my page Biotechnology in the News (BITN) - DNA and the genome. It includes an extensive list of related Musings posts.

Is Harry Potter responsible for the increased owl trade in Indonesia?

August 6, 2017

That's the suggestion from a new scientific article.

|

Owls for sale in a bird market in Indonesia. These are scops owls.

This is trimmed from the top frame of Figure 3 from the article. The full figure shows several such pictures, with various kinds of owls.

|

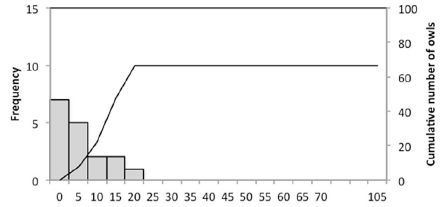

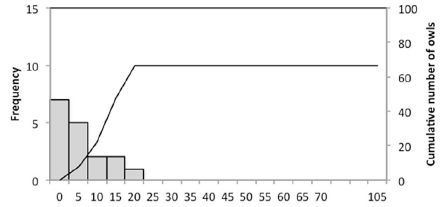

The following figure shows an example of the analysis...

|

The scientists sampled bird markets on the Indonesian islands of Bali and Java. How many owls? The results are shown as a frequency distribution. For example, 7 samples had zero owls (left-hand bar); 5 samples had 1-5 owls (second bar). The line shows the cumulative total number of owls; see the scale along the right-hand y-axis.

You can see that most samples had 5 owls or fewer. And the total was about 65 owls, an average of 4 per survey.

This is the top frame of Figure 1 from the article. The full figure shows similar analyses for various time periods.

|

That graph is for 1996-1997. The results from surveys in 1999-2003 were similar (actually a little lower). For 2012-2016, the total owl count was over 1800, an average of about 17 per survey. Most (over 60%) of that last set of surveys yielded more than 5 owls, with several over 50. The fraction of total birds in the markets that were owls increased by about seven-fold.
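The totals and averages quoted above let us back out roughly how many surveys were involved in each period. This is only a sketch from the rounded numbers in this post ("about 65", "over 1800", and the two averages); the survey counts it gives are inferred, not taken from the article.

```python
def implied_surveys(total_owls: float, mean_per_survey: float) -> float:
    """Number of surveys implied by a total count and a per-survey average."""
    return total_owls / mean_per_survey

early_surveys = implied_surveys(65, 4)      # 1996-1997 figures from the post
late_surveys = implied_surveys(1800, 17)    # 2012-2016 figures from the post

# Change in the average number of owls seen per survey.
fold_change_per_survey = 17 / 4

print(f"1996-1997: roughly {early_surveys:.0f} surveys")
print(f"2012-2016: roughly {late_surveys:.0f} surveys")
print(f"Owls per survey rose about {fold_change_per_survey:.1f}-fold")
```

Note that the rise in owls per survey is a different measure from the seven-fold rise in the fraction of market birds that were owls; the latter also depends on how many other birds were for sale.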

The Harry Potter books and movies were introduced into Indonesia, in local languages, in 2000. The spike in owl trade, seen above, occurred a few years after the introduction of Harry Potter. The authors also note that owls, formerly called Burung Hantu (ghost birds) are now often called Burung Harry Potter.

The authors emphasize that the results show a correlation. They do not prove a causal connection. What else might be going on? One contributor could be the rise of the Internet -- and, later, social media. Of course, both could be involved, perhaps synergistically.

Is the increased interest in owls good? In some ways, perhaps yes. But it also raises questions about legality of the owl trade and conservation status. This is discussed some in the article and news reports, but I don't want to get into it here. There is also concern about how the pet owls are treated.

It's a fun story, with possible serious consequences. The authors note other examples of animals being introduced via literature and media, with a subsequent rise in the popularity of the animal itself. The time lag is similar in this case to those other experiences. The story here may be incomplete, but there is reason to believe that the general phenomenon can be real.

News stories:

* Harry Potter may have sparked illegal owl trade in Indonesia. (S Dasgupta, Mongabay, July 3, 2017.)

* Has Harry Potter mania cursed Indonesia's owls? (I Vesper, Nature News, June 28, 2017. In print: Nature 547:15, July 6, 2017.)

* The 'Harry Potter effect' on the Indonesian owl trade. (Oxford Brookes University, June 29, 2017. Now archived.) From the university. The page includes a comment which I take to be from Mr Potter's office on keeping owls as pets.

The article, which is freely available: The Harry Potter effect: The rise in trade of owls as pets in Java and Bali, Indonesia. (V Nijman & K A-I Nekaris, Global Ecology and Conservation 11:84, July 2017.)

Previous Musings posts referring to Harry Potter: none.

Recent post making a literature-science connection: Bob Dylan and biomedical research (January 20, 2016).

More from Indonesia: The little people of Indonesia (May 14, 2009). Links to more. (There is a literary connection here, too. The humans discussed here are commonly referred to as hobbits.)

More ghosts: A chemical bond to an atom that isn't there (October 31, 2018).

More conservation: Can we train animals to fear their predators? (July 14, 2019).

Methane hydrate: a model for pingo eruption

August 4, 2017

Musings has discussed various aspects of methane hydrate [links at the end]. Briefly, methane and water can form a solid, ice-like structure under certain conditions. Low temperatures and high pressures favor the formation of methane hydrate. Not surprisingly, methane hydrate is found below cold oceans.

It is possible that methane hydrate could be mined for the gas. But there is also a concern about the hydrate, one that follows from the description of where and why it occurs. What if conditions changed, and the hydrate became unstable? This could result in the release of methane into the water, and then presumably into the air -- perhaps at a catastrophic scale.

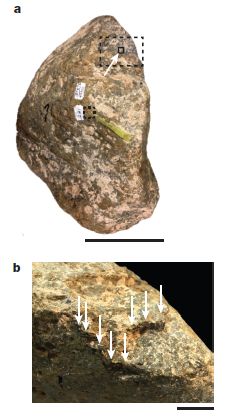

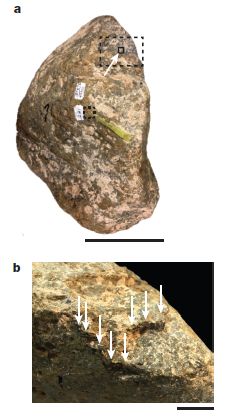

A new article analyzes the geological record, and develops a model for how such methane releases from hydrate may happen.

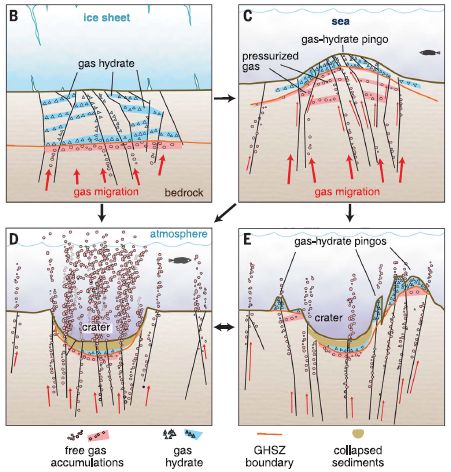

The following figure summarizes the model. It shows a site at four different times, as we progress from frame B to frame E.

Frame B shows the stable situation. A horizontal black line about half way down separates water (bluish) from rock (brownish). There is methane hydrate (also blue) just below the water, and free gas below that. Importantly, there is a layer of ice at the top. The ice serves as a cap.

Frame C shows what happens if the ice melts. Free of the pressure from the ice sheet, the gas pressure from below leads to a bulge -- a mound, called a pingo.

Frame D: The pingo can rupture, leading to escape of gas. And the place where there was a mound now has a crater.

Frame E: This shows a possible later stage. Continuing gas pressure can lead to the development of new pingos.

This is from Figure 3 of the article.

|

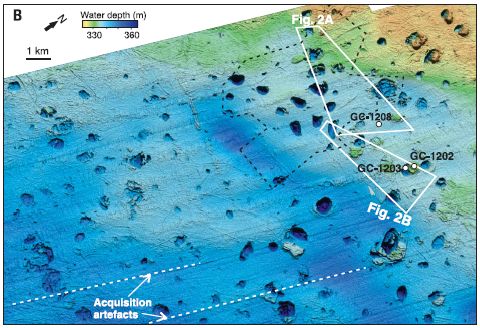

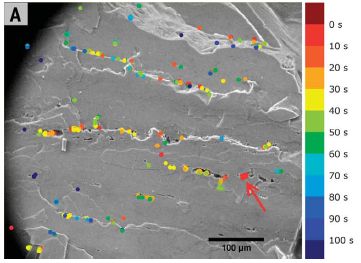

A major part of the work was a detailed analysis of the sea floor in a region known to have active methane hydrate. Here is a map of the study area -- a bathymetric map...

The map is coded by the bathymetric measurements: the depth of the water.

Of particular interest are the little roundish regions. They are about a kilometer across, and as much as 30 meters vertically. (See scale bar, near upper left.) Depending on whether they are darker or lighter than the background, they are craters or mounds (pingos). That is... A region known to be rich in methane hydrate is marked by craters and mounds. That's important background for the scientists' model.

Where is this? Bjørnøyrenna. That's Bear Island Trough, in the Barents Sea north of mainland Norway, right near Svalbard.

This is Figure 1B from the article.


Such craters and mounds have been seen before, and associated with methane deposits. The current work is the most extensive account, leading to the integrated model presented above.

The sea floor events studied here happened about 12,000-15,000 years ago, at the end of the last ice age. We are now in a new era of extensive melting of polar ice. What are the implications of the model presented here for current methane hydrate deposits under ice?

News stories:

* Ancient Arctic 'gas' melt triggered enormous seafloor explosions. (B Geiger, Science News for Students (now Science News Explores), June 13, 2017.) Includes videos and a glossary. This site is associated with the well-known Science News (SN). The page claims they provide "age-appropriate, topical science news to learners, parents and educators". (I thought that is what SN did. Anyway, perhaps it is worth noting the site. Certainly, this page is quite good.)

* Massive craters formed by methane blow-outs from the Arctic sea floor. (Science Daily, June 1, 2017.)

The article: Massive blow-out craters formed by hydrate-controlled methane expulsion from the Arctic seafloor. (K Andreassen et al, Science 356:948, June 2, 2017.) The article is from the Centre for Arctic Gas Hydrate, Environment and Climate (CAGE), at The Arctic University of Norway.

Among posts on methane hydrates...

* Recent craters in Siberia due to methane release from hydrates in the permafrost (January 15, 2025).

* Svalbard is leaking (March 7, 2014). From the area of the current work.

* Ice on fire (August 28, 2009). First post on the topic. Links to more.

More from the Arctic:

* Is Arctic warming leading to colder winters in the eastern United States? (May 11, 2018).

* Eye analysis: a 400-year-old shark (September 3, 2016).

Also see: Who cleans up the forest floor? (November 3, 2017).

August 2, 2017

The new IUPAC periodic table; atomic weight ranges

August 1, 2017

I recently had reason to look up the current periodic table (PT) from IUPAC. It reflects some developments that seem worth noting.

Of course, the table is current through the recent recognition and naming of the remaining elements in the range 113-118 (that is, elements 113, 115, 117, and 118). It is now "full" up through element 118.

Here is a piece of the current IUPAC periodic table, so we can focus on one interesting development...

I chose this region to illustrate elements with and without an atomic weight range, and to show the key.

Start with beryllium. The layout of the Be box should look familiar. In particular, note the atomic weight on the last line of the box. The key identifies this line as the "standard atomic weight".

Now look at hydrogen. The last line, the standard atomic weight, says [1.0078, 1.0082]. The line above that has the more familiar value 1.008, and is labeled "conventional atomic weight".


What's going on? Why are atomic weights more complicated than before?

In a sense, they are not more complicated. It's just that an old problem is being dealt with in a new way -- more openly.

The basic idea of the atomic weight is that it reflects the weight of an average atom of the element, as found in nature. The mass of any specific atom is well defined, and known to high precision. But the atomic weight of an element deals with nature -- with the natural abundance of the isotopes. As a simple example... bromine has two major isotopes, one of mass 79 and one of mass 81. Natural bromine contains about 50% of each. Thus the atomic weight of bromine is about 80 -- the number you will see on the periodic table.

So what is the problem? Different natural samples of the same element may have different isotope compositions. That is, the (average) atomic weight of an element depends on the sample of it that you have. That is real variation among samples, not just measurement uncertainty.
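
To make the arithmetic concrete, here is a minimal Python sketch of the weighted average. It uses the rounded bromine numbers from above (masses 79 and 81, about 50% each). The second sample, with a 51:49 split, is made up purely for illustration; it is not a measured composition. The function name `atomic_weight` is my own.

```python
def atomic_weight(isotopes):
    """Abundance-weighted mean mass.

    isotopes is a list of (mass, fraction) pairs; fractions must sum to 1.
    """
    total_fraction = sum(fraction for _, fraction in isotopes)
    if abs(total_fraction - 1.0) > 1e-6:
        raise ValueError("isotope fractions must sum to 1")
    return sum(mass * fraction for mass, fraction in isotopes)


# Rounded values from the text: two isotopes, about 50% each.
typical_bromine = [(79, 0.50), (81, 0.50)]

# Hypothetical sample with a slightly shifted composition (illustration only).
shifted_sample = [(79, 0.51), (81, 0.49)]

print(atomic_weight(typical_bromine))
print(atomic_weight(shifted_sample))
```

The two results differ slightly, which is the point: the "atomic weight" depends on which sample you have.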

There are various reasons for the differences in isotope composition. They include the role of radioactive decay processes in making specific isotopes, and the fact that chemical (and biochemical) processes may occur at different rates with different isotopes, thus changing the isotope composition of the products compared to the reactants. All these effects are small, usually well under 1%, but they are real -- and sometimes we want to know.

In the old days, scientists tried to come up with a single number that best represented the known natural samples. The new IUPAC recommendation is to openly recognize the variability. The value shown in brackets is the range of atomic weights found for the element. The value shown for hydrogen, [1.0078, 1.0082], means that there are samples of H where the average atom weighs as little as 1.0078, and samples as high as 1.0082. The square brackets are the mathematical symbol for an interval -- or range. IUPAC uses this range, when available, as the "standard atomic weight". Of course, that range is not so useful when you want to do a routine calculation without reference to a specific sample. So they also provide the old-style value, as a "conventional atomic weight".
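
One way to think about the two numbers is as a small data record: an interval plus a single working value. The sketch below is only an illustration, using the hydrogen values quoted above. The class and method names are hypothetical, not anything IUPAC publishes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StandardAtomicWeight:
    """An atomic weight range plus a single conventional value."""
    lower: float
    upper: float
    conventional: float

    def contains(self, value: float) -> bool:
        # True if a sample's atomic weight falls within the range.
        return self.lower <= value <= self.upper

    def relative_spread(self) -> float:
        # Width of the range as a fraction of the conventional value.
        return (self.upper - self.lower) / self.conventional


# Hydrogen values as quoted in the text.
hydrogen = StandardAtomicWeight(lower=1.0078, upper=1.0082, conventional=1.008)

print(hydrogen.contains(hydrogen.conventional))
print(f"{hydrogen.relative_spread():.4%}")
```

The conventional value sits inside the range, and the spread is a small fraction of a percent, consistent with the point that these effects are small but real.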

There is another, smaller, change. Some elements have no natural abundance, at least on the modern Earth. It has been a tradition to show the mass number of the most stable isotope, in square brackets, in the space for atomic weight. However, for the newer elements, that information is uncertain, and may change. We really don't know what the most stable isotope is. In the new PT, IUPAC has dropped this. There is just a blank for the atomic weight. I think that's good.

I checked a couple of well-known web sites with periodic tables. They have not yet introduced any of the changes (other than updating for the new elements, of course). It remains to be seen how the new IUPAC changes are accepted.

Source: Periodic Table of Elements. (International Union of Pure and Applied Chemistry (IUPAC). Current version is dated November 2016.) Includes a PT as an image file, as well as pdf versions. The page is full of information on recent changes, as well as the procedures for accepting and naming new elements.

A post about the content of the periodic table: Nihonium, moscovium, tennessine, and oganesson (June 11, 2016). This is about the proposed names for the most recently accepted elements. Those names were officially recognized later in 2016.

Recent posts about variation in isotope composition include:

* Role of biological processing in the formation of a uranium ore (June 30, 2017).

* Is photosynthesis the ultimate source of primary production in the food chain? (April 2, 2017).

My page of Introductory Chemistry Internet resources includes several sections of relevance here, on new elements, naming, isotopes, atomic weights, and the periodic table.

Clinical trial of self-administered patch for flu immunization

July 31, 2017

Musings has noted the development of a flu vaccine that is delivered by a skin patch [link at the end]. We now have a small clinical trial of a dissolvable microneedle patch flu vaccine. It is the first trial in humans of such a vaccine. In general, the results are encouraging.

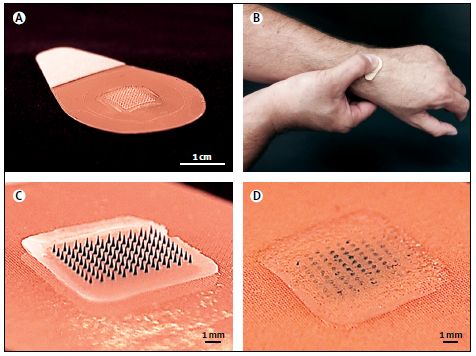

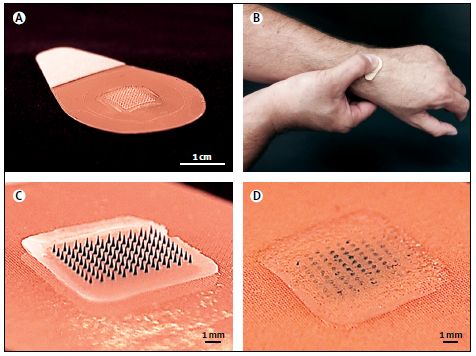

The following figure illustrates the system:


Part A shows the patch. In the middle is an array of 100 needles; this is clearer below.

Part B shows a person self-applying the patch.

Part C shows a close-up of the active part of the patch.

Part D shows that region after use; the needles are largely gone. The needles dissolve in the skin to deliver their payload. (As a result, the waste is not "sharp", and can be disposed of easily.)

This is Figure 1 from the article.


The trial is small and short-term. It's Phase I; the major goal is to ascertain safety.

The trial included four groups, 25 people each. One group received the traditional vaccine by injection. Two groups received the vaccine by the patch. In one of those groups, the patch was administered by a healthcare worker; in the other, the person applied their own patch. The type and amount of vaccine protein were the same in the needle and patch vaccines. A fourth group used the patches, but they were placebo, with no vaccine antigens.

No major safety issues were seen. The patches did cause some local reaction -- as did needles. However, this was not a serious concern in either case.

The immune responses, as measured by antibody levels at one and six months, were similar for the vaccine groups. (The trial was too small to measure actual protection against infection. None of the study participants came down with the flu during the trial.)

Self-administration of the patches seemed to work fine. However, the robustness of the procedure is not clear. In this trial, those who were to self-apply the patch received brief training.

The scientists did lab tests on patches that had been stored at 40 °C for a year; they appeared to fully retain the antigens. That long-term stability at ambient, even hot, temperatures is presumably because the patches are dry. In any case, that stability is good; it facilitates transport and use without requiring refrigeration. That is especially important for use in remote areas. (The patches are stored in sealed envelopes prior to use.)

Overall, the trial suggests that the patch vaccine is safe and gives an immune response similar to that of the injected vaccine, with the additional advantages of being relatively painless, convenient, and stable. It is also low cost. Further testing will proceed.

News stories:

* Microneedle patch developed for flu vaccination. (Science Daily, June 28, 2017.)

* Microneedle flu vaccine patch passes phase 1 trial. (S Soucheray, CIDRAP, June 28, 2017.)

* Comment story accompanying the article: Influenza vaccine: going through a sticky patch. (K Höschler & M C Zambon, Lancet 390:627, August 12, 2017.)

* The article: The safety, immunogenicity, and acceptability of inactivated influenza vaccine delivered by microneedle patch (TIV-MNP 2015): a randomised, partly blinded, placebo-controlled, phase 1 trial. (N G Rouphael et al, Lancet 390:649, August 12, 2017.)

Background post on the vaccine patch system: A better way to deliver a vaccine? (July 25, 2010). The article here is, in part, from the same labs as the current article.

More... Printing microneedle patches for vaccine delivery to the skin (October 5, 2021).

Recent post about flu vaccines: The nasal spray flu vaccine: it works in the UK (April 12, 2017). A theme is looking for alternatives to the usual injection.

Posts on flu and flu vaccines are listed on the page Musings: Influenza (Swine flu).

More on vaccines is on my page Biotechnology in the News (BITN) -- Other topics under Vaccines (general). It includes a list of related Musings posts.

Another use of a microneedle patch: Treating obesity: A microneedle patch to induce local fat browning (January 5, 2018).

More microneedles: Treating a heart attack using a microneedle patch (January 11, 2019).

Diesel emissions: how are we doing at cleaning up?

July 30, 2017

Emissions from diesel engines are a major source of air pollution. Regulations are in place to reduce those emissions.

A recent article analyzes diesel emissions, with the general goal of examining how well we are meeting the regulations that are in place, and then looking to the future.

The following graph is an attempt to summarize a huge amount of information in a way that can be understood, yielding a sense of the big message.


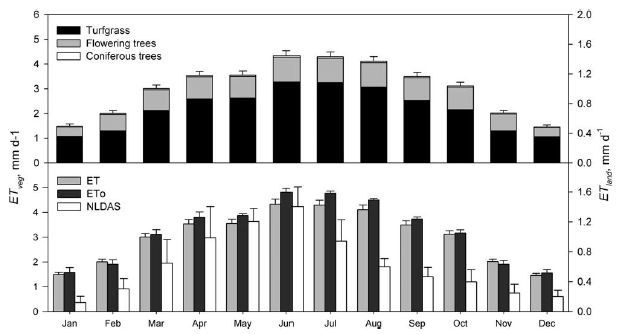

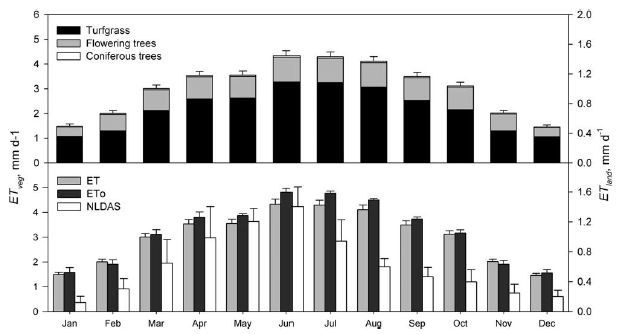

The graph shows diesel emissions vs year, for different types of vehicles and different scenarios.

There are two sets of lines. The solid lines are for heavy duty vehicles (HDV; trucks and buses). The dashed lines are for light duty vehicles (LDV; passenger cars).

The emission plotted is nitrogen oxides, commonly abbreviated NOx. It is shown in megatonnes (Mt). The numbers here are Mt for the year shown.

The scope of the analysis is 11 large markets for vehicles. One of those is the "EU-28"; the others are real countries. Together, these 11 markets are responsible for about 2/3 of the worldwide emissions from diesel vehicles (Fig 2b).


Let's start with the HDV, the solid lines. The two main curves are labeled "Limits" (yellow) and "Baseline" (red). "Limits" shows the amount of emissions predicted if regulations in place at that time were followed. It is based on lab measurements of the vehicle emissions. "Baseline" shows the actual emissions.

You can see that HDV emissions have been declining. However, the baseline curve is always higher than the limits curve. That is, actual emissions were always greater than would be expected if the regulatory targets had been met. The gap has been getting bigger -- in absolute terms, and even more so in percentage terms. For future years, the main curves assume that the gap continues.
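
The distinction between the absolute gap and the percentage gap is easy to see with a little arithmetic. The numbers in this sketch are invented for illustration; they are not read from the graph or taken from the article. The function name is also my own.

```python
def emission_gap(limits_mt, baseline_mt):
    """Return (absolute gap in Mt, gap as a percentage of the limits value)."""
    absolute = baseline_mt - limits_mt
    percent = 100.0 * absolute / limits_mt
    return absolute, percent


# Hypothetical NOx values in Mt per year (NOT from the article).
earlier = emission_gap(limits_mt=10.0, baseline_mt=12.0)
later = emission_gap(limits_mt=5.0, baseline_mt=8.0)

print(earlier)
print(later)
```

In this made-up case both curves fall, yet the gap grows from 2 to 3 Mt, and from 20% to 60% of the limits value. The shrinking denominator is why the percentage gap can grow faster than the absolute gap.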

Towards the right side are two additional curves, which show major declines in emissions. These are based on proposed regulations. If these regulations were successful, they would lead to major reductions in NOx emissions from diesel engines.