Musings: September-December 2011 (archive)

Musings is an informal newsletter mainly highlighting recent science. It is intended as both fun and instructive. Items are posted a few times each week. See the Introduction, listed below, for more information.

If you got here from a search engine... Do a simple text search of this page to find your topic. Searches for a single word (or root) are most likely to work.

Introduction (separate page).

This page:

2011 (September-December)

December 28

December 14

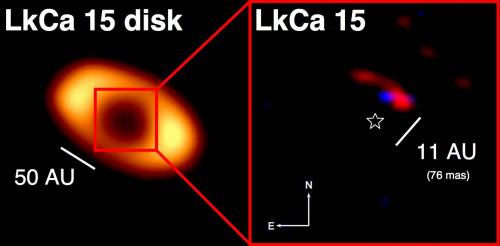

December 7

November 30

November 22

November 16

November 9

November 2

October 26

October 19

October 12

October 5

September 28

September 21

September 14

September 7

Links to external sites will open in a new window.

Archive items may be edited, to condense them a bit or to update links. Some links may require a subscription for full access, but I try to provide at least one useful open source for most items.

Please let me know of any broken links you find -- on my Musings pages or any of my web pages. Personal reports are often the first way I find out about such a problem.

December 28, 2011

Heart health and python blood

December 28, 2011

A recent paper might seem to lead to the suggestion that drinking the blood of a well fed python could be good for your heart.

Some background... Burmese pythons carry the idea of feast or famine to an extreme. They are able to fast for a year, but can down a rather large animal -- equal to their own body weight -- in one meal. A meal is a big event for a python. The metabolic rate may increase 40-fold upon eating, and the heart may enlarge by 50% over a couple days.

In the new work, scientists explored the molecular basis of this heart growth. In one set of tests, they examined heart growth under various conditions. They found...

Each bar is for a particular treatment. The height of the bar shows the amount of heart growth found.

The left hand bar shows the heart growth after feeding. ("3 DPF" means 3 days post-feeding.)

The remaining treatments were done with fasted pythons, and involved infusing the snake with some test sample.

The first two used plasma (the liquid part of blood) from fasted or fed pythons. You can see that the plasma from the fed python mimics the effect of feeding.

Analysis of the "fed plasma" showed remarkable levels of triglycerides (50 times normal), with no observable ill effect. Analysis of these lipids (fats) in the python blood suggested three fatty acids (FAs) that might be of particular importance. So, they tried a mixture of those three FAs (dissolved with the help of the protein BSA). This mixture of fatty acids (right hand bar, "FAs") stimulated heart growth about as well as the fed-plasma or the feeding. (The BSA bar is a control without the FAs.)

So we have learned something about an interesting issue of python biology. Not only does the heart grow rapidly following a meal, but the effect can be mediated by the plasma of a fed python, or by some key fatty acids.

They did one more experiment -- one that takes this beyond the realm of python biology. That fatty acid mixture that mimicked the effect of feeding and stimulated python heart growth -- they gave that same fatty acid mixture to mice. They found...

There are two experiments here -- with mice. The basic idea is the same. Mice were given the same fatty acid mix found to work with the pythons; the BSA solution serves as the control. Two measurements of heart growth were made. By both criteria, the FA mix stimulated heart growth in the mice.

Thus we see that a fatty acid mix found to stimulate heart growth in pythons also stimulates heart growth in mice. Does that mean it might also work in humans? Would that be good? Those questions are beyond the current work. (And my opening sentence, designed to get your attention, is beyond the current work in another way. They gave the mice the FA mix -- one based on what they learned from the pythons. They did not feed the mice python blood. Would that have worked? Interesting question.)

Overall, this is a fun story. Python biology is fun, and the new work begins to uncover part of the molecular basis of that story. Intriguingly, one of their findings seems to have some relevance to a mammalian system. Is there any relevance to humans? Who knows. It's fun to make the connection.

News story: Python Study May Have Implications for Human Heart Health. (Science Daily, October 27, 2011.)

The article: Fatty Acids Identified in the Burmese Python Promote Beneficial Cardiac Growth. (C A Riquelme et al, Science 334:528, October 28, 2011.) The figures above are Figures 4A and 4B from this paper.

More on triglycerides... Mutations that lead to reduced risk for heart disease (November 21, 2014).

More on heart health:

* Heart damage: role of mitochondrial DNA (June 1, 2012).

* Cardiac stem cells as a treatment for heart damage: preliminary results are "very encouraging" (November 29, 2011).

More about snakes and blood:

* Snakes and humans: who eats whom? (January 23, 2012).

* How to find the blood (August 29, 2011).

* Why is there an advantage in being left-handed -- if you are a snail? (January 18, 2011).

For more about lipids, see the section of my page Organic/Biochemistry Internet resources on Lipids.

The Happy Planet Index

December 27, 2011

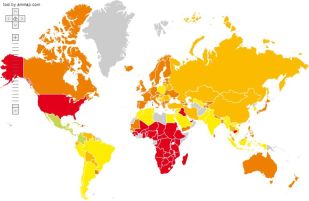

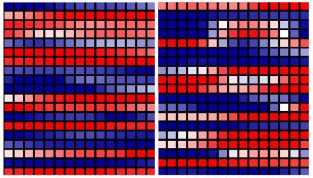

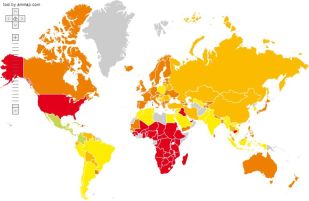

Now there's a map that gets your attention.

Countries are color-coded. Green is good, red is bad.

The worst countries -- bright red -- are the USA and much of Africa. Australia, Canada, and much of western Europe are in the second worst category.

The best countries? Careful... Green -- bright green -- is the top category. There are no bright green countries on this map. There are some countries that are light green, the second best category; they are in or near Central America.

What's this all about? Simply, the map shows how well countries fare on the various components of the Happy Planet Index (HPI). We should note right upfront that the purpose of the HPI is overtly political. Some aspects are even a bit contrived, in order to make a point. But it has a purpose, which I hope we would recognize as good.

There are various measures of a country's success. A common one is the gross domestic product (GDP); it is a measure of economic activity. The HPI takes a different approach: it is a ratio -- with something "good" in the numerator and something "bad" in the denominator. The HPI uses a measure of happiness (not economic wealth) in the numerator; it uses a measure of resource usage in the denominator. A good HPI comes from more happiness with less consumption. More specifically, the HPI is Happy Life Years (HLY) divided by Ecological Footprint (EF). Let's look at these two terms.

The numerator, a measure of the success of the country, is HLY. This is calculated by multiplying together two terms: the average life expectancy and the life satisfaction score. Life expectancy is a standard measurement. It currently ranges from 41 to 82 years for various countries. Life satisfaction? How does one determine how satisfied people are? One approach is to ask them. The HPI uses a short set of questions that lets people express how satisfied they are. Multiply life expectancy by life satisfaction (expressed as a fraction), and one gets HLY. The idea seems reasonable, though it is hard to know whether the specifics are optimal.

The denominator is the EF. How many resources does the country use? Perhaps we have seen tables showing how much energy various countries use; the EF is the same idea, but broader -- all resources. It's expressed as the area of land required to provide those resources. On a bigger scale, we can state that as planets (earths) worth of land. It turns out that all of us together, over the world, are consuming resources that take about 1.3 earths to provide. But we have only one earth. For us to require 1.3 earths is not a sustainable situation. Further, some countries are using much more than average -- with the US being one of the worst. If all the world consumed resources the way the US does, we would need more than 4 earths worth of resources!
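To make the arithmetic concrete, here is a minimal sketch in Python of the simplified ratio described above: HLY is life expectancy times life satisfaction (as a fraction), and the index is HLY divided by EF. The function names and the two example "countries" are made up for illustration; the official HPI calculation may include adjustments that this sketch ignores.

```python
def happy_life_years(life_expectancy_years, life_satisfaction_fraction):
    """HLY: life expectancy multiplied by life satisfaction (0 to 1)."""
    if not 0.0 <= life_satisfaction_fraction <= 1.0:
        raise ValueError("life satisfaction must be a fraction between 0 and 1")
    return life_expectancy_years * life_satisfaction_fraction


def simple_hpi(life_expectancy_years, life_satisfaction_fraction, footprint_earths):
    """Simplified index: HLY divided by ecological footprint.

    footprint_earths is the footprint expressed as 'earths needed if
    everyone lived this way'. This is only the plain ratio from the text,
    not the official HPI formula.
    """
    if footprint_earths <= 0:
        raise ValueError("ecological footprint must be positive")
    hly = happy_life_years(life_expectancy_years, life_satisfaction_fraction)
    return hly / footprint_earths


# Two hypothetical countries (numbers invented for illustration only).
high_consumer = simple_hpi(80, 0.5, 4.0)   # long life, large footprint
low_consumer = simple_hpi(72, 0.75, 1.5)   # shorter life, small footprint

print(high_consumer, low_consumer)
```

With these invented numbers, the low-consumption country scores higher even though its life expectancy is lower. That is the point of putting resource use in the denominator.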

So there we have it: the HPI is a measure of the efficiency with which we achieve a good life. The map at the top of this post follows from that (though in a complex way). The red countries either are not achieving happiness, or they are doing so inefficiently -- and unsustainably. Green countries are efficiently achieving happiness. The report, at the HPI web site, discusses some of the greenest countries. I'm not sure I buy all their analysis. However, the big story here is the beginning of the development of a measure that rewards efficiency. If you have criticisms, then suggest improvements.

Web site for the Happy Planet Index.

A news story: Time to legislate for the good life -- Charles Seaford argues that a clear measure of well-being should be devised to help people judge how government policies affect their quality of life. (C Seaford, Nature 477:532, September 20, 2011.) This is a "Comment" article -- an opinion piece. It is how I first came across the HPI. It includes a graph that is about as striking as the map shown at the top of this post.

Also see...

* Energy wastage: The set-top box (August 1, 2011).

* Happyness, a House, and a Mouse (September 12, 2010).

* Are you happy? (July 5, 2008).

December 14, 2011

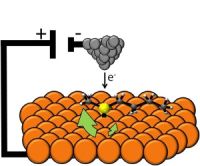

Engineering E coli bacteria to convert cellulose to biofuel

December 13, 2011

A team of scientists led by UC Berkeley's Jay Keasling has achieved an interesting milestone in the development of biofuels. At the outset we must note that this is nowhere near being economically practical, and there is no assurance that it will become practical. Let's enjoy their science, and their bold approach to a new generation of biofuels.

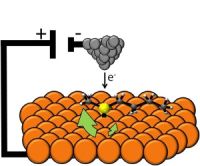

They engineer Escherichia coli bacteria to grow on plant material, such as cellulose, and make biofuels. Ordinary E coli can do neither of those. The cellulose does require a pretreatment, but not one involving enzymes. Thus their process uses only one organism. They actually make variants, to produce three different kinds of biofuels. The following figure diagrams the process. (This is Figure 1 of the paper.)

Part A shows two simple flowcharts: for a conventional process (upper) and for their "consolidated" process (lower). Both start with "plant feedstock" and a "pretreatment" step; both end with "fuel". Count the arrows for a simple view of the steps: the upper process has four arrows, whereas the lower process has two. Three distinct steps of the upper process (enzyme generation, biomass hydrolysis, biofuel production) have been combined into one step in the lower process.

Part B diagrams their engineered E coli, and shows what it does. Hexagons represent sugars; the chains at the left would be cellulose, for example. The big oblong object to the right represents the cell. The cell makes two types of enzyme to degrade the cellulose:

* Blue enzymes are secreted outside the cell; they break the cellulose down to 2-sugar units.

* Red enzymes are retained with the cell; they break the 2-sugar units down to simple sugars (1-sugar units), which enter ordinary metabolism.

The cell then converts the simple sugars to three biofuels. (Any one type of cell would make only one of the biofuels.)

A major part of this work, then, is in building the bacterial strains. In some sense, this is simple: they use known genes from other organisms, and transfer them to E coli. That's what genetic engineers (also sometimes called "synthetic biologists") do. Doing it for pathways involving multiple genes is more work, but involves no new principles. Extra work is required to get the pathways to work smoothly; it's one thing to get a gene to work, but it is another to get multiple genes to function in a coordinated way. For now, getting this to work at all is the goal. They will optimize it later.

An important step here is their pretreatment process. They treat the cellulosic material by dissolving it in an ionic liquid (IL). This is a gentle and very effective way to break down the crystalline structure of the cellulose, which is a serious impediment to its degradation. They recognize that this IL treatment is rather expensive at this point, but for now this is a demonstration. As with so many aspects, one can hope that costs will be reduced with experience. (In the case of IL, an important part of the economics is learning to recycle it efficiently.)

What are we to make of this? Scientifically, it is a major achievement. In terms of practical use, it is hopeless at this point. They consider the work here a milestone -- a technical milestone; that seems a proper view. Will it become useful? The only way to find out is to continue the development work. The economics of fuel production are difficult. Even the well-developed process of making ethanol from sugar (or corn starch) is only marginally viable economically. Making biofuel from cellulose is likely to always be more expensive. On the other hand, over the long term, the price of fuel is likely to rise. So let's welcome this as a milestone, and see what happens. Even if the process proposed here never comes to fruition, perhaps some parts of it will be useful.

News stories:

* E. coli bacteria engineered to eat switchgrass and make transportation fuels. (PhysOrg, November 29, 2011.)

* JBEI researchers engineer E. Coli to produce gasoline, diesel and jet fuel substitutes or precursors directly from switchgrass without external enzyme assistance. (Green Car Congress, November 29, 2011.)

The article, which is freely available: Synthesis of three advanced biofuels from ionic liquid-pretreated switchgrass using engineered Escherichia coli. (G Bokinsky et al, PNAS 108:19949, December 13, 2011.)

Also see...

* A tower of (solar) power -- which makes kerosene (August 22, 2022).

* Some fun reading: Fuel cell gadget and growing diesel (December 13, 2008). Part of this post talks about a fungus that converts cellulose to fuel; no information on its economic utility is available. The post also introduces Keasling's work -- his now-classic work to make the anti-malaria drug artemisinin, and the early ideas about the current project.

* Making biofuels from cellulose (May 17, 2010). Discussion of a process involving chemical degradation of cellulose, after dissolving it in an ionic liquid. Includes some discussion of the economics.

* Cellulosics for energy: an update (October 30, 2010). Overview of the use of cellulosics -- and the slow progress being made.

* Cellulose: improved processing (February 25, 2011). This post offers two improvements in cellulose processing.

* A better way to divide 6 by 2: A more efficient way to use sugar (November 10, 2013).

There is more about energy on my page Internet Resources for Organic and Biochemistry under Energy resources. It includes a list of some related Musings posts.

Human birth: When to cut the cord?

December 11, 2011

When should the umbilical cord be cut upon human birth? Should it be cut as soon as possible -- within seconds of birth? Or should one wait for three minutes or so? Interestingly, there are arguments on each side. We need to weigh the competing arguments. Since the arguments per se do not lead to a clear conclusion, we need to collect data comparing the methods.

Among the issues... In the minutes immediately following birth, blood continues to flow from the placenta to the baby. The key question is whether this is good or bad. It may be good if this blood represents an important portion of the baby's blood supply. It may be bad if it results in the baby having too much blood, or if it causes harm to the mother. Part of this is the iron level for the baby. Iron is an essential mineral, but also one that can cause problems. Too little or too much iron can be bad.

A new paper does a controlled trial comparing the two methods. The data support waiting. The key points are that babies with the delayed cut have a lower incidence of iron shortage at 4 months, and no ill effects are seen. As one goes through this, it is important to note the various arguments, so one can evaluate whether the results warrant a strong conclusion.

News story: Delayed Cord Clamping Protects Newborn Babies from Iron Deficiency, Research Finds. (Science Daily, November 15, 2011.)

* Editorial accompanying the article: Delayed cord clamping and improved infant outcomes -- Enough evidence exists to encourage a routine change in practice. (P van Rheenen, BMJ 343:d7127, November 15, 2011.) Good overview of the issues. The author reaches a clear conclusion; I do not know how widely that conclusion is shared.

* The article, which is freely available: Effect of delayed versus early umbilical cord clamping on neonatal outcomes and iron status at 4 months: a randomised controlled trial. (O Andersson et al, British Medical Journal (BMJ) 343:d7157, November 15, 2011.)

Also see:

* An advanced placenta -- in Trachylepis ivensi (October 18, 2011).

* The problem of human birth (July 8, 2011). This post deals with the timing of human birth, both compared to other animals and the problem of premature birth.

Does XMRV cause CFS? Wrap-up.

December 10, 2011

Update February 6, 2012

In October 2009, a report appeared claiming an association between xenotropic murine leukemia virus-related virus (XMRV) and the mysterious human illness chronic fatigue syndrome (CFS). This turned out to be a contentious finding. Musings noted the finding and the beginnings of the dispute in the post A virus that is or is not associated with chronic fatigue syndrome (February 12, 2010). That post also links to some follow-up posts, including developments earlier this year that cast considerable doubt on the association of the virus with the disease.

We now have a more official wrap-up -- accompanied by an excellent summary. The original issue is whether people with CFS have a virus called XMRV (or similar) in their blood. Different labs were reporting different results. Since many things can affect such studies, a good way to resolve the dispute is to centrally prepare a set of standard samples, and have all labs test the same samples. This has been done, with the participation of all labs that had reported results, positive or negative, during the dispute; all labs are represented on the authorship of the paper. The results are clear... Most labs found nothing. The two labs that reported some positive results had no consistency in their findings. That is, the occasional positives they reported were for both control and CFS samples, and the two labs did not agree on which samples were positive. This is a well-designed study -- a good example of how such disputes should be handled.

Overall, the XMRV-CFS story is a good case study in how a scientific dispute is handled.

News story: XMRV, Related Viruses Not Confirmed in Blood of Healthy Donors or Chronic Fatigue Syndrome Patients. (Science Daily, September 22, 2011.)

* News story accompanying the article: False Positive. (J Cohen & M Enserink, Science 333:1694, September 23, 2011.) On the article itself, the title is actually written as False Posi±ive. This major "newsfocus" summarizes the entire XMRV-CFS story. It is an excellent overview, and would be a good place for a newcomer to start. It was published in print at the time the following article was accepted for publication and posted online.

* The article: Failure to Confirm XMRV/MLVs in the Blood of Patients with Chronic Fatigue Syndrome: A Multi-Laboratory Study. (G Simmons et al, Science 334:814, November 11, 2011.)

For an idea of the results, look at Table 1 of the article. The rows of the table are for various types of tests and various labs. The first column is for control patients; the next two columns are for CFS patients for whom the virus had been detected earlier. You will see that the table contains mostly zeroes; a scattering of non-zeroes has no particular pattern. The last column (at the right) is a positive control: samples have been "spiked", by adding the virus. Most labs detected all of these spiked samples. Interestingly, the only lab that failed to detect all of the positive controls is the one that reports the virus in other samples.

More, February 6, 2012...

The two main papers in support of the association of XMRV with CFS have been retracted. One was the original report, which was partially retracted by the authors, and then officially retracted by the journal. A second report that had offered positive but conflicting evidence has been retracted by the authors.

Musings noted a retracted paper in the post Prejudice against outsiders -- in monkeys (May 10, 2011). In that case, the retracted paper had been the focus of the post; that is, the post was about the paper as a new development. Subsequent retraction effectively negates the post.

Cleaner cars: the Honda Civic Natural Gas car

December 9, 2011

Our automotive writer sends his review of an interesting new car: a model of the venerable Honda Civic designed to run on natural gas.

The review: 2012 Honda Civic Natural Gas [pdf file; link opens in new window]. (Brian Sy, November 2011.)

You may wonder how fuel usage is compared for gasoline vs natural gas. The convention is to use energy content. That is, a gallon-equivalent of natural gas has the same energy as a gallon of gasoline. Wikipedia: Gasoline gallon equivalent.
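For those who like to see the arithmetic, here is a minimal sketch in Python of the energy-content convention. The function names are hypothetical, and the gasoline energy figure (about 114,000 BTU per US gallon) is an approximate value assumed for illustration; it is not taken from Brian's review.

```python
# Assumed approximate energy content of one US gallon of gasoline, in BTU.
# This is an illustrative figure, not a value from the review.
GASOLINE_BTU_PER_GALLON = 114_000


def gasoline_gallon_equivalents(fuel_energy_btu,
                                gasoline_btu_per_gallon=GASOLINE_BTU_PER_GALLON):
    """Express an amount of fuel energy as gallons of gasoline."""
    if gasoline_btu_per_gallon <= 0:
        raise ValueError("energy per gallon must be positive")
    return fuel_energy_btu / gasoline_btu_per_gallon


def miles_per_gge(miles_driven, fuel_energy_btu):
    """Fuel economy in miles per gasoline gallon equivalent."""
    return miles_driven / gasoline_gallon_equivalents(fuel_energy_btu)


# Hypothetical trip: 60 miles using natural gas containing 228,000 BTU,
# which is the energy of two gallons of gasoline under the assumption above.
print(miles_per_gge(60, 228_000))
```

The same calculation works for any fuel, as long as you know how much energy was used.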

Brian's review of the 2010 Aptera 2e is part of the post Electric cars (May 9, 2009). The post focuses on the Tesla cars. Update, December 2011... Brian notes that Aptera has recently gone out of business. This does not necessarily reflect on the merits of the car, but does reflect on the difficulties of the market. Aptera closes its doors. (CNet, December 2, 2011.) (I have added this update to the original Aptera post.)

December 7, 2011

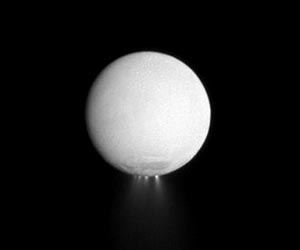

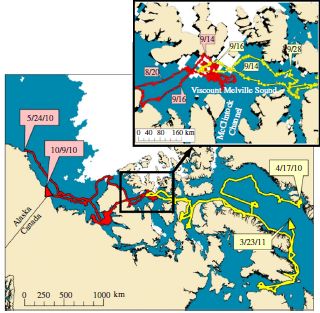

How were the Gamburtsevs formed?

December 7, 2011

Do I need to first explain what the Gamburtsevs are? It might seem odd that educated readers would not be familiar with one of Earth's great mountain ranges, sometimes compared to the Alps. However, this mountain range is in a remote area. Further, even if you were there, you would not be able to see the Gamburtsev Mountains; no human has ever seen them. The Gamburtsev Mountains are in Antarctica -- buried under a thick sheet of ice. They were discovered only in the 1950s, by radar. The Gamburtsevs are our least understood mountains.

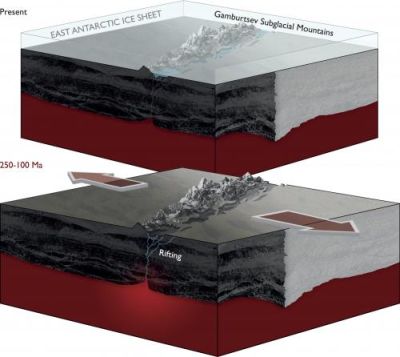

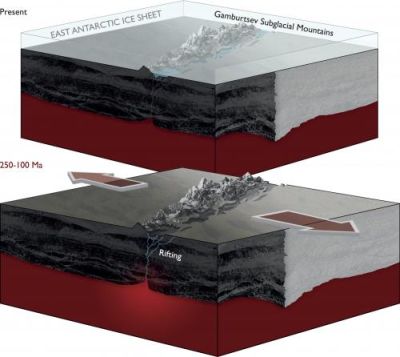

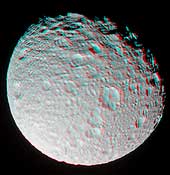

Here is a pair of diagrams, giving an idea of what the Gamburtsevs looked like long ago (bottom) and what they look like now (top).

The lower diagram shows land masses, with a crack in the middle, labeled "rifting". The mountains rise through this crack. More about this below.

The upper diagram is substantially the same, but with a layer of ice on top. (The ice layer is about 3 km thick.) It's hard to see the ice, but note the label near upper left. The ice, of course, is the typical land cover in Antarctica. There is enough ice to completely cover the mountains, which are now labeled "Gamburtsev Subglacial Mountains".

This figure is from the PhysOrg news story listed below.

A team of scientists has now studied the Gamburtsevs more extensively. Their tools? Airplanes -- with ice-penetrating radar, gravity meters and magnetometers. From their findings, they propose how these mountains developed. One part of the story is shown in the lower part of the figure. Modern Antarctica is part of a rift system -- a place where two parts of the land mass pull apart. (A famous rift system is that of East Africa.) The big arrows in the lower diagram show the rifting. This rift system is what split India from Antarctica. The roots of ancient mountains lay buried, but the rifting events of 100 million years or so ago created an opening that allowed a new generation of mountains to rise. This is the best analysis yet of the history of the Gamburtsevs, based on a major round of measurements. It leads them to propose a model. I'm sure this is just the first step of working out the full story. It may be incomplete and preliminary, but it is just fun to read about that buried mountain range.

News story: Gamburtsev Subglacial Mountains enigma unraveled in East Antarctica. (PhysOrg, November 16, 2011.) Browse this for a nice overview of a complex story.

* News story accompanying the article: Geophysics: Earth's longest fossil rift-valley system. (J Veevers, Nature 479:304, November 17, 2011.)

* The article: East Antarctic rifting triggers uplift of the Gamburtsev Mountains. (F Ferraccioli et al, Nature 479:388, November 17, 2011.)

There is a small animation showing the breakup of the supercontinent Pangaea in Wikipedia. It's too small and too fast to work well, but still gives you some idea. You can't see Antarctica very well in it, but you can see India breaking off and racing northward. Wikipedia: Pangaea. (Gondwana is a part of Pangaea that splits off the southern end.)

More about African rifting: Africa is falling apart (July 27, 2010).

More about measuring local gravity: The potato we call home: a study of the earth's gravity (May 3, 2011). The post notes how local gravity is affected by mountains.

More about mountains: Our mountains are growing (May 19, 2012).

More from Antarctica:

* What do microbes eat when there is nothing to eat in Antarctica? (April 2, 2018).

* IceCube finds 28 neutrinos -- from beyond the solar system (June 8, 2014).

* Life in an Antarctic lake (April 22, 2013).

More from Gondwana:

* Did selenium deficiency play a role in mass extinctions? (February 5, 2016).

* Quiz: What is it? (August 17, 2015).

* The oldest known plants (November 2, 2010).

More about super-continents: Briefly noted... America and Asia are getting closer (March 15, 2023).

Therapy based on embryonic stem cells: the first clinical trial -- follow-up

December 5, 2011

Original post: Therapy based on embryonic stem cells: the first clinical trial (October 23, 2010).

Geron (the company behind this trial) has announced that they are getting out of the stem cell business. They blame it on economic factors, with no indication that there is any scientific setback per se. It is hard to know what is really behind the decision, so we simply note this as an addendum to the initial post. The trial is a high-risk -- and expensive -- venture, partly because it is the first step into a new area. Patients who have already been treated will continue to be monitored; that means we will get some information about the treatment.

News story: Stem cell trial halted. (BBC, November 15, 2011.) As I understand it, the title here is a bit misleading. As noted above, monitoring of patients that have already been treated will continue.

Geron's announcement: Geron to Focus on its Novel Cancer Programs. (November 14, 2011.) Geron has removed most of their pages on the stem cell work; the page is now archived, at: Geron announcement.

And then... Geron sells its stem cell business (January 23, 2013).

For more on stem cells:

* Cardiac stem cells as a treatment for heart damage: preliminary results are "very encouraging" (November 29, 2011).

There is more on stem cells on my page Biotechnology in the News (BITN) - Cloning and stem cells.

Chemical elements 114 & 116: flerovium, livermorium (proposal)

December 5, 2011

Chemical elements 114 and 116 were recently officially recognized: Chemical elements 114 and 116 officially recognized (June 8, 2011). IUPAC has now formally announced proposed names for these two elements. Both of these elements were discovered (or, more precisely, synthesized) in collaborative work between the Flerov Laboratory of Nuclear Reactions at the Joint Institute for Nuclear Research, in Dubna, Russia, and Lawrence Livermore National Laboratory (LLNL), in Livermore, California (about 40 miles southeast of San Francisco). The proposal names one element after each of the two laboratories. The proposed names and symbols are

* element 114: flerovium (Fl);

* element 116: livermorium (Lv).

There is an official period for comments on the proposal. The names will probably be officially adopted in mid-2012. [See below.]

News release from one of the labs: Livermore and Russian scientists propose new names for elements 114 and 116. (LLNL, December 1, 2011.) (August 15, 2012... The news story originally listed is no longer available. This is a replacement.)

Follow-up: Chemical elements 114 & 116: flerovium & livermorium are now official names (June 5, 2012).

More on the elements...

* Naming: Element #112: Copernicium (July 15, 2009).

* Discovery: Chemical element #117 (April 13, 2010).

Leopard horses

December 2, 2011

The picture at the left shows two spotted horses. The picture is based on a painting on the wall of a cave in France, and is thought to be 25,000 years old.

Larger picture [link opens in new window].

Such horses, called leopard horses, are known. However, many scientists thought that they did not exist 25,000 years ago. Were that true, it would imply that these paintings were works of the imagination by the ancient cave painters.

Now, a team of researchers has provided some new evidence. They tested DNA from 31 horses of that same era, and found the gene for leopard spotting in six of them. The conclusion, then, is that leopard horses did exist at that time; therefore, the cave paintings may well have been based on what the artists saw.

It's an interesting use of DNA technology.

News story: Prehistoric Cave Paintings of Horses Were Spot-On, Say Scientists. (Popular Archaeology, November 7, 2011. Now archived.) The page contains several beautiful pictures -- including the one shown above.

The article: Genotypes of predomestic horses match phenotypes painted in Paleolithic works of cave art. (M Pruvost et al, PNAS 108:18626, November 15, 2011.)

Other posts on prehistoric art include:

* The oldest known dog leash? (January 23, 2018).

* Images from 30,000-year-old motion pictures (July 22, 2012).

* Early American art: a 13,000 year old drawing of a mammoth (July 18, 2011).

Other posts that deal with horses:

* How horses learned to walk (September 21, 2016).

* The oldest DNA: the genome sequence from a 700,000-year-old horse (August 4, 2013).

* Can giraffes swim? (August 6, 2010).

* Stripes protect zebra against horseflies -- another story of polarized light (February 26, 2012).

There is more about art on my page Internet resources: Miscellaneous in the section Art & Music.

There is more about sequencing on my page Biotechnology in the News (BITN) - DNA and the genome. It includes a list of Musings posts on sequencing and genomes.

Also see: Monitoring the wildlife: How do you tell black leopards apart? (August 10, 2015).

November 30, 2011

Does dry cleaning cause cancer?

November 30, 2011

The title should get your attention. It's an important question. A recent paper takes a stab at it. It's also interesting because of how the work got started, based on the initiative of a high school student. However, there is no clear conclusion -- as the authors realize, and as is so often the case with issues of environmental contaminants. So let's look at what they did -- realizing at the outset that this is an incomplete story.

One common solvent for dry cleaning is perchloroethylene (PCE, Cl2C=CCl2). It works, but it is also known to be toxic. It may even cause cancer, though this has not been clearly established. The use of a toxic solvent raises two safety issues. One is for the workers, and the other is for the consumers. As is typical of many chemical exposure issues, workers using it may be exposed to high levels for long periods, and then there is the possibility of consumers getting some exposure to residues. That second part is what is explored here. Are consumers exposed to harmful levels of PCE by having their clothes dry cleaned? The question was posed by a high school student, who then sought out university researchers to help her with the problem. They did some nice work, but it is only part of what we need to know -- the easy part.

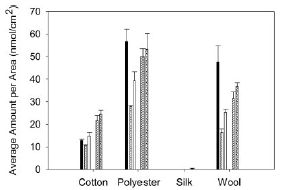

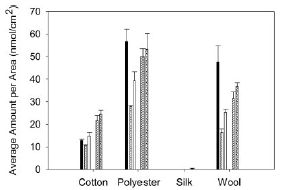

What did they do? They sent out samples of four different fabrics to seven local dry cleaners. They then analyzed the cleaned fabrics for PCE. That is, they asked if clothes that are dry cleaned retain some of the solvent. The following figure summarizes the results.


The y-axis shows the amount of PCE found. The bars are grouped by fabric type, with one bar for each of the seven dry cleaners for each fabric.

Main observations:

* Silk does not retain PCE.

* There are only five bars per fabric group, not the seven I said. That's because two of the dry cleaners gave results with zero PCE for all samples. They say these are dry cleaners who advertise themselves as "green".

* The other fabrics at the other dry cleaners all yielded PCE. The differences between them are not important for us here.


A bit of fine print...

* They raise the possibility that the negative result for silk is an artifact of their method, but they think that unlikely. It can be tested at some point by trying an alternative method.

* The two "green" cleaners apparently do not use PCE. The authors deduce what solvents these places do use (but seem not to ask the cleaners to confirm their science).

The figure above is Figure 4 from the paper.


In other parts of the work, they show that the level of PCE on the fabrics increases with multiple cleanings. They also show that the PCE can "off-gas" -- come off the fabric.

A simple summary, then, is that the common dry-cleaning solvent PCE can be retained on fabrics. We may be exposed to PCE, known to be toxic, either by direct contact, with absorption through the skin, or by breathing air into which the PCE has off-gassed. (Imagine for example that the cleaned clothes are left in a hot car.) The key question, then, is whether any such exposure is likely to be at high enough level to be significant. They discuss aspects of this at some length, but the bottom line is that they don't know. That's partly because little is clear about the possible carcinogenicity of PCE. A useful outcome of this work would be if it led to improved study of PCE toxicity.

The situation here is all too typical. Toxicity issues are a matter of dose. Simply saying that something is toxic and that we are exposed is not very helpful. We need to know something about the levels involved -- and that information can be hard to get.

What about those "green" cleaners? This paper does not contain any serious discussion of their pro and con issues, so there is no basis here for discussing this. PCE serves as a reminder of one big concern. PCE itself was introduced as "better" than the previous solvent used for dry cleaning, which was found to be contributing to ozone depletion. Solving one problem sometimes leads to another. We need information.

In the meantime, you can greatly reduce your exposure to PCE by allowing dry-cleaned items to off-gas for a few days -- in a ventilated space. Or you can wear silk.

* News story: Cleaning up: Fabrics retain remnants of dry cleaning fluid. (Spectroscopy Now, November 1, 2011.)

* Press release: High Levels of Carcinogens in Dry-Cleaning, Study Shows. (Georgetown University, August 30, 2011. Archive copy.) This page notes the role of the high school student who proposed the study.

The article: Quantification of Perchloroethylene (PCE) Residues in Dry Cleaned Fabrics. (K S Sherlach et al, Environmental Toxicology and Chemistry 30:2481, November 2011.)

Also see:

* The role of combinations of chemicals in causing cancer? (September 21, 2015).

* Are government safety inspections worthwhile? (June 12, 2012).

* The bisphenol A (BPA) controversy (September 19, 2010). Another example of controversy about the risks of a chemical in our environment.

My page for Biotechnology in the News (BITN) -- Other topics includes a section on Cancer.

Cardiac stem cells as a treatment for heart damage: preliminary results are "very encouraging"

November 29, 2011

A heart attack (myocardial infarction) leads to reduced heart function, due to loss of functional heart muscle. The human body is poor at regenerating replacement heart muscle. What if we could stimulate the body to do so? It's an active research topic, and stem cells are one approach. A new paper reports very preliminary results of a clinical trial using one type of stem cell; the results are "very encouraging".

The work uses cardiac stem cells (CSC). These are cells found in heart tissue that have the capability of growing and differentiating into the various types of heart cells. That is, these would seem to be the cells that would normally lead to heart muscle formation. (Of course, that leads to the question of why they do not normally do so very well. Is this simply a matter of numbers, or does it involve signals? Those questions remain unanswered.) Further, each patient is treated with his or her own stem cells. Thus we already see two good features of this approach: the type of cell used "makes sense", and immunological problems are avoided by using the patient's own cells. Of course, predicting that these are good features does not mean they will work.

What they do is to take a sample of heart tissue from the patient (during a surgery that is otherwise scheduled). They grow out the cells ("expand" them, as they say), and isolate the CSC. These expanded CSC are then injected into the patient's heart.

A Phase 1 clinical trial, the first test of this procedure in humans, is in progress, and preliminary results are being reported.

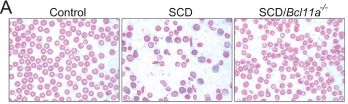

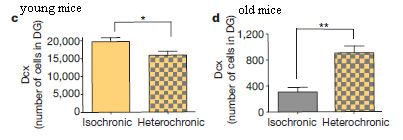


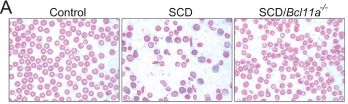

Here is a key set of results. This is Figure 4A of the paper.

The y-axis shows a measure of the heart function: the "ejection fraction", as measured by echocardiographic imaging of the heart during a beating cycle. Results are shown for the control patients (left side) and the patients treated with CSC (right side). For each group, there are results for time zero (baseline) and 4 months. Results are shown for individual patients (the lines), and for the group averages (the squares).

Let's start with the group averages, shown with the red squares. For the control patients, the red square is at about 30% both at baseline and 4 months. For the CSC-treated patients, the baseline value is also about 30%, but at 4 months it is near 40%. This is not only a statistically significant improvement, but also one that is of meaningful benefit to the patient.


Also shown are the results for each patient. For example, on the left are seven lines, one for each of the seven control patients. You can see that the patients varied -- in each group. However, it is clear that there are several patients in the CSC-treated group with better results than any of the control patients. (It also looks to me like the patient with the worst result was one of the treated patients.)


The results above suggest that the treatment provides benefit, on average, but they also caution us that there is considerable variability.

Other parameters measured lead to a similar conclusion. Further, limited data for some of the patients suggest that the benefit shown above is retained at 12 months; it may even be a bit better.

An interesting feature of the trial is that it is treating old damage. The average age of the infarcts (damaged areas) was over 3 years. If this part of the study holds up, it means that this is not simply a first-line treatment, which must be administered promptly, but one that can be administered "at will". It is also possible that repeated treatments, perhaps over a time span of years, would lead to further improvement; that is completely beyond the current trial, but is worth testing.

Conclusion? This is where we need to be very careful. This is a Phase 1 clinical trial. In fact, it is only part of that trial -- early data on some of the first patients. The trial is neither double-blind nor placebo-controlled. The primary purpose of a Phase 1 trial is to test safety, and a simple protocol is common. (Safety? No serious problems have been seen.) Measuring efficacy is secondary. The authors call their results here "very encouraging". What that means is that they want to proceed to further, more thorough testing. The ultimate verdict on the method comes from the continued testing. It is common that the judgment of a treatment or drug becomes more complex as more data become available. For now, the very limited data we have suggest that the use of CSC is promising; it is worth testing it more.

News stories:

* Using Heart's Own Stem Cells To Treat Heart Failure. (Medical News Today, November 15, 2011.)

* First Clinical Trial of Autologous Cardiac Stem Cells Shows Positive Results. (GEN, November 14, 2011.)

* News story accompanying the article: SCIPIO brings new momentum to cardiac cell therapy. (G Heusch et al, Lancet 378:1827, November 26, 2011.)

* The article: Cardiac stem cells in patients with ischaemic cardiomyopathy (SCIPIO): initial results of a randomised phase 1 trial. (R Bolli et al, Lancet 378:1847, November 26, 2011.)

Also see:

* Stem cell treatment of heart damage: a new interpretation (March 31, 2020).

* Synthetic stem cells? (April 30, 2017).

* How good is "good cholesterol" (HDL)? (September 21, 2012).

* Using patient-specific stem cells to study Alzheimer's disease (February 24, 2012).

* Heart health and python blood (December 28, 2011).

* Therapy based on embryonic stem cells: the first clinical trial -- follow-up (December 5, 2011).

* Using stem cells to study a heart condition (April 19, 2011).

Human violence

November 28, 2011

We're all aware of human violence. It's in the news constantly, and it is in our history. We may even think it is increasing. Of course, human population is also increasing, as is our awareness of the world around us. In a new book, Harvard psychologist Steven Pinker argues that human violence is decreasing, and he then explores the reasons. A short article based on the book appeared in Nature, and is listed below. There are two issues... The first, whether violence is decreasing, involves facts -- data. Controversial data, perhaps, but data. The second is the reason(s) for such a decline -- if indeed there is a decline. This, too, is interesting and provocative, but more subjective.

I hope that this item stimulates some serious thinking. It's not to quickly decide whether he is right or wrong, but to think about individual issues that he raises. Do reasonable data support the suggestion that human violence is decreasing, at least in some cases? Do we learn something about human society from some of his suggested reasons? The book itself is a massive tome; it's not on my agenda to tackle it. If we are going to learn about Pinker's views from secondary (and perhaps biased) presentations, it is important to read some range of them, and to be cautious about reaching judgment.

The article: Taming the devil within us -- We are getting smarter, and as a result the world is becoming a more peaceful place, says Steven Pinker. (S Pinker, Nature 478:309, October 20, 2011.) It says: "This article is adapted from his new book The Better Angels of Our Nature: The Decline of Violence in History and its Causes (Allen Lane, 2011)." It's quite short. Please read it.

Here is one book review, which gives a good sense of the issues: Is Violence History? (P Singer, New York Times, October 6, 2011.)

I wanted to find a review that offered substantive criticism of the book. While looking, I came across the following page, which notes a range of reviews. Briefing note: The Better Angels of Our Nature by Steven Pinker. (The Omnivore, October 29, 2011.) You might check the listed review in the Washington Post for an interesting negative review of the book. While negative, this review talks of much that is good about the book. It may be a fair summary that the book is useful in how it will provoke better discussion of the issues; it is not "the last word".

Other Musings posts about books include Silent Spring -- on its 50th anniversary (October 5, 2012).

This post is also noted on my page Book suggestions: Pinker, The Better Angels of Our Nature.

More about human violence:

* In the aftermath of gun violence... (January 8, 2018).

* Violence within the species -- in various mammals; implications for the nature of humans (December 6, 2016).

* The earliest human warfare? (February 17, 2016).

November 22, 2011

Royal Society opens its historic journal archives to free access

November 22, 2011

The Royal Society (of London) claims to be the world's oldest scientific publisher. Its peer-reviewed journal Philosophical Transactions of the Royal Society dates from 1665. In October the Society announced that it is now providing free access to its entire collection of old journals -- for those older than 70 years.

The Royal Society announcement listed below gives you some idea what this historic collection contains. One of the examples they note is featured in the accompanying post, below.

News story: Royal Society journal archive made permanently free to access. (Royal Society, October 26, 2011.)

More about their first journal: Happy birthday, Phil Trans (March 25, 2015).

More from the Royal Society: Royal Society suggests science books (July 27, 2009).

Benjamin Franklin and the electrical kite

November 22, 2011

The Royal Society has opened access to its historic collection of scientific journals, dating back to the 17th century. This was noted in the accompanying post, above. One of the articles they featured in their announcement was a letter to the Society by one Benjamin Franklin of Philadelphia, concerning an electrical kite. I'm sure you've all heard about this; now you can read what Franklin wrote.

The article: A Letter of Benjamin Franklin, Esq; to Mr. Peter Collinson, F. R. S. concerning an Electrical Kite. (Benjamin Franklin, Philosophical Transactions 47:565, 1752.) It's quite short, and generally readable (so long as you remember that most of those characters that look like f are really s).

With restraint and reluctance, I have avoided applying an adjective of nationality to Franklin. The paper here dates from 1752 -- 24 years before the English colonies on the eastern coast of North America declared their independence from Mother England.

For more about Franklin the scientist, see my page of Book Suggestions: Charles Tanford, Ben Franklin Stilled the Waves: An informal history of pouring oil on water with reflections on the ups and downs of scientific life in general. 1989.

A post about an article that is a follow-up to Franklin's work: High-voltage thunderstorms: how high? (April 29, 2019).

More about the journal: Happy birthday, Phil Trans (March 25, 2015).

* Previous post about a historic paper: Central Dogma of Molecular Biology (August 16, 2011).

* Next history post: Quiz: What's the connection... (February 14, 2012).

My page Internet resources: Miscellaneous contains a section on Science: history. It includes a list of related Musings posts.

Are some languages spoken faster than others?

November 21, 2011

When you hear someone speak in another language, does it seem that they speak very fast? Is that real, or is it just a perception due to unfamiliarity? And if they really are speaking faster, does that mean they are communicating information faster?

If we are going to answer those questions with some objectivity, we need to define our terms carefully, and then take careful measurements. A recent paper does just that, and offers some intriguing findings.

For speed, the authors choose to count syllables. That is, they express speaking speed in syllables per second. Expressing the information content is tricky; they choose to do it by comparing the same texts translated into several languages. That is, they do not attempt to measure information content in any absolute sense, but simply assume that it is the same for the given text in different languages. They use several text samples, and several speakers per language. The speakers are either native speakers of the language, or considered to be fluent.

They present their results in both a table and a graph. Interestingly, I think the table is clearer, so here it is.


Let's look at two rows in detail, to illustrate what the table shows. I'll choose Mandarin and Spanish, which are adjacent in the table -- and happen to be near the extremes of what they observe.

This is Table 1 from the paper. Figure 2 is equivalent. However, I found the figure less clear; a better choice of marking the bars for different parameters might have enhanced the visual impact.


The simplest data column is the middle one, labeled "syllabic rate #syl/sec". This is the measured speaking speed, in syllables per second. You can see that Mandarin is spoken at about 5 syllables per second, whereas Spanish is spoken at about 8 syllables per second. These are very near the extremes they found in this set of eight languages. Thus we already see that, in this sense, some languages are spoken faster than others.

The first data column is the information density in the language, IDL. One might think of this as the amount of information conveyed per syllable. Since they have no specific measure of information, it is expressed here on a relative scale. IDL for one language (Vietnamese) is set to 1, for "reference"; IDL values for the other languages are given relative to Vietnamese. This is done, as introduced above, by comparing how many syllables it takes to convey the same texts translated into the various languages. If you look at the table for our two focus languages, you can see that Mandarin has an information density of 0.94, whereas Spanish has an information density of 0.63. Once again, these are near the extremes.

Example... In the Appendix of the paper, they show one of their texts translated into each language. For the first sentence, the English text is 13 syllables, whereas the Spanish text is 18 syllables. That is, it takes Spanish more syllables to say the same thing; the information density is lower for Spanish. Of course, my one sentence example may not be representative. In the paper they show the result averaged over all the texts. The table shows that indeed IDL is lower for Spanish than for English. (Actually, my example sentence is very close to being representative.)
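To make the arithmetic of that one-sentence example concrete, here is a minimal sketch in Python. It uses only the two syllable counts quoted above. The function name is my own, chosen for illustration; the paper averages over all its texts and uses Vietnamese as the reference, whereas this simply compares Spanish to English for a single sentence.

```python
def relative_density(reference_syllables, target_syllables):
    """Information per syllable in the target language, relative to the reference.

    Assumes both counts are for the same text, so the information content
    is taken to be equal. More syllables for the same text means lower density.
    """
    return reference_syllables / target_syllables


# Syllable counts for the first sentence of the sample text, as quoted in this post.
english_syllables = 13
spanish_syllables = 18

spanish_vs_english = relative_density(english_syllables, spanish_syllables)
print(f"Spanish density relative to English: {spanish_vs_english:.2f}")
```

The result is below 1, which is just another way of saying that Spanish needs more syllables than English to carry the same sentence.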

Ok, Spanish is spoken with more syllables per second than Mandarin. But Mandarin has more information per syllable than Spanish. If you multiply these two together -- and again normalize so that Vietnamese is set at 1 -- you get the rate of speaking information, which is shown in the right hand data column as "Information rate". It is just about the same for Mandarin and Spanish; the difference is small compared to the uncertainties shown in parentheses.
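The multiplication described above can be sketched in a few lines of Python. This is an illustration only: it uses the rounded values quoted in this post (about 5 and 8 syllables per second), not the exact entries of Table 1, and it skips the normalization to Vietnamese because the Vietnamese syllabic rate is not quoted here. The names are my own.

```python
# Rounded values quoted in this post; the paper's Table 1 has the exact ones.
# density: information per syllable, relative to Vietnamese = 1
# rate: syllables per second (approximate)
languages = {
    "Mandarin": {"density": 0.94, "rate": 5.0},
    "Spanish": {"density": 0.63, "rate": 8.0},
}


def information_rate(density, rate):
    """Information per second, in arbitrary units (not normalized to Vietnamese)."""
    return density * rate


rates = {
    name: information_rate(values["density"], values["rate"])
    for name, values in languages.items()
}

for name, value in rates.items():
    print(f"{name}: {value:.2f}")

# A ratio close to 1 means the two languages come out "about even".
ratio = rates["Mandarin"] / rates["Spanish"]
print(f"Mandarin / Spanish: {ratio:.2f}")
```

With these rounded inputs, the two products differ by well under 10 percent, even though the syllable rates themselves differ by a factor of 1.6.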


That's the big idea here. Yes, some languages are spoken faster than others: more syllables per second. But in terms of information, they may come out about even. If you look at the third data column of the table (the information rate), you can see that most of the languages they studied have about the same information rate. However, Japanese is clearly rather different.

There are only eight languages here, so this is just a start. But it offers an intriguing idea, and also suggests that not all languages may fit the main pattern. There is much here for further study.

News story: Language Speed Versus Efficiency: Is Faster Better? (Science Daily, September 1, 2011.)

The article: Across-language perspective on speech information rate. (F Pellegrino et al, Language 87:539, September 2011.) (Put the title in Google Scholar, and you may find a freely available copy.)

For more about language...

* Deciphering the inscription on an old comb (November 27, 2022).

* Do dolphins talk to each other? (November 19, 2016).

* Mountains and human language? (June 28, 2013).

* Speech: Are chimps good listeners? (July 25, 2011).

* Language: What do we learn from other animals? (August 3, 2010).

* Is it language? (July 9, 2009).

* Spleling (June 11, 2009). I suspect this is related to the current post.

* Language development (May 7, 2009).

* Musings: Bilingual. This is a supplementary page, consolidating multiple posts on issues of being bilingual.

November 16, 2011

To kill a mastodon

November 15, 2011

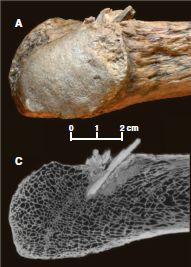

Dead mastodon. Wound in rib. Let's look...

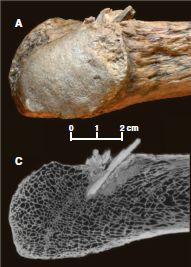

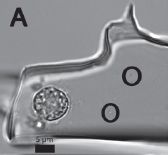


The bone within the bone. Two views:

* Part A (upper) shows an ordinary photograph of a part of the rib bone, with the projectile sticking out.

* Part C (lower) shows a CT scan (X-ray) of the same region.

This is Figure 1, parts A and C, from the paper. Note that the scale bar is for both of these parts.


It's that second picture that got me to post this item. A CT scan of a 14,000 year old animal, showing quite spectacularly the embedded weapon. Of course, it took more than this one spear to kill the animal. The point (no pun intended) is not to present a complete story about this animal's death, but to see what we can learn from it.

The real story, to the scientists doing this work, is about what humans did -- and when they did it. This kill has implications for our understanding of the early history of humans in North America. This animal had been uncovered in the 1970s, at a site on the Olympic Peninsula, west of Seattle, Washington. An age of nearly 14,000 years was presented, but many were skeptical. Why? Well, there were no humans in that area that long ago -- according to the established view. What's new here is definitive evidence to support the dating to 13,800 years ago, making this the oldest known hunting weapon from North America. It comes at a time of increased willingness to accept that man's history in North America does date back that far.

Testing of DNA and protein samples indicates that the weapon tip, as well as the killed animal, is mastodon. That's further evidence that this was from a human attack, not an accident.

News story: Paleo CSI: Early Hunters Left Mastodon Murder Weapon Behind. (LiveScience, October 20, 2011.)

* News story accompanying the article: Archaeology: Pre-Clovis Mastodon Hunters Make a Point. (A Lawler, Science 334:302, October 21, 2011.)

* The article: Pre-Clovis Mastodon Hunting 13,800 Years Ago at the Manis Site, Washington. (M R Waters et al, Science 334:351, October 21, 2011.)

A recent post was also on the topic of proboscideans and evidence for early man in North America: Early American art: a 13,000 year old drawing of a mammoth (July 18, 2011). That post links to a book suggestion on the topic.

And more... Did the First Americans eat gomphothere? (July 29, 2014).

Another mastodon: The first Americans: Is it possible we have the date wrong by 100,000 years? (June 28, 2017).

More on wounds: Targeting growth factors to where they are needed (April 21, 2014).

Cystic fibrosis: treating the underlying cause -- for some people

November 13, 2011

Cystic fibrosis (CF) is a genetic disease -- the most common genetic disease among Caucasians. The gene for CF codes for a protein that transports chloride ion (Cl-) across the cell membrane. The protein and its gene are known as cystic fibrosis transmembrane conductance regulator (CFTR). There are varied effects, but CF patients show deteriorating lung function, which is ultimately fatal.

As so often, treatment is typically symptomatic, not dealing with the cause. However, a new drug, ivacaftor, has been developed which addresses the cause. The drug binds to and restores function of the mutant CFTR protein. A new paper reports results from a phase 3 clinical trial of the new drug.


Here is an example of the results.

The graph shows the results of a test for lung function over time, for patients treated with the drug and for control patients treated with a placebo. You can see that the patients treated with the drug showed a rapid improvement, which remained constant during the study. In contrast, the placebo group showed no change (or perhaps a slight decline).

This is Figure 1A from the paper. Other parts of Figure 1, as well as Figure 2, show similarly positive results for other parameters that were measured.


The results are generally impressive: good benefit, and no significant side effects. I would not be surprised if the drug receives approval soon.

Despite the favorable results, we must remember that any such clinical trials have limitations. We might consider them in two classes.

* Numbers. A certain number of people were treated for a certain period of time. Thus the test would not show "rare" effects, and says nothing about long term use. This is a general issue for all clinical trials. That is why evaluation of a drug continues after formal approval.

* Disease target. The drug was designed to interact with a particular mutated form of the CFTR protein. All patients tested here carried the particular mutation, called G551D. (The mutation name means that amino acid #551 of the protein is changed from G to D; amino acids G and D are glycine and aspartic acid, respectively.) People with this mutation make an altered form of the CFTR protein; it is inserted into the membrane, but just does not function well. Unfortunately (in this context), that is a relatively uncommon CF mutation. Thus the drug is applicable for only a small subset of CF patients. The drug will be tested on people with other mutations, but there is no clear prediction about its usefulness. A particular issue is that the most common CF mutation leads to a protein that does not even enter the cell membrane; a drug such as ivacaftor, which improves protein function, is useless if the protein is not there.
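The naming convention for a mutation such as G551D can be unpacked mechanically. Here is a minimal sketch in Python, assuming the simple letter-number-letter form described above. The function name is hypothetical, and the sketch handles only single amino acid substitutions, not other kinds of mutations.

```python
import re

# Standard one-letter codes for the 20 common amino acids.
AMINO_ACIDS = {
    "A": "alanine", "R": "arginine", "N": "asparagine", "D": "aspartic acid",
    "C": "cysteine", "Q": "glutamine", "E": "glutamic acid", "G": "glycine",
    "H": "histidine", "I": "isoleucine", "L": "leucine", "K": "lysine",
    "M": "methionine", "F": "phenylalanine", "P": "proline", "S": "serine",
    "T": "threonine", "W": "tryptophan", "Y": "tyrosine", "V": "valine",
}


def parse_substitution(name):
    """Split a simple substitution name such as 'G551D' into its three parts.

    Hypothetical helper for illustration; it accepts only the
    original-letter, position, new-letter form.
    """
    match = re.fullmatch(r"([A-Z])(\d+)([A-Z])", name)
    if match is None:
        raise ValueError(f"not a simple substitution name: {name!r}")
    original, position, replacement = match.groups()
    if original not in AMINO_ACIDS or replacement not in AMINO_ACIDS:
        raise ValueError(f"unknown amino acid code in {name!r}")
    return {
        "position": int(position),
        "original": AMINO_ACIDS[original],
        "replacement": AMINO_ACIDS[replacement],
    }


g551d = parse_substitution("G551D")
print(f"Position {g551d['position']}: {g551d['original']} -> {g551d['replacement']}")
```

For G551D this reports position 551, glycine replaced by aspartic acid, matching the description in the text.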

The second point, on disease target, illustrates a general feature of "personalized medicine"; drugs that have been customized to meet the needs of certain people have a narrow audience. Nevertheless, the drug here seems to be a useful step, and is likely to benefit real patients.

News story: Cystic fibrosis drug ivacaftor offers patients new hope -- Vertex Pharmaceuticals' ivacaftor reduced pulmonary flare-ups by 55% compared with a placebo. For now it applies to those with a certain genetic mutation, but the pool of patients could grow. (Los Angeles Times, November 2, 2011.)

* Editorial accompanying the article: Therapy for Cystic Fibrosis - The End of the Beginning? (P B Davis, New England Journal of Medicine 365:1734, November 3, 2011.)

* The article: A CFTR Potentiator in Patients with Cystic Fibrosis and the G551D Mutation. (B W Ramsey et al, New England Journal of Medicine 365:1663, November 3, 2011.)

More on cystic fibrosis:

* Restoring ion transport in cystic fibrosis patients -- using a pore-forming drug? (April 12, 2019).

* How our immune system may enhance bacterial infection (September 19, 2014).

Several posts on personalized, genome-based medicine are listed at: Personalized medicine: Getting your genes checked (October 27, 2009).

A microscope small enough that a mouse can wear it on its head

November 12, 2011


There it is -- sitting on a person's finger.

It is 22 millimeters high -- just under an inch. It weighs 1.9 grams -- about the weight of the smallest US coin.

This figure is from the PhysOrg news story listed below.


To see a mouse wearing the microscope on its head, see the movie file that is linked to the article web page, below. The left side of the movie shows the mouse, with attached microscope, doing various activities. The right side shows the image obtained from the microscope during the same time. The mouse had received an intravenous injection of a fluorescent dye, which labels the blood plasma. Thus the microscope allows the observers to watch the blood flow inside the head while the mouse goes about its usual activities. (The scale bar for the right side is 100 micrometers.)

Attachment of the microscope is not as simple as it might sound. The microscope cannot see through the skull. What is done is to surgically remove a portion of the skull, and replace it with a piece of glass. The glass provides a window into the head; the microscope is attached over the window.

This is a technology story (and the article is from a "methods" journal). The microscope capability here is novel, allowing good observation of an animal that is substantially free. A table in the paper compares the specs to those of available technologies. Further, the microscope here is simple. They argue that it could be inexpensively mass produced, though I don't see any specific costs.

News stories:

* Stanford group creates miniature self-contained fluorescence microscope. (Phys.Org, September 12, 2011.)

* Cheap, portable mini fluorescence microscope eyes in-vivo and in-vitro applications. (Laser Focus World (was BioOptics World), September 13, 2011.)

The article: Miniaturized integration of a fluorescence microscope. (K K Ghosh et al, Nature Methods 8:871, October 2011.) The movie file is linked to the article web page under Supplementary information.

A post about work making use of such a system: What happens to capillary blood flow in the brain during sleep -- and why? (November 8, 2021).

A previous post on microscope technology: Connecting a cell phone and a microscope (September 2, 2009).

Another small microscope: A ream of microscopes for $300? (June 22, 2014).

Also see a section of my page Internet resources: Biology - Miscellaneous on Microscopy.

Another way to see what is going on inside the head of an animal... Observing inside animals with an improved bioluminescence system (April 6, 2018).

More about making holes in the skull: Skull surgery: Inca-style (August 21, 2018).

We've used the US dime as a frame of reference for weight before... Graphene by the roll -- and soon in your living room (July 31, 2010). The small coin is also useful as a reference for size: The smallest frog (January 31, 2012).

The dime has even been the subject of a post: Money question (May 20, 2009).

November 9, 2011

Is whole genome sequencing a useful medical tool?

November 9, 2011

The idea of personalized medicine is that people are different -- including in their genes. It follows, then, that at least some aspects of medical treatment might best be customized to the individual -- including their genes. We have discussed various aspects of personalized medicine, often emphasizing that this is a very new area, and there is much uncertainty. Of particular note is the post Genome sequencing to diagnose child with mystery syndrome (April 5, 2010), which links to a follow-up. This is about a young child whose genome was sequenced in order to help diagnose his condition; he now seems to be thriving as a result.

So, is this the future? A better answer is that it is a tentative and uncertain step toward that future. Nature has recently published a "news feature" exploring this topic. It properly offers both hope and caution. I encourage you to read it, for a snapshot of today's perspective on an emerging technology.

The article, which is freely available: Genomes on prescription: The first clinical uses of whole-genome sequencing show just how challenging it can be. (B Maher, Nature 478:22, October 6, 2011.)

One of the driving forces behind this is the rapidly declining cost of DNA sequencing. This was discussed in the post The $1000 genome: Are we there yet? (March 14, 2011).

Several posts on personalized, genome-based medicine are listed at: Personalized medicine: Getting your genes checked (October 27, 2009). The items listed there include both technical advances and other discussions of the difficult birth of this new field.

There is more about sequencing on my page Biotechnology in the News (BITN) - DNA and the genome. It includes a list of Musings posts on sequencing and genomes.

Diagnosis of prostate cancer in a 2100 year old man

November 8, 2011

Cancer is a major disease in modern society. What about ancient times? There is little evidence, and that leaves plenty of room for speculation. Some think that there were fewer carcinogens long ago, so there would have been less cancer. Of course, people did not live as long -- and most cancers are diseases of old age. The issue of ancient cancer was discussed in the post Cancer in the ancient world (November 1, 2010).

A new paper, which Thien called to my attention, adds a bit to the story. It uses high resolution CT scanning of mummies, and provides evidence for a case of metastatic prostate cancer in an ancient Egyptian.

Left: The guy. He is thought to have lived sometime in the 1st-3rd centuries BC. He probably died in his 50s.

Right: Imaging of his spinal column. The white spots on the squarish plates are considered lesions from bone cancer.

These are Figures 1 and 4 from the paper.

The evidence that this is from prostate cancer is indirect. What they are observing are the metastatic lesions, which seem typical of that disease. The paper considers alternatives, and concludes that metastatic prostate cancer is the most likely explanation of what is observed.

The importance of the work is the methodology. They apply improved technology and find something new. It reminds us that we really do not know the incidence of this condition from ancient times. Finding this case does not add to our general understanding of the frequency of ancient cancer.

News stories. Both of these are useful overviews of the new work. You will find some discrepancies between them; mostly, these represent differing interpretations of things that are not known.

* Mummy Has Oldest Case of Prostate Cancer in Ancient Egypt. (Science Now, October 26, 2011.)

* Cancer Found in 2,000-Year-Old Mummy. (Discovery News, November 2, 2011.)

The article: Case Study: Prostate metastatic bone cancer in an Egyptian Ptolemaic mummy, a proposed radiological diagnosis. (C Prates et al, International Journal of Paleopathology 1:98, October 2011.)

More on mummies:

* How to make a mummy (May 7, 2023).

* A new approach for testing a Llullaillaco mummy for lung infection (August 17, 2012).

* The Most Remarkable Funeral Treasures (September 1, 2010).

Here are two other posts on prostate cancer. Note the ambiguity inherent in the titles of both.

* Is folic acid good for you or bad for you? (April 10, 2010).

* A virus that is or is not associated with chronic fatigue syndrome (February 12, 2010). This is about the virus that was claimed to be associated with CFS. It had also been proposed to be associated with prostate cancer. Neither story has held up; the post listed here is my first on the topic of the virus.

An even older tumor... A tumor in a Neandertal (July 8, 2013).

More on prostate cancer: SyAMs: Synthetic drugs that act like antibodies (May 31, 2015).

More about metastatic cancer: Anti-oxidants and cancer? (October 18, 2015).

My page for Biotechnology in the News (BITN) -- Other topics includes a section on Cancer.

My page Internet resources: Biology - Miscellaneous contains a section on Medicine: history. It includes a list of some related posts.

Electronic devices that can work under water

November 7, 2011

Water and electricity don't mix. You know that. Getting an electrical device wet is likely to lead to a short circuit. Of course, we can make an electrical device waterproof by enclosing it in some container or case that keeps water out.

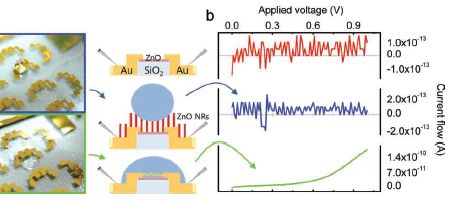

The question is, can we design an electrical device that is fundamentally resistant to water? A device that we could immerse in water with its innards exposed, and have it work just fine? A team of scientists claim to have done just that. Let's look...

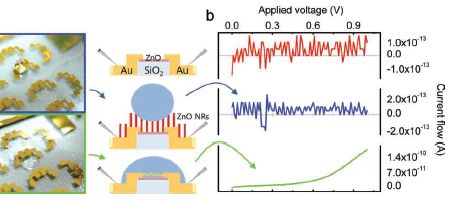

The figure here compares the regular device with their special non-wettable device. The figure is complex, so let's break it down, and go through it slowly.

The figure has three sections. From left to right, there are photos, diagrams, and graphs.

Let's start with the diagrams (middle). The top diagram shows the regular (unmodified) device, with no water. Some details are shown, but we don't need them here. The second diagram shows the modified device. The modification is the presence of the zinc oxide nano-rods (ZnO NR), the vertical red bars. The diagram also shows a water droplet, in blue. The bottom diagram is the original (unmodified) device, now with a water droplet. The diagrams show the different behavior of the water droplets; let's look at the data behind that difference.

The photos at the left show the water droplets on modified (top) and unmodified (bottom) devices. The photos show that the water wets the unmodified device (and therefore spreads out), but just sits on top of the modified device (without getting to the electrical contacts). If you can't see this clearly here, you might try the figure as shown in the pdf file, perhaps at high magnification.

More importantly, perhaps, the graphs at the right show an electrical measurement that reflects the water behavior. The y-axis of each frame shows the current across the device, as voltage is applied. There shouldn't be much -- unless the water causes a short. Look at the bottom frame -- for the wet device. As the voltage increases, the current increases -- rather clearly. The other two graphs look very different. It's easy to see that the current doesn't vary smoothly with the voltage applied. But to really appreciate the result, you need to look at the numbers on the y-axis for the three cases. The top two frames show currents around 10^-13 A (ampere). The bottom frame shows a current that goes up to about 10^-10 A; that is a thousand times more than in the top two. Thus we see that the bottom frame shows a substantial current, whereas the top two do not. The bottom device is obviously wet. The top one is obviously dry. But the middle one shows a drop of water, and yet the device remains -- functionally -- dry. Their modification of the device made it inherently water repellent -- or superhydrophobic, as they say.
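The "thousand times" comparison can be checked with a line of arithmetic. Here is a minimal Python sketch, using only the two approximate current values read from the graphs (about 10^-13 A and 10^-10 A). The variable names are mine, for illustration; they are not from the paper.

```python
import math

# Approximate currents read from the y-axes of the graphs (values quoted in the text).
dry_current_amps = 1e-13   # top two frames: no water, or water on the modified device
wet_current_amps = 1e-10   # bottom frame: water on the unmodified device

# How many times larger is the current through the wet, unmodified device?
ratio = wet_current_amps / dry_current_amps

# The same comparison expressed as orders of magnitude (powers of ten).
orders_of_magnitude = math.log10(ratio)

print(f"ratio: about {ratio:.0f}x")
print(f"orders of magnitude: {orders_of_magnitude:.1f}")
```

The values are rough readings of the axis scales, so the result is best taken as "about three orders of magnitude" rather than as a precise number.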

This is new work -- research. Will it turn out to be useful? They think their process is practical. We'll see.

News story: Waterproofing electronic nanodevices. (Nanowerk, October 5, 2011.)

The article: Overcoming The Water Vulnerability Of Electronic Devices: A Highly Water-Resistant ZnO Nanodevice With Multifunctionality. (S Lee et al, Advanced Materials 23:4398, October 11, 2011.) The figure shown above is part of Figure 1 of this paper.

More about hydrophobic materials...

* Nanotechnology leads to the development of a superoleophobic polypropylene -- and a better shampoo bottle (November 6, 2016).

* Water droplets on a trampoline (April 9, 2016).

* A box that will fold up upon command -- heat- or light-actuated switches (September 3, 2011). This discusses another example of the use of hydrophobic materials.

More about ZnO nanorods: Could vibration (or loud music) improve the performance of a solar cell? (December 11, 2013).

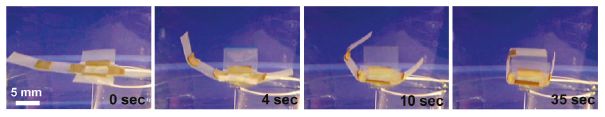

Wings for better walking

November 5, 2011

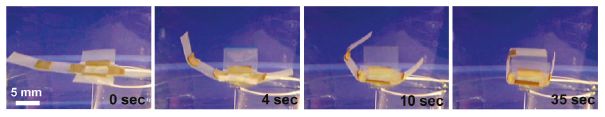

UC Berkeley's DASH has been fitted with wings.

DASH = Dynamic Autonomous Sprawled Hexapod. It's also known as the artificial cockroach.

This figure is from the authors' news story. It is probably the same as Figure 1a of the paper. From the figure legend in the paper: "Robot length (excluding the tail) is 10 cm; wingspan is 30.5 cm."

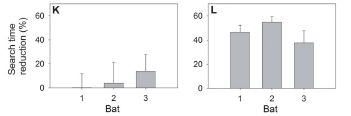

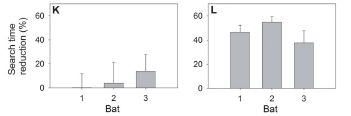

New work shows that the wings help DASH walk better. Let's look at some results.

The graph shows two types of measurements for the robot with flapping wings and for three control robots. Even before we get to the details, you can see that the robot with flapping wings (left set of bars) gives the highest results for both types of measurement (blue bars and red bars). In both cases, high is "good".

Each pair of bars is for one type of robot. The blue bars (y-axis scale on left) show the maximum speed the device could achieve running on a flat surface. The red bars (y-axis scale on right) show the maximum incline angle that the device could climb.

As noted, the left set of bars is for the winged robot, pictured above. The next set, labeled "legs-only", is for the "parental" robot, without wings. Simply comparing these first two sets gives the basic conclusion: wings help. For example, the winged robot can climb an incline of about 17°, whereas the legs-only robot can only climb an incline of about 6°.
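To put those two angles in more familiar terms, an incline angle can be converted to a percent grade (rise over run, times 100) using the tangent. Here is a small Python sketch of that conversion. The function name is hypothetical, and the angles are the approximate values read from the graph, not exact figures from the paper.

```python
import math

def percent_grade(angle_degrees: float) -> float:
    """Convert an incline angle in degrees to a percent grade (100 * rise / run)."""
    return 100.0 * math.tan(math.radians(angle_degrees))

# Approximate maximum incline angles quoted in the text.
winged_angle = 17.0      # robot with flapping wings
legs_only_angle = 6.0    # "parental" robot, without wings

for label, angle in [("winged", winged_angle), ("legs-only", legs_only_angle)]:
    print(f"{label}: {angle:.0f} degrees is about a {percent_grade(angle):.0f}% grade")
```

By this measure, the winged robot handles roughly a 31% grade, compared with roughly 11% for the legs-only robot.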

The other two sets of bars are for two more controls. "Inertial spars" means that only the metallic framework of the wings is present; this framework accounts for most of the wings' weight. And "passive wings" means the same winged robot, but with the wings turned off (not flapping). Both of these controls give results similar to the "legs-only" case. Pictures of all the robots are in the paper, and at the authors' web site. Videos showing many of the results are available.

This is Figure 4 of the paper.

The main implications of this work are direct: the role of wings in helping a robot walk. The paper also addresses the biological question of how wings might have arisen. What good were primitive wings before they were capable of flight? This part of the paper seems to be an afterthought, but those interested can read that story. (Another suggested role for primitive wings is as heat radiators.)

Videos:

There is a set of three videos that accompany this work. There is one for each of the two effects discussed above, and one showing that the wings stabilize the walking robot against roll instability. The videos are less than one minute each, and are very nice. They are available at the author site listed below, well-labeled. They are also available with the article at the journal site, under "Supplementary data"; unfortunately, these files have uninformative names -- but they seem to be the same movies.

News stories:

* Robotic Bug Gets Wings, Sheds Light On Evolution of Flight. (Science Daily, October 17, 2011.)

* Publicity information for: K. Peterson, P. Birkmeyer, R. Dudley, R. S. Fearing, A wing-assisted running robot and implications for avian flight evolution, Bioinspiration and Biomimetics, October 18, 2011 6 046008. Author site. Good source for pictures and the movies.

The article, which may be freely available: A wing-assisted running robot and implications for avian flight evolution. (K Peterson et al, Bioinspiration & Biomimetics 6:046008, October 17, 2011.)

Most recent post on robots: Berkeley Bionics: From HULC to eLEGS -- Follow-up (July 26, 2011).

More on wings:

* Introducing Supersonus -- it stridulates at 150,000 Hz (June 16, 2014).

* Butterflies and UV vision (June 29, 2010).

More on walking... How horses learned to walk (September 21, 2016).

A book about flying -- and therefore about wings -- is listed on my page Books: Suggestions for general science reading. Alexander, On the Wing -- Insects, pterosaurs, birds, bats and the evolution of animal flight (2015).

More on DASH: Acrobatic cockroaches inspire robot design (September 16, 2012).

See my Biotechnology in the News (BITN) topic Bio-inspiration (biomimetics). It includes a listing of Musings posts in the area.

November 2, 2011

Quiz: The monkey-cat -- answer

November 2, 2011

I have updated the original post to include the "answer", with source information. Go to the original post: Quiz: The monkey-cat (October 26, 2011).

An old eye

November 1, 2011

An eye. A compound eye, typical of the eye of an arthropod (such as an insect or crustacean).

This one is about 515 million years old.

The figure is from the news story listed below. It seems to be a version of Figure 1a from the paper. The figure width represents about 6 millimeters.

The eye here is comparable in complexity to that of modern arthropods. The key is to look at the ommatidia, the individual vision units; these are seen as individual "dots", in a regular pattern. With the compound eye, the number of these ommatidia is an indicator of how good the eye is. The eyes found in this study have around 3000 ommatidia.