Musings is an informal newsletter mainly highlighting recent science. It is intended as both fun and instructive. Items are posted a few times each week. See the Introduction, listed below, for more information.

If you got here from a search engine... Do a simple text search of this page to find your topic. Searches for a single word (or root) are most likely to work.

Introduction (separate page).

This page:

2019 (May-August)

August 28

August 21

August 14

August 7

July 31

July 24

July 17

July 10

July 3

June 26

June 18

June 12

June 5

May 29

May 22

May 15

May 8

Links to external sites will open in a new window.

Archive items may be edited, to condense them a bit or to update links. Some links may require a subscription for full access, but I try to provide at least one useful open source for most items.

Please let me know of any broken links you find -- on my Musings pages or any of my web pages. Personal reports are often the first way I find out about such a problem.

August 28, 2019

Pavlovian plants: a follow-up. In an earlier post, we noted an article suggesting that plants could show Pavlovian responses. Pea plants learned to associate a fan with food, and would grow toward the fan even if that path did not lead to food. It's controversial work. Some use such work to suggest that plants have consciousness. Others object to that interpretation. We now have an "opinion" article from a group of skeptics. As before, I encourage people to try to understand the experiments that have been done, and the basis of the differing views. Understanding nature is good; semantic debates may not be so good. The goal here is to stimulate discussion and understanding, not to reach a verdict.

* News story: Botanists Say Plants Are Not Conscious. (C-Y Hou, The Scientist, July 5, 2019. Now archived.)

* The "opinion" article: Plants Neither Possess nor Require Consciousness. (Lincoln Taiz et al, Trends in Plant Science 24:677, August 2019.)

* Background post: Can a plant learn to associate a cue and a reward? (March 3, 2017). The article discussed in this background post is reference 29 of the current article. The current authors discuss the earlier work at some length. They note an attempt to reproduce the earlier work, which is not yet published, and which seems to have yielded complex results.

August 27, 2019

4.4 x 10^20.

How many worms are there on Earth? Perhaps you have wondered about it. Now we have a number. To be more specific, it is an estimate of the number of nematode worms in the top layer (15 cm) of soil. Nematodes, commonly called round worms, are the most abundant animals on Earth (they are tiny).

Here is a map showing where they are...

The map shows the density of nematode worms, in number per 100 grams of soil. That density is color-coded; see the color bar. Light colors are for high densities (as many as 19,000 worms per 100 grams soil); dark colors are for low densities (as low as 100 worms per 100 g soil -- which is one worm per one gram soil). (Does anyone else find that color coding "backwards"?)

The map shows worm densities. It's a little math to get from the densities to a worm count. This is Figure 3 from the article.

How did the scientists get that? Well, it's an estimate. They sampled a number of locations, then developed a computer model that allowed them to calculate an expected value for every location on Earth.

They sampled 6,759 locations, as summarized on the following map...

The figure shows the results from their samples. As before, there is a color code to show the worm density at each site. Note that the color code here is very different from the one in the top figure.

This is Figure 1a from the article.

What do we learn from all this? The top map shows a striking pattern. There is quite a north-south gradient in soil nematode prevalence on Earth. That is unusual; in general, animals are more abundant in the tropics.

There are some exceptions and special cases. South America seems to have its own east-west gradient of nematodes. And New Zealand's prevalence of soil nematodes seems odd. The level of soil organic carbon (SOC) is one factor that correlates with nematode abundance, but there is much more to be learned.

The scientists actually did more than just count the nematodes in their samples. They classified them, mainly by what type of food the worms ate. So the article contains data about the prevalence of different types of nematodes.

One thing scientists do is to measure things. Count things. The work provides some basic numbers about Earth that we did not know before. It's a step toward understanding the complex biology of soil. And it satisfies our curiosity.
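The "little math" mentioned above can be sketched roughly as follows. This is only an illustration of the unit conversion, not the authors' model. The soil bulk density and the world population figure (about 7.7 billion in 2019) are assumptions I have added; the 15 cm depth and the 4.4 x 10^20 total are from the post.

```python
# Sketch: from a nematode density (worms per 100 g soil) to a worm count.
# ASSUMPTIONS (not from the article): the bulk density value and the
# world population figure are illustrative round numbers.

TOPSOIL_DEPTH_M = 0.15          # top layer of soil considered (15 cm)
BULK_DENSITY_G_PER_CM3 = 1.3    # ASSUMED soil bulk density


def nematodes_in_patch(density_per_100g, area_m2,
                       depth_m=TOPSOIL_DEPTH_M,
                       bulk_density=BULK_DENSITY_G_PER_CM3):
    """Estimate the number of worms in a patch of topsoil."""
    volume_cm3 = area_m2 * depth_m * 1e6       # 1 m^3 = 1e6 cm^3
    soil_mass_g = volume_cm3 * bulk_density    # grams of soil in the patch
    return density_per_100g / 100.0 * soil_mass_g


def nematodes_per_human(total_nematodes=4.4e20, world_population=7.7e9):
    """Ratio of the global estimate to an ASSUMED world population."""
    return total_nematodes / world_population
```

A global count would come from summing such patch estimates over the whole map. With the assumed population, `nematodes_per_human()` comes out near 57 billion, in line with the headline of one of the news stories listed below.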

News stories:

* Global study of world's most abundant creatures published today in Nature. (Asian School of the Environment, Nanyang Technological University, July 31, 2019.)

* There are 57 billion nematodes for every human on earth; Understanding them will help address climate change. (T Hollingshead, BYU, July 31, 2019.)

* News story accompanying the article: Ecology: Global maps of soil nematode worms. (N Eisenhauer & C A Guerra, Nature 572:187, August 8, 2019.)

* The article: Soil nematode abundance and functional group composition at a global scale. (J van den Hoogen et al, Nature 572:194, August 8, 2019.)

More nematodes:

* Engineering a worm to treat cancer (July 23, 2022).

* What can we learn from 17,000-year-old cat feces? (September 16, 2019).

* How to avoid cannibalism (May 25, 2019).

* Extending lifespan by dietary restriction: can we fake it? (August 10, 2016). A post about a nematode that is a workhorse of lab research, Caenorhabditis elegans.

* How does worm "fur" divide? (January 4, 2015).

* Why would a plant have leaves underground? (January 21, 2012).

Other counts include...

* Briefly noted... How many ants are there? (December 10, 2022).

* Counting trees on Earth from space -- at one-tree resolution (January 12, 2021).

An attempt to count everything: The ultimate census: the distribution of life on Earth (June 22, 2018). The article of this earlier post is reference 36 of the current article. The current authors note that their estimate of global nematode biomass is considerably higher than estimated just a year ago in the broader survey.

August 26, 2019

Almonds are a major food crop. But the "natural" form of almonds is not so good. Very bitter, and quite poisonous. Both of those features are due to a chemical called amygdalin. When we ingest amygdalin, our metabolism releases hydrogen cyanide from it. Turning almond into an important human food required domestication. We have limited knowledge about the history of almonds.

A recent article reports sequencing the genome of almonds: ancient "bitter" almonds and modern "sweet" almonds. Interestingly, the scientists find a single mutation that is responsible for this one important change.

The following figure shows how amygdalin is made in almonds. It also shows the levels of the relevant enzymes in sweet and bitter almonds.

The central part of the figure shows the biochemical pathway for making amygdalin. It starts with the standard amino acid phenylalanine (top). The top two steps lead to a nitrile group (also called cyano; -CN) at the right. The next two steps add two sugars, leading to amygdalin (bottom).

The genes for the enzymes that carry out those steps are all known. What is shown here is the level of those enzymes in the two types of almond. Actually, what is measured here is the level of the messenger RNA for the enzymes; that is often (but not always) usefully related to the enzyme level. That level is shown by the color-coded boxes; there is a color key at the left, labeled FPKM. You can see that the boxes for the last two steps are about the same for the two almond strains. But for the first two steps, the bitter almonds have red boxes, and the sweet almonds have blue boxes. Check the color code, and you see that the sweet almonds have very low levels of the enzymes for the first steps in making amygdalin.

This is Figure 2 from the article.

That figure tells us the biochemical difference between bitter and sweet almonds. But what is the genetic difference behind that biochemical difference?

In the new work, the scientists sequenced the genomes for bitter and sweet almonds. Of particular interest, they looked at the genome sequences for the region thought to contain the mutation leading to sweet almonds. It showed differences in a group of transcription factors: proteins that help choose which genes get transcribed into messenger RNA.

There are five such transcription factor genes in that group. The scientists tested each one, and found that one of them was responsible for transcribing the genes for the first two steps shown above. The specific genetic change they found for that gene explained the biochemical difference. That genetic change, it would seem, was a key step in domesticating the almond.

Knowing this genetic change may help scientists uncover the history of the almond. They may be able to test whether archeological samples of ancient almonds were bitter or sweet -- if they can get some almond DNA from the sample.

Miscellaneous...

You may have heard of amygdalin. It is a component of apricot seeds. We don't eat apricot seeds, but the amygdalin has gotten attention (because of a claim, probably incorrect, that it is useful in treating cancer). Interestingly, the almond and apricot are closely related. Others in the family include apple, peach and cherry. For most of those, we eat the flesh of the fruit. The almond is the only one where we normally eat the seed itself.

The article notes that "death by peach", using the seeds, was practiced in ancient Egypt as a form of capital punishment. The article provides references.

This is a local-interest story. A California story. About 80% of the world's commercial almond crop is grown in California. (The article is from universities in Europe.)

News stories:

* Almond Genome Sequenced. (Sci.News, June 17, 2019.)

* Sequencing the almond reveals how it went from bitter to sweet. (B Yirka, Phys.org, June 17, 2019.)

* Danish researchers unravel how toxic almonds became edible. (University of Copenhagen, June 17, 2019. Now archived.)

The article: Mutation of a bHLH transcription factor allowed almond domestication. (R Sánchez-Pérez et al, Science 364:1095, June 14, 2019.)

Another story of cyanide poisoning from a food crop: Briefly noted... Cassava poisoning. (April 24, 2019).

The enzymes for the first two steps in making amygdalin (the two enzymes that are reduced in the sweet strain) are cytochrome P450 oxidases. Another post about this class of enzyme: Reconstructing an ancient enzyme (February 26, 2019).

More cyanide, in a more constructive role: Modeling the role of hydrogen cyanide in the pre-biotic formation of life's chemicals (September 8, 2019).

Other domestication posts include...

* Will a wolf puppy play ball with you? (February 7, 2020).

* The oldest known dog leash? (January 23, 2018).

* What can we learn from a five thousand year old corn cob? (March 21, 2017).

Other California posts include...

* Formation of the Moon: the California connection (October 10, 2014).

* Groundwater depletion in the nearby valley may be why California's mountains are rising (June 20, 2014). There is even an almond connection here... The almond crop is water-intensive; the increasing growth of almonds in California is exacerbating water problems.

There is more about genomes on my page Biotechnology in the News (BITN) - DNA and the genome. It includes an extensive list of related Musings posts.

August 24, 2019

Can we predict that an organ failure is imminent, so that we can introduce treatment before the crisis?

Can AI (artificial intelligence) predict that an organ failure is imminent?

A recent article reports some progress using AI to predict kidney failure. As often with AI, the article has provoked controversy. The goal here is to give an idea of what the scientists tried to do, what they accomplished, and why there are reservations.

The following figure serves to frame the discussion.

Both parts of the figure show some parameter plotted against time (days in the hospital).

Part a (top) shows some actual data for one patient. The parameter plotted is blood level of the chemical creatinine. The level is (apparently) stable for a while, then starts to rise on day 4. About two days later, the creatinine level rises dramatically: AKI (acute kidney injury). It is the kidneys' job to clear excess creatinine from the blood; high creatinine level is a marker for kidney failure. But that early blip (day 4) is not a reliable predictor; by the time creatinine rises significantly, the damage has been done.

Part b (bottom) shows how the AI model developed in the article works. What's plotted on the y-axis is a probability value (green line) from the AI system: the probability (p) that AKI will occur (within 48 hours). The red line marks a threshold (p = 0.2), which turns out to be a useful predictor. You can see that the p value is low for a while, then reaches 0.2 at day 4. A warning. Two days later, p shoots up, reflecting the kidney failure.

This is part of Figure 1 from the article.

What is the basis of the AI prediction? Well, we don't know. The computer figured it out after being trained on a large data set from hospital records. We don't know what it did. (It does report the reasons for each specific prediction it makes.)

How well does it actually work? Let the authors speak for themselves. Here are two sentences from near the end of the abstract...

Our model predicts 55.8% of all inpatient episodes of acute kidney injury, and 90.2% of all acute kidney injuries that required subsequent administration of dialysis, with a lead time of up to 48 h and a ratio of 2 false alerts for every true alert. ... Although the recognition and prompt treatment of acute kidney injury is known to be challenging, our approach may offer opportunities for identifying patients at risk within a time window that enables early treatment.

Is that good? Perhaps that is not the right question. It is a development. We do not yet know what such a system can ultimately do. That is for future work.

The work reported in this article was based on electronic records of past patients. About 700,000 of them. A portion of the available data set was used for training the computer. The resulting proposed algorithm was then tested on a separate subset of the data. It has not been tested "live".
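To make the numbers above a bit more concrete, here is a minimal sketch of two ideas: raising an alert when a risk score first reaches a threshold (p = 0.2 in the figure), and what "2 false alerts for every true alert" means for the fraction of alerts that are correct. The function names and the example risk values are hypothetical; this says nothing about how the model itself computes p.

```python
# Sketch only: threshold alerting and alert precision.
# The risk values used in any example call are made up for illustration.

def first_alert_index(risk_scores, threshold=0.2):
    """Return the index of the first score at or above the threshold,
    or None if the threshold is never reached."""
    for i, p in enumerate(risk_scores):
        if p >= threshold:
            return i
    return None


def precision_from_false_to_true_ratio(false_alerts_per_true_alert):
    """Fraction of all alerts that are true alerts."""
    return 1.0 / (1.0 + false_alerts_per_true_alert)
```

With the ratio quoted in the abstract, `precision_from_false_to_true_ratio(2)` gives one third: about one alert in three is a true one. That is a separate measure from the 55.8% figure, which is the fraction of AKI episodes that the model catches.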

News stories. As you read these news stories, note the range of views and questions.

* Using AI to predict acute kidney injury. (B Yirka, Medical Xpress, August 1, 2019.)

* Kidney Injury and Artificial Intelligence Still Not Ready for Prime Time. (C Dinerstein, American Council on Science and Health (ACSH), August 5, 2019.)

* Google's DeepMind follows a mixed path to AI in medicine -- The DeepMind unit of Google is finding ways to detect deterioration in patients in hospitals, but it's not ready for primetime; instead, the software that's actually making a difference is a simple mobile alerts app. (T Ray, ZDNet, August 2, 2019.) More of a computer perspective here.

* Using AI to give doctors a 48-hour head start on life-threatening illness. (M Suleyman & D King, DeepMind, July 31, 2019.) From the AI company doing the work. The authors of this page are two of the authors of the article.

* Expert reaction to study on a deep learning approach to predicting acute kidney injury. (Science Media Centre, July 31, 2019.) As usual from this source, a range of opinions from knowledgeable people.

* News story accompanying the article: Medical research: Deep learning detects impending organ injury. (E J Topol, Nature 572:36, August 1, 2019.)

* The article: A clinically applicable approach to continuous prediction of future acute kidney injury. (N Tomašev et al, Nature 572:116, August 1, 2019.)

There are two additional articles published recently (July 2019) on related issues. The authorship is overlapping among the three articles. The additional articles are freely available. The ZDNet and DeepMind news stories link to both of them. (The ACSH story refers to one of them, but has an incorrect DOI for it.) This post does not discuss either of these other articles, but they are possibly of interest to some readers.

* * * * *

Among posts on artificial intelligence...

* Help design a new alphabet (March 1, 2016).

* Robots that can quickly adapt to disabilities (June 23, 2015).

Does AI always mean artificial intelligence? Apparently not... When rivers (or streams) join, what is the preferred angle between them? (April 18, 2017).

Among kidney posts...

* Kidney regeneration in small rodents (December 14, 2021).

* WAK: Early clinical trial is encouraging (July 1, 2016). Links to more.

August 21, 2019

Two related items. They deal with the recently discovered group of archaea known as Asgard, which have been suggested to be a key link between prokaryotes and eukaryotes. The first is a news feature, with a general overview of the Asgard story. The second is a preprint of a specific new finding.

1. An update on the Asgards (and Lokis). (Asgard is a superphylum; Lokiarchaeota is a phylum within Asgard.) A news feature gives a nice overview.

* News feature: The trickster microbes that are shaking up the tree of life -- Mysterious groups of archaea - named after Loki and other Norse myths - are stirring debate about the origin of complex creatures, including humans. (T Watson, Nature News, May 14, 2019. In print: Nature 569:322 May 16, 2019.)

2. Asgard in culture. So far, the Asgards have been known only from metagenomics: accumulated DNA sequences that imply an organism. We now have the first report of an Asgard being grown in lab culture. This is a significant development, regardless of how we end up classifying these novel microbes.

* News story: Elusive Asgard Archaea Finally Cultured in Lab -- The 12-year-long endeavor reveals Prometheoarchaeum as a tentacled cell, living in a symbiotic relationship with methane-producing microbes. (N Lanese, The Scientist, August 12, 2019. Now archived.) Links to the article, which is freely available as a pre-print.

* The article has now been published; it is discussed in the post: An Asgard in culture (February 4, 2020).

Background post... Our Loki ancestor? A possible missing link between prokaryotic and eukaryotic cells? (July 6, 2015). Links to more.

August 20, 2019

Hematopoietic stem cells (HSC) are stem cells of the blood-forming system. They are useful therapeutically as well as for research.

Supplying HSC in large numbers is still difficult. A new article reports some progress, for mouse HSC.

The following figure shows the idea...

Part a (left) shows the experimental design, at least in part. Stem cells were isolated from a donor mouse at the left. For the main part of the experiment, a very small number of such cells (50) were taken and "expanded" -- grown in lab culture for about a month to increase the number of cells. The colored pot in the middle shows the expansion step.

The expanded population of stem cells was then injected into the recipient mouse, at the right. The labeling of the two mice, which you need not follow, shows that the two mice are different. That difference can be tracked in the next stage of the experiment, using antibodies specific for the markers from each strain.

Part b (right) shows the growth of the donor cells over time in the recipient mouse. The y-axis is a measure of the growth of the donor stem cells that were added. (It is labeled PB chimerism (%). PB = peripheral blood. Chimerism reflects that there are two kinds of cells, one of which is the kind we want, from the donor.) There are two data sets. In one case, the donor cells had been "expanded", as shown in part a. In the other case, "fresh" HSC, isolated from the donor and used without expansion, were injected. You can see that essentially nothing happened with the fresh donor cells (dark symbols). However, with the expanded donor cells (light symbols), their abundance increased over time.

This is part of Figure 4 from the article.

That is, the expanded donor cells worked. But there is more you need to know to understand the significance. Did they add the same number of cells in both cases? No. They added very few fresh cells, but a large number of expanded cells. Why? Because "very few" fresh cells is all they had. It is the expansion step that gave them enough cells so that the donor cells could "take".

One more point... The label of the recipient mouse says it is "nonconditioned". It is common when adding donor stem cells to first "condition" the recipient. For example, radiation treatment of the recipient destroys the recipient's own blood-forming system. That allows a small number of fresh cells to work. But the conditioning itself is a dangerous step. The point here is that the expansion step allows the use of recipients that have not received this conditioning treatment.

How did the scientists figure out the conditions that allowed good expansion? It was largely trial and error. Here is an example...

In this experiment, they tested two growth factors, each at four concentrations. All possible combinations. The numbers show the results. For convenience, they also color-coded the numbers; see the scale at the bottom. Red is best; you can quickly see that one particular combination gave a "best" result (brightest red).

This is Figure 1a from the article.
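The "all possible combinations" design described above is what is often called a full factorial grid: two factors at four levels each gives 16 conditions. A small sketch of the bookkeeping follows. The concentration values and scores one might feed in are hypothetical placeholders, not data from the article.

```python
# Sketch: enumerating a two-factor grid and picking the best-scoring condition.
# Any concentration levels or scores passed in are hypothetical.
from itertools import product


def full_factorial(levels_a, levels_b):
    """All (a, b) combinations of the two factors' levels."""
    return list(product(levels_a, levels_b))


def best_condition(results):
    """Given {(a, b): score}, return the condition with the highest score."""
    return max(results, key=results.get)
```

For example, `full_factorial([1, 2, 3, 4], [1, 2, 3, 4])` lists the 16 conditions to test; once each has a measured score, `best_condition` picks out the "brightest red" cell.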

One aspect of the development does deserve a comment. When cells are grown in the lab, it is common that one ingredient is the protein serum albumin. Unfortunately, albumin is something of a problem, even with carefully produced recombinant forms. Lots of things stick to it, and it ends up being a source of contaminating ingredients. In the current work, the scientists were able to replace the serum albumin -- with a rather simple chemical, polyvinyl alcohol.

Will the developments reported here work for human HSC? That needs to be tested. For now, this work mainly benefits those who work with mouse HSC for research. But it will motivate and guide people to try something similar for human HSC.

News stories:

* Radiation-free stem cell transplants, gene therapy may be within reach. (Medical Xpress (K Conger, Stanford University Medical Center), May 30, 2019.)

* Blood stem cell breakthrough could make treatments safer and more effective. (Blood Cancer UK, May 30, 2019.) (The organization behind this site partially funded the work reported here.)

The article: Long-term ex vivo haematopoietic-stem-cell expansion allows nonconditioned transplantation. (A C Wilkinson et al, Nature 571:117, July 4, 2019.)

A post about a person who seems to have run out of HSC: A 115-year-old person: What do we learn from her blood? (November 18, 2014).

My page Biotechnology in the News (BITN) for Cloning and stem cells includes an extensive list of related Musings posts.

August 19, 2019

If the room you are in is too hot or cold, you adjust the setting on the air conditioner or heater. The room reaches a more desirable temperature.

Wouldn't it be more efficient to just adjust your personal temperature, rather than the whole room?

Doing that when heating is needed is relatively straightforward; an ordinary jacket may suffice. But doing it when cooling is needed is not so easy. A recent article reports a single device that can do both.

Look...

Part A, the photo at the left, shows the device on a person's arm. It is labeled TED = thermoelectric device. (The white square contains the actual device. The blue is the armband.)

Part B (right) shows some results. The frames of Part B are for six different starting temperatures -- the air temperature (Tair). Each frame shows temperature vs time.

Let's look at the first one in detail. The black line near the bottom is labeled Tair. For the first frame, it was set to 22 °C -- and held constant. The colored curve is Tskin. In this case, it started at about 28 °C for the first few minutes; that reflects the natural response of the person to the air T. Then, at about 5 minutes, TED was turned ON. Tskin rose, quite rapidly, to 32 °C. 32 °C was chosen here as the desired T, or "set point" for the device. The top of the frame is labeled TED OFF and TED ON. The arrows refer to the two sides of the graph, unshaded to the left and shaded to the right, respectively.

The six frames differ in Tair; it ranges from 22 °C to 36 °C. In each case, after turning TED ON, Tskin quickly became 32 °C. That required TED to heat the arm in the first three cases, and to cool the arm in the last three.

This is part of Figure 5 from the article.

That is, the TED was able to rapidly provide the desired T given air temperatures over a range of 14 Celsius degrees, heating or cooling as needed. (Without the device, the person could maintain the desired T over only about a 2 degree range.)

So what is this little device? What does thermoelectric (TE) mean?

As the name might imply, a TE device involves heat and electricity. In the device shown above, an electric current is used to pump heat from one side to the other. Switch the direction of the current, and you switch the direction of heat transfer. That's from the skin or to the skin, in this case. The TE (or "Peltier") effect is well known to physicists, but probably unfamiliar to many people; it takes advantage of an unusual combination of properties in some materials, and is otherwise not easy to explain.
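The idea that one device heats or cools depending on the direction of the current can be sketched as a simple set-point rule. This is only an illustration of the logic; the post does not describe the actual control scheme, and the deadband value and the sign convention here are my assumptions. The 32 °C set point is from the figure above.

```python
# Sketch: choosing heat vs cool from the skin temperature and a set point.
# ASSUMPTIONS: the deadband and the current sign convention are illustrative.

def ted_mode(t_skin_c, set_point_c=32.0, deadband_c=0.5):
    """Return 'heat', 'cool', or 'idle' for the given skin temperature."""
    error = set_point_c - t_skin_c
    if error > deadband_c:
        return "heat"    # skin is too cold: pump heat toward the skin
    if error < -deadband_c:
        return "cool"    # skin is too warm: pump heat away from the skin
    return "idle"


def current_direction(mode):
    """Map a mode to a current sign (+1 heats, -1 cools, 0 is off)."""
    return {"heat": 1, "cool": -1, "idle": 0}[mode]
```

Reversing the sign of the current is the whole trick: the same hardware serves both the cold-room and warm-room cases.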

The principle of TE devices is fine; making them practical is another matter. The technical progress in the current work was the internal design of the TED, so that the heat was efficiently removed to the outside world. (The device contains no "heat sink".) Importantly, the device was made to be flexible, as needed if it is to effectively become an article of clothing.

Part A (left) shows a diagram of the device. You can see its open and flexible structure.

Part B (right) shows a photograph of the device. It is about 5 cm on a side, and less than 1 cm thick. See scale bar.

This is part of Figure 1 from the article. The left side, which I labeled "A" here, is actually one part of Figure 1A in the article.

The results in the top figure show cooling of 4 C°. Other work shows that the device can provide 10 degrees cooling. Of course, the power required increases for the bigger effect.

The TE is fundamentally simple, and well-suited to small devices. In general, TEDs are long-lived; after all, they have no moving parts. Time will tell whether the implementation here is robust. Battery lifetime is an issue, and will depend on how much skin you want to affect, as well as on the T change. Of course, if you are inside, you could plug it in; as we noted at the outset, air conditioning yourself rather than the whole room is a motivation for the work.

News stories:

* Researchers Develop Wearable Cooling and Heating Patch. (Sci.News, May 20, 2019.)

* Wearable Patch Can Regulate Body Temperature. (E Montalbano, Design News, June 14, 2019. Now archived.)

The article, which is freely available: Wearable thermoelectrics for personalized thermoregulation. (S Hong et al, Science Advances 5:eaaw0536, May 17, 2019.) The Introduction section provides a nice overview of how "personalized thermoregulation" may be useful, and summarizes previous efforts to achieve it.

The Wikipedia page on "Thermoelectric cooling" seems useful. It includes good discussions of the pros and cons of TE. However, don't expect it to lead to good understanding of how it works.

* * * * *

More about flexible electronics: Supercapacitors in the form of stretchable fibers -- suitable for clothing (May 2, 2014).

More things you might wear...

* An ultrasound device you can wear (September 17, 2022).

* Brain imaging, with minimal restraint (June 2, 2018). Check the picture.

* Using your sunglasses to generate electricity (August 14, 2017).

A different application of thermoelectrics... A step toward a practical thermoelectric converter: get the oxide out (October 11, 2021).

Also see: Using ultrasound to recharge an implanted medical device (October 19, 2019).

There is more about energy issues on my page Internet Resources for Organic and Biochemistry under Energy resources. It includes a list of some related Musings posts.

August 17, 2019

A recent article claims that people have more fabellae now than they used to -- a hundred years ago.

Here is the data summary from the article...

The graph shows the prevalence of fabellae in people over time, for about the last century.

Prevalence is shown (y-axis) as the fraction of knees with a fabella. Each point on the graph is for one reported set of measurements in the scientific literature. The dark line shows a best-fit; the shaded regions show the confidence intervals, at various levels of confidence.

There are two main observations:

* The prevalence of fabellae seems to be increasing over time.

* At any particular time, reports of fabella prevalence vary widely.

This is Figure 4 from the article.

So, what are fabellae? Here are pictures of some human knee joints, each of which has a fabella. It may help you to know that the word fabella means "little bean".

Each picture is for the right knee of an adult human female.

Each knee here has a fabella. (You did find the "little bean"?) The article identifies these fabellae as large, medium, small (left to right). That is, the range of sizes shown here is intended to be instructive.

This is Figure 1 from the article.

That's the "what". You probably have more questions. In general, the answer is, we don't know. It's that kind of topic.

There are methodological questions, especially about the older reports. The authors report that the prevalence rates of other, similar (sesamoid) bones have not increased over the same time period; that provides something of an internal control suggesting that the trend is real. In any case, the high variability of the numbers in the modern reports would seem to stand.

One more fact that is particularly interesting... An individual may have 0, 1 or 2 fabellae. That is, development of fabellae on the two knees of a person seems to be (at least partially) independent.
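What would full independence between the two knees look like? Here is a small sketch, under the simplifying assumption (mine, not the article's) that each knee has the same chance of carrying a fabella and that the two knees are completely independent. The post says only that they seem at least partially independent, so real data could well depart from this.

```python
# Sketch: distribution of 0, 1 or 2 fabellae per person.
# ASSUMPTION: both knees share one per-knee probability and are fully independent.

def fabella_count_distribution(p_knee):
    """Return {number of fabellae: probability} for one person."""
    q = 1.0 - p_knee
    return {
        0: q * q,              # neither knee
        1: 2.0 * p_knee * q,   # exactly one knee (left or right)
        2: p_knee * p_knee,    # both knees
    }
```

Comparing such expected fractions with observed counts of people having 0, 1 or 2 fabellae is one way to judge how independent the two knees really are.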

What causes the variation -- over time and between knees? There may well be genetic underpinnings, but the rapid changes in fabella prevalence reported here cannot be due to genetic changes. That implies environmental influence. What? The authors suggest it may be due to people getting bigger, thus altering the forces in the developing joint system. That's just speculation, but is an example of something that can be studied further.

The fabella seems to have come and gone before during the evolutionary history of primates.

An odd little article, about an odd topic. It involves interesting methodological issues, and it focuses us on an aspect of human variation that is probably unfamiliar. Does it matter? There is some evidence linking the presence of fabellae to knee problems, including arthritis. Not a strong case, but a clue to follow.

News stories:

* Tiny Knee Bone, Once Lost in Humans, Is Making a Comeback -- The fabella disappeared from our lineage millions of years ago, but over the last century, its presence in people's knees has become more common. (J Akst, The Scientist, April 19, 2019.) Now archived.

* Mystery arthritis-linked knee bone three times more common than 100 years ago. (C Brogan, Imperial College London, April 17, 2019.) From the university.

The article, which is freely available: Fabella prevalence rate increases over 150 years, and rates of other sesamoid bones remain constant: a systematic review. (M A Berthaume et al, Journal of Anatomy 235:67, July 2019.)

Among the questions about the fabella... what is the proper plural? Amusingly, the authors use both -e and -s within this article. The Wikipedia page avoids the point, though making clear that the term comes from Latin.

The term sesamoid bones is used here. It refers to bones in tendons and muscles. The term comes from their common size; think "sesame seed".

* * * * *

Posts about knees include...

* Can we predict whether a person will respond to a placebo by looking at the brain? (February 21, 2017).

* Using your nose to fix knee damage (January 28, 2017).

August 14, 2019

Two related items, about brain-computer interfaces (BCI). The first is a report from Elon Musk's new company in the field. The second is a brief overview of the challenges in the field. Thanks to Borislav for sending the first item; the second showed up in looking for information about the first.

1. Elon Musk -- and the brain-computer interface. A new Musk company, called Neuralink, made a big splash recently by announcing some progress... They have developed miniaturized brain-implantation devices with ten times more electrodes than those used previously, and a robotic surgical technique for inserting them.

* News story: Elon Musk's Neuralink Says It's Ready for Brain Surgery. (A Vance, Bloomberg, July 16, 2019. Now archived.)

* The article, which is freely available: An integrated brain-machine interface platform with thousands of channels. (Elon Musk & Neuralink, July 16, 2019.) It is posted at a preprint server. There is no indication that any peer review or further publication is planned. The article is described as a white paper. There is nothing wrong with this; it's just that you should realize that the work has not been given any external review. Other materials you will find should generally be taken as promotional.

Update December 16, 2021... The article has been published; it is labeled "white paper". Here is a direct link to the published article...

* The article, which is open access: An Integrated Brain-Machine Interface Platform With Thousands of Channels. (Elon Musk, Journal of Medical Internet Research 21:e16194, October 31, 2019.) Links to some comments (see first page).

* and one more news story: Neuralink's Technology Is Impressive. Is It Ethical? -- Elon Musk's audacious vision of a smartphone-controlled brain-machine interface comes with potential risks. (Andrew Maynard, Medium, July 23, 2019.)

2. The BCI challenge. A brief but interesting "opinion" article about the field came out in late 2018. It emphasizes the emerging role of tech entrepreneurs. It mentions Neuralink, but without specifics.

* The article, which is freely available: Silicon Valley new focus on brain computer interface: hype or hope for new applications? (S Mitrasinovic et al, F1000Research 7:1327, First published: August 21, 2018.) It is posted with reports by three reviewers (in a process sometimes called open review).

* Recent BCI post: Using a brain-computer interface to direct synthetic speech (July 16, 2019).

* More about brains is on my page Biotechnology in the News (BITN) -- Other topics under Brain. There is a list of related Musings posts.

August 13, 2019

A recent article makes a connection between two lines of work on Alzheimer's disease (AD). One is the common story of a small peptide called amyloid-beta (Aβ or AB). Aβ is a fragment cleaved off a larger normal protein. Aggregated forms of Aβ are suspected to be an important part of the disease process. However, attempts at therapy based on this model have, so far, failed. The second line of AD work here is one we usually hear less about, though it has been noted for decades: people with AD have reduced blood flow to the brain, a vascular deficiency.

The new article provides evidence that the Aβ peptide causes vascular deficiency.

The first figure shows the idea, based on lab testing...

|

In this test, human brain slices were examined in the lab.

In the photos at the left, there is a capillary running horizontally across the figure. Look at the red bars near the right end of each capillary. Each red bar marks the diameter of the capillary at that site. The top red bar is clearly longer than the bottom red bar. What's the difference? Aβ peptide was applied to the lower sample at that site. That is, Aβ peptide caused the capillary to constrict. The graph at the right shows the effect over many measurements. The graph shows the diameter of capillaries over time. (It's a normalized diameter, with the initial size of each capillary set to 1.) The top, control, curve (open symbols), shows that the diameter remains more or less constant over time. The bottom curve (dark symbols) shows the result when Aβ is added at time zero. You can see that application of the Aβ peptide causes the capillary diameter to decline over the 40 minute observation period. On the graph, the label says Aβ1-42. That means that the specific form of Aβ peptide used here has amino acids 1-42 of the original protein. There are various forms of Aβ, but this is a common one. aCSF = artificial cerebrospinal fluid. This is Figure 1H from the article. |

The experiment above shows that Aβ can constrict capillaries -- in the lab. It doesn't say it happens in nature or is relevant to the disease process.

Here's some data from people...

|

In this test, brain samples obtained during surgery were examined to see whether or not they contained Aβ deposits. Capillaries from the brain samples from both groups were measured.

The graph shows the result of measuring the diameters of about 5000 capillaries from the brains of people with Aβ deposits, along with a similar control set (no Aβ deposits). |

|

The graph shows that the capillaries of people with Aβ deposits are narrower than for the control group. It's not a big effect, but, hey, this is the blood supply to the brain. And the effect certainly is statistically significant. The number that is so prominent near the bottom of each bar? It's the number of images examined. Ignore it. This is Figure 4C from the article. | |

It's a long, complex article; we have offered only hints of its argument here. Importantly, the scientists work out how Aβ constricts capillaries.

In brief... The presence of Aβ leads to the production of reactive oxygen species (ROS). The ROS set off a chain of events that leads to an effect on cells around the capillaries called pericytes, causing them to contract. In fact, the capillary measurements reported above were made at the site of pericytes.

If this finding holds up, how does it affect our understanding of AD? That remains to be seen. However, our current understanding has not been sufficient to lead to a treatment, so a better understanding should be welcome progress.

As an example of how things might go... Perhaps the effect of Aβ on capillaries is one of its most important effects. If so, treatments targeted at capillary constriction might be useful. Such treatment might not directly deal with Aβ at all, instead focusing on one of its targets. But for now that is speculation. What's important is that the article connects Aβ and vascular effects, and seems to open up some new lines of work for exploration.

It is easy to get confused or overwhelmed when reading about AD. One aspect of the work is to describe what happens, and why. Another aspect is to try to sort through the things that happen and figure out which ones are most important in the disease process. It is not easy to make that distinction, and the AD field is full of effects of unknown importance.

News stories:

* Squeezing of blood vessels may contribute to cognitive decline in Alzheimer's. (Neuroscience News (University College London), June 23, 2019.)

* Aβ Acts Through Pericytes to Throttle Brain Blood Flow. (M B Rogers, ALZFORUM, June 22, 2019.) Includes some comments from scientists in the field at the end.

* News story accompanying the article: Neurodegeneration: The vascular side of Alzheimer's disease -- Protein aggregates restrict cerebral blood flow, which causes neural injury. (A Liesz, Science 365:223, July 19, 2019.)

* The article: Amyloid β oligomers constrict human capillaries in Alzheimer's disease via signaling to pericytes. (R Nortley et al, Science 365:eaav9518, July 19, 2019. Online only; not in print edition, except for a one-page summary, p 250.)

Previous post on AD: Formation of new neurons in adults: relevance to Alzheimer's disease? (May 21, 2019)

Next: Alzheimer's disease: a role for inflammation? (January 18, 2020).

My page for Biotechnology in the News (BITN) -- Other topics includes a section on Alzheimer's disease. It includes a list of related Musings posts.

August 11, 2019

The "Paleolithic" ("paleo") diet is an odd one. The idea behind it is that we should eat what ancient man ate; that was, perhaps, the natural diet for humans. In particular, the paleo diet avoids the products of modern agriculture. There are numerous difficulties with that idea. We don't know it was good for them. And we certainly don't know that it is good for us, in the quite different circumstances of modern man. (For example, infectious disease is a much smaller burden -- and selective force -- now than for ancient man.) Of course, all ideas are welcome. Why don't we test them?

Testing human diets is not an easy task. Not surprisingly, not much good testing gets done. However, a new article reports a test of the paleo diet. It's a limited test, but it offers some interesting results.

Here are some of the data. I have selected a few items, from various places in the article, to present here, because they are part of one story -- the major story that the authors develop.

| | Control diet | Strict paleo diet | Significant? |
|---|---|---|---|
| Food intake (from Table 2) | | | |
| Whole grains (servings/day) | 2.9 | 0.1 | ** |
| Red meat (servings/day) | 0.4 | 0.8 | NS (but probably close) |
| Clinical measurements (from Table 3) | | | |
| TMAO (µM, blood serum) | 3.9 | 9.5 | ** |
| Gut bacteria (from Figure 3) | | | |
| Hungatella (relative abundance) | 0.01 | 0.02 | ** |

There were about 20 people in the strict paleo group; they had a long commitment to that diet, but the study followed them here for only a few days.

For significance, ** means p ≤ 0.01 for difference from control group (a common convention). * would mean p ≤ 0.05. NS means p > 0.05.

"Serving" is defined for each type of food in the article. All that matters here is that it is consistent across a row.

Numbers shown for the bacteria are my estimates from the graph.
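The significance convention in the table notes can be written out as a small function. This is only a sketch of the labeling rule stated above; the function name is made up for illustration, and the p-values in the example are arbitrary, not values from the article.

```python
def significance_label(p_value):
    """Return the label used in the table for a given p-value."""
    if not 0.0 <= p_value <= 1.0:
        raise ValueError("a p-value must be between 0 and 1")
    if p_value <= 0.01:
        return "**"
    if p_value <= 0.05:
        return "*"
    return "NS"


# Arbitrary example p-values, chosen to show each label once.
for p in (0.004, 0.03, 0.2):
    print(p, significance_label(p))
```

Note that a result just above 0.05 gets the same "NS" label as one far above it, which is why the red meat row carries the comment "but probably close".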

What's this about? The player of interest is TMAO (trimethylamine-N-oxide). We have noted it before; it has been linked to consumption of red meat -- and to heart disease [link at the end].

The key finding, then, is that TMAO is elevated in people on the strict paleo diet. Not good, given the link to heart disease. (Note that the current study is short term, and has no direct measure of heart disease.)

Are there clues as to why people on the paleo diet have elevated levels of TMAO? Yes, three of them, shown in the table above.

One is presence of Hungatella bacteria in the gut. That may not be a familiar name, but it has been established that it makes TMAO. High levels of Hungatella bacteria in the gut correlate with high levels of TMAO in the blood, and we understand why.

Hungatella is a strict anaerobe, formerly classified as Clostridium.

And there are two clues in the diets. The people on the paleo diet eat more red meat and fewer whole grains; those two dietary points correlate with having high TMAO.

The red meat effect does not test as significant; see the table. Nevertheless, there is a correlation here. (The lack of significance is due, in part, to the high variability of meat consumption in both groups.) We note the red meat effect, despite the lack of statistical significance here, to make the connection to the earlier Musings post.

It is a plausible hypothesis from this work that the grains serve to reduce the amount of Hungatella bacteria in the gut. The red meat provides the substrate for making TMAO; the Hungatella convert that substrate to TMAO; the dietary grains reduce the Hungatella. (More specifically, the authors attribute the "grains" effect to "resistant starch".)

The trial here is too small (and not fully controlled) to be convincing. But it seems to provide interesting leads to guide further work.

There is a third test group in the study. This group, termed pseudo-paleo, followed a loose version of the paleo diet. The results for that group are partly consistent with what is discussed above, but frankly are a little confusing. Let's attribute that, at least in part for now, to the small study size.

News stories:

* Paleo diet linked linked [sic] to greater odds of heart disease. (A Slachta (Cardiovascular Business), July 24, 2019.)

* Heart disease biomarker linked to paleo diet. (Science Daily (Edith Cowan University), July 22, 2019.)

The article, which is freely available: Long-term Paleolithic diet is associated with lower resistant starch intake, different gut microbiota composition and increased serum TMAO concentrations. (A Genoni et al, European Journal of Nutrition 59:1845, August 2020.)

Background post on TMAO: Red meat and heart disease: carnitine, your gut bacteria, and TMAO (May 21, 2013).

A post about low-carb diets in general: Low-carb diets: Long-term effects? (September 4, 2018). To help you compare current and earlier posts... The strict-paleo group in the current study consumed carb for about 17% of their energy intake (Table 2); that is an extremely low value.

A recent post about a human diet: How a "low-gluten" diet may benefit those who are not gluten-sensitive (January 27, 2019).

More... Does eating vegetables reduce the risk of cardiovascular disease? (February 28, 2022).

My page Internet resources: Biology - Miscellaneous contains a section on Nutrition; Food safety. It includes a list of related Musings posts.

August 9, 2019

A team of scientists set out to make a new type of glass -- one that would not shatter upon impact.

The results are clear...

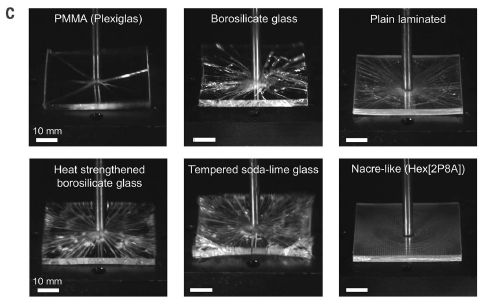

|

In this test, six pieces of glass, or glass-like materials, were tested for impact resistance.

One survived in good condition. That's the "nacre-like" material at the lower right. The pieces were 5 x 5 x 0.3 cm. This is Figure 4C from the article. |

What did the scientists do? And what's nacre? Nacre (mother-of-pearl) is part of mollusk shells (e.g., clamshells). It is the material responsible for their strength. The basis of the strength of nacre is, in part, its multi-layered brick-like structure. The small pieces ("bricks" or "tablets") can move independently. Impact forces are dissipated by "brick" movement rather than breakage.

The nacre-like glass is like that, with alternating layers of glass bricks and plastic. Of course, there is a lot of detail to "get it right". The major technical development is a process for making large sheets of tiny glass "bricks". (The brick size is about 1 millimeter.) In brief... They start with a regular glass sheet. They then engrave lines between bricks (using a laser beam); the sheet is intact but weakened at the lines between the bricks. The process of attaching the plastic layer weakens the engraved lines further, resulting in free brick pieces.

Unbreakable? No, but they say it is 2-3 times more resistant to impact than the best current glasses.

There is a trade-off. The tablet structure makes the glass less able to support weight; it bends more easily. Balancing out the pro and con features will be for future work.

News stories:

* New type of glass inspired by nature is more resistant to impacts. (Phys.org (C Q Choi, Inside Science, American Institute of Physics), June 28, 2019.) Good figures, showing the design concept.

* Seashells inspire shatterproof glass. (B Dumé, Physics World, July 2, 2019.)

* News story accompanying the article: Materials science: Bioinspired improvement of laminated glass -- Laminated glass with a microstructure inspired by nacre has a higher impact resistance. (K C Datsiou, Science 364:1232, June 28, 2019.)

* The article: Impact-resistant nacre-like transparent materials. (Z Yin et al, Science 364:1260, June 28, 2019.)

A post about a multi-layered mollusk skeleton: Armor (February 5, 2010).

Among many posts about glass...

* How to mold glass (June 19, 2021).

* Libyan desert glass, King Tut, and the hazards of meteorite strikes (May 31, 2019).

* A new record: spinning speed (October 12, 2018).

* Why bats fly into windows (December 3, 2017).

* Turning metal into glass (September 21, 2014).

For more about bio-inspiration, see my Biotechnology in the News (BITN) topic Bio-inspiration (biomimetics). It includes a listing of Musings posts in the area, and has additional information.

August 7, 2019

Drug metabolism by your microbiota. A recent post was about how the gut microbiota affects treatment of Parkinson's disease by metabolizing a common drug. Looking for information on the article led me to a current news feature in The Scientist broadly on the metabolism of drugs by the gut microbiota. It's largely on cancer drugs, but includes the new Parkinson's article. This is an emerging field, and there is considerable confusion, as the current item notes. The microbiome effect is undoubtedly important, but our understanding is still quite incomplete.

* News feature: How the Microbiome Influences Drug Action -- Through their effects on metabolism and immunity, bacteria in the gut affect whether medications will be effective for a given patient. (S Williams, The Scientist, July 15, 2019. In print: July issue, p 38.) Now archived.

* Background post: Metabolism of the Parkinson's disease drug L-DOPA by the gut microbiota (July 26, 2019).

August 6, 2019

Pieces of the Earth move around. Sometimes, strain builds up. Sometimes, the strain is released suddenly -- in an earthquake. That's the general idea, but the details are unclear, and there has been no good model system for studying quakes in the lab.

A new article reports the development of such a system. It involves a layer of disks between two concentric cylindrical shells. There is a weight on top, and the bottom edge of the layer is driven to rotate. Sensors measure force -- and sound. That the system rotates allows it to run for extended periods. In a routine 24-hour test, it can generate a million labquakes.

It may be good to check out the video at this point. It is included with the news story listed below. It shows the apparatus -- in action. The color changes represent changes in the forces; the optical properties of the disk material vary with force.

The video may not be clear, but at least it will give you an idea. In fact, the nature of the apparatus is not very clear, even after reading the details in the Supplemental Material. We'll come back to the apparatus later. For now, what matters is the concept: a shear strain in a rotating device.

The following figure illustrates the data from the force sensors...

|

The graphs show the torque (circular force) over time, for one hour (3600 seconds) of operation. The torque being measured is at the top of the rotating layer of disks, against the cover of the apparatus.

Part c (top) shows the raw data. The torque varies, in an irregular manner. Much of the time, the torque increases; this is due to the strain in the system building up. (The device is being driven at constant speed. If it weren't for that layer of disks gumming up the system, the torque would be constant.) From time to time, the torque drops -- abruptly. That's a quake event. Something happened in the material, in that layer of disks, to relieve the strain that had built up. |

|

Part d (bottom) shows the same data plotted a little differently, to make the drops clearer. In this graph, they plot the "torque difference" -- but only during drops. That is, each quake event -- a drop in torque -- is now a "blip" (a vertical blue line). And each such event is marked with an x. You can see that there are many blips and x's. Several stand out clearly; for some, you can make the connection between a drop in part c and a blip in part d. There are also a lot of x's that are very close to the zero line. The inset graph shows an enlargement of one such region. Smaller quakes are now apparent. It's also clear that there are many more of the very small events than the big ones that are so clear. This is part of Figure 1 from the article. | |
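The idea behind part d can be sketched in a few lines: go through the torque readings in order, and record an event wherever the torque falls. This is a minimal illustration, not the authors' analysis; the function name and the torque values are invented, and the article may well define an event differently (for example, by merging several falling readings into one quake).

```python
def torque_drops(torque):
    """Return (index, drop_size) for each reading lower than the one before it."""
    drops = []
    for i in range(1, len(torque)):
        change = torque[i] - torque[i - 1]
        if change < 0:  # torque fell: strain was released
            drops.append((i, -change))
    return drops


# Invented readings: slow build-up, interrupted by drops of various sizes.
torque = [1.0, 1.5, 2.0, 0.75, 1.25, 1.75, 1.5, 2.0, 0.5]
events = torque_drops(torque)
for index, size in events:
    print("drop at reading", index, "of size", size)
```

In this toy series, two drops are large and one is small. A real run has vastly more small ones, which is what the inset in part d shows.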

So the device generates irregular releases of strain within the material. And most of those releases are small events. Earthquakes are like that.

The authors go on to analyze the data quantitatively. Here is one of those analyses...

|

The graph shows the frequency distribution (y-axis) of quakes of different energies (x-axis). PDF = probability density function. The energy scale is in arbitrary units (au). Both scales are logarithmic.

There are two sets of data. One is for quakes detected by the force sensors (red data; upper). The other is for quakes detected by the acoustic sensors (blue data; lower). The latter can detect a much wider range of quakes, including very low-energy events. Main finding... both curves have the same slope: -1.7. That's the slope seen for such curves for earthquakes -- the Gutenberg-Richter law. The law says that small quakes are more frequent than big ones. Since the graph is log-log, the slope is the exponent in the relationship. This is Figure 2a from the article. |
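The point about log-log graphs can be checked numerically. The sketch below builds made-up data that follow a power law with exponent -1.7, then fits a straight line to the logarithms; the fitted slope recovers the exponent. The function name and the data are illustrative assumptions only; this is an ordinary least-squares fit, not necessarily the method used in the article.

```python
import math


def loglog_slope(energies, densities):
    """Least-squares slope of log10(density) against log10(energy)."""
    xs = [math.log10(e) for e in energies]
    ys = [math.log10(d) for d in densities]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


# Synthetic power-law data: density proportional to energy ** -1.7.
energies = [10.0 ** k for k in range(6)]
densities = [e ** -1.7 for e in energies]
slope = loglog_slope(energies, densities)
print("fitted slope:", round(slope, 3))
```

With a slope of -1.7, each 10-fold increase in energy makes events about 50-fold rarer (10 to the power 1.7 is about 50).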

The graph above shows that the labquake device generates events that follow one of the important laws of earthquakes.

The authors go on to test two more quantitative relationships found with earthquakes. In both cases, their experimental system is in good agreement with what is found for natural quakes. Good quantitative agreement. The agreement suggests that the system deserves further study as a lab model for earthquakes.

We've noted that it is hard to describe the apparatus. We've also noted that one can follow the concept without understanding the apparatus. The following statement, from the article Supplement, may be useful...

"Although our apparatus is composed of two plates that compress and shear a granular material, it is important to point out that this system replicates the dynamics of a tectonic fault, but not the fault itself. Our grains do not necessarily represent the granular material inside a real fault. Our granular system provides, thanks to the force network, a very heterogeneous and evolving matrix to store the mechanical energy generated by the relative movement of the plates. This emerging and evolving heterogeneity in terms of energy thresholds is the key ingredient of our system, and it is responsible for a distribution of events that resembles the Gutenberg-Richter law."

The quotation is from the start of Section III of the Supplement, Insights into real earthquakes. It is complete except for removing references.

That is, the scientists do not suggest that the events they create and observe are just like little earthquakes, but only that they behave like them statistically.

News story: Focus: Collection of Disks Mimics Earthquakes. (M Schirber, Physics 12:62, May 31, 2019.) Excellent overview of the work. The main figure gives you an idea of the layer of disks, though without context in the device. It is similar to the inset of Figure 1b from the article, but much clearer.

A short video is included in that news story (12 seconds; no sound). It shows the device in operation. A somewhat longer version (30 seconds) is posted with the article at the journal web site, below; that version requires subscription access. It seems to be just like the short video included with the news story -- just longer.

The article: Continuously Sheared Granular Matter Reproduces in Detail Seismicity Laws. (S Lherminier et al, Physical Review Letters 122:218501, May 31, 2019.)

A recent post about earthquakes: Another million earthquakes for California (June 30, 2019).

August 3, 2019

One type of fatty acid is known as ω-3 (ω = omega). This type of fatty acid is characterized by having a carbon-carbon double bond three positions in from the "far end". Musings has described this in an earlier post [link at the end]. As noted there, some people take ω-3 fatty acids, in the form of fish oil, as a food supplement.

A recent article looks at the importance of ω-3 fatty acids to the fish. Stickleback fish.

The little stickleback fish fascinates biologists. There are many populations of them around the world -- distinct strains, or even distinct species. How did so many species arise? Extensive study of the biology of the sticklebacks has led to the idea that the marine (ocean) form is the ancestral form, and then numerous species developed from this in various freshwater environments.

The first figure examines two marine stickleback species found near Japan...

|

In this test, the survival of two strains of fish was tested with two feeding conditions.

The two strains of fish are called Japan Sea and Pacific Ocean, referring to the source. The baseline feeding condition was with a diet known to be low in ω-3 fatty acids. The second condition was that base diet supplemented with "marine food". |

|

There are two main observations:

* The fish strains differ. On the base diet, Pacific Ocean fish survived better than did the Japan Sea fish (compare curves 1 and 2). That is, the Pacific Ocean fish had a longer lifespan.

* The diet supplemented with "marine food" improved the survival for both fish strains (curves 1 vs 3 and 2 vs 4).

This is slightly modified from Figure 2A from the article. I have added numbers for the curves, at the far right. | |

The "marine food" is known to be a good source of ω-3 fatty acids, thus remedying the deficiency of the base diet. As the article develops, various lines of evidence point to the ω-3 fatty acids as being the key underlying variable. In particular, the genetic work focused on a key gene for making them: Fads2. (Fads = fatty acid desaturase, the enzyme that puts in the double bond.)

The following graph shows the results for one experiment on the role of the Fads2 gene...

|

In this test, the Japan Sea strain was modified by adding an additional copy of the Fads2 gene. As a control, another gene (GFP) was added to another batch of fish.

Adding the Fads2 gene improved the survival of the fish. (The control curve here, with the GFP gene, is similar to the control curve (#1) for the same strain in the top experiment.) This is Figure 2F from the article. |

What really interested the scientists was the evolution of strains that could thrive in fresh water. The purpose of the work summarized in the following graph was to look at the number of copies of the key gene Fads2, introduced above, in marine and freshwater stickleback strains.

|

The y-axis shows the number of copies of the Fads2 gene. (Actually, the relative number, but we need not worry about that.)

Results are shown for three strains (or groups) -- and separately for males and females. Two of the three strains shown here are those shown above. The third (right-hand) data set is for a group of freshwater strains from the same general area.

Observations:

* Females have more copies of the Fads2 gene than do males. (The main copy of the gene is on the X chromosome.) Focusing on the females is useful.

* The freshwater fish have the highest copy number for this gene.

* The Pacific Ocean strain has more copies of the gene than does the Japan Sea strain. This probably accounts for the difference seen in the first figure above.

This is part of Figure 4A from the article. The rest of Figure 4A shows similar results for fish from other regions. In each case, the freshwater strains have more copies of the gene. |

Those results show that freshwater fish have more copies of the gene for making ω-3 fatty acids than do the marine strains. Comparison of similar sets of stickleback strains from other regions gave that same general result.

In fact, analysis of a broader range of fish beyond sticklebacks suggested that the effect holds across fish in general. Since the underlying problem is a nutritional deficiency associated with fresh water, that is reasonable.

Overall, the work shows the importance of ω-3 fatty acids to fish. In particular, it suggests that mutations leading to increased synthesis of ω-3 fatty acids were one important step in the development of fish that can thrive in freshwater.

News stories:

* Freshwater find: Genetic advantage allows some marine fish to colonize freshwater habitats. (Phys.org (Research Organization of Information and Systems), May 30, 2019.)

* "Copying & pasting" a gene allows stickleback to live in freshwater habitats. (University of Bern, June 3, 2019.)

* News story accompanying the article: Evolutionary biology: Jumping gene gave fish a freshwater start -- Fish diversification depended on multiple copies of a metabolic gene. (J N Weber & W Tong, Science 364:831, May 31, 2019.)

* The article: A key metabolic gene for recurrent freshwater colonization and radiation in fishes. (A Ishikawa et al, Science 364:886, May 31, 2019.)

A background post on ω-3 fatty acids: Omega-3 fatty acids; fish oil (March 29, 2010).

More... Vitamin D, omega-3 fatty acids, and autoimmune disease? (March 29, 2022).

A recent post about fish genes: Fish may adapt to pollution by stealing genes from another species (July 21, 2019). In contrast to how genes changed in that earlier work... In the current work, the number of copies of the Fads2 gene probably changed due to transposon action.

For more about lipids, see the section of my page Organic/Biochemistry Internet resources on Lipids. It includes a list of related Musings posts.

July 31, 2019

Cow genetics and methane emissions? Cows -- or, rather, the microbes in cow stomachs -- generate methane, making a significant contribution to greenhouse gases. Cows vary, but there has been no practical way to control methane emissions. A team of scientists has now analyzed the genomes and microbiomes of diverse dairy cows. They have found cow genes that correlate with microbiome composition, and thus with methane emission. This opens the prospect of breeding low-emission cows. There is no certainty that such breeding will be successful and without side effects, but it is an interesting lead. (Some of their data suggests that reduced methane emission may be associated with higher milk production. That's reasonable, since methane emission is a waste of energy.)

* News story: Potential for reduced methane from cows. (Science Daily (University of Adelaide), July 8, 2019.) Links to the article, which is freely available.

July 30, 2019

Circadian rhythm: the natural variation of biological activity through the daily cycle. Humans sleep and wake up even in the absence of clocks, and they do so on a rather regular basis.

What is the basis of our circadian rhythm? Part of the story is that, as darkness comes, our level of the hormone melatonin rises. That promotes sleep.

That must make us wonder... How does artificial lighting, common only in the last two centuries, affect our sleep?

A recent article provides more evidence on the matter. In particular, it provides evidence for huge variation between individuals in how they respond to the artificial light of the modern human evening.

Look...

|

The main graph shows how numerous individuals respond to light in the evening, shortly before bedtime.

The response measured is suppression of melatonin, as a percentage (y-axis). That is plotted against the light intensity (x-axis; log scale). Each curve, regardless of color, is for one person. The main finding is that these curves vary -- a lot. The blue-curve person has 50% suppression at less than 10 lux; that is high sensitivity to light. The red-curve person has 50% suppression only well above 100 lux; that is low sensitivity. That is, the range of sensitivities to light, as judged by melatonin suppression, is more than 10-fold (actually, about 50-fold). The three graphs at the right show the effect another way. They show the actual melatonin levels for the two individuals whose data are shown in color. The three graphs are for low, medium, and high light intensities (top to bottom). Each curve shows the melatonin level in the individual vs time, from about 4 hours before bedtime to an hour after bedtime. For the lowest light level (top graph), both the red and blue curves rise about the same: melatonin levels start to rise about 2 hours before bedtime. For the highest light level (bottom), the red curve is about the same, but the blue curve shows very little melatonin. That is, the light-sensitive person has had their natural preparation for sleep completely disrupted. The label on the top graph says 0.1 lux. That is probably supposed to be 1 lux. Doesn't really matter for us. This is Figure 2 from the article. |

Common room lighting is often in that range of 10-100 lux. (The authors suggest that the average lighting is about 30 lux.) That is, the range shown above is relevant. Some people, such as the blue-curve person above, can easily have their natural sleep cycle disrupted by such evening lighting. But people vary a lot, and some would be unaffected. That's the big message here: people vary in how common evening lighting affects their natural preparation for sleep.
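To make the idea of "50% suppression at different light levels" concrete, here is a minimal sketch of a dose-response curve. It assumes a simple logistic (Hill-type) shape, which is not necessarily the model fitted in the article. The two half-suppression values (8 and 400 lux) are hypothetical, chosen only to match the description above of "less than 10 lux", "well above 100 lux", and a range of about 50-fold.

```python
def suppression(lux, ed50, hill=1.0):
    """Percent melatonin suppression for a simple logistic dose-response curve.

    ed50 is the light level (lux) giving 50% suppression; hill sets the steepness.
    Both the curve shape and the parameter values are illustrative assumptions.
    """
    return 100.0 * lux**hill / (lux**hill + ed50**hill)

# Hypothetical half-suppression light levels for two people (not from the article)
sensitive_ed50 = 8.0    # "blue-curve" type: 50% suppression below 10 lux
resistant_ed50 = 400.0  # "red-curve" type: 50% suppression well above 100 lux

# Ratio of the two half-suppression levels
fold_range = resistant_ed50 / sensitive_ed50

if __name__ == "__main__":
    for lux in (1, 10, 30, 100, 1000):
        s = suppression(lux, sensitive_ed50)
        r = suppression(lux, resistant_ed50)
        print(f"{lux:>5} lux: sensitive {s:5.1f}%   resistant {r:5.1f}%")
    print(f"fold range in sensitivity: {fold_range:.0f}")
```

With these assumed numbers, typical room lighting of about 30 lux suppresses most of the melatonin in the sensitive person but very little in the resistant one. That is the pattern the figure describes.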

News stories:

* Sensitivity of human circadian system to evening light. (EurekAlert! (PNAS), May 27, 2019.) Brief, but to the point.

* Light the night? Monash research finds that some of us are hypersensitive to evening illumination. (Monash University, June 3, 2019.)

The article, which is freely available: High sensitivity and interindividual variability in the response of the human circadian system to evening light. (A J K Phillips et al, PNAS 116:12019, June 11, 2019.)

Other posts about sleep cycles, melatonin, and such include:

* Conversing with dreamers (July 6, 2021).

* The genetics of being a "morning person"? (April 15, 2016).

* Does it matter what time of day you milk the cow? (December 28, 2015).

* How caffeine interferes with sleep (December 11, 2015).

* Melatonin and circadian rhythms -- in ocean plankton (November 24, 2014).

My page of Introductory Chemistry Internet resources has a section on Lighting: halogen lamps, etc. It includes a list of related Musings posts.

July 29, 2019

A team of engineers in the United States and India has recently shown that the leaves of wheat plants can sneeze. The engineers suggest that wheat sneezing could transmit disease.

The first figure shows the idea...

|

In this cartoon, the big green thing is a wheat leaf. The blue things are water droplets, and the smaller brown things are fungal spores.

A key fact is that wheat leaves are extremely hydrophobic -- or "superhydrophobic". As a result, nearby water droplets are likely to come together and coalesce into one droplet. The coalescence of drops releases energy -- enough to propel the droplets into the air. |

|

If there are spores on the leaf, they may attach to a droplet, and thus be expelled from the leaf surface. Expelled far enough that they could be carried away by even a gentle wind. This is Figure 1a from the article. | |

The article provides both theory and experimental evidence for parts of the story. The following two figures show some of the evidence...

|

This figure shows an example of a wheat-leaf sneeze.

It shows superimposed images from time-lapse photography over about 30 milliseconds. A water droplet was formed at the lower left by coalescence; it rose (propelled by the energy released during droplet coalescence), and then fell. At the peak, it was about a millimeter above the surface (assuming the scale bar applies for height). That's about high enough that the droplet could be blown away by a wind. This is Figure 3a from the article. |

The sneeze above is from an uninfected leaf. Sneezing itself has nothing to do with infection. It follows simply from having small water droplets on the superhydrophobic surface. In nature, that would commonly be dew droplets, formed during the daily cycle.
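As a rough consistency check on the droplet trajectory described above, here is a back-of-the-envelope ballistic estimate. It is a sketch, not the article's analysis. It assumes simple projectile motion with gravity only and ignores air drag, which matters for very small droplets. The 1 mm peak height is the value read from the figure.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2

def launch_speed(height_m):
    """Vertical launch speed (m/s) needed to reach a given peak height, no drag."""
    return math.sqrt(2 * G * height_m)

def flight_time(height_m):
    """Total time (s) to rise to the peak height and fall back, no drag."""
    return 2 * math.sqrt(2 * height_m / G)

if __name__ == "__main__":
    peak = 1e-3  # about 1 millimeter, as estimated from the figure
    print(f"launch speed: {launch_speed(peak):.2f} m/s")
    print(f"flight time:  {flight_time(peak) * 1000:.0f} ms")
```

Under these assumptions, a droplet that peaks at about 1 mm needs a launch speed of roughly 0.14 m/s and is in the air for a bit under 30 milliseconds. That agrees with the time span of the superimposed images.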

Video. There is a video posted with the article. (Five minutes, no sound, some labeling.) It consists of a series of droplet-jump sequences. Some are clear, some not. I suggest you go through it once, focusing on seeing some jumps. You can go back for more if you want. The video is posted at the journal web site, under Supplemental Material. Or: direct link to video. (The video is freely available, regardless of your subscription status for the journal.)

|

This figure shows that spores can be lifted off the leaf surface.

In this experiment, a piece of paper was carefully positioned at a defined height above the surface of the leaf -- a leaf with fungal spores on it. Droplets that were expelled from the surface rose and hit the paper. After some time, the spores that had attached to the paper were counted. The three sets of data are for three different leaves. For each leaf, data is shown for collecting spores at three different heights, from 1.5 to 5 millimeters above the surface. The observation is that all papers, at all heights tested, collected spores. The differences between the bars are not of particular interest for now. This is Figure 5c from the article. |

That figure provides evidence for droplets rising off the wheat leaf surface and carrying fungal spores with them. The authors argue that spores rising even 1 mm above the leaf surface could be blown away by a wind. Thus it seems reasonable that coalescence of dew drops on the surface of an infected wheat leaf could transmit the pathogen to a site on another plant.

Just to be clear... The article does not actually show disease transmission from one plant to another. But it does lay out the pathway by which such transmission could occur. The pathway is supported by both theory and experiment, as far as it goes. The main thing missing is the final step: they never provided a "recipient" to receive the spores.

Overall, the article provides an unusual view of a major food crop -- an engineer's view. But it also reveals information that could be useful in controlling a serious pathogen.

News stories:

* Dew drops spontaneously flinging themselves into the wind may spread wheat infections. (B Yirka, Phys.org, June 19, 2019.)

* Wheat Plants "Sneeze" and Spread Disease. (C Intagliata, Scientific American, June 25, 2019.) Podcast, with transcript.

The article: 'Sneezing' plants: pathogen transport via jumping-droplet condensation. (S Nath et al, Journal of the Royal Society Interface 16:20190243, June 5, 2019.)

More things superhydrophobic...

* An improved bandage, based on superhydrophobic carbon fibers (January 21, 2020).

* A superhydrophobic fly -- that can survive in highly alkaline water (February 25, 2018).

* Water droplets on a trampoline (April 9, 2016). There is some connection between the current work and this older work; both involve water droplets jumping from a superhydrophobic surface, though in different situations.

Posts about wheat include...

* How a "low-gluten" diet may benefit those who are not gluten-sensitive (January 27, 2019).

* Wheat, rice, and Starbucks (August 3, 2018).

More sneezing: Shark skin inspires design of a new material to reduce bacterial growth (March 13, 2015).

Also see:

* How water droplets damage hard surfaces (June 18, 2022).

* Speech droplets: Can you transmit an infection to someone by yelling "Stay healthy" at them? (June 14, 2020).

Another post about rust, the type of fungus-caused plant disease studied in the current work: A sticky pesticide (June 21, 2019).

July 26, 2019

People with Parkinson's disease (PD) are deficient in the neurotransmitter dopamine. They may take the drug L-DOPA (Levodopa). The drug is taken orally; it is transported via the blood to the brain, where it is converted to dopamine.

However, L-DOPA may be converted to dopamine in the person's gut; that effectively renders it inactive, since the dopamine itself does not get transported to the brain. People vary in how much drug they lose at this step; it's a complication in the use of L-DOPA, one that is poorly understood.

A recent article reports progress in understanding the gut metabolism of L-DOPA. It also reports a new drug candidate that may improve the effectiveness and consistency of L-DOPA treatment.

The following figure is a useful summary of some of the key findings...

|

The top row of the figure shows the chemicals involved here and the bacteria that interconvert them in the gut.

The chemicals start with L-DOPA itself, at the left. The first step removes the carboxyl group (red; right-hand end of L-DOPA). That makes dopamine. The next step is to remove one of the hydroxyl groups (red; left-hand end of dopamine), to make a tyramine. The first step is done by Enterococcus faecalis; the second step is done by Eggerthella lenta. Identifying these bacteria that metabolize the drug was the first main finding of the current work. We'll come back to the second row of the figure later. L-DOPA is closely related to two of the standard amino acids. Remove one -OH group on the ring (the upper one), and you get tyrosine. Remove both -OH groups, and you get phenylalanine. This is the summary figure for the article. |

Here is one of the experiments that helped to show that pathway of bacterial metabolism...

|

In this test, mixed microbial samples that did or did not metabolize L-DOPA were tested for the presence of E faecalis. The y-axis (log scale!) shows the level of this bacterial species, as judged by the amount of its characteristic 16S ribosomal RNA. Each point shows the level for one sample.

The points for metabolizers (on the left) are higher than the points for non-metabolizers (right). By about two logs -- or 100-fold. That is, the ability of a sample to decarboxylate L-DOPA correlates well with the presence of this bacterial species. The samples are fecal suspensions from different people. This is Figure 3D from the article. |

Is this information useful? Go back to the top figure, and look at the second row. It shows the action of two drugs that inhibit the first step in L-DOPA metabolism. One of them, carbidopa (left), is an approved drug. It's used to reduce the metabolism of L-DOPA, but it doesn't work very well. The current article tests it against the gut bacteria that inactivate the drug; it doesn't work. The red T in the figure indicates a potential blocking action; the x means it doesn't happen. On the other hand, the drug shown at the right (AFMT), which was uncovered in the current work, does inhibit L-DOPA breakdown by the gut bacteria.

That is, the human and bacterial enzymes for breaking down L-DOPA are sufficiently different that they respond to different inhibitors -- even though they carry out the same reaction. That explains why carbidopa doesn't work very well, and also offers a new drug candidate (AFMT), which should be tested further. If it works in real patients, it may offer a new tool in managing the effectiveness of L-DOPA treatment in PD.

The current article includes some testing of AFMT in mice, but not in humans.

News stories:

* Gut Bacteria Consume Parkinson's Drug Levodopa, Often with Harmful Side Effects. (Sci.News, June 27, 2019.)

* Human Gut Microbes Identified That Process Levodopa. (F Church, Journey with Parkinson's, July 1, 2019.) Stylistically somewhat odd, but this is a good presentation -- by a medical researcher who has PD.

* News story accompanying the article: Microbiology: Gut microbes metabolize Parkinson's disease drug -- A gut bacterial pathway that degrades the drug levodopa is identified and can be inhibited. (C O'Neill, Science 364:1030, June 14, 2019.)

* The article, which may be freely available: Discovery and inhibition of an interspecies gut bacterial pathway for Levodopa metabolism. (V Maini Rekdal et al, Science 364:eaau6323, June 14, 2019; not in print edition.) (Caution... In my copy of the pdf file, the first page is duplicated. Don't know if it will get fixed.)

Posts on PD...

* Could tomatoes be used as the source of a common drug for Parkinson's disease? (April 24, 2021).

* Does the appendix affect the development of Parkinson's disease? (December 11, 2018).

* Possible role of gut bacteria in Parkinson's disease? (March 17, 2017).

More about dopamine: A connection: an endogenous retrovirus in the human genome and drug addiction? (October 29, 2018). Links to more.