Musings is an informal newsletter mainly highlighting recent science. It is intended as both fun and instructive. Items are posted a few times each week. See the Introduction, listed below, for more information.

If you got here from a search engine... Do a simple text search of this page to find your topic. Searches for a single word (or root) are most likely to work.

Introduction (separate page).

This page:

2013 (May-August)

August 31

August 28

August 21

August 14

August 7

July 31

July 24

July 17

July 10

July 3

June 26

June 19

June 12

June 5

May 29

May 22

May 15

May 8

Also see the complete listing of Musings pages, immediately below.

All pages:

Most recent posts

2026

2025

2024

2023:

January-April

May-December

2022:

January-April

May-August

September-December

2021:

January-April

May-August

September-December

2020:

January-April

May-August

September-December

2019:

January-April

May-August

September-December

2018:

January-April

May-August

September-December

2017:

January-April

May-August

September-December

2016:

January-April

May-August

September-December

2015:

January-April

May-August

September-December

2014:

January-April

May-August

September-December

2013:

January-April

May-August: this page, see detail above

September-December

2012:

January-April

May-August

September-December

2011:

January-April

May-August

September-December

2010:

January-June

July-December

2009

2008

Links to external sites will open in a new window.

Archive items may be edited, to condense them a bit or to update links. Some links may require a subscription for full access, but I try to provide at least one useful open source for most items.

Please let me know of any broken links you find -- on my Musings pages or any of my regular web pages. Personal reports are often the first way I find out about such a problem.

August 31, 2013

We have discussed food poisoning in various posts, most commonly about microbial contamination of meat [link at the end]. However, contamination of plant products (produce; fruits and vegetables) is also a problem. In fact, it may be more of a problem in one sense: produce is often eaten raw.

A recent article analyzes the sources of contamination in a vegetable crop: spinach. The scientists made a model of the spinach production process, showing a large number of factors one might think of as possibly being relevant. Then they collected lots of data, and correlated the contamination against the features of their model.

The following flow chart shows the possible sources of contamination that they considered. To help you focus, notice the box at the right, about half way down, which says "Generic E coli contamination of spinach". There are a lot of arrows pointing to that box. The chart is a collection of possible factors, which might lead to that box. (There is no need to read it in any detail.)

This is Figure 2 from the article.

Data? The scientists studied 12 farms, over two states, and collected 955 samples of spinach over two years. They measured whether E coli bacteria were present in the spinach samples; about 7% of the samples showed this contamination. (E coli contamination is commonly taken as an indicator of fecal contamination.) They collected information about all the factors shown in the chart above. They then ran statistical tests to see which factors in their model were most correlated with contamination of the spinach.

What did they find? Interestingly, some of the factors they found most important were related to animals being around. For example, a spinach farm close to a poultry farm was predictive of contamination. They also found that measures to keep workers clean, such as portable toilets with hand washing facilities, helped to reduce contamination.

What does this accomplish? It leads to suggested improvements. If farmers act on the factors most correlated with contamination, it may lead to reduced contamination. Simply doing a study such as this, alone, does not solve anything; the study provides some guidance. In particular, it helps to set priorities about what the most important factors may be. The ideas generated by such a study need to be implemented -- and tested. That is, follow-up is needed to see that making desired changes leads to improvement -- and to identify what further measures might be called for.

News story: Factors That Influence Spinach Contamination Pre-Harvest Determined. (Science Daily, June 20, 2013.)

The article: Generic Escherichia coli Contamination of Spinach at the Preharvest Stage: Effects of Farm Management and Environmental Factors. (S Park et al, Applied and Environmental Microbiology 79:4347, July 2013.)

Background post about contaminated food: Killer chickens (December 2, 2009). Links to several related posts. Most are about contaminated meat, but one implicates flour.

Previous post about spinach: Golden rice as a source of vitamin A: a clinical trial and a controversy (November 2, 2012).

Previous post about agriculture: What is the proper use of crop land? (August 23, 2013).

My page Internet resources: Biology - Miscellaneous contains a section on Nutrition; Food safety.

August 30, 2013

Scientists have recently reported finding two stars that are rich in the element lead.

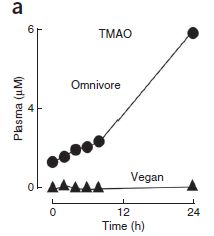

The following graph summarizes key data. If the graph seems complicated, start by noting that there is something special in the upper right corner. We'll fill out the story below.

The graph shows the amount of certain elements found in certain stars (or types of stars). The elements are listed across the x-axis; they are in order by atomic number. The y-axis is a measure of how abundant the element is. Before explaining the scale in detail, let's check that upper right corner again. There are data for four heavy elements, including lead (Pb). All the data points shown for those elements, for three individual stars, are quite high on this graph.

To understand how high they are, we need to understand the y-scale. It shows how abundant each element is -- compared to our Sun, and on a log scale. 0 on the scale means that the amount of the element on the star is the same as on the Sun. 1 means it is 10-fold more abundant on the star than on the Sun -- and so forth.

If you want the details... The y-axis scale is labeled "log ε/ε☉". ε (epsilon) is the abundance of the element in the star of interest; ε☉ is the abundance in the Sun. (The ☉ is a symbol for Sun.) That is, the ratio is the abundance of the element compared to that in the Sun. Then, the ratio is shown as a logarithm (base 10). 0 on the scale means the element has the same abundance on the star as on the Sun (ratio = 1; the log of 1 is 0.) 2 on the scale means the element is 100 times more abundant on the star (ratio is 100; the log of 100 is 2.)

Most of the data in this figure are between +2 and -2; that is, the abundances are within about a factor of 100, one way or the other, from the Sun. The exceptions? Those points at the upper right, at about 4 -- meaning those elements are 10,000 times more abundant on those stars than on the Sun.

This is Figure 6 from the article.

The two points shown for Pb are results from the new article. These are the highest levels of Pb ever found for stars -- 10,000 times higher than on the Sun. That is the key observation for this new article.

The blue diamonds are for a star described in a previous article from the same lab. You can see that the blue-diamond star has high levels of strontium, yttrium and zirconium (Sr, Y, Zr). They referred to that star as a "zirconium star". One of the "lead stars" from the current paper is also high for those elements.

The first two symbols shown are for sets of stars, of particular types; the data points, with error-bar lines, show the range of values found for each element.

What do these discoveries of heavy metals mean? That is not entirely clear, and the authors spend considerable time discussing possibilities. Importantly, note that the measurements reported in such work are from the star's atmosphere; the comparison is the atmosphere of the star to the atmosphere of the Sun. It is likely that what they are seeing are "clouds". The question for stellar astronomers is why these metal-rich clouds form in some cases. For now, simply finding them is a step forward. For now, we have a zirconium star and a couple of lead stars.

With lead levels given as 10,000 times that in the Sun's atmosphere, you might wonder how high the level is. In the Sun's atmosphere, the Pb is about one part in ten billion. In the atmosphere of the lead stars, it may be about one part in a million. They may be, relatively, lead-rich, but they are not balls of lead.
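For those who like to check the arithmetic, here is a small sketch of how the log scale and the "one part in a million" figure fit together. The function name and the constant are just illustrative choices; the only inputs are the rounded numbers quoted above (a log ratio of about 4, and a solar lead level of about one part in ten billion).

```python
# Illustrative check of the log abundance scale, using the rounded numbers quoted in the post.

def fold_from_log(log_ratio: float) -> float:
    """Convert a value on the log(ε/ε☉) scale into a plain fold-change relative to the Sun."""
    return 10.0 ** log_ratio

# Rounded value from the post: Pb is "about one part in ten billion" in the Sun's atmosphere.
SOLAR_PB_FRACTION = 1e-10

# Walk through a few positions on the y-axis.
for log_ratio in (-2, 0, 1, 2, 4):
    print(f"y = {log_ratio:+d}  ->  {fold_from_log(log_ratio):g} times the solar abundance")

# The lead stars sit at about 4 on the scale.
star_pb_fraction = SOLAR_PB_FRACTION * fold_from_log(4)
print(f"Pb fraction in a lead star's atmosphere: about {star_pb_fraction:.0e}")
```

The last line comes out at about one part in a million, matching the estimate above.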

News stories:

* Astronomers Discover Two Heavy Metal Stars. (Sci.News, August 2, 2013.)

* Under leaden skies - where heavy metal clouds the stars. (Royal Astronomical Society, August 1, 2013. Now archived.)

The article, which is freely available: Discovery of extremely lead-rich subdwarfs: does heavy metal signal the formation of subdwarf B stars? (Naslim N et al, Monthly Notices of the Royal Astronomical Society 434:1920, September 21, 2013.) (The form of the first author's name used here reflects her preference, as shown in the paper.)

More about stars... Star formation has slowed down (December 4, 2012).

More about lead...

* Should physicists be allowed to use lead from ancient Roman shipwrecks? (December 2, 2013).

* CFL and LED lights: energy-efficient, but toxic (March 3, 2013).

More about strontium... Revealing the alabaster sources of ancient artists (March 7, 2018).

More about yttrium... Y-Y: the first (May 5, 2019).

This post is listed on my page Introductory Chemistry Internet resources in the section Lanthanoids and actinoids.

August 27, 2013

The confined environment of a long plane trip may promote transfer of infectious organisms. Face masks may reduce such transmission. However, there is little real-world data on these effects. Thus a new article is interesting, even if not very conclusive.

The article is about a 24-hour flight sequence from New York to Hong Kong, and on to Fuzhou (China), during the 2009 flu event. Nine people were recorded as coming down with the flu in a time frame suggesting they acquired it on the plane. Authorities investigated; the new article is their report.

A key finding was that use of a face mask correlated with reduced chances of acquiring the flu. However, the data are extremely limited. The authors are cautious in their interpretation, and mainly encourage other such studies.

Here are their key data, as summarized in the abstract: "None of the 9 case-passengers, compared with 47% (15/32) of control passengers, wore a face mask for the entire flight..." Among the limitations of the study are that only 32 control passengers (those who did not come down with illness) were interviewed, and the type of face mask used is not recorded.
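To get a feel for how much (or how little) numbers this small can say, one can run a simple exact test on the 2x2 table implied by the abstract: 0 of 9 cases wore a mask, versus 15 of 32 controls. The sketch below uses Fisher's exact test, written out with the standard library. This is my own illustration, not necessarily the analysis the authors used; the function names are made up for the example.

```python
from math import comb

def hypergeom_pmf(k: int, total: int, marked: int, drawn: int) -> float:
    """Probability of exactly k marked items when drawing `drawn` from `total` without replacement."""
    return comb(marked, k) * comb(total - marked, drawn - k) / comb(total, drawn)

def fisher_exact(cases_masked: int, cases_total: int,
                 controls_masked: int, controls_total: int) -> tuple[float, float]:
    """Return (one-sided, two-sided) Fisher exact p-values for a 2x2 table.

    One-sided: chance of seeing this few (or fewer) mask wearers among the cases,
    if mask use were unrelated to illness.
    """
    total = cases_total + controls_total
    masked = cases_masked + controls_masked
    k_min = max(0, cases_total - (total - masked))
    k_max = min(cases_total, masked)
    probs = {k: hypergeom_pmf(k, total, masked, cases_total)
             for k in range(k_min, k_max + 1)}
    observed = probs[cases_masked]
    one_sided = sum(p for k, p in probs.items() if k <= cases_masked)
    # Two-sided: add up every table that is no more likely than the observed one.
    two_sided = sum(p for p in probs.values() if p <= observed * (1 + 1e-9))
    return one_sided, two_sided

# Numbers from the abstract: 0/9 cases and 15/32 controls wore a mask for the entire flight.
one_sided, two_sided = fisher_exact(0, 9, 15, 32)
print(f"one-sided p = {one_sided:.4f}, two-sided p = {two_sided:.4f}")
```

A small p-value here only says the difference is unlikely to be pure chance. It does not address the limitations noted above, such as the small number of controls interviewed and the unrecorded mask type.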

This article makes a modest contribution. It is perhaps interesting to look it over just to see how they did the study. The problem is important: the 2009 flu virus was disseminated around the world largely by plane; the geographical range of the novel and limited SARS epidemic a few years ago was defined largely by plane trips. Some fear what would happen if someone with Ebola managed to make a plane trip; is our main protection against this only that people with Ebola are too sick to travel, even at the earliest stages?

A preview (it's not quite a "news story"): CDC EID: Protection by Face Masks against Influenza A(H1N1)pdm09 Virus on Trans-Pacific Passenger Aircraft, 2009. (Pandemic Information News, August 23, 2013.)

The article, which is freely available at PubMed Central: Protection by Face Masks against Influenza A(H1N1)pdm09 Virus on Trans-Pacific Passenger Aircraft, 2009. (L Zhang et al, Emerging Infectious Diseases 19:1403, September 2013.)

Next post on the flu: Google tracks the flu -- follow-up (April 11, 2014).

More about trying to reduce flu virus transmission: Effectiveness of alcohol-based hand sanitizers? (September 28, 2019).

Many posts on various flu issues are listed on the supplementary page: Musings: Influenza.

Also see: Should you get a rabies vaccination before boarding an airliner? (May 7, 2012).

And... Should you ask your doctor to go BBE? (May 12, 2014).

More about airplanes... Airport food: What do the birds eat? (May 24, 2014).

More about emerging diseases is on my pages for Biotechnology in the News (BITN): Emerging diseases. That discusses some general issues, and also links to some specific diseases, including SARS, that have emerged in recent decades.

August 26, 2013

Bach-deficient mice have defective immune systems.

More specifically, mice lacking the gene for the protein BACH2 have distinctive immune system problems. They tend to get inflammatory auto-immune diseases. So reports a recent article.

Why did scientists look at the effect of BACH2 in mice? Human biology was offering hints. Many people with autoimmune diseases or allergies have small changes in BACH2. However, there has been no understanding of what this protein is doing. That is, analysis of humans suggested that BACH2 might be of interest, so the scientists checked in mice. The experimental mouse system allows them to introduce known mutations in a specific gene.

The following figure shows some of the results. The general plan is that the scientists made mice in which the Bach2 gene had been inactivated -- or knocked out, as they say; these mice are shown as KO. In each part, KO mice are compared with wild type (WT) mice.

Part a (upper left) shows the weight of the mice (at 3 months of age). You can see that the wild type (WT) mice grew better than the Bach2 knock-out (KO) mice.

Part b (upper right) shows the survival curves. You can see that all of the KO mice died between about days 100 and 300. During that time, most of the WT mice survived.

Part d (lower left) shows the lungs of one mouse of each kind at death. You can see that the lungs of the KO mouse are enlarged -- due to inflammation.

This is Figure 1 parts a, b & d from the article.

The Bach2 gene codes for a transcription factor, a protein that regulates the function of other genes. The effect of that BACH2 protein is complex; it affects the balance between various types of immune system cells. The current article shows that lack of BACH2 leads to rampant inflammation -- in mice. The observation that various small changes in Bach2 are associated with a variety of human immune system disorders is consistent with this. It will take a lot more work to sort out the details.

News story: NIH scientists find link between allergic and autoimmune diseases in mouse study. (National Cancer Institute, June 2, 2013. Now archived.) The news story contains a statement from the lead scientist of the project making the connection between the BACH protein and another Bach.

The article: BACH2 represses effector programs to stabilize Treg-mediated immune homeostasis. (R Roychoudhuri et al, Nature 498:506, June 27, 2013.)

What is a Bach protein? It took some effort, but I did track it down. Bach stands for "BTB and CNC homology". Not very helpful, is it? It's part of an attempt to classify proteins by common structural features. Whatever thoughts may have come to mind initially, the point is that Bach proteins may be important.

More about immune systems...

* Can eating peanut protein reduce the incidence of peanut allergy? (March 3, 2015).

* Should bees eat honey? (July 12, 2013).

* Exploiting the bacterial immune system as a tool for genetic engineering: The Caribou approach (May 4, 2013).

* Why the facial tumor of the Tasmanian devil is transmissible: a new clue (April 5, 2013).

* The role of the immune system in making stem cells (February 8, 2013).

For more about Bach... Visualizing music (June 18, 2009).

August 24, 2013

We might frame that as a multiple-choice question...

A. They don't like to travel much.

B. Tuxedos are not appropriate for flying.

C. It's too expensive.

A new article provides evidence for answer C.

The idea of flightless birds is intriguing. After all, isn't flight a key characteristic that defines birds? However, there are various flightless birds. For flightless seabirds, such as penguins, we know they travel huge distances. Why don't they fly?

It's long been suspected that swimming and flying don't mix. Wings aren't good for swimming; flippers aren't good for flying. However, the experimental evidence to support this was minimal. The new article analyzes birds that seem intermediate: they swim well but still can fly. The scientists measure their energy usage -- and show that their flying is very expensive energetically.

This figure shows the metabolic rate of various birds (and some bats) doing their major activity. That metabolic rate is shown here as a multiple of the basal (resting) metabolic rate (y-axis). This is shown against the body size (x-axis), though that ends up not being too interesting here.

Look at the highest value. It's at metabolic rate 31 times basal -- and is labeled "murre". The next highest point, about 28, is for the cormorant.

This is Figure 1C from the article.

Murre and cormorant. Two birds that do both fly and swim (or dive). They are well-adapted for the latter. They retain an ability to fly, but they are now the least-efficient fliers among the birds. Is this a pattern -- part of a shift from flying to swimming? The scientists suggest it is -- and that the penguins represent the next step, where ability to fly has been lost.

The authors take the story one more step. The murres are more efficient at diving than the cormorants are; for flying, it is the other way. The murres use their wings (flippers) for diving; the cormorants use their feet. Both of these birds are inefficient fliers. The murres are the least efficient, because they now completely rely on their "wings" for diving; for cormorants, the transition to diving is partial.

A caution... The headlines (including mine) about this work are about penguins. However, there is no work with penguins in the article. The experimental work is with the murres and cormorants. Their bigger story is about the energy needs for flying and diving; the scientists put together a hypothesis about how some birds are losing or have lost the ability to fly as they become better swimmers (or divers).

News story: New evidence suggests some birds gave up flight to become better swimmers. (Phys.org, May 21, 2013.)

The article: High flight costs, but low dive costs, in auks support the biomechanical hypothesis for flightlessness in penguins. (K H Elliott et al, PNAS 110:9380, June 4, 2013.) (Auks? For our purposes here, the auks include the murres.)

More about penguins... Does penguin language conform to the laws of human language? (February 18, 2020).

More about seabirds: Bird lays egg (March 19, 2011).

More about flying:

* What is the proper shape for an egg? (September 18, 2017).

* Progress toward an artificial fly (December 6, 2013).

* How to board an airplane (September 16, 2011).

* The traveling bumblebee problem (January 11, 2011).

More about swimming or diving:

* Bigger spleens for a bigger oxygen supply in Sea Nomad people with unusual ability to hold their breath (July 2, 2018).

* Caltech engineer turns rat into jellyfish (September 22, 2012).

* Can giraffes swim? (August 6, 2010).

More about anatomy and energy: The origins of baseball -- two million years ago? (August 18, 2013).

A book about flying is listed on my page Books: Suggestions for general science reading. Alexander, On the Wing -- Insects, pterosaurs, birds, bats and the evolution of animal flight (2015). The book includes a chapter on animals that have lost flight.

August 23, 2013

A new analysis of the use of crop land has just appeared. It's interesting. The authors start with the basics: on a global scale, we have an impending food shortage. They explore one consideration: how efficiently do we use crop land?

We commonly judge agricultural productivity by how many tonnes of crop we get per hectare. (Related measures, such as calories or protein, are also used; the new article uses all of these.) However, not all of the crop is used to feed people. For example, some of the crop is used as animal feed. We may eat the resulting animal, but in terms of efficiency of use of crop for human food, that is a loss. Some crop is now used to make fuel. Whatever the merits of that may be, it's a loss of human food. These are the key points explored in the new article.

Some general remarks at the start... First, these ideas are not new. However, they are perhaps more fully developed here, with numbers, than we usually see. Second, look at this paper for its ideas, and avoid quick or simple judgment. The story of human food is complex; what's here is part of it. Try to see what their point is, and also try to see what the limitations are of their analysis.

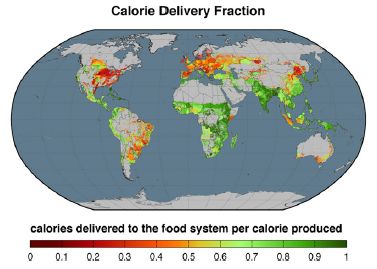

Here are two of their figures, to provide a sense of their presentation.

The first figure shows what fraction of the food that is grown reaches the population. For this figure, they use calories to measure the amount of food. The calorie delivery fraction is, literally, the fraction of what the crop land produces that reaches the human population as food. It is corrected primarily by the two factors noted above: use for animal feed and use for fuel.

As an example of what this means...

If crops are directly eaten, then the fraction shown in the figure is high. Regions shown in green are efficient in delivering crop calories to the people.

If crops are grown and then used to feed cows, the delivered food is low, because cows inefficiently process the crop. Regions shown in red are inefficient in delivering crop calories to the people. The American midwest, noted for beef production, is a good example.

This is Figure 1 from the article.

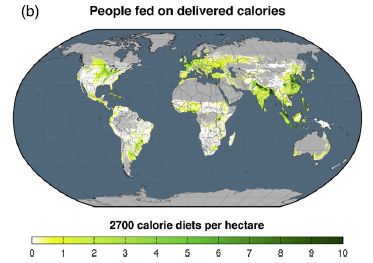

The next figure shows how many people are fed per hectare of crop land. As noted above, we might typically measure agricultural productivity by how much food is produced per hectare; the authors want to shift the emphasis to how many people are fed. This takes into account the inefficiencies of crop use noted above.

Note that the color scale used here is different from the one above. This one runs from green through yellow to white. (Wouldn't it be nice if they used the same scale in different figures.)

For perspective... The world average is about 6 people fed per hectare of crop land.

As examples... In the first figure, a striking red area is the US midwest, and a striking green area is India. However, the current figure shows that they end up feeding about the same number of people per hectare of cropland. (Detailed numbers given for the entire countries agree with that.) Another green area in the first figure is southern Africa; however, it is one of the poorest regions in the current graph.

This is Figure 3b from the article.
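To see how a region can look "inefficient" in the first figure yet feed about as many people per hectare as an "efficient" one, here is a toy calculation. It is only a sketch of the idea, not the authors' model: every number in it (the crop shares, the feed conversion efficiency, the yields, the daily calorie need) is a made-up illustrative value, and the function names are hypothetical.

```python
# Toy model of "calorie delivery fraction" and "people fed per hectare".
# All numbers below are hypothetical, chosen only to illustrate the idea.

def calorie_delivery_fraction(food_share: float, feed_share: float,
                              fuel_share: float, feed_efficiency: float) -> float:
    """Fraction of crop calories that reaches people as food.

    Crops eaten directly count in full; crops fed to animals count only for the
    calories that come back as animal products; crops used for fuel count as zero.
    """
    if abs(food_share + feed_share + fuel_share - 1.0) > 1e-9:
        raise ValueError("the three shares must add up to 1")
    return food_share + feed_share * feed_efficiency

def people_fed_per_hectare(crop_kcal_per_ha_per_year: float, delivery_fraction: float,
                           kcal_per_person_per_day: float = 2700.0) -> float:
    """People nourished for a year by one hectare (daily need is an assumed value)."""
    delivered = crop_kcal_per_ha_per_year * delivery_fraction
    return delivered / (kcal_per_person_per_day * 365)

# Region A: modest yield, nearly all of it eaten directly.
fraction_a = calorie_delivery_fraction(0.9, 0.1, 0.0, feed_efficiency=0.1)
fed_a = people_fed_per_hectare(6.0e6, fraction_a)

# Region B: high yield, but most of it goes to animal feed or fuel.
fraction_b = calorie_delivery_fraction(0.3, 0.6, 0.1, feed_efficiency=0.1)
fed_b = people_fed_per_hectare(15.0e6, fraction_b)

print(f"Region A: delivery fraction {fraction_a:.2f}, people fed per hectare {fed_a:.1f}")
print(f"Region B: delivery fraction {fraction_b:.2f}, people fed per hectare {fed_b:.1f}")
```

With these invented inputs, the two regions differ greatly in delivery fraction but end up feeding a similar number of people per hectare, which is the kind of pattern described above for the US midwest and India.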

The relationship between the two graphs is complicated and interesting. At least in part, inefficient use of crop land is a choice -- one dictated by a preference for meat. The authors note that societies tend to move to greater meat consumption as they become more affluent.

The authors say that their main goal is to move the focus toward how much food reaches the people. Use of crop land for animal feed diverts crop production away from human food. It's a good point, but it is not the whole story. The authors point out many limitations of their analysis. For example, they ignore food waste. That is also an important issue, just not one they choose to deal with here. They note that their criticism of using crop land for animal feed is not a criticism of eating animals per se. Animals grown "wild" (not competing for human food) are not included in their analysis. Again, the authors recognize such limitations; it's important that readers do, too.

Somehow I end up wondering... What if people planned? Period. It's not that this paper is right or wrong, but that it is an attempt to plan. It's only part of what should be considered, but it is planning.

News story: Food for 4 Billion More People: How Reallotting Croplands Could Offer Solution to World Hunger. (Medical Daily, August 3, 2013.)

The article, which is freely available: Redefining agricultural yields: from tonnes to people nourished per hectare. (E S Cassidy et al, Environmental Research Letters 8:034015, August 1, 2013.)

The "Supplementary Information" file, linked to the article at the journal web site, includes a table showing how each country in the study uses its crop land. Quickly scanning the table, it looks like the percentage of crop calories allocated to human food ranges from a low of 19% (Finland) to a high of 99.9% (Barbados). (If you check out this table, note that the countries are listed alphabetically by their country code, not by their name.)

A recent post about crop efficiency: DEEPER ROOTING leads to deeper rooting -- and to drought tolerance (August 16, 2013).

More about the food supply...

* Implementing improved agriculture (January 6, 2017).

* Doggy bags and the food waste problem (January 4, 2017).

* How do vegetables get contaminated? (August 31, 2013).

More about Barbados... Are urban dwellers smarter than rural dwellers? (August 2, 2016).

August 20, 2013

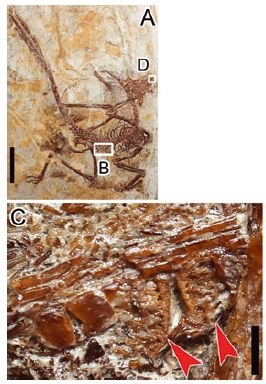

This is an Osedax. The name means that it eats bones. Whale bones, in particular. It's a new species of Osedax, just discovered in the Antarctic and reported in a new article.

The purpose of the work was to explore the fate of bone and wood on the Antarctic seafloor. The approach was experimental: the scientists put pieces of whale bone and wood on the seafloor; they came back a year later, collected the materials, and examined them.

The main finding was that the whale bone was populated by Osedax worms (and more), whereas the wood was largely untouched.

Osedax worms were discovered only a decade ago, but are now known to be widely distributed. They are found on bones, especially whale bones, on the ocean floor around the world. Osedax worms are interesting organisms. They secrete acid to bore through bone, and establish themselves attached to it. They then eat what is inside. Eat? These worms have no mouth. They have no digestive tract. What do they do? They carry bacterial symbionts -- in that root-like structure you see above, the part that penetrates the bone. The bacteria eat, and the worms grow on the bacterial products. The lifestyle of Osedax worms -- incompletely understood -- seems similar to that of the tube worms found at deep sea vents. The new work found Osedax worms on the whale bone: two new species, perhaps specialized for the cold waters of the Antarctic. The worm shown above is an Osedax antarcticus.

The lack of growth on the wood is interesting, too. Antarctica lacks wood -- and has lacked wood for millions of years. The surrounding seafloor may lack wood, too -- except for the small amount deposited by man as shipwrecks. The ocean currents around Antarctica may make it hard for larvae to enter the surrounding waters. The lack of wood and poor circulation may mean that wood digesters are rare in the region. Does this mean that old wooden shipwrecks there may be in good condition? This article may provoke people to find out. Ernest Shackleton's Endurance has been lying there since 1914; will someone now go after it?

Whales and wood. Weird worms. The waters of the Antarctic. The promise of recovering shipwrecks. It's an interesting article!

News story: Bone-eating worms thrive in the Antarctic. But wood-boring counterparts did not turn up in the frigid waters. (Nature News, August 14, 2013.)

The article, which is freely available: Bone-eating worms from the Antarctic: the contrasting fate of whale and wood remains on the Southern Ocean seafloor. (A G Glover et al, Proceedings of the Royal Society B 280:20131390, October 7, 2013.) If nothing else, browse it for the pictures.

More from Antarctica:

* IceCube finds 28 neutrinos -- from beyond the solar system (June 8, 2014).

* Life in an Antarctic lake (April 22, 2013).

* Previous whale post: Killer whales: menopause (October 1, 2012).

* Next: On a similarity of bats and dolphins (September 15, 2013).

More about whale bones: Whales in the Chilean desert -- the oldest known case of a toxic algal bloom? (April 13, 2014).

More about the role of Osedax in the degradation of whale carcasses: Animal communities around bone-eating worms (June 16, 2017).

More about Osedax: What did Osedax worms eat before there were whales? (May 30, 2015).

The Osedax is a member of the phylum of Annelids. Here are other posts about Annelid worms:

* Melatonin and circadian rhythms -- in ocean plankton (November 24, 2014).

* Unusual synthesis of cadmium telluride quantum dots (February 15, 2013).

And more worms -- and their association with bacteria... How does worm "fur" divide? (January 4, 2015).

More about shipwrecks...

* Should physicists be allowed to use lead from ancient Roman shipwrecks? (December 2, 2013).

* An ancient navigation device? (April 16, 2013).

* Previous quiz... Quiz: What is it? (November 20, 2012). More unusual fauna from the oceans.

* Next... Quiz: What are they? (September 27, 2013).

More about bacterial symbionts... The aphid-bacterium symbiosis: a step toward manipulating it (May 15, 2015).

More about wood: Building with wood: might it replace steel and concrete? (June 14, 2017).

More floors: How does a robot know the floor is clean? (October 30, 2021).

August 19, 2013

Los Angeles and San Francisco. About 400 miles (640 km) apart. It's a seven hour drive, or an hour flight. It's one of the most heavily traveled intercity routes in the world, with not much in between. Hyperloop would cut travel time to about a half hour, and would be cheaper than current or other proposed alternatives.

Hyperloop is a proposed transportation system. The heart of it involves capsules holding 28 passengers traveling through an evacuated tube at near the speed of sound.

Musings wouldn't normally present something that is simply a fanciful proposal. However, a couple of things make this proposal different. First, its father: the proposal is from Elon Musk. Musk is founder of Tesla Motors and SpaceX; he has a track record of technical innovation. Second, the proposal is being put out to the public for comment; it's an open source proposal, as Musk describes it. He has no plans to work on it; it's out there for consideration. Looking at the Hyperloop proposal is not about declaring it good or bad, but about analyzing it, and perhaps deciding what needs to be done.

The announcement: Hyperloop. (E Musk, Tesla Motors blog, August 12, 2013. Now archived.) This is a short introduction. It links to the full proposal, a 57 page pdf file. The first few pages of that are a general overview, not too different from the blog page. The rest is more technical. However, I encourage you to continue with the pdf proposal, if you want more. Much of it is a very readable description of the system. There may be some details you want to pass over, but it is generally good.

A news story: Musk's Hyperloop Plan Draws Praise, Skepticism -- Is 'fifth mode' of transportation just hype? (National Geographic, August 13, 2013. Now archived.) The announcement of the Hyperloop proposal received much news attention -- most of it of little value. After all, what do we want to know, beyond the basic description? We want to know if the proposal is worth pursuing. Instant analysis has little to offer; even engineers need time to work through it. I chose this one news story as an example. It does quote one engineer, who says all the parts sound plausible, but that it would be quite a project to put it all together. Fair enough.

Anyway, it's fun.

Tesla Motors was mentioned in the post Electric cars (May 9, 2009).

A post about problems with current transportation systems: Traffic congestion patterns analyzed from cell phone records (July 7, 2013).

Among other "fanciful proposals" to make Musings... TALISE: A better boat for Titan? (October 16, 2012).

More about traveling... Exoplanet Travel Bureau (February 21, 2015).

More about capsules: Making chemistry easier: single-serving capsules (October 30, 2015).

August 18, 2013

A recent post pushed our story of the history of cancer back by about 100,000 years [link at the end]. A new article may push our story of the history of baseball back by two million years.

The new article is about throwing -- an activity that only humans do well. It's based in part on measurements of baseball pitchers, leading to some understanding of the anatomy that allows a fast pitch (over 100 miles per hour -- as most Americans would know).

The scientists take high speed video of trained pitchers, and analyze the motions. A key finding is that much -- perhaps half -- of the energy in the pitch has been stored in the shoulder.

In another part of the work, they examine anatomical differences between man and chimp. Chimps do not throw much; apparently, they can be trained to throw accurately, but not very hard. The scientists end up focusing on something called the humeral torsion -- an anatomical feature, relating to how the arm is attached.

The figure offers an interesting point about the humeral torsion.

It shows the range of humeral torsion found in various animals. The graph shows what they know, for chimps (Pan), humans (with separate data for the two arms), and for two extinct hominins.

The figure shows that the humeral torsion is lower for the throwing arm than for the non-throwing arm. It also suggests that it is lower for humans than for chimps -- for the throwing arm (the dominant arm). And it suggests that the lower value for this angle may be an ancient trait, found in samples of extinct hominins. It is plausible that better throwing was important for early humans -- either for improved hunting, or for more rapid promotion to the major leagues. This is Figure 4d from the article.

If you want to take some of those points with some skepticism, that's good. The graph shows what they have. That graph is the basis of the suggestion that a trait needed for good throwing might be very ancient. Even if you don't buy the data at this point, it is something that may well be testable with further data. Regardless, their basic findings are about how humans throw, and that part stands.

News stories:

* Origins of human throwing unlocked. (BBC, June 26, 2013.) Includes some views of scientists who are skeptical of the authors' interpretation.

* How Humans Evolved to Throw a Fastball. (Discover, June 26, 2013.) Includes a little more about the chimps.

* Pitching Science: Why Apes Make Bad Pitchers. (BetterPitching.com, July 2, 2013.) This is not an ordinary science news site, but this page is fun -- and actually rather well done.

Movie. There is a 2 minute promotional video about the article, from the journal. It gives a useful overview of the work -- and has some cute footage. Why chimps don't play baseball. (YouTube, from Nature magazine.)

The article: Elastic energy storage in the shoulder and the evolution of high-speed throwing in Homo. (N T Roach et al, Nature 498:483, June 26, 2013.) There is a copy at: pdf copy.

Background post, on early cancer: A tumor in a Neandertal (July 8, 2013).

Among other posts about baseball:

* Effect of climate change on baseball games (June 10, 2023). Links to more.

* Comparing the death rates of American football and baseball players (July 2, 2019).

* The Mudville story, on its 125th anniversary (June 3, 2013).

* Baseball physics (July 31, 2011).

There is now an extensive list of sports-related Musings posts on my page Internet resources: Miscellaneous under Sports.

More about anatomy and energy: Why don't penguins fly? (August 24, 2013).

Also see:

* Can chimpanzees learn a foreign language? (March 10, 2015).

* On handedness in humans (September 30, 2013).

August 16, 2013

Drought is a serious problem for plants -- and for those who grow plants for their use. A new article offers an advance in dealing with drought for one of the world's most important crop plants.

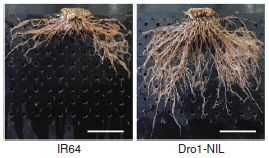

The background is that rice varieties vary in their drought tolerance. In the new work, the scientists find one gene that plays a key role in promoting drought tolerance. They cross this gene into another rice variety -- and it works: the new rice strain is now drought tolerant.

The gene they find affects the nature of the root system of the rice plants, in a fairly simple way. Some varieties of rice have shallow roots, whereas some have deep roots. The deeper roots allow the plant to tap deeper water, and thus promote drought tolerance. The gene for this trait is called DEEPER ROOTING 1, or Dro1 for short.

Here is what the two kinds of root systems look like.

The figure shows the root systems of two plants.

IR64 (left) is a standard, widely used rice variety. Its roots are shallow. Dro1-NIL (right) is a strain they constructed by crossing IR64 with another variety, which carried the deep-rooting allele for Dro1. This is part of Figure 1a from the article. The scale bar is 10 cm.

Here are some results showing how the new Dro1 allele affects the response of rice to drought.

The figure shows the grain yield per plant, under various conditions.

The conditions are no drought, moderate drought, and severe drought -- as labeled at the bottom. For each condition, they tested the same two strains shown above: IR64 (blue bars) and Dro1-NIL (now called simply NIL; red bars).

In the "no drought" condition, the two strains produce about the same amount of rice. (If you look carefully, you can see that the yield for the new strain is a bit lower, but the difference is not statistically significant. Further testing is needed to be sure.) In the "moderate drought" condition, the new strain makes as much rice as before, whereas the yield of the original strain is substantially reduced. In the "severe drought" condition, both strains show reduced yield. However, the new strain does much better than the original strain. This is part of Figure 5c from the article.

In summary, the new strain does as well as the original strain under normal conditions, and does better under drought conditions. That's encouraging.

Is there any downside to using the new deep-rooting Dro1 allele? So far, they don't see any problems. In particular, the growth of the strains with and without the deep-rooting allele seems similar when water is plentiful. The scientists attribute the observed effects to changes in the Dro1 gene, but do not have proof of that at this point. Dro1 seems to affect the angle at which roots form, but they do not know how this occurs. As always, further work should proceed with caution, being alert for unexpected findings, including unexpected effects under real conditions in the field.

News stories:

* Rice Gene Digs Deep To Triple Yields In Drought. (Asian Scientist, August 6, 2013.)

* Newly-discovered rice gene goes to the root of drought resistance. (International Center for Tropical Agriculture (CIAT), August 6, 2013. Now archived; ignore the message about the site moving.) This is from one of the participating institutions.

The article: Control of root system architecture by DEEPER ROOTING 1 increases rice yield under drought conditions. (Y Uga et al, Nature Genetics 45:1097, September 2013.)

Glossary entry: Allele.

A post about the opposite problem for rice: What to do if you are about to drown (September 23, 2009).

Another post about drought tolerance: Plants need bacteria, too (October 9, 2010).

More about rice:

* A perennial rice (March 4, 2023).

* How rice leads to global warming, and what we might do about it (September 2, 2015).

* Can growing rice help keep you from becoming WEIRD? (July 22, 2014).

More about crop efficiency -- the big picture: What is the proper use of crop land? (August 23, 2013).

August 14, 2013

A news headline touts 5D-storage and a million year lifetime for a new type of data storage medium. What's real? What's hype? It's hard to tell, but the story is interesting.

What's the story? The scientists use a high energy laser to write to glass. The laser energy modifies the structure of the glass; the changes -- the data -- can be read with a microscope. Thus the data is stored in local structural changes of the glass itself. The new report provides technical developments in the laser writing system, plus a little demonstration.

5D? Three of the dimensions are the spatial coordinates of the spot in the glass. Two involve the optical properties of the altered spot. In common data storage, there is one bit of information (0 or 1) at a particular site (address). In the demonstration reported below, it is three bits per spot. They may intend higher data storage at some point.
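To make the "three bits per spot" idea concrete, here is a minimal sketch in Python. It regroups the bits of a short message into 3-bit values, one value per spot. The function names and the zero-padding rule are my own assumptions for illustration; the talk is not described at this level here, and the sketch says nothing about how the optical properties of a spot actually represent those bits.

```python
import math

def bytes_to_spot_values(data, bits_per_spot=3):
    """Regroup the bits of `data` into integers of `bits_per_spot` bits each.

    Hypothetical helper: the final group is padded with zero bits.
    """
    bitstring = "".join(f"{byte:08b}" for byte in data)
    padding = (-len(bitstring)) % bits_per_spot
    bitstring += "0" * padding
    return [
        int(bitstring[i:i + bits_per_spot], 2)
        for i in range(0, len(bitstring), bits_per_spot)
    ]

def spots_needed(num_bytes, bits_per_spot=3):
    """Number of spots needed to hold `num_bytes` bytes."""
    return math.ceil(num_bytes * 8 / bits_per_spot)

message = b"Hi"
print(bytes_to_spot_values(message))   # one small integer (0-7) per spot
print(spots_needed(len(message)))      # spots at 3 bits per spot
print(spots_needed(len(message), 1))   # spots at 1 bit per spot, for comparison
```

With three bits, each spot can take one of eight values, so a message needs about one third as many spots as it has bits.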

Storage life? There is nothing in the current work about storage life, except for some references to earlier work.

The immediate reason the story comes up is that the scientists recently gave a talk about the work. As usual when a post is based primarily on a meeting report, information is limited, and we'll be brief. The point is to note something of interest. The team has been working on this for some time, and background information is scattered throughout the literature. From looking at a couple of the papers, it seems the system is quite experimental. They have only limited understanding of how it actually works. The demonstration reported is a first, but is a modest step. A story in progress; a story worth watching.

News story: 5D nanostructured quartz glass optical memory could provide 'unlimited' data storage for a million years. (Kurzweil, July 10, 2013.)

The text of the meeting talk, which is freely available: 5D Data Storage by Ultrafast Laser Nanostructuring in Glass. (J Zhang et al, Conference on Lasers and Electro-Optics (CLEO), June 2013.) The actual accomplishment was recording -- and then reading -- a 300 kb text file.

Another post exploring an approach to long term data storage: Using DNA for data storage (March 5, 2013).

More about data storage: Progress toward an ultra-high density hard drive (November 9, 2016).

More about silica: Croatian Tethya beam light to their partners (December 16, 2008). This post is about silica-based sponge spicules being used for light transmission -- in the sponge.

More about glass:

* How to mold glass (June 19, 2021).

* Turning metal into glass (September 21, 2014).

More about memory: A mouse that remembers an event that did not happen (September 3, 2013).

Thanks to Borislav for suggesting this item.

August 13, 2013

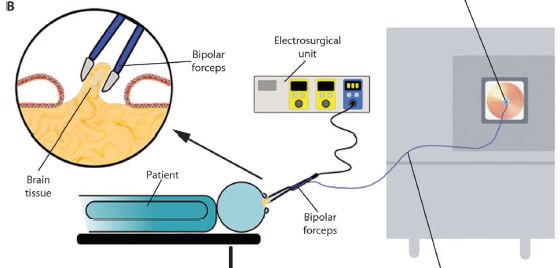

Surgical removal of a tumor is complex. The surgeon wants to remove the entire tumor, but little normal tissue. How does one tell? A common approach is that samples of the tissue that are removed are sent out to the pathology lab, where they are analyzed -- while the surgeon waits. The pathologist reports back, for each sample, that it is tumor or normal. This can take a half hour or so.

A new article offers the prospect of "instant" analysis of the removed tissue to see if it is tumor or normal. The trick is to send the sample to the chemistry lab, rather than to the pathology lab. More specifically, the tissue removed by the surgeon's knife is sent directly to a machine for chemical analysis.

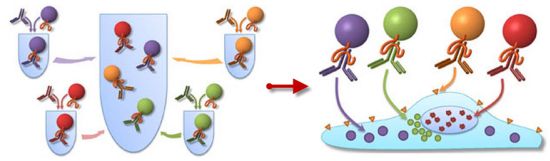

The following figure is a cartoon of the scheme.

You might start with the patient -- bluish, at the bottom (and labeled). The round part at the right is the head. It's open, because they are doing brain surgery. You can see the "bipolar forceps", a tool that is inserted into the head, and is also connected to instrumentation. A control unit is at the top -- the "electrosurgical unit"; the big box to the right is a mass spectrometer (commonly called "mass spec"). The forceps is the tool for removing tissue; it is a type of surgical "knife". You can see more detail about it in the circled part at the upper left.

The forceps (or knife) removes a bit of brain tissue. The tissue is burned. This is all standard. What's new is that the vapors -- or smoke -- from the removed tissue can exit via the forceps and go to the mass spec for analysis. That is, the scientists have attached a mass spec to the current "knife" the surgeons use. They call their new device an intelligent knife, or iKnife. Of course, it's not the knife that is intelligent, but the mass spec. This is Figure 1B from the article.

The mass spec -- a chem lab instrument -- is the key here. It measures the mass of the molecules in the smoke. With a little luck, it even identifies what they are; however, that really doesn't matter much here. What the scientists do is simple pattern matching. The pattern of molecules in smoke from cancer tissue is different from the pattern from normal tissue. The mass spec computer compares the surgeon's sample with its database of tumor and normal tissues, and reports back whether the sample is tumor or normal. It does this within a few seconds.

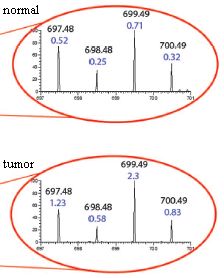

Here is an example of what the analysis looks like, and how it distinguishes normal and cancerous tissue.

Two samples were analyzed here. One is a sample from normal liver; the other is a sample from a tumor that has metastasized to the liver from a breast cancer. The small part of the mass spectrum shown here has four peaks. Two numbers are shown for each peak. The upper number is the mass for this peak (e.g., 697.48 for the left-hand peak). (That's the molar mass, or molecular weight, of the chemical of this peak.) The lower number is the amount of material in this peak. This is expressed on a relative scale, where "1" is the amount of another peak not shown here (but well marked in the full figure in the article).

You can see that there is about twice as much of the left-peak chemical (697.48) in the tumor sample (1.23) compared to the normal sample (0.52) -- and so forth. If this strikes you as tedious and difficult... remember, the actual analysis is done by the computer and is based on many peaks.

This is slightly modified from part of Figure 2 B & C from the article. In the article, part B shows the complete mass spectrum for the "normal" sample; part C shows the complete mass spectrum for the "tumor" sample. Then, one small region of each is expanded; that is what is shown here. I have added the labels "normal" and "tumor". The red lines have no significance at this point; in the full figure, they show where these expanded regions come from.
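The idea of pattern matching against a database can be sketched in a few lines of Python. This is only an illustration, under loud assumptions: the two 697.48 values are the ones quoted above, but the other peaks, all of their values, the sample, and the function names are hypothetical. The simple "closest reference pattern" rule is my stand-in, not the statistical method used in the article.

```python
import math

# Relative peak amounts for each reference tissue type.
# Only the "697.48" values come from the figure discussed above;
# the other two peaks and all of their values are hypothetical.
REFERENCE_PATTERNS = {
    "normal": {"697.48": 0.52, "hypothetical_peak_2": 0.80, "hypothetical_peak_3": 0.30},
    "tumor": {"697.48": 1.23, "hypothetical_peak_2": 0.60, "hypothetical_peak_3": 0.70},
}

def pattern_distance(sample, reference):
    """Euclidean distance between two patterns, over the reference's peaks."""
    return math.sqrt(sum((sample[peak] - reference[peak]) ** 2 for peak in reference))

def classify(sample):
    """Return the label of the reference pattern closest to the sample."""
    return min(
        REFERENCE_PATTERNS,
        key=lambda label: pattern_distance(sample, REFERENCE_PATTERNS[label]),
    )

# A made-up sample, standing in for smoke from the surgeon's knife.
sample = {"697.48": 1.10, "hypothetical_peak_2": 0.65, "hypothetical_peak_3": 0.60}
print(classify(sample))
```

A real system would use many more peaks and many reference samples per tissue type; the point here is only that the comparison is about the overall pattern, not about identifying any one chemical.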

The iKnife is a surgeon's knife now connected to a mass spec, so that the surgical sample can be instantly analyzed. The new article is the first test of this device during human surgery, and it scored well.

News stories:

* Next Generation: Smoking Out Cancer -- Researchers analyze smoke generated during surgical tumor removal to distinguish healthy and diseased tissues in real time. (The Scientist, July 17, 2013.)

* 'Intelligent knife' tells surgeon if tissue is cancerous in 3 seconds. (Kurzweil, July 19, 2013.)

The article: Intraoperative Tissue Identification Using Rapid Evaporative Ionization Mass Spectrometry. (J Balog et al, Science Translational Medicine 5:194ra93, July 17, 2013.)

More about brain surgery: 3D printing: Neurosurgeons can practice on a printed model of a specific patient's head (December 16, 2013).

My page for Biotechnology in the News (BITN) -- Other topics includes a section on Cancer. It includes a list of some other Musings posts on cancer.

Another post reporting a medical use of mass spectrometry: A new, simple way to measure bone loss? (September 14, 2012).

More mass spectrometry:

* Close-up view of an unwashed human (July 29, 2015).

* Iridium(IX): the highest oxidation state (December 14, 2014).

August 11, 2013

Near-death experience (NDE) is what is reported by people who have been, well, near death but survived. Intriguingly, many such people report experiences that seem rather similar. A recent article explores the "quality" of these NDE "memories".

Caution... Some of what is reported here may be met with skepticism. My goal is to describe what the scientists did and what they claim. Try to understand what it is they did. If it raises questions, that is fine. This is a controversial field. Experts do not agree what is going on. The current article is an interesting contribution to the field, but neither the authors nor I claim it is "the answer".

The general idea behind the new work is to ask whether "memories" of NDE are "real". There are standard tests to measure the quality or strength of memories. Here the scientists apply those tests to NDE "memories", along with some other types of memories for comparison. As background, it is known that memories that are imagined rate lower on these tests than real memories.

The tests were run on four groups of people. Three of the groups consisted of people who had been in a coma:

* those who had NDE,

* those without NDE but with memories of the coma,

* and those with no memory of the coma.

The fourth group was a control group of "normal" people, without coma experience.

Each person was asked to recall certain things, and their recollections were rated by the standard tests.

Here are the results of one test. In this test, each person was asked about a "target" memory. People in the NDE group were asked about the NDE; people in the coma group (with memories of it) were asked about the coma. Others were asked about a childhood experience. (Hm, I wonder how good a control that is. Anyway, that's what they did.)

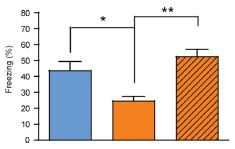

The graph shows what they found. Look at the left block of bars, the block labeled "sensory". This compared the four groups of people described above. Those four are, in order from the left:

* NDE -- the darkest bar, at the left

* coma (and memory of it)

* none (coma, no memory of it)

* control -- the lightest bar, at the right

You can see that the NDE group (left bar of each data set) showed the strongest response. Whatever it is that the test is measuring, the NDE memories scored highly. That's true here for the "sensory" category (left data set) and for the "clarity" category (right data set). The full figure shows five such data sets; NDE gave the highest result in four of them. (In one, the four test groups were about the same.) This is part of Figure 2 from the article. I have shown only the two categories on the left side of the published figure.

Another test compared these same people for different kinds of memories. These included the target memories, discussed above, as well as other real and imagined memories. The general result was that the NDE memories rated highly -- higher than other real memories and certainly higher than imagined memories.

What do we learn from this? The general picture is that NDE memories are real, not imagined. The authors say, near the end of the abstract, that " ... their physiological origins could lead them to be really perceived although not lived in the reality." The work suggests that there is some biological basis behind NDE memories.

News story: Memories of Near Death Experiences: More Real Than Reality? (Science Daily, March 27, 2013.) Good overview.

The article, which is freely available: Characteristics of Near-Death Experiences Memories as Compared to Real and Imagined Events Memories. (M Thonnard et al, PLoS ONE 8(3):e57620, March 27, 2013.)

More about NDE:

* Briefly noted... Was thanatosis the origin of near-death experiences? (August 11, 2021).

* Brain activity at the time of death: Do rats have "near-death experiences"? (March 8, 2014).

* Near-death experiences: the CO2 connection (April 28, 2010).

More about memory:

* A mouse that remembers an event that did not happen (September 3, 2013).

* Caffeine boosts memory -- in bees (April 12, 2013).

My page for Biotechnology in the News (BITN) -- Other topics includes a section on Brain (autism, schizophrenia). It includes an extensive list of brain-related Musings posts.

August 9, 2013

If you knew how much money you had in your pocket and how many days it had to last, you could divide the two, and calculate how much you could spend each day. If you knew how much energy reserve you had and how many hours it had to last until your next meal, you could divide the two, and calculate how fast you could use your energy without running out. According to a new article, the tiny plant Arabidopsis apparently does just that.

Arabidopsis uses light energy during the day. Among other things, it makes some starch, which is held as an energy reserve for the night. It then uses the starch as its energy source during the night. Running out would be bad; having much left over would represent an inefficiency.

Here is an example of how this little plant makes and consumes starch...

The graph shows the starch content of the plants (y-axis) versus time of day (x-axis), for three conditions. The plants were grown in light for the first part of the day (light region of graph), and then switched to darkness (shaded region).

In the three conditions, the lights were turned off at various times: 8, 12, or 16 hours. (All the plants had been maintained with a 12 hour light - 12 hour dark cycle prior to the experiment itself.)

For example... The circle points are for plants that were grown for 8 hours in light, then 16 hours in the dark. You can see that the starch level rises during the period of light, then declines during the darkness. The rate of using starch (the slope of the line during the dark period) is about constant in each case; by the end of the night, each plant has nearly used up its starch. That is, the plant adjusts its nighttime usage of starch, depending on how much it has and how much darkness is coming. That means that the plant knows how much darkness is coming. This is Figure 1A from the article.

Should we be surprised by the result? In one sense, no. It clearly is of benefit to the plant to use its starch judiciously. However, it's less clear why the plant would be able to adapt so readily to sudden major changes in day-night length.

How can the plant do such math -- or at least appear to do so? One can imagine various strategies. Here is one simple possibility... Imagine that the plant makes two signaling molecules: one is a measure of the amount of starch, and the other is a measure of darkness. The first is simple enough: it might even be the amount of starch itself, although using a small soluble molecule might work better. The second? What if, during the light period, the plant made a molecule that controlled the rate of degradation of starch? The longer the light period, the more of the degradation signal it makes. As a result, long days -- which have short nights -- lead to fast usage of the starch.

The signal for degradation might be the enzyme that degrades starch. That's logical, but may be expensive. More efficient might be a small molecule that controls the starch-degrading enzyme. The main purpose for now is to see the logic of how a system might work -- how a plant may appear to carry out math.

The plant does not literally do math. But it does measure things. The amount of a particular chemical may well be a measure of how long the plant has been making it. The relative amounts of chemicals can determine process rates. And natural selection, acting on such processes, can lead to plants having useful behaviors, which promote their survival.
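The division itself is easy to write down. Here is a minimal Python sketch of the arithmetic, not of the authors' models. The assumptions are mine: starch builds up at a steady 1 unit per hour of light (arbitrary units), the day is 24 hours, and the function name is made up.

```python
def starch_use_rate(starch_at_dusk, hours_of_darkness):
    """Constant hourly rate that uses up the reserve just as the night ends."""
    return starch_at_dusk / hours_of_darkness

DAY_LENGTH = 24          # hours
SYNTHESIS_RATE = 1.0     # hypothetical: starch units made per hour of light

# The three light periods used in the experiment described above.
for light_hours in (8, 12, 16):
    starch = SYNTHESIS_RATE * light_hours
    dark_hours = DAY_LENGTH - light_hours
    rate = starch_use_rate(starch, dark_hours)
    left_at_dawn = starch - rate * dark_hours
    print(f"{light_hours} h light: use {rate:.2f} units/h, "
          f"{left_at_dawn:.2f} units left at dawn")
```

A short day leaves little starch and a long night, so the rate is low; a long day leaves much starch and a short night, so the rate is high. In each case the reserve runs out at about dawn.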

The authors present some models, to explain the graph above and the other work they report. The models are not answers, but ideas; they are a framework for further work. What should follow is analysis to find out the molecular components of these signals. They begin such analysis in the paper.

News story: Plants do sums to get through the night, researchers show. (Phys.org, June 23, 2013.)

The article, which is freely available: Arabidopsis plants perform arithmetic division to prevent starvation at night. (A Scialdone et al, eLife 2:e00669, June 25, 2013.)

Posts on math abilities of animals include: Making smarter flies (July 18, 2012).

Also see: Multiplication tables, bamboo, 2300 years old (January 13, 2014).

Posts on how organisms tell time include: Light-dark (day-night) cycles affect pregnancy (August 10, 2012). I didn't use the term above, but the Arabidopsis work discussed here is an example of circadian (daily) rhythms.

Among other posts about using Arabidopsis... Cauliflower math (July 26, 2021).

August 5, 2013

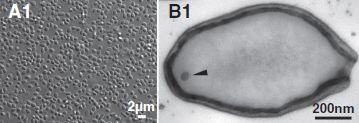

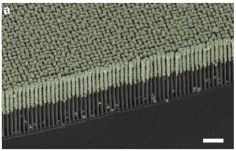

Here are photographs of the largest known virus, as reported in a new article.

The left frame (A1) shows a collection of the viruses as photographed under the light microscope. Note the 2 micrometer scale bar; these things are about the size of common small bacteria. The right frame (B1) shows one virus particle, as observed by electron microscopy. It's about 1 µm long (consistent with part A1). (The arrowhead? It points to a dot; the authors don't know what the dot is.) This is part of Figure 1 from the article.

These viruses grow in amoebae. They are part of a story that has developed over the last decade or so of finding larger and larger amoeba viruses. The new article reports two new amoeba viruses, which the authors call pandoraviruses. They are not only large, but complex. The bigger one, the virus shown above, has a genome of 2.5 million base pairs, with 2500 genes. Both those numbers are more than half of what the common bacterium Escherichia coli has.

A biologist's first instinct is that any infectious agent this big is likely to be a cell. After all, viruses are small; few are visible at all with a light microscope. Yet this is a virus -- unmistakably. It does not grow and divide, as a cell would. It empties its contents into a host cell, where a hundred or so progeny are then made. It's pretty much a typical virus life cycle. What's novel is the size and complexity of this virus.

The authors make another, more speculative point about these new viruses. Most of the genes (95%) are unrelated to anything they know. These viruses aren't even related to other amoeba viruses known so far. That's odd. What does it mean? The authors speculate that these viruses may be remnants of some unknown life form. They even speculate it might be something completely distinct from the three domains of life we know about (bacteria, archaea, eukaryotes). Interesting speculations -- with little to go on at this point. It's also possible that we just haven't found the relatives of these viruses. These speculations appear in the final paragraph of the article. Unfortunately, the speculations dominate much of the news coverage. Speculations aside, these are the largest known viruses -- and they raise interesting questions.

News stories:

* New Giant Viruses Break Records. (The Scientist, July 22, 2013.)

* Changing View on Viruses: Not So Small After All. (C Zimmer, New York Times, July 18, 2013. Link is now to Internet Archive.) Excellent.

* News story accompanying the article: Microbiology: Ever-Bigger Viruses Shake Tree of Life. (E Pennisi, Science 341:226, July 19, 2013.) Beware the hype.

* The article: Pandoraviruses: Amoeba Viruses with Genomes Up to 2.5 Mb Reaching That of Parasitic Eukaryotes. (N Philippe et al, Science 341:281, July 19, 2013.) The article should be freely available at the journal web site, with registration. Also, check Google Scholar for a copy.

This is the first Musings post on the large viruses of amoebae. However, the topic has long been on my page Unusual microbes in the section A huge virus. That contains background on the nature of viruses, and some of the earlier work on the amoeba viruses.

More about such viruses...

* More giant viruses, and some evidence about their origin (June 13, 2017).

* Recovery of live, infectious virus from 30,000 year old permafrost (March 25, 2014).

More about amoebae...

* Capsaspora owczarzaki and you or Where did animals come from? (April 10, 2011).

* Farming by amoebae (February 15, 2011).

More about the three domains of life... Carl Woese and the archaea (January 12, 2013).

A previous "largest"... The spruce genome: it's big (July 1, 2013).

There is more about genomes on my page Biotechnology in the News (BITN) - DNA and the genome. It includes a list of Musings posts on sequencing and genomes.

Thanks to Borislav for suggesting this article.

August 4, 2013

A recent article reports the genome sequence of a horse. A horse that may be 700,000 years old. It's the oldest genome yet sequenced, by about a factor of five.

The article is of interest for two reasons. One is the information it provides about the horse lineage. The other is the implications for sequencing ancient genomes.

Horses...

The primary focus of the article is determining the genome sequence for a fossil horse bone that was recently found at Thistle Creek in Canada's Yukon Territory. The bone is estimated to be about 560-780 kiloyears (kyr) old. Sequencing this Thistle Creek sample was a technical achievement, which we'll return to in a moment. Having a genome sequence for such an old sample helps in setting out genealogy charts. To assist further, the scientists sequenced several other horse samples.

This figure shows the relationships they infer between various kinds of horses, based on the available DNA sequence information -- much of it from this article.

Numbers such as 12.40X, shown for the donkey, indicate the extent of the DNA sequencing; 12.40X means that the amount of sequencing done covered the genome, on average, 12.4 times. Most modern genome sequencing uses several-fold coverage; 10X or so is common in the figure. The high coverage helps to reduce errors. However, the Thistle Creek sample was sequenced only 1.1X -- due to shortage of material. Another sample was sequenced less than 2X; it, too, is from an old fossil: 50 kyr in that case.

The authors show their best estimates of how the various horses are related. The first branch point they show is between the donkey and the horse group. They now date this split at about 4 million years. That's further back than earlier estimates, but such estimates depend on assumptions that are hard to test. That branch point is marked by a dark dot on the genealogy chart, near the upper left. It is described, with a date estimate, in the figure legend at the lower left. MRCA = most recent common ancestor. This is Figure 3a from the article.
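The coverage numbers can be put in perspective with a little arithmetic. The Python sketch below is mine, not from the article. It assumes a round genome size of about 2.7 billion base pairs for the horse, and it uses a standard idealized model in which reads land at random, so that the chance a given position is never read is e to the minus coverage. Real ancient DNA is messier than that.

```python
import math

GENOME_SIZE = 2.7e9  # assumption: approximate horse genome size, in base pairs

def coverage(total_bases_sequenced, genome_size=GENOME_SIZE):
    """Average number of times each genome position was read."""
    return total_bases_sequenced / genome_size

def fraction_never_read(cov):
    """Idealized (random-read) fraction of positions with no reads at all."""
    return math.exp(-cov)

for label, cov in [("Thistle Creek horse", 1.1), ("donkey", 12.4)]:
    bases = cov * GENOME_SIZE
    print(f"{label}: {cov}X is about {bases:.2e} bases read; "
          f"roughly {fraction_never_read(cov):.4%} of positions never read")
```

Under this simple model, about a third of the genome is not read even once at 1.1X, whereas at 12.4X essentially every position is read several times. That is one way to see why high coverage helps to reduce errors.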

Ancient genomes...

The Thistle Creek horse genome is the oldest genome yet sequenced, with an estimated age of 700,000 years. It was done with the latest technologies for sequencing degraded DNA. Importantly, it was possible because of how the bone it came from had been stored over the ages. The colder the better -- and this Arctic bone was stored cold.

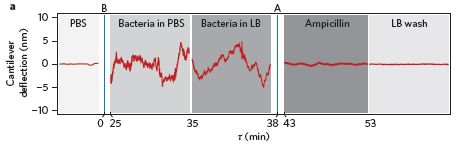

So what does the future hold? What if we find fossils from even colder storage? The "News and Views" item accompanying the article explores this, and presents a graph...

This figure at the right shows the stability of DNA (x-axis) vs storage temperature (y-axis). Stability of DNA is shown as the half-life of the DNA. There are two curves, for two different lengths of DNA chains. 30 base pair (bp) chains are now useful in genome sequencing.

These curves are based on various data, including success of sequencing DNA from samples found at various T. A point labeled H is for the newly-sequenced horse. It's the oldest DNA yet sequenced -- and the coldest.

What's intriguing of course is extrapolating those lines. What if we could find DNA samples that had been maintained at even lower T? It seems that we might get even older DNA sequences: perhaps as old as a million years -- or more. This is Figure 2 from the Nature "News and Views" story accompanying the article.
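The half-life idea behind that graph can be sketched in a few lines of Python. This assumes simple first-order (exponential) decay, and the half-life values below are hypothetical placeholders, not numbers read from the figure.

```python
def fraction_remaining(age_years: float, half_life_years: float) -> float:
    """Fraction of DNA chains still intact after age_years,
    assuming simple exponential (first-order) decay."""
    return 0.5 ** (age_years / half_life_years)


AGE_YEARS = 700_000  # estimated age of the Thistle Creek horse (from the post)

# Hypothetical half-lives, for illustration only.
for half_life in (50_000, 100_000, 200_000):
    frac = fraction_remaining(AGE_YEARS, half_life)
    print(f"half-life {half_life:>7,} yr -> {frac:.4%} intact")
```

The point of the sketch is the sensitivity: doubling the half-life (as colder storage would) raises the surviving fraction by a large factor, which is why the lower-temperature end of the graph is so interesting.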

If you're interested in horses, look over the first figure above. If you're interested in the future of sequencing of ancient genomes, savor that second figure -- the lower right corner.

News stories:

* Ancient horse bone yields oldest DNA sequence. (BBC, June 26, 2013.)

* Horse Genome Is Oldest Ever Sequenced -- By sequencing the genome of a 700,000-year-old horse, researchers have pushed back the time of DNA survival by almost an order of magnitude. (The Scientist, June 26, 2013.)

* A 700,000-Year-Old Horse Gets Its Genome Sequenced. (Science Daily, June 26, 2013.)

* "News and Views" story accompanying the article: Ancient DNA: Towards a million-year-old genome. (C D Millar & D M Lambert, Nature 499:34, July 4, 2013.) Interesting title. The second graph above, about old DNA, is from this story.

* The article: Recalibrating Equus evolution using the genome sequence of an early Middle Pleistocene horse. (L Orlando et al, Nature 499:74, July 4, 2013.)

Records are made to be broken... Briefly noted... The oldest known genome: a new record (August 31, 2021).

Recent posts about sequencing and genomes include:

* Are DNA sequencing devices resistant to radiation? And why might we care? (July 16, 2013).

* The spruce genome: it's big (July 1, 2013).

More old DNA: DNA from a 400,000-year-old "human" (December 9, 2013).

And... A claim of finding dinosaur DNA (May 31, 2020).

And perhaps old chromosomes... Chromosomes -- 180 million years old? (April 18, 2014).

And ancient proteins... Blood vessels from dinosaurs? (April 22, 2016).

A book, listed on my page Books: Suggestions for general science reading: Shapiro, How to clone a mammoth -- The science of de-extinction (2015). The book author is one of the authors of the article discussed in this post.

Perspective... DNA sequencing: the future? (November 7, 2017).

There is more about sequencing on my page Biotechnology in the News (BITN) - DNA and the genome. It includes a list of Musings posts on sequencing and genomes.

More about horses...

* How horses learned to walk (September 21, 2016).

* Leopard horses (December 2, 2011). This also involves investigation of an ancient horse using DNA technology.

August 2, 2013

Cars kill birds. The evidence is left on the road; it is commonly called roadkill.

A team of scientists in the American midwest has been examining the roadkill for one bird species in one area. They have recently published some interesting observations. They count the birds -- roadkill and total population. And they measure the wing length -- roadkill and total population. They've been doing this for nearly 30 years.

During the study, the number of roadkill birds per year has generally declined (by about 80% over the study period). That's not due to declining bird populations; in fact, they show that the bird population increased (by about 2-fold).
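Putting those two numbers together gives a rough per-bird risk. Here is a small Python sketch of the arithmetic; it uses only the approximate figures quoted above, so the result is approximate too.

```python
# Approximate figures from the post, relative to the start of the study.
roadkill_ratio = 1 - 0.80   # roadkill fell by about 80%
population_ratio = 2.0      # population grew about 2-fold

# Roadkill per bird in the population, relative to the start.
per_bird_ratio = roadkill_ratio / population_ratio

print(f"per-bird roadkill risk: about {per_bird_ratio:.0%} of the initial level")
```

That is, the chance of any given bird ending up as roadkill dropped by roughly 90% over the study period.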

What about wing lengths?

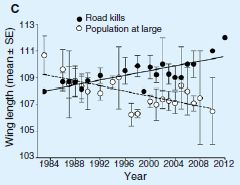

The graph shows the average wing length in the bird population over the period of the study (open symbols, dashed line).

It also shows the average wing length of birds killed by cars over the same time (solid symbols, solid line).

You can see that the average wing length in the bird population has been decreasing over the period of the study. For simplicity, they fit a straight line to the data set over the entire period. I wonder if there might have been a more abrupt change around 1996, but that doesn't matter much for now. What about the killed birds? Their wing length does not decline over the study period; in fact, it seems to be increasing. This is Figure 1C from the article.

What does this mean? They don't know for sure, but they offer some comments. First, birds with longer wings are less agile; they may be less able to avoid cars. That is, it is reasonable that wing length may be functionally related to the chances of getting killed by a car. Therefore, it is reasonable that the birds have evolved during the study period to have shorter wings, thus promoting their survival in the face of their auto enemy. Why that would lead to the killed birds having longer wings is not clear. We also note that, at the beginning, the killed birds were not those with the longest wings; this fact does not easily fit with the simple model.

They discuss a number of technical issues about the measurements as well as alternative interpretations. It's not conclusive. They have no evidence about the birds' genetics. The possibility that the birds have evolved during the study period, with selective pressure from cars, is reasonable -- as a hypothesis, subject to further testing.

News story: Where, Oh Where, Has the Road Kill Gone? (Science Daily, March 18, 2013.)

The article: Where has all the road kill gone? (C R Brown & M B Brown, Current Biology 23:R233, March 18, 2013.) Check Google Scholar for a freely available copy.

Previous post about cars: Traffic congestion patterns analyzed from cell phone records (July 7, 2013).

Previous post about birds: Of birds and butts (February 2, 2013). Interestingly, it is also about the interaction of birds with the human environment.

And... Airport food: What do the birds eat? (May 24, 2014).

More on wing adaptations: Introducing Supersonus -- it stridulates at 150,000 Hz (June 16, 2014).

July 31, 2013

Old Faithful Geyser (OFG), in Yellowstone National Park, sprays water a hundred feet into the air every 90 minutes -- day and night, summer and winter. Why? Yellowstone is a volcanically active area. Boiling water from underground makes its way through cracks in the Earth to the surface. However, an understanding of the regular geyser eruptions has been emerging only gradually.

A new article reports analysis of seismic activity around OFG. Based on this information, the scientists propose a more detailed model of the plumbing that makes the geyser work.

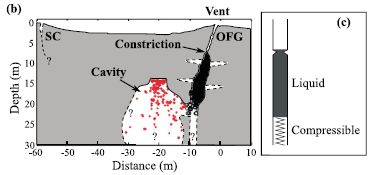

Part b of the figure (left side) summarizes their current view of what the Old Faithful site looks like underground. The y-axis is depth; 0 is the surface of the ground. The x-axis is horizontal distance; 0 is the geyser vent.

Part c (right) is a simple model of how the geyser works.

Look at part b. The surface opening, the vent, of OFG is labeled. Below it is a near-vertical channel; it is shown partly in black. This channel has been explored with instruments -- even video cameras -- in the past. A bit below the surface is a constriction in the channel; obviously, that constriction plays a key role in determining an eruption event. To the left of the vertical channel is a cavity, partly marked with red. Discovering this cavity is the major finding of this article. The cavity is connected to the vertical channel. Steam and hot water can accumulate in the cavity. It is a "recharge cavity", where the pressure builds up. The red dots in the cavity mark the locations of tremors, as determined by the new analysis. These tremors result from events such as bubble collapse against a wall. You can see how the pattern of events shows a flat roof for the cavity. (Similarly, the black of the main vertical channel is made up of dots marking tremor sources there.)

The model in part c is simple. There is a column of liquid, with gravity holding it down, and hot gas acting like a spring below it. At some point the upward pressure is enough that the liquid column breaks through the constriction; the geyser erupts. The model is simple; the geyser is simple. That's why it is regular (though not as regular as I suggested at the start). The basic model of this figure is not new; it is an idea without specifying the parts. The scientists suggest that the newly-discovered cavity is a key part of the "spring".

This is Figure 3 parts b and c from the article.

This article enhances our understanding of one of Earth's great natural shows. The discovery of the cavity and the simple model of how OFG works are probably right; however, we should emphasize that the details remain conjecture.

News story: Newfound chamber below Old Faithful may drive eruptions. (American Geophysical Union, April 12, 2013.)

The article, which may be freely available: The plumbing of Old Faithful Geyser revealed by hydrothermal tremor. (J Vandemeulebrouck et al, Geophysical Research Letters 40:1989, May 28, 2013.)

For the basics about Old Faithful Geyser, with some pictures, see Wikipedia: Old Faithful.

Yellowstone National Park was mentioned in the post Did life start in a geothermal pond? (February 28, 2012).

July 29, 2013

Good warning systems enhance survival. We use smoke detectors in our homes; societies set up systems, in the castle tower or in high-flying satellites, to watch for possible signs of attack.

The immediate question at hand... How do bean plants learn of impending attacks from aphids?

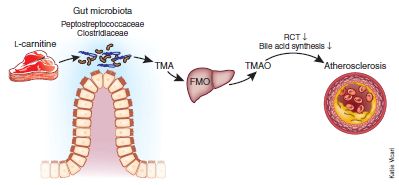

We've known something about this for some time. When a plant is attacked by an insect, a defense system is activated. This includes the release of volatile chemicals into the air; these chemicals can be received by nearby plants, which then activate their defense systems in advance of attack. But what if air communication is blocked? The plants still seem to respond when their neighbors are attacked. A new article suggests that the plants are signaling underground, using fungi to carry their messages. Let's look at how the scientists figured this out. It's quite clever.

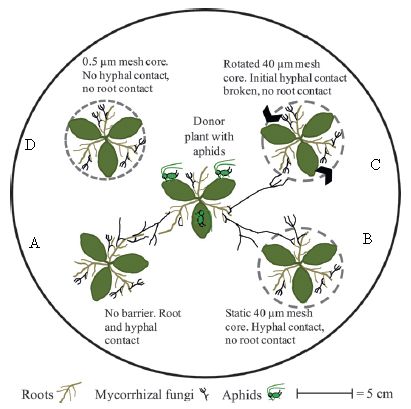

The figure shows the experimental arena. It's aphids vs beans.

The arena contains five plants. The central plant will be attacked by aphids; the general question is whether the others respond. Because the central plant is the one that might be emitting warning signals, it is called the "donor" plant. The others are "receivers". No air communication is allowed between the plants, thus blocking one known type of signaling. However, they might communicate underground, either via roots or via the fungi that associate with roots -- the so-called mycorrhizal fungi. To block these contacts, the scientists put a mesh around some plants, as detailed in a moment. They have two kinds of mesh. A 40 µm mesh blocks roots but not the fungal strands (called hyphae). A 0.5 µm mesh blocks both. A mesh is shown as a dashed line around a plant.

Plant A (lower left) has no mesh. It can communicate with the donor plant in the center by both roots and fungal hyphae. Plant A is a "normal" control. Plant D (upper left) has the small mesh, which blocks both roots and hyphae. Plants B and C (right) have the larger mesh, which blocks roots but allows hyphae. For plant C, they then rotate the mesh, to break the hyphal contact. The black arrowheads on the dashed mesh show this rotation. This is slightly modified from Figure 1 of the article. I have added the letters A-D to label the four outer plants; this is for ease of discussion.

That's the set-up. The scientists put aphids in the arena with the central plant -- the donor. They then sampled the air around each plant; that air is known as the "headspace". They did two kinds of tests on the headspace.

In one test, they measured the amounts of chemicals known to be defense chemicals. In another test, they measured whether aphids were attracted to or repelled by the headspace gases. Both tests showed that plants A and B behaved like the donor plant, whereas plants C and D did not. A and B are the plants with hyphal contact with the donor. Thus they conclude that plants can communicate signals without sharing air -- if the fungal network is intact.

Here is an example of their data, for one of the defense chemicals, methyl salicylate:

Level around donor plant: 1.46.

Level around plants A and B, with hyphal contact: 1.42, 1.85 (about the same as for the donor).

Level around plants C and D, without hyphal contact: 0.41, 0.06 (much less than for the donor).

Data are in nanograms of the chemical per gram plant. These results are from Table 2 of the paper, which includes error bars.

Those numbers show that fungal contact is mediating the signal. That is the general picture that emerges from the entire article.
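As a quick check on those numbers, this Python sketch expresses each receiver's level relative to the donor. It assumes the values are listed in the order of the plant labels (A then B, C then D), which the post does not state explicitly, and it ignores the error estimates given in the paper.

```python
# Methyl salicylate levels (ng per g plant), as quoted in the post.
DONOR = 1.46
receivers = {
    # Assumption: values are in the same order as the plant labels.
    "A (hyphal contact)": 1.42,
    "B (hyphal contact)": 1.85,
    "C (no hyphal contact)": 0.41,
    "D (no hyphal contact)": 0.06,
}

# Each receiver's level as a multiple of the donor's level.
relative = {plant: level / DONOR for plant, level in receivers.items()}

for plant, ratio in relative.items():
    print(f"{plant}: {ratio:.2f} x donor level")
```

The two plants with hyphal contact come out near or above the donor level; the two without it come out at well under a third of it.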

News story: Plants use underground networks to warn of enemy attack. (Phys.org, May 10, 2013.)

The article: Underground signals carried through common mycelial networks warn neighbouring plants of aphid attack. (Z Babikova et al, Ecology Letters, 16:835, July 2013.)

More about aphids...

* The aphid-bacterium symbiosis: a step toward manipulating it (May 15, 2015).

* Are aphids photosynthetic? (September 17, 2012).

* Red and green aphids (June 2, 2010).

More about plant defenses...

* Inter-plant communication via the Cuscuta parasite (September 15, 2017).

* How the tomato plant resists the Cuscuta (November 4, 2016).

* Grapefruit and medicine (March 26, 2012).

* A plant that cheats (July 6, 2009).

July 27, 2013

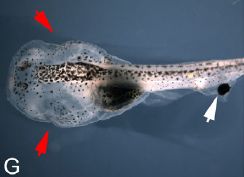

A one-eyed tadpole. The eye is on the tail (white arrow). The natural eyes have been removed (red arrows).

This is Figure 1G from the article.

Making such a tadpole is straightforward surgery. The new eye is transplanted from a separate donor animal (early in development). What's interesting here is whether the tadpole can see.

In a recent article, scientists report that they tested about a hundred of these one-eyed tadpoles. A few were able to learn a behavior requiring vision. Those few did as well as normal tadpoles; tadpoles without eyes could not do this test (though they could sense light).