Musings: September - December 2015 (archive)

Musings is an informal newsletter mainly highlighting recent science. It is intended as both fun and instructive. Items are posted a few times each week. See the Introduction, listed below, for more information.

If you got here from a search engine... Do a simple text search of this page to find your topic. Searches for a single word (or root) are most likely to work.

Introduction (separate page).

This page:

2015 (September - December)

December 29

December 21

December 16

December 9

December 2

November 23

November 18

November 11

November 4

October 28

October 21

October 14

October 7

September 30

September 23

September 16

September 8

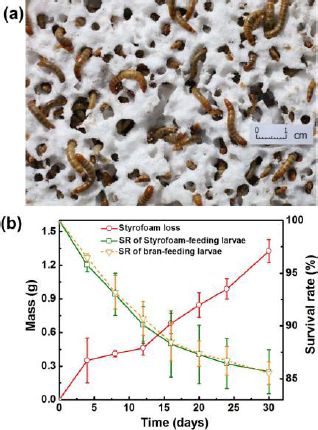

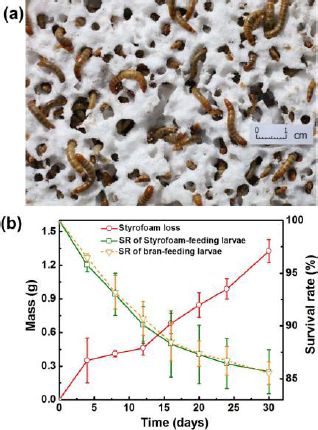

Also see the complete listing of Musings pages, immediately below.

All pages:

Most recent posts

2026

2025

2024

2023:

January-April

May-December

2022:

January-April

May-August

September-December

2021:

January-April

May-August

September-December

2020:

January-April

May-August

September-December

2019:

January-April

May-August

September-December

2018:

January-April

May-August

September-December

2017:

January-April

May-August

September-December

2016:

January-April

May-August

September-December

2015:

January-April

May-August

September-December: this page, see detail above

2014:

January-April

May-August

September-December

2013:

January-April

May-August

September-December

2012:

January-April

May-August

September-December

2011:

January-April

May-August

September-December

2010:

January-June

July-December

2009

2008

Archive items may be edited, to condense them a bit or to update links. Some links may require a subscription for full access, but I try to provide at least one useful open source for most items.

Please let me know of any broken links you find -- on my Musings pages or any of my regular web pages. Personal reports are often the first way I find out about such a problem.

December 29, 2015

Can pigeons diagnose cancer by reading patient X-rays?

December 29, 2015

About as well as professional radiologists can.

Or better. According to a new article.

Here is the idea... For training, a pigeon was shown a breast X-ray image, and asked to classify it as benign or malignant. (Well, it was asked to press one of two colored bars.) If the response was correct, the bird received a food reward. Over many trials, the reward teaches the bird which answer is considered correct.

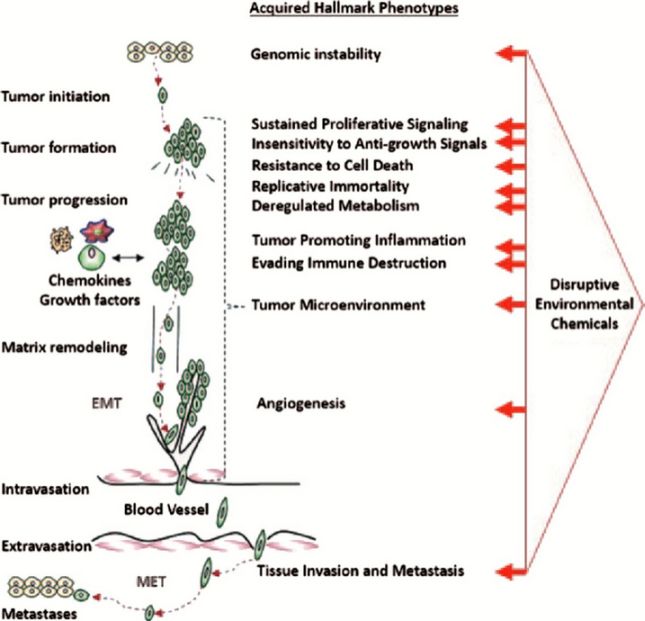

The following figure shows how the pigeons progressed through training...

The graph shows the response, as percent correct vs days of training.

There are three data sets. They are for different magnifications of the images. Importantly, these are successive training sequences; we will come back to this.

The first training was done with images at 4x magnification. This gave the results shown in the lowest curve, with circle symbols. The response rate started at about 50%, which is random. It improved with training, and reached about 85% by day 9.

After training with 4x images, the birds went through the same routine with images at higher magnifications (10x and 20x). The results for these later training sequences are shown in the upper two curves. Note that they start fairly high; this is because the birds have already been successfully trained at 4x. The birds' responses improve over a few days, and again reach a plateau, at about the same level.

This is Figure 6A from the article.

The training set-up and examples of the X-ray images are in the news stories, and also in the video listed below.

Overall, the pigeons are about 85% accurate in classifying the images as benign or malignant. That's not quite as good as what trained radiologists can do, but it's close -- and requires far less training.

Interestingly, if the results using four pigeons are combined, they are about 99% accurate. (The authors refer to this analysis as flock-sourcing.) They do not report a similar analysis for radiologists.
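Why would pooling several birds help? Here is a minimal sketch, in Python, of one simple way to combine answers: a majority vote. It is not the authors' method (the post does not describe how they pooled the responses), and it assumes that each bird is right 85% of the time, independently of the others. The function name `pooled_accuracy` and the coin-flip rule for ties are made up for this illustration.

```python
from math import comb


def pooled_accuracy(n_voters: int, p_correct: float) -> float:
    """Chance that a majority vote of independent voters is right.

    Assumption (not from the article): each voter is right with the same
    probability, independently; a tied vote is settled by a fair coin flip.
    """
    total = 0.0
    for k in range(n_voters + 1):
        # Binomial probability that exactly k of the voters are correct.
        prob_k = comb(n_voters, k) * p_correct**k * (1 - p_correct) ** (n_voters - k)
        if 2 * k > n_voters:
            total += prob_k          # clear majority is correct
        elif 2 * k == n_voters:
            total += 0.5 * prob_k    # tie: coin flip
    return total


single = pooled_accuracy(1, 0.85)
flock = pooled_accuracy(4, 0.85)
print(f"one bird: {single:.3f}, four birds voting: {flock:.3f}")
```

Under these assumptions, four voters do better than one, though simple voting alone does not reach the 99% reported; the authors' pooling presumably uses more information than a yes/no vote from each bird.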

There are other tests, and the results with the pigeons vary. To some extent, the pigeons find difficult the things the radiologists find difficult, but there is more to it.

Is this useful? I don't think that is the point. The purpose of the work is to study the capabilities of the pigeon visual system and brain. It's also a reference point for the development of computer systems to analyze images. If pigeons can analyze the images, why is it so hard to develop a computer system that can do so?

News stories:

* Pigeons diagnose breast cancer on X-rays as well as radiologists -- When "flock-sourcing," they do better, with 99 percent accuracy --- and they work for seeds. (Kurzweil, November 19, 2015.)

* Pigeons spot cancer as well as human experts. (J Bohannon, Science magazine news, November 18, 2015.)

Video: Pigeons (Columba livia) as Trainable Observers of Pathology and Radiology Breast Cancer Images. (2 minutes; well-labeled, no sound.)

The article, which is freely available: Pigeons (Columba livia) as Trainable Observers of Pathology and Radiology Breast Cancer Images. (R M Levenson et al, PLoS ONE 10:e0141357, November 18, 2015.)

More about radiologists: What if there was a gorilla in the X-rays of your lungs? (July 26, 2013).

Another example of using animals to help with medical diagnosis: Rats, bananas, and tuberculosis (March 11, 2011). (The current article refers to this work.)

Next cancer post: Why are some types of cancer more common than others? Follow-up (January 24, 2016).

My page for Biotechnology in the News (BITN) -- Other topics includes a section on Cancer. It includes a list of related posts.

Does it matter what time of day you milk the cow?

December 28, 2015

By reputation, milk induces sleep. Does that mean that milk is a drug, not just a food? If so, should we wonder whether the details of milk preparation affect its pharmacological activity?

That's the idea behind a new article, which compares the effect of cow milk on mice depending on what time of day the cow was milked.

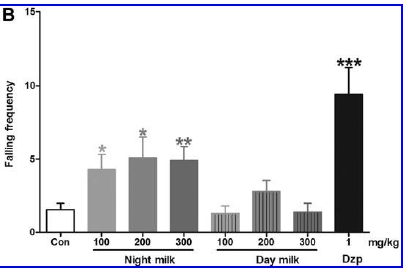

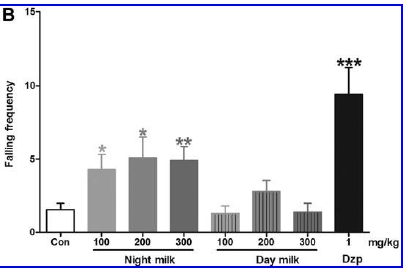

In this work, the scientists compared the effects of "Night milk" (milk collected from the cow at night) and "Day milk" on several behaviors in mice. Each behavior is related to sleep or to sedation. Here is an example...

The graph shows the effect of "Night milk" vs "Day milk" on the frequency of falling (during a special test).

The first bar (left, "Con") is a control, showing the falling frequency in untreated mice.

The last bar (right, "Dzp") shows the effect of a sedative drug (diazepam). You can see that it greatly increases the falling frequency. This is a positive control.

In between are bars for Night milk and for Day milk. For each kind of milk, there is a series of three bars, at increasing dose. You can see that all three bars for Night milk show an increase in falling frequency; the Day milk had little or no effect. (Asterisks indicate that the result is significantly different from the control.)

This is Figure 2B from the article.

I chose to discuss falling frequency here because it showed the biggest effect. Look at the graph above, and it's easy to see that there is an effect. The effects on other behaviors were small. Among the behaviors studied were the time required to fall asleep and the duration of sleep. Both showed small but statistically significant effects.

The scientists analyzed the milks. The Night milk contained about ten times more melatonin than the Day milk. The Night milk also contained about 25% more tryptophan. (These results were expected, based on earlier work.)

Are the observed effects consistent with what one might expect from the levels of melatonin and tryptophan found in the Night milk? Qualitatively, yes. Quantitatively? The authors do not address this, but it should be done. If there is agreement, it would strengthen the case that Night milk is more effective at promoting sleep. If there is not agreement, it would show that the story is incomplete.

Perhaps the most important point here is raising the question. If we are going to use milk as a drug, maybe we should examine it as carefully as we would an "ordinary" drug. It should not be a surprise that the composition of cow milk varies during the circadian cycle; whether it matters is open for testing. The current paper is a step.

News story: Night milk: milk taken from cows at night might be the sleep aid you need -- Sedative effects of night milk have not been tested on people but the high amounts of tryptophan and melatonin suggest it may be healthier than Ambien. (M Gajanan, Guardian, December 15, 2015.) Note the excessive interpretation in the sub-headline there; concentrations are facts, but "healthier" is open for discussion.

The article: Milk Collected at Night Induces Sedative and Anxiolytic-Like Effects and Augments Pentobarbital-Induced Sleeping Behavior in Mice. (I J I dela Peña et al, Journal of Medicinal Food 18:1255, October 26, 2015.)

A recent post about melatonin and sleep: How caffeine interferes with sleep (December 11, 2015).

More... Evening light: how it affects our sleep (July 30, 2019).

A recent post about milk: Breastfeeding and obesity: the HMO and microbiome connections? (November 14, 2015).

and then... Cockroach milk (August 21, 2016).

Also see...

* The nutritional value of yogurt? (September 28, 2018).

* Polled cattle -- by gene editing (July 8, 2016).

* Does it matter what time of day you get a vaccine? (October 26, 2012).

December 21, 2015

How balloons burst

December 20, 2015

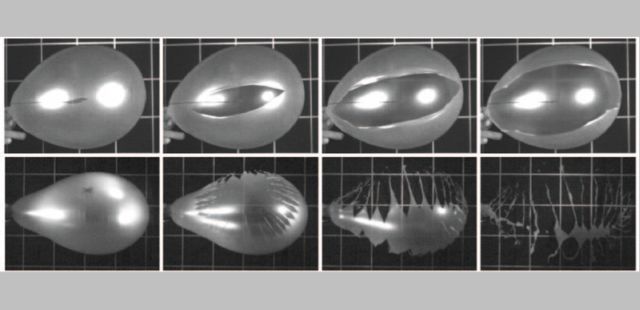

A team of physicists recently published an article on how balloons burst when punctured. The following figure shows part of the story.

Each row of the figure shows a balloon bursting. You can see the puncture site in the first (left-hand) frame.

The balloon in the top row burst along a single line or crack. The crack got larger over time (as you go from left to right).

For the balloon in the bottom row, it is more complicated. Many cracks developed, and the balloon fragmented into small pieces.

This figure is reduced from one in the news story in Physics. It also appears in the Phys.org story. Figure 2 parts a & b of the article cover about the same ground, though for a different case. That figure is labeled with times, up to 133 microseconds.

That is, balloons can burst in either of two ways when punctured -- with one crack line, or multiple cracks.

The next question is what determines the mode of bursting. To test this, the scientists developed a model balloon and a test apparatus, in order to have a reproducible system.

This is a diagram of the apparatus for testing how a balloon bursts. It is, of course, a two-dimensional cross section.

The balloon is a rubber sheet of defined properties. It is shown as a yellow stripe (labeled "membrane"). The base of the balloon is held by the "frame" (left side).

Two views of the balloon are superimposed. The uninflated balloon is entirely within the frame area; see the dotted line there. Air is added via the "air inlet" at the left. This inflates the balloon.

At the right is a "blade". When the expanding balloon reaches the blade, it pops. By varying how far the blade is from the frame, the scientists control how large the balloon is upon bursting. Of course, the balloon size is proportional to the pressure within the balloon.

"Camera 1" (at the right) records the action upon puncture, at 30,000-60,000 frames per second.

This is Figure 1 from the article.

After many tests, the general conclusion is that balloons at low pressure burst with a single crack when punctured; in contrast, balloons at high pressure burst with multiple cracks. Analysis showed that the multiple cracks arose largely by branching.

Of course, it is not simply the pressure that matters; it is the stress in the membrane. At high stress, rapid propagation of the crack leads to further fragmentation of the membrane.

This is probably the best study ever made of how balloons burst. But how things fragment is of wide interest, e.g., to engineers -- and geologists. This study, using simple materials but modern technology, offers some insight.

News story: Into how many pieces does a balloon burst? (Phys.org, November 2, 2015.)

News story from the publisher in their news magazine. Freely available at: Focus: Two Modes of Balloon Bursting Revealed. (P Ball, Physics 8:105, October 30, 2015.)

Videos. The above item, in Physics, includes two video clips from the authors. Video 1 (20 seconds) shows toy balloons bursting, one by each process; these are shown slowed down so you can follow what happens. The images in the top figure above may be from this video. Video 2 (43 seconds) shows you their experimental apparatus -- in action. The videos are well-labeled, but do not have meaningful audio.

The article: Popping Balloons: A Case Study of Dynamical Fragmentation. (S Moulinet & M Adda-Bedia, Physical Review Letters 115:184301, October 30, 2015.)

More about things bursting:

* The aroma of rain (June 13, 2015).

* Pop goes the hemozoin: the bubble test for malaria (January 24, 2014).

More on balloons: Robot uses coffee as a picker-upper (December 17, 2010).

More about stress responses: How to confuse a yeast -- a sensory illusion (January 15, 2016).

GJ 1132b: "the most important planet ever found..."

December 18, 2015

That gets attention, doesn't it? Even with the qualification that follows: " ... outside the Solar System." It's from the news story in the journal. There are so many new planets being discovered these days that it can be hard to get attention.

Yes, GJ 1132b is interesting. Perhaps not up to the standard suggested by the title here, but interesting. In fact, the authors seem to have said "most intriguing". That's more reasonable.

So what's the deal? First, GJ 1132b is about Earth-size. Moreover, it is Earth-like. It's rocky, based on measuring its density. That means it is also Venus-like.

Second, it's rather close, at least by astronomical standards. About 39 light-years away.

Third, it has an interesting Sun. GJ 1132 is a red dwarf star, small and cool. Cool star, but the planet is only a million miles away (it orbits the star every two days!).
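Do those two numbers fit together? A rough check, in Python, using Kepler's third law in solar units (distance cubed, in AU, equals star mass, in solar masses, times period squared, in years). The post does not give the star's mass; the value of about 0.18 solar masses used here is an assumption brought in for the illustration, as are the function name and the rounded conversion constants.

```python
AU_IN_MILES = 92.956e6   # one astronomical unit, in miles (rounded)
DAYS_PER_YEAR = 365.25


def orbital_distance_au(period_days: float, star_mass_solar: float) -> float:
    """Orbital distance in AU from Kepler's third law: a^3 = M * P^2.

    Uses solar units (AU, years, solar masses) and neglects the planet's mass.
    """
    period_years = period_days / DAYS_PER_YEAR
    return (star_mass_solar * period_years**2) ** (1.0 / 3.0)


# Period of two days is from the post; the stellar mass is an ASSUMED value.
distance_au = orbital_distance_au(period_days=2.0, star_mass_solar=0.18)
distance_miles = distance_au * AU_IN_MILES
print(f"about {distance_au:.4f} AU, or {distance_miles / 1e6:.1f} million miles")
```

With these round inputs the answer comes out between one and two million miles, so "only a million miles" and "every two days" are consistent as round numbers.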

What's the point? The temperature on GJ 1132b may be about 230 °C. That is too hot for life (or for liquid water), but it makes GJ 1132b the coolest rocky planet yet found. It's cool enough that it may well have an atmosphere -- and that's why it is interesting. An atmosphere, and close enough to us that we should be able to study it. That its star is small and dim is a bonus; the starlight interference will be smaller. It would be the first case where we are able to get information about the atmosphere of an Earth-like planet beyond our Solar System. That's interesting, even intriguing.

Planet GJ 1132b is referred to both as Earth-like and Venus-like. Of course, in terms of size, it is both. Earth-like is a major focus for planet hunters. The new planet is too hot for life. Venus is hot with an atmosphere; that's why some refer to it as Venus-like.

The news stories listed below are good at describing why the new planet is interesting. The article itself documents the technical information.

News stories:

* Astronomers eager to get a whiff of newfound Venus-like planet. (Science Daily, November 11, 2015.)

* A Relatively Nearby Earth-Sized Planet. (P Gilster, Centauri Dreams, November 11, 2015.)

* News story accompanying the article: Astronomy -- A small star with an Earth-like planet. (D Deming, Nature 527:169, November 12, 2015.)

* The article: A rocky planet transiting a nearby low-mass star. (Z K Berta-Thompson et al, Nature 527:204, November 12, 2015.) Check Google Scholar for a freely available copy at ArXiv.

More from a dwarf star... Habitable planets very close to a star (June 19, 2016).

A post about Venus: Sulfur dioxide in the atmosphere of Venus (February 16, 2013). Links to more.

A recent post about exo-planets: Most Earth-like (habitable) planets haven't formed yet (October 27, 2015).

Also see... Atmosphere suggests planet might harbor life (August 30, 2010). Discusses how one studies the atmosphere.

December 16, 2015

How vitamin C kills cancer

December 15, 2015

You're surprised by the title?

There is a long and fascinating story about vitamin C and cancer. The bottom line is that mainstream science has largely given up on the vitamin for cancer treatment.

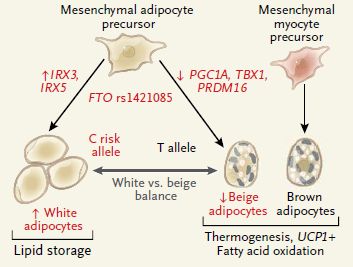

So what's new? A new article shows how it works. And in this case, "how" includes "when". That is, the new article suggests that vitamin C might work against particular types of cancer -- but not others.

The following experiment shows one part of the story...

The graph shows the uptake of vitamin C by two human cancer cell lines, grown in lab culture. These particular cell lines are known to have high levels of a glucose transporter called GLUT1.

Three conditions were tested. The two colored bars for each condition are for the two cell lines. The results for the two cell lines were similar in each case, so we'll consider them together.

The first condition (left) is the control. Just vitamin C was added; see the key at the bottom. The amount transported into the cells was set to 1.

For the second condition (middle), glutathione (GSH) was also added. It is an anti-oxidant (reducing agent). Its presence ensures that all the vitamin C is in the reduced form; none is in the oxidized form, dehydroascorbate (DHA). The addition of GSH resulted in much less transport of vitamin C; this suggests that it is the oxidized form that was being transported.

For the third condition (right), STF31 was also added. STF31 is an inhibitor of the GLUT1 transporter. It also resulted in much less transport of vitamin C. This shows that it is the GLUT1 transporter that is involved.

This is Figure 1A from the article.

Together, the results shown above suggest that the oxidized form of vitamin C is being transported into the cells, using the GLUT1 transporter. And the cell lines used here carry mutations that lead to high levels of that transporter.

Why is this interesting? The scientists go on to show that transporting large amounts of oxidized vitamin C (DHA) into cells can inhibit them, even kill them. High levels of the oxidized form create oxidative stress, upsetting the redox (oxidation-reduction) balance in the cells, which can lead to cell death. The effect is selective: it kills cells with a high level of the GLUT1 transporter, because those are the cells that can take up large amounts of the oxidized vitamin.

What are these cells that can transport vitamin C so well -- to their own detriment? They are certain kinds of cancer cells, with certain specific mutations.

Is it possible, then, that vitamin C would inhibit the growth of cancers with these mutations? To test this, the scientists used a model system in mice. It worked.

It is an interesting scientific article. The scientists do many experiments, and develop many ideas. It follows from the work that vitamin C might be a useful treatment for certain cancers. But it doesn't prove that it will work "in the real world". Even if all their biochemical insights are correct, it doesn't mean that the effect will be useful. A mouse test is interesting, but mouse testing has a poor track record in predicting cancer treatments.

This needs to be tested in humans. I suspect that will get done. It will be done using cancers that have been pre-screened to see if they carry mutations that lead to high levels of the GLUT1 transporter. That's the key point. The article suggests which cancers may be susceptible to vitamin C. A test needs to focus on those cancers.

There is an incentive to follow up these findings. The mutations studied here are usually associated with cancers that are currently hard to treat.

Is it possible that old trials of vitamin C could be re-analyzed in the light of this new information? That is, in those old trials, were the positive results more prevalent in the cancers with the high GLUT1 level? In principle, it is possible to do that analysis -- if DNA can be isolated from stored samples. I have no idea if such samples are available.

News stories:

* Vitamin C halts growth of aggressive forms of colorectal cancer in preclinical study. (Medical Xpress, November 6, 2015.)

* High-Dose Vitamin C Kills Mutant Colorectal Cancer Cells, Suggesting New Treatment Approach. (L Bushak, Medical Daily, November 6, 2015.) Includes some skepticism about the proposed treatment, but it seems based more on history than on criticism of the new findings.

* News story in the journal previewing the article: Vitamin C could target some common cancers. (J Kaiser, Science 350:619, November 6, 2015.)

* News story accompanying the article: Cancer: Revisiting vitamin C and cancer. (C R Reczek & N S Chandel, Science 350:1317, December 11, 2015.)

* The article: Vitamin C selectively kills KRAS and BRAF mutant colorectal cancer cells by targeting GAPDH. (J Yun et al, Science 350:1391, December 11, 2015.)

Previous cancer post... The WHO report on the possible carcinogenicity of meat (December 12, 2015). That's the post immediately below.

A post that mentions vitamin C -- and says it doesn't work against cancer: Is folic acid good for you or bad for you? (April 10, 2010).

Oxidative stress and the anti-oxidant glutathione were discussed in the post Are birds adapting to the radiation at Chernobyl? (August 3, 2014).

But also see... Anti-oxidants and cancer? (October 18, 2015). Anti-oxidants are a complex issue. The balance is important.

My page for Biotechnology in the News (BITN) -- Other topics includes a section on Cancer. It includes a list of related posts.

The WHO report on the possible carcinogenicity of meat

December 12, 2015

It was a big news story a few weeks ago: the World Health Organization (WHO) reported that processed meat is carcinogenic, and red meat may be carcinogenic.

It's an interesting story, even important. But it is also confusing. Here are some comments about the report. They are based on my reading of some of the materials, plus listening to an hour-long discussion of it; one of the panelists was on the WHO team that prepared the report.

It's important to keep fact and opinion separate with such a story, so let's start with some facts...

Preparing such reports is a normal activity of WHO. More specifically, it is an activity of the International Agency for Research on Cancer (IARC), within WHO. The IARC team that prepared the report is a team of scientists.

WHO did not test anything. They did not do any experiments. The report is based on an analysis of what has been published. WHO has standards for evaluating the quality of trials, and they have rules that guide their decision-making process.

WHO defines their terms. It is important to understand what it is they claim. Their definition of "processed meat" may be especially important.

The report has not yet been published. There are summaries available. The full report will be published, in a WHO monograph; I do not know when.

Ok, those are facts. Let's look at some of the issues. This part may include my analysis and opinions.

The main findings are stated as broad generalities: processed meat is classified as a human carcinogen; red meat is classified as a probable carcinogen, one step down in confidence. These characterizations are based on standards established by WHO.

They have compared processed meat with cigarettes -- and caused considerable confusion by doing so. The comparison is intended to reflect the confidence in the conclusion, not the magnitude. That is, they are just as confident that their analysis shows processed meat is carcinogenic as they are for cigarettes. However, the magnitudes are very different.

Here is an example of a magnitude, using round numbers. If one eats 50 grams of processed meat per day over a lifetime, there is (says the report) a 20% increase in the risk of colorectal cancer. That 20% is a relative increase. Since the baseline lifetime risk is about 5%, it becomes about 6% with the consumption of processed meat: an absolute increase of one percentage point. (The 50 grams, or about 2 ounces, is about two strips of bacon.) These magnitude numbers are sometimes reported in a way that is not clear. It is not two strips of bacon, but two strips of bacon per day over a lifetime. It is important to understand the dose that is involved.
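The arithmetic behind those round numbers can be written out as a small Python sketch. The numbers are the ones quoted above; the function and variable names are simply made up for the illustration.

```python
def risk_with_exposure(baseline: float, relative_increase: float) -> float:
    """Absolute risk after applying a relative increase to a baseline risk."""
    return baseline * (1.0 + relative_increase)


baseline = 0.05            # about 5% baseline lifetime risk (round number)
relative_increase = 0.20   # about 20% relative increase (round number)

with_meat = risk_with_exposure(baseline, relative_increase)
extra = with_meat - baseline

print(f"risk without: {baseline:.0%}, with: {with_meat:.0%}")
print(f"absolute increase: {extra * 100:.0f} percentage point(s)")
```

The point of separating the two is that a "20% increase" and a "one percentage point increase" describe the same effect; the first sounds much larger if the baseline is not stated.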

A concern about that statement is what "processed meat" refers to. The report is very clear in saying what they mean, but it is not what most people would mean by the term. For the report, it refers to a wide variety of meats, processed in various ways. (Processing is traditionally done for preservation, but it may also be done for taste.) But that's confusing. Meats are processed in various ways. There is no reason why the various treatments should have similar effects. One would presume that some forms of meat processing are more carcinogenic than others. Lumping them is confusing. Why did they do this? Apparently because they were unable to reach conclusions for individual types of processed meats. That may well reflect limitations of the tests that they analyzed; remember, they did not do any tests, but merely analyzed what was already reported.

This leads to another point of interest... Why are processed meats carcinogenic? Or, better, why is a particular type of processed meat carcinogenic? In fact, there has long been evidence that certain types of "curing", using nitrates, could lead to the formation of nitrosamines, a type of chemical that is known to be carcinogenic. In one sense, it might not matter why something is carcinogenic. If it is, let's avoid it. But in this case, they claim that a heterogeneous class of things is carcinogenic. It really would be nice to sort that out.

What about meat, which they conclude is a probable carcinogen? What meat? Red meat, which means mammalian muscle. The stuff we typically eat, from cows and pigs and such (but not birds).

It's odd that meat is carcinogenic. If meat means mammalian muscle, that's us. That would mean we are carcinogenic. Now, maybe it's only carcinogenic to the digestive system. Maybe.

This gets us back to the issue: why is it carcinogenic? In fact, it's well known why meat is carcinogenic -- at least one reason. It's the way we cook it. High temperature treatment of meat leads to "char", which is quite likely carcinogenic. If this is the main reason they are getting a signal from meat, wouldn't it be good to make a distinction between "meat is carcinogenic" and "how we cook meat makes it carcinogenic"? (That also gets us out of the hole of ourselves being carcinogenic.)

Some of the points I raised above were among my first thoughts when I heard about the report. Interestingly, they came up during the discussion; the panel member who was involved in the report understood the points, and had proper responses. The comments are not criticisms of the report as much as a reflection on the state of our knowledge. (However, I do wonder why they say some things the way they do. They have a penchant for saying things that are predictably confusing.)

Eating meat is a normal activity for many animals (and even an occasional plant). Cooking it and preserving it are not. (Cooking makes meat more digestible, and we like the taste. Treatment of meat to preserve it was an important development for mankind, before refrigeration.) How much of what WHO found is fundamental to meat, and how much is due to the things we do to it -- all with the best of intentions?

What to do? We can divide that into parts: what an individual should do and what the scientific community should do.

The effects described here are small. If you want to reduce your consumption of meat and especially processed meat, fine; it's not likely it will make much difference in your risk of cancer, but it is a proper step. Further, it would be good to compare the effect with other risks you take. Those who might consume unusually large amounts of meat or processed meats would have a stronger case; it would also be good to learn about other problems from eating large amounts of meat, which are beyond our scope here.

The more important implications are for the scientific community. The work raises lots of questions.

News stories:

* WHO Confirms Eating Meat Causes Cancer, But How Did This Once Healthy Food Become So Deadly? (D Dovey, Medical Daily, October 26, 2015.)

* Processed meat can cause cancer. (Science Daily, October 27, 2015.)

A Q&A posted by WHO at their web site: Q&A on the carcinogenicity of the consumption of red meat and processed meat. (IARC, WHO, 2015.) This is good, though at times you may have the feeling you are dealing with a government report.

News story published by the WHO panel in a scientific journal: Carcinogenicity of consumption of red and processed meat. (V Bouvard et al, Lancet Oncology 16:1599, December 2015.) Check Google Scholar for a freely available copy. Two pages; a good overview by the authors of the study. As noted, their full report will be published separately, and is not yet available.

More about meat...

* Growing meat without an animal? (April 11, 2018).

* Red meat and heart disease: carnitine, your gut bacteria, and TMAO (May 21, 2013).

* Carnivorous algae -- that hunt large animals (October 7, 2012).

More about the WHO, a political organization that deals with science: The role of WHO: the view of its director (December 1, 2015).

And then... Is glyphosate (Roundup) a carcinogen? (March 6, 2016).

Next cancer post... How vitamin C kills cancer (December 15, 2015). This is the post immediately above.

My page for Biotechnology in the News (BITN) -- Other topics includes a section on Cancer. It includes a list of related posts.

My page Internet resources: Biology - Miscellaneous contains a section on Nutrition; Food safety. It includes a list of relevant Musings posts.

How caffeine interferes with sleep

December 11, 2015

Drinking coffee late in the day can keep you awake. A recent article explores why.

Body physiology varies during the day. That's the circadian rhythm. (Circa-dia = about a day.) We have a natural cycle of sleeping and waking. (People vary; for example, there are morning people and night people.) Jet lag is a manifestation of our circadian rhythm. Melatonin is a hormone involved in our circadian rhythm; it is sometimes used to treat jet lag.

The article here looks at the effect of caffeine on melatonin. We will note just one experiment, on the effect of caffeine on the melatonin cycle in humans. ("Melatonin cycle"? It is a specific measure of the melatonin level, one that marks the beginning of night as the circadian system sees it.)

The general idea is that the scientists gave the test subjects a dose of caffeine and measured the effect on melatonin. We'll skip some details, so don't make much of the specific numbers. Here are some results...

The graph shows the effect of various treatments on the melatonin cycle. The y-axis is a time scale, showing the shift in the melatonin cycle, in hours. Negative values mean that the effect is to delay sleep.

The treatments involve light and caffeine.

The first bar (left, clear) is for "dim light". It has little effect; you can even take this as a control.

The second bar (dark) is for dim light plus caffeine. (The first condition included a placebo for the caffeine.) There is a big change in the melatonin cycle. That's the key point.

The last two bars (to the right, bars with diagonal lines) are for bright light, without or with caffeine. Both show a greater effect; they are not significantly different from each other.

This is Figure 2A from the article.

In summary, the results show that caffeine affects the melatonin cycle, as does light. The direction of the effect is to delay sleep. Of course, light is a normal controller of the circadian rhythm. The results here show that caffeine acts like light. That is, caffeine acts on sleep via the common circadian rhythm. Thus we begin to see why a late cup of coffee can keep you awake.

The experiment above was done shortly before bedtime. At that time of day, light delays sleep -- and so does caffeine. The scientists have not (yet) tested the effect of caffeine on the morning part of the melatonin cycle.

News story: How Caffeine Affects the Body Clock -- Evening consumption of the drug leads human circadian rhythms to lag. (R Williams, The Scientist, September 16, 2015. Link is now to Internet Archive.)

The article: Effects of caffeine on the human circadian clock in vivo and in vitro. (T M Burke et al, Science Translational Medicine 7:305ra146, September 16, 2015.)

Posts on circadian rhythms or melatonin include:

* Evening light: how it affects our sleep (July 30, 2019).

* The genetics of being a "morning person"? (April 15, 2016).

* Does it matter what time of day you milk the cow? (December 28, 2015).

* Melatonin and circadian rhythms -- in ocean plankton (November 24, 2014).

* Sleepy teenagers (July 23, 2010).

Posts on caffeine or coffee include...

* Using caffeine to treat premature babies: risk of neurological effects? (April 27, 2019).

* Good news on the coffee front: Coffee is good for you (March 15, 2016).

* Your desire for caffeine: It may be in your genes (May 31, 2011). This post makes a connection between caffeine and adenosine; if you pursue the current article further, that connection appears again.

* Robot uses coffee as a picker-upper (December 17, 2010).

December 9, 2015

A better way to oxidize americium? A step toward improved processing of nuclear reactor waste?

December 7, 2015

Americium (element #95, symbol Am) is an important waste product from nuclear reactors. It is very "hot". It is also hard to separate from several other elements in the crude waste. That's because it is commonly in the +3 (or III) form (oxidation state), and it behaves much like several other elements that are +3.

It should be possible to oxidize Am to a higher oxidation state, V or VI, thus allowing its chemical separation from those other elements, which can't be oxidized. However, it has proven difficult to do so in practice.

A new article reports an interesting development, which may allow a practical oxidation of Am(III) to the higher oxidation states. It has been known that the oxidation is easier if the Am is in a complex. What the scientists did here was to attach the complexing agent to the electrode. Thus, the Am(III) being oxidized at the electrode was complexed. The oxidized Am could be removed, but the complexing agent stayed on the electrode. This simplifies purification of the oxidized Am, and also makes it easy to reuse the complexing agent. The major product is Am(V), in the form of AmO2+.
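
For readers who want the electron bookkeeping, the oxidation steps described above can be written as half-reactions. This is a sketch using standard balancing in acidic aqueous solution (the article's title mentions nitric acid); the equations are not taken from the article, and they leave out the complexing agent on the electrode.

```latex
\begin{align*}
\mathrm{Am^{3+} + 2\,H_2O} &\longrightarrow \mathrm{AmO_2^{+} + 4\,H^{+} + 2\,e^{-}} && \text{Am(III) to Am(V)}\\
\mathrm{AmO_2^{+}} &\longrightarrow \mathrm{AmO_2^{2+} + e^{-}} && \text{Am(V) to Am(VI)}
\end{align*}
```

Going from Am(III) to Am(V) removes two electrons per atom; going on to Am(VI) removes one more.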

The following figure shows some results, and also illustrates how the scientists did the analysis -- by looking at the color change.

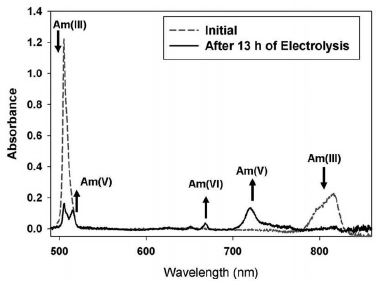

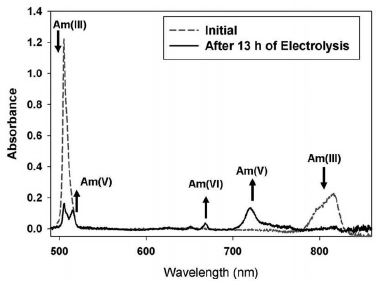

The figure shows two spectra, superimposed on the same graph. One is for the Am sample before the treatment (dashed line); the other is for the same sample after the oxidation (solid line).

Quick inspection shows that the two curves are different.

Arrows point to some of the key changes. There are two peaks that are characteristic of the initial Am(III), one each at about 500 nm and 800 nm. Both of these largely disappear during the treatment. There are various peaks for Am(V) and Am(VI), which appear during the treatment.

This is Figure 4, right side, from the article.

This is an interesting solution to an interesting chemistry problem. The context of the problem is also interesting. The news story in Science spends considerable time outlining how nuclear reactor waste is processed -- and how a practical way to separate americium could be a step toward a much improved process of waste disposal. The second figure of that news story outlines how they envision a grand scheme for nuclear waste treatment. Here is that figure: Scheme for treatment of nuclear reactor waste [link opens in new window]. The "big idea" is that, with proper separations, only small amounts of waste need the extremely long term storage that we often hear about.

News story: Functionalized porous electrode used for radioactive waste product. (H Zeiger, Phys.org, November 17, 2015.)

* News story accompanying the article: Nuclear fuels: How to isolate americium -- An electrolytic process enables isolation of the radioactive element americium from used nuclear fuel. (C Soderquist, Science 350:635, November 6, 2015.) As noted above, this provides a nice overview of the problem of what to do with waste nuclear fuel.

* The article: Electrochemical oxidation of 243Am(III) in nitric acid by a terpyridyl-derivatized electrode. (C J Dares et al, Science 350:652, November 6, 2015.)

You may recognize americium as a component of common smoke detectors. Would the present work impact the production of those devices? In principle, perhaps, but the amount of Am used for that purpose is actually quite small. Each smoke detector contains less than one (US) cent's worth of Am. Existing stockpiles of Am are enough to satisfy this need for a long time.

Other posts perhaps related to nuclear energy include:

* A nuclear-powered battery for your phone? (October 9, 2024). And it is about americium.

* Analysis of uranium samples from World War II Germany (November 7, 2015).

* Radioactivity released into ocean from Fukushima nuclear accident reaches North America (March 23, 2015).

My page of Introductory Chemistry Internet resources includes a section on Nucleosynthesis; astrochemistry; nuclear energy; radioactivity. It includes a list of related Musings posts.

This post is also listed there in the section Lanthanoids and actinoids.

There is a section of my page Internet Resources for Organic and Biochemistry on Energy resources. It includes a list of some related Musings posts.

When the laundry piles up... An econophysics critique of smart meters

December 6, 2015

This post is about an article in a physics journal; the topic is when you should do the laundry. The authors didn't actually do any laundry; this is a theoretical analysis of laundry, using the tools of econophysics, whatever that is. In all seriousness, it is an interesting and provocative analysis of a current issue in energy resources.

What's the real issue? Smart meters. These are meters that measure your electricity usage and report it continuously back to your electricity company. This lets the company bill you using different prices per unit of electricity depending on when you use it. The idea is that electricity is more valuable during certain times, referred to as peak usage; therefore, peak-usage electricity should cost more. If you do your laundry at night, during off-peak times, they will charge you less than if you do it during the day, when demand is high.

In fact, your smart meter can do more than that. It can turn your washing machine on all by itself, presumably late at night when electricity is cheap. (This assumes that your washing machine is intelligent enough to understand the smart meter; modern washing machines are, or soon will be.)

We understand that power plants have a finite capacity, and that usage patterns vary. For things where you can choose, it is good to use electricity when demand is lower. And it is reasonable that the electricity company charges less for off-peak usage, to encourage you to make that choice. Sounds good. Or is it?

A new article, from the Institut für Theoretische Physik, Universität Bremen, challenges the alleged benefit. The authors argue that attempts to control electricity usage using feedback from the usage level could backfire: it could lead to catastrophic swings in usage, which would defeat the purpose. Imagine the following, extreme scenario... usage declines, so the price is reduced. Everyone turns on their washing machine -- manually or automatically, it doesn't matter; the point is that the lower price triggers demand. The result? A higher overall demand than happens during the usual peak period.

The authors show the effect by computer modeling. It makes sense.
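
To get a feel for the kind of swing described above, here is a toy simulation. It is only a sketch, and it is not the model used in the article: the daily demand curve, the price rule (price simply follows the previous hour's demand), the threshold, and the function name `simulate` are all made up for illustration.

```python
import math

def simulate(hours=72, feedback=True, flexible_per_hour=1.0, price_threshold=10.0):
    """Hourly total demand in a toy grid with deferrable 'laundry' load.

    All numbers are invented for illustration; this is not the article's model.
    """
    backlog = 0.0            # laundry waiting to be done
    previous_demand = 10.0   # the price signal lags demand by one hour
    history = []
    for hour in range(hours):
        # Non-deferrable demand follows a simple daily cycle.
        base = 10.0 + 3.0 * math.sin(2.0 * math.pi * hour / 24.0)
        backlog += flexible_per_hour
        if feedback:
            # Price tracks last hour's demand; a low price triggers
            # every waiting machine at the same moment.
            price = previous_demand
            flexible = backlog if price < price_threshold else 0.0
        else:
            # No price signal: laundry is done as it arrives.
            flexible = backlog
        backlog -= flexible
        total = base + flexible
        history.append(total)
        previous_demand = total
    return history

peak_without = max(simulate(feedback=False))
peak_with = max(simulate(feedback=True))
print(f"peak demand without price feedback: {peak_without:.1f}")
print(f"peak demand with price feedback:    {peak_with:.1f}")
```

In this toy setup the laundry piles up while the price is high, then all runs at once when the price drops, so the peak with feedback is higher than the ordinary daily peak. A gentler feedback rule would behave differently; that is the "details matter" point.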

Comment... Isn't this just a problem with the nature of the feedback? This is all about computer software, at the electric company. It monitors usage and sends out commands (or information) that adjust usage. That makes some sense. (We'll ignore privacy concerns here. In particular, we'll assume that accepting usage commands is voluntary.) If the company computer sends out commands to use more electricity than is available, that's not so good. Surely, good software can figure that out.

Smart meters have been the subject of considerable debate, with good arguments for them, and some concerns. The current article raises an interesting issue, but it should be solvable. It's one thing to suggest that feedback from usage to price may be good, but that doesn't mean that any feedback procedure that is suggested is good; details matter.

News stories:

* A seemingly obvious way to make the electricity market better may actually make it worse. (L Zyga, Phys.org, July 23, 2015.)

* Smart Meter Time Varying Pricing Can Lead to "Catastrophic Consequences" for the Grid. (K T Weaver, Smart Grid Awareness, July 27, 2015.) This is from an anti-smart meter site. Despite the obvious bias, it's a useful presentation. Just be aware that it presents only part of the story.

The article: Econophysics of adaptive power markets: When a market does not dampen fluctuations but amplifies them. (S M Krause et al, Physical Review E 92:012815, July 22, 2015.) Check Google Scholar for a copy.

Previous post about laundry: Folding towels (April 10, 2010).

Also see a section of my page Internet Resources for Organic and Biochemistry on Energy resources. It includes a list of some related Musings posts.

Can one tell if a fingerprint is from a male or a female?

December 4, 2015

Fingerprints are a mainstay of police work. The physical pattern of a fingerprint is compared to a database of known prints. There is no way to derive that pattern from any knowledge about the person.

There is now some effort to see what we can learn from analysis of what is in a fingerprint -- the chemicals left from sweat, for example. A new article shows that one can learn the sex of the person leaving a fingerprint from a simple biochemical analysis.

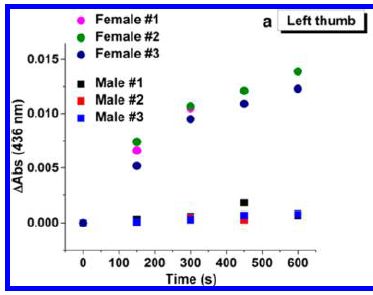

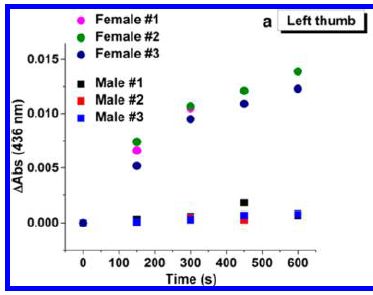

The figure shows some results with real fingerprints.

The graph shows the assay response vs time, using samples from fingerprints of three females and three males.

The results for samples from females are shown with circle symbols of various colors; male samples are shown with squares.

You can see that the female samples give a higher signal; it is clear at the first time point (150 seconds).

This is Figure 3a from the article; it shows results for left thumbs. Part b shows right thumb results for the same people; they are quite similar.

What is the analysis? It involves extracting the amino acids from the prints. The extracted samples are then tested for the level of amino acids. This is done using an enzyme that oxidizes the amino acids, with the enzyme action being coupled to the production of a visibly colored product.

The y-axis on the graph shows the amount of color seen in the analysis. The results here were recorded with a spectrophotometer, but it is likely that a practical test could be developed based on visual distinction. In fact, the entire assay is simple, and could probably be developed in something like a dipstick format.

It's simple, and seems to work. Is it useful? It is part of a broader effort to, literally, extract more information from fingerprints than simply the pattern. The article establishes that it may work. What the limitations are is less clear. The authors note that their results are for samples from Caucasians. That limitation can easily be tested, but who knows what it will reveal. I wonder what old prints might do. Is it possible that old prints from females may have lost some of their amino acids, and appear male? Extensive testing will ultimately tell us. For now, this is an interesting development; take it with plenty of caution.

News stories:

* Fingerprints Yield Sex Info -- The amino acids left behind in a human fingerprint can be used to determine whether an individual is male or female. (K Zusi, The Scientist, November 5, 2015. Now archived.) A short note.

* Male or female: Gender fingerprinting. (David Bradley, Wiley Analytical Science Magazine, November 30, 2015.)

* Review: Forensic Identification of Gender from Fingerprints. (Emily C Lennert, Florida Forensic Science (Orange County Public Defender, Orlando), July 26, 2017.)

The article: Forensic Identification of Gender from Fingerprints. (C Huynh et al, Analytical Chemistry 87:11531, November 17, 2015.)

More about fingerprints:

* Briefly noted... How fingerprints develop (June 2, 2023).

* Why some people don't leave fingerprints (September 19, 2011).

* Fingerprints (April 2, 2010). Forensic use.

More forensic science: How easy is it to destroy any traces of 43 students by burning them? (October 25, 2016).

December 2, 2015

The role of WHO: the view of its director

December 1, 2015

The World Health Organization (WHO) is an agency of the United Nations (UN). It has been much criticized for how it handled the Ebola outbreak -- and that wasn't the first time. Fact is, WHO is a political organization -- and it behaves as such.

Science magazine recently did an interview with the head of WHO. It's interesting. In offering it here, I am not endorsing anything or anyone, but merely allowing one relevant party to speak. We need to think about how the world community handles medical issues; WHO is part of the effort.

Interview, freely available: In wake of Ebola epidemic, Margaret Chan wants countries to put their money where their mouth is. (K Kupferschmidt, Science Insider, October 14, 2015.) This is an interview with Margaret Chan, WHO director-general, by a member of the news staff at Science. A short version of the interview appeared in the magazine, 350:495, October 30, 2015. The interview also briefly discusses the problem of increasing antibiotic resistance.

Recent Ebola post, which is relevant here: After Ebola, what next? and how will we react? (September 5, 2015).

There is a section on my page Biotechnology in the News (BITN) -- Other topics for Ebola and Marburg (and Lassa). That section links to related Musings posts, and to good sources of information and news.

Something good WHO did... International relations: sharing flu viruses (May 28, 2011).

More WHO...

* WHO: We need to prepare for Disease X (May 13, 2018).

* The WHO report on the possible carcinogenicity of meat (December 12, 2015).

The "hobbits": dentition suggests they were a distinct, dwarfed human species

November 30, 2015

"Hobbits" is a nickname given to some small hominins (human-like animals) found on Flores Island in Indonesia; they are known only from some fossils, estimated age about 18,000 years. Their relationship to modern humans is disputed. One hypothesis is that they represent modern humans, but in a diseased state. Alternatively, they might be a new species, with one or another connection to the lineage of modern humans. For those favoring species status, the hobbits are Homo floresiensis. These alternatives have been noted in various Musings posts; one is listed as background at the end here, and it links to the others.

Distinguishing among the hypotheses requires evidence, and that is hard to come by from the limited samples.

A new article reports a thorough analysis of the teeth available from four hobbit specimens.

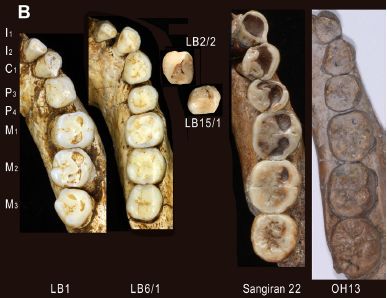

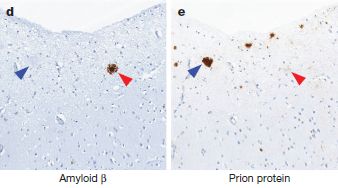

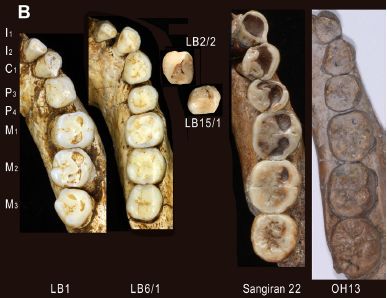

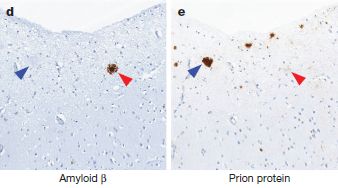

The figure shows teeth from four Homo floresiensis individuals (coded with LB numbers). It also shows teeth from early Javanese H. erectus (Sangiran) and H. habilis (OH).

The teeth are aligned, so similar teeth are in a horizontal row; they are coded at the left with standard tooth-numbering.

This is Figure 9B from the article.

Don't try too hard to make much of this from the pictures. The scientists took detailed measurements of all these teeth and more, and fed them to a computer for comparative analysis.

Upon integrating all the data, the scientists concluded that the hobbit teeth are quite distinct from the teeth of modern humans (Homo sapiens). This argues against the model that the hobbits are diseased or deformed forms of modern humans.

They then suggest that the teeth are most likely from the line Homo erectus, known to be in the region. They prefer a model in which the hobbits represent a dwarfed version of H. erectus that developed in the confines of the island. If this holds, it would mean that the hobbits are not particularly close to modern humans, but rather are a side branch. It's interesting that a distinct species of Homo co-existed with "us" only 18,000 years ago.

"... [T]he dental remains from multiple individuals indicate that H. floresiensis had primitive canine-premolar and advanced molar morphologies, a combination of dental traits unknown in any other hominin species. The primitive aspects are comparable to H. erectus from the Early Pleistocene..." From the abstract of the article.

This is a serious analysis, and provides much information about the puzzle. Whether the conclusions from this work are correct remains to be seen. I suspect that the interpretation of the results will prove contentious.

Delightfully, from the Introduction of the article, referring to the full set of hobbit features, not just the dentition... "Researchers agree that this unique mosaic has significant evolutionary meaning, but disagree on what it is."

News story: Dental analysis suggests Homo floresiensis was a separate species from modern man. (B Yirka, Phys.org, November 20, 2015.)

The article, which is freely available: Unique Dental Morphology of Homo floresiensis and Its Evolutionary Implications. (Y Kaifu et al, PLoS ONE 10:e0141614, November 18, 2015.)

Background post on the hobbits: The little people of Indonesia (May 14, 2009). Includes a list of all Musings posts on the hobbits.

Next... Homo floresiensis -- revised dating of the original "hobbit" site (June 25, 2016).

Posts about teeth from ancient humans include...

* The case of the missing incisors: what does it mean? (September 13, 2013).

* Analysis of teeth confirms that Regourdou was right-handed (September 7, 2012).

More old teeth. Many more... Helicoprion -- a fish with 117 teeth, arranged in a spiral (March 9, 2013). Links to a follow-up.

Claiming knowledge one does not have: it's particularly common with those who think they are experts

November 29, 2015

What do you think of the "1975 Public Affairs Act"?

When a representative group of Americans was asked that, in the late 1970s when it might have been current, a third of the respondents expressed an opinion, one way or the other. That is, a third of those questioned claimed some knowledge of the Act.

That's an interesting finding -- because there is no such Act.

That is an example of people claiming knowledge they don't have. It's a nice example, because it is easy to tell whether they really have the knowledge they claim: there is no such Act. This phenomenon is called over-claiming.

A recent article extends the study of over-claiming to explore the effect of expertise. Are those who think they know a field well less likely to make excessive claims of their knowledge in the field -- or more likely? More likely, say the results. That is, "experts" are more likely to over-claim knowledge than non-experts.

It's an odd article, in that most of the results, which are quantitative, are presented as narrative, with almost no tables or figures.

Here's a brief summary of one experiment... Test subjects were randomly assigned to two groups. Each group was given a little geography quiz. After taking the quiz, they were asked to rate their knowledge of geography. One quiz was quite easy, the other was quite difficult. Those who took the easy quiz rated themselves as more knowledgeable about geography than did those who took the difficult quiz. That is, it seems that the easy quiz induced a feeling of expertise in the subject matter. Both groups were then asked to rate their familiarity with some geographical locations, some real and some not. The group that took the easy quiz was more likely to claim knowledge of non-existent locations. The authors interpret this as showing that the feeling of expertise induced by the easy quiz led to an increase in over-claiming.

The work describes a phenomenon. It does not explain it. Even the finding that it relates to one's perception of expertise is more description than explanation. It does not tell us whether people over-claim knowledge because it is human nature to do so, or because our culture (education?) teaches us to do so, or who knows what. In particular, there is no claim that it is because people deliberately lie.

Perhaps you are aware of the phenomenon. You may see it in politicians and in advocates for positions you disagree with. Yes, that's all probably true. But be careful. You may well show it, too -- along with those on your side. Whatever the reason, it is likely common. We don't carefully and critically examine everything we know. At the very least, when it matters, you should do so. When someone's "knowledge" is questioned, it would make sense to go check, go look it up.

Science, at least collectively, understands this. It is fundamental in science that knowledge is tentative, subject to questioning and further work. Of course, individual scientists may well express high confidence in their knowledge, for better or worse. And our education system tends to transmit "facts", often with little understanding of their source or certainty.

It's an intriguing article. Sorting out the causes of over-claiming is for future work. For now, we simply recognize the phenomenon of claiming knowledge one does not have. And we recognize that being knowledgeable does not prevent over-claiming; it may even make it worse.

"Continuing to explore when and why individuals overclaim may prove important in battling that great menace -- not ignorance, but the illusion of knowledge." That's the final sentence of the article.

You might wonder, regarding the story at the top of this post... Maybe there was something of a similar name that was in the news. That is, maybe this was confusion, not over-claiming. That is a proper kind of question to ask. I don't know in this case (but one can check it out, in the reference quoted in the current article). We must emphasize that the phenomenon of over-claiming is not based on one such story, but on much accumulated evidence.

Is over-claiming related to one's genuine knowledge of the subject, or to one's self-evaluation of expertise? Those are somewhat distinct issues, and the article discusses both. The particular experiment above focuses on the latter.

News stories:

* Self-proclaimed experts more vulnerable to the illusion of knowledge. (Science Daily, July 20, 2015.)

* 'Learned' people easily may claim facts impossible to know. (B Friedlander, Cornell Chronicle, June 11, 2015.) From one of the institutions involved in the work.

The article: When Knowledge Knows No Bounds: Self-Perceived Expertise Predicts Claims of Impossible Knowledge. (S Atir et al, Psychological Science 26:1295, August 2015.) The example I posed at the start of this post is taken from the introduction in this article. They give a reference to the study, which I did not check.

I think there is some connection between this and the recent post Using a smartphone as your extended brain (November 17, 2015).

Two fine books, listed on my page of Books: Suggestions for general science reading are relevant to the topic...

* Gleiser, The Island of Knowledge -- The limits of science and the search for meaning (2014).

* Kahneman, Thinking, Fast and Slow (2011).

Reducing asthma: Should the child have a pet, perhaps a cow?

November 28, 2015

Asthma is an increasing problem. It involves a respiratory distress, due to an immune reaction. Why it is increasing is unclear, but there is a perception that it has something to do with the greater cleanliness common in modern society. The idea is that, somehow, the exposures to antigens that commonly occur in early life are important for normal development of the immune system. Too much cleanliness, and the immune system doesn't develop "normally". It's an appealing idea, sometimes called the hygiene hypothesis. The problem is that it is rather broad, and we have little idea of the specifics. In fact, data to support the broad hygiene hypothesis as well as specific aspects of it are weak.

A new article offers one of the best tests yet of the hygiene hypothesis. It makes use of national databases in Sweden, which record information about every person -- and every dog. The analysis provides support for the hygiene hypothesis, but also shows the limitations of even a big study.

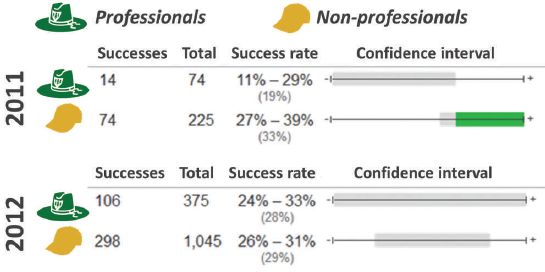

Here is one summary table from the article...

The general plan was to analyze the data for all children in Sweden over a certain time period, using the national databases. Information included whether or not the child had asthma, and whether or not the household had a dog, or had family members who handled farm animals. The scientists could then calculate how exposure to dog or to farm animals affected the odds of getting asthma. More specifically, the analysis relates exposure to the animals in the first year of life to the appearance of asthma at age 6.

There are several rows of data here, but looking at one row in detail will serve our purposes.

Look at the first row, for "All"; it summarizes the results over the entire population studied. The first column of numbers is the odds ratio (OR), with its 95% confidence interval (CI). The first value, for exposure to dog, shows an OR of 0.92. This means that the odds of asthma for children with dogs were 0.92 times the odds for those without dogs; that is roughly an 8% reduction. The CI is entirely below 1, and the p value shown is below the common cutoff of 0.05. Thus the result appears to show a statistically significant difference.

On that same row... the result at the right, for exposure to farm animals, is OR = 0.47, again statistically significant.

This is part of Table 2 from the article. I have truncated the table at the right side; what's missing is the number of cases in each group.

The general conclusion from that first row of data is that exposure to dog in the first year of life leads to a small, but statistically significant, reduction in asthma at age 6. And exposure to farm animals also leads to a reduction in asthma -- a much larger reduction.
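
The arithmetic behind reading an odds ratio can be sketched in a few lines. The two OR values below are the ones quoted from the table. The 2x2 counts in the second part are hypothetical, chosen only to show how an odds ratio and a simple (Wald) 95% confidence interval come out of raw counts. That is a textbook calculation, not the adjusted analysis the authors did, and the function names are my own.

```python
import math

def percent_reduction(odds_ratio):
    """Percent reduction in odds implied by an odds ratio below 1."""
    return (1.0 - odds_ratio) * 100.0

def odds_ratio_with_ci(exposed_cases, exposed_noncases,
                       unexposed_cases, unexposed_noncases, z=1.96):
    """Odds ratio and Wald confidence interval from a 2x2 table of counts."""
    ratio = (exposed_cases * unexposed_noncases) / (exposed_noncases * unexposed_cases)
    # Standard error of log(OR): square root of the summed reciprocal counts.
    se = math.sqrt(1.0 / exposed_cases + 1.0 / exposed_noncases
                   + 1.0 / unexposed_cases + 1.0 / unexposed_noncases)
    low = math.exp(math.log(ratio) - z * se)
    high = math.exp(math.log(ratio) + z * se)
    return ratio, low, high

# Odds ratios quoted in the post (first row of the table).
for label, value in [("dog", 0.92), ("farm animals", 0.47)]:
    print(f"{label}: OR = {value}, about {percent_reduction(value):.0f}% lower odds")

# Hypothetical counts, NOT from the article: 1000 exposed and 1000 unexposed children.
ratio, low, high = odds_ratio_with_ci(46, 954, 50, 950)
significant = high < 1.0 or low > 1.0
print(f"hypothetical table: OR = {ratio:.2f}, 95% CI {low:.2f} to {high:.2f}, "
      f"significant: {significant}")
```

With a small hypothetical sample like this one, an OR near 0.92 comes with a wide interval; it takes a very large study, such as a national database, to pin down an effect that small.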

The other rows of data in the table above broadly agree with the first row. Briefly...

The second row is a re-analysis of the same data, making certain adjustments. The adjusted results are marked with a superscript "a". The results generally agree with the unadjusted analysis, though the result for dog is a little stronger. [What are the adjustments? Other factors that the authors think might be an issue, such as parental age. It is hard to know whether such adjustments are proper -- or complete. Making such adjustments can be a contentious issue.]

The rest of the table subdivides the full set of data into two groups, depending on whether or not a parent had asthma. For each of those groups, there are two rows of results, just as for the complete ("all") set discussed above. Again, the results are similar. There seems to be a somewhat larger protective effect of dog exposure if a parent has asthma.

The authors note that this may be the largest and most systematic analysis of the effect of animals on the development of asthma. It supports the idea that there is a protective effect of exposure to animals. That's fine. The effect of dogs is small, but a 15-20% reduction in asthma in families with a history of asthma is certainly of interest. But what is that effect? What we have here is a general correlation. Does it matter what kind of dog? Long- vs short-haired? Is it the dog's microbiota that matters? Does the type of exposure (intimacy between dog and child) matter? It's even worse with the "farm animal" part of the analysis. The criterion for inclusion was that a family member is involved with handling animals, as recorded in the national database listing occupations. The authors do note that the exposure is largely cattle and sheep. Still, that's a rather broad category.

This article got news attention when it came out. The common headline was that dogs are good for children, in reducing asthma. That's not incorrect, but it certainly is incomplete. It is typical of much of the work on the hygiene hypothesis. It's an interesting idea, and there is support for it. But it remains quite unclear what is going on. And it remains unclear whether you should get your child a cow to sleep with.

News story: Early contact with dogs linked to lower risk of asthma. (Science Daily, November 2, 2015.)

The article: Early Exposure to Dogs and Farm Animals and the Risk of Childhood Asthma. (T Fall et al, JAMA Pediatrics 169:e153219, November 2, 2015.)

More on the hygiene hypothesis:

* Treating asthma with a hookworm protein? (December 2, 2016).

* Are lab mice too clean to be good models for human immunology? (May 21, 2016).

* How intestinal worms benefit the host immune system (February 27, 2016).

* Are girls too clean? (February 26, 2011).

More asthma:

* A vaccine against asthma? (July 3, 2021).

* Is Helicobacter pylori good for you or bad? (April 10, 2012). The "good" is that Helicobacter may help prevent asthma, by its effect on the immune system.

More on children and dogs, perhaps relevant to the current post: Sharing microbes within the family: kids and dogs (May 14, 2013).

More on dogs: Predicting success in training guide dogs -- role of good mothering (November 27, 2017).

Among posts on cows...

* Polled cattle -- by gene editing (July 8, 2016).

* Cows on Mars? (November 7, 2012).

* Did Lucy butcher a cow? (February 11, 2011).

November 23, 2015

Organ transplantation: from pig to human -- a status report

November 23, 2015

One source of organs for transplantation could be non-human animals. Pigs may be the best candidate as organ donor for humans: they are of similar size, and similar physiology, in that they are omnivorous. And we know how to grow them on a large scale.

Musings has noted such work before [link at the end]. We now have a nice update, as a Nature news feature. It discusses the range of difficulties that must be dealt with. Despite some technical progress, the big answer so far is that it doesn't work very well.

There has been some success with use of pig corneas, and with pancreatic islet cells that are encapsulated. These are useful steps, but not true organ transplants.

A recent advance is the improved ability to do gene editing. Tools such as CRISPR allow us to make changes to the pig genome as we wish. Of course, that doesn't tell us what the right changes are, but it does facilitate the work.

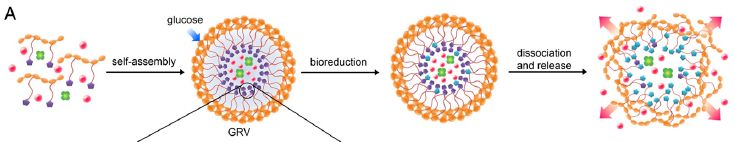

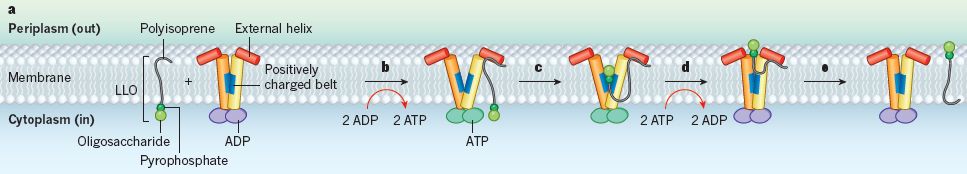

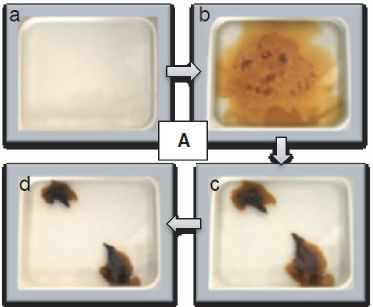

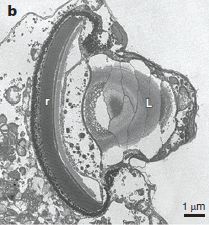

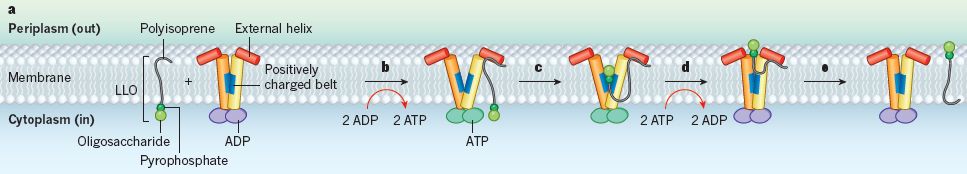

The figure summarizes some of the things being done.

It doesn't mention any of the problems, which are addressed in the article. Take the figure as a map, not a conclusion.

This is the Figure from the article.


Challenges remain; tools develop. Research continues. It will help us understand biology better, and perhaps someday may lead to a good source of organs. The news feature here is a useful overview and update.

News feature, which is freely available: New life for pig organs -- Gene-editing technologies have breathed life into the languishing field of xenotransplantation. (S Reardon, Nature 527:152, November 12, 2015.)

Background post: Pigs as organ donors for humans (February 16, 2010). Also see follow-up post, linked there.

New developments...

* Pig hearts can sustain life in baboons for six months (January 7, 2019).

* Long term survival of a pig heart in a baboon (April 30, 2016).

* How to do 62 things at once -- and take a step towards making a pig that is better suited as an organ donor for humans (January 17, 2016).

Also see: Using human stem cells to make chimeras in pig embryos (February 25, 2017).

More about CRISPR: CRISPR: an overview (February 15, 2015). Includes a complete list of posts on CRISPR.

There is more about replacement body parts on my page Biotechnology in the News (BITN) for Cloning and stem cells. It includes an extensive list of related Musings posts.

More about gene editing is included on my Biotechnology in the News (BITN) page Agricultural biotechnology (GM foods) and Gene therapy. It includes a list of related Musings posts.

Effect of food crops on the environment

November 20, 2015

Growing food affects the environment. It consumes resources, and leaves by-products.

A new article provides an analysis of the impact of selected crops, over a ten-year period.

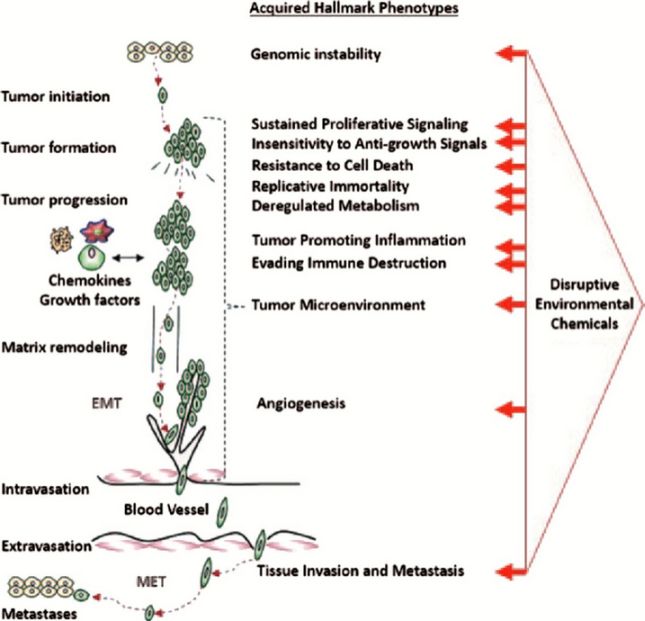

Here is one summary of the findings...

The figure shows the results for four US crops. The general nature of the graphs is the same for each crop.

The graphs show the environmental impact (y-axis) vs year (x-axis). Each graph covers a recent period of about ten years (though the dates are different for the different crops).

The impact is presented two ways. For each crop, the top frame is impact per hectare; the bottom frame is impact per ton. The difference, of course, reflects changes in crop yield over time.

The impact is presented on a "relative" basis, with the value for the first year taken as 1.

Results are shown for several types of environmental impact, using different colored lines. There is a key at the bottom, though it is rather cryptic; don't worry about this for now.

If you quickly scan the set of graphs, you will see that most of the lines are approximately horizontal. That is, whatever those lines show, not much changed over the time shown.

Let's note some of the bigger exceptions... The red lines for corn and cotton slope downward, with only half the impact at the end of the time period. The red lines for soybean slope upward, reaching about four times the original impact by the end.

Red lines? Red lines are for "FET": freshwater ecotoxicity. This largely reflects pesticide runoff into waters.

For the two crops showing reduced FET, the authors think that is due to increased use of GMO crops, properly managed, leading to less use of pesticides, especially the more toxic ones. For the crop showing increased impact, they note that there was a serious pest outbreak that required unusually high pesticide use.

You can easily see the effect of crop yield with the corn data. The lines slope downward a little more when plotted per ton; that is because the yield (tons/hectare) increased.

This is Figure 1 from the article. The full key for identifying the curves, from the figure legend: "ACD = acidification, EUT = eutrophication, SF = smog formation, HHR = human health respiratory, FET = freshwater ecotoxicity, HHC = human health cancer, and HHNC = human health non-cancer."
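The relationship between the two frames (per hectare vs per ton) and the "relative" scaling can be sketched with a few lines of Python. This is only an illustration: the numbers are invented, not taken from the article, and the function names `relative_series` and `impact_per_ton` are hypothetical.

```python
def relative_series(values):
    """Scale a series so that the first year equals 1."""
    base = values[0]
    return [v / base for v in values]


def impact_per_ton(impact_per_hectare, yield_tons_per_hectare):
    """Convert impact per hectare to impact per ton, year by year."""
    return [i / y for i, y in zip(impact_per_hectare, yield_tons_per_hectare)]


# Hypothetical data (NOT from the article): three years of one impact type.
impact_ha = [10.0, 9.0, 8.0]    # impact per hectare, arbitrary units
yield_t_ha = [8.0, 9.0, 10.0]   # crop yield, tons per hectare

rel_per_ha = relative_series(impact_ha)
rel_per_ton = relative_series(impact_per_ton(impact_ha, yield_t_ha))

print("relative impact per hectare:", rel_per_ha)
print("relative impact per ton:    ", rel_per_ton)
```

With a rising yield, the per-ton line ends lower than the per-hectare line, which is the pattern described above for corn.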


I think the most important point is that people are doing such analyses. They are hard to do. The authors note that they have advanced the field by emphasizing current data. They are able to see short term changes, and even explain them.

Whether their analyses and conclusions are correct is something we will learn over time. Presumably, others will do such analyses, and try to develop the methods further. Do people agree on the data? On the proper analysis? On the implications?

Such analyses have the potential to help us set targets for change, and to see how we are doing.

News story: LCA and the Dynamics of Agriculture's Environmental Impacts. (Bren School of Environmental Science & Management, University of California, Santa Barbara, October 8, 2015.) From the university.

The article, which is freely available: Changes in environmental impacts of major crops in the US. (Y Yang & S Suh, Environmental Research Letters 10:094016, September 11, 2015.) The first page is a nice overview. If you find the topic of interest, you may enjoy reading further. It's a nicely organized article, with considerable discussion of what they did, including limitations and caveats.

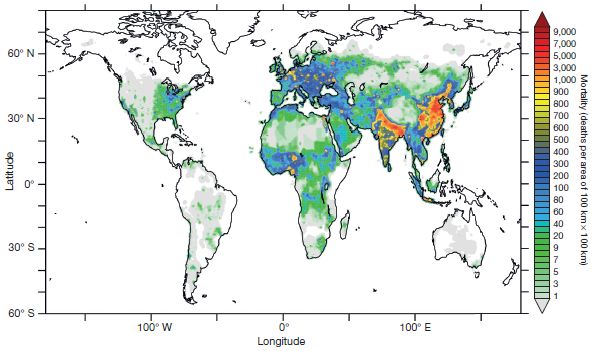

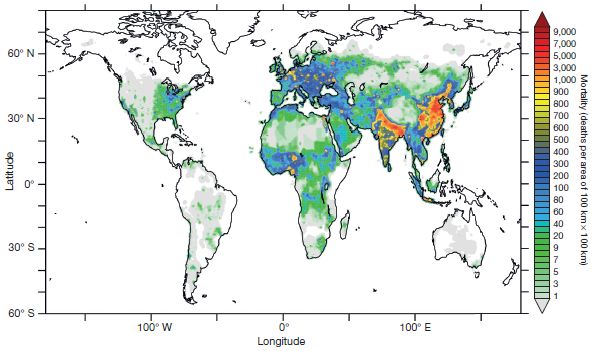

Another big view of pollution: Deaths from air pollution: a global view (October 23, 2015).

The article here is an example of life cycle analysis (LCA). Other LCA posts include...

* CFL and LED lights: energy-efficient, but toxic (March 3, 2013).

* Materials for solar cells (March 10, 2009).

More about pesticides... Silent Spring -- on its 50th anniversary (October 5, 2012).

More about soybean: Improving soybean oil by gene editing (January 8, 2017).

November 18, 2015

Using a smartphone as your extended brain

November 17, 2015

Modern phones give us instant access to all the world's information, it would seem. So we look up things. Things perhaps we should know, perhaps do know. Of course, the phone is a stand-in here for use of the Internet, a specific example of how we access the Internet. The broad question is, how do we use the Internet? Along the way, we might ask... Do people differ in how they use the Internet? If so, why?

A recent article offers some evidence on the matter. The general theme of the article was to explore how people use the Internet, via their smartphone. More specifically, the scientists asked whether they could correlate such usage with other characteristics of the people.

Here is an example of the results from the new article. The table is not easy reading. We'll walk through some parts of it slowly. I'll give an overview of the experiment and the results, but then focus on specific examples from the table. If you find the broad view confusing, the examples may help.

This experiment involved smartphone (SP) users. They reported their level of use, broken down into some categories, listed in the left column. The users were then grouped into low, medium or high, for each type of usage; those with the lowest 1/3 of usage level were considered "low", and so forth.

The people were given two types of tests, labeled "Cognitive Style" (left side) and "Cognitive Ability" (right side). We'll discuss what these mean later.

The table shows the average scores for people in each group. The column headings low-medium-high refer to the level of SP usage. The numbers in the table are the (average) test scores.

The column labeled ANOVA shows the result of a statistical test for that set of test scores. ANOVA values that are statistically significant are marked with one or more asterisks.

Example... Look at the first data set (upper left). It is for "overall" SP usage, and the relationship to the "cognitive style" test. People whose overall SP usage was low scored (on average) 0.44 on the cognitive style test. Those with medium overall usage scored 0.38, and those with high usage scored 0.25. The ANOVA statistic was 3.75, which is marked as significant. That is, the results show a significant correlation between overall SP usage and cognitive style score -- a negative correlation, in that higher phone usage was correlated with a lower test score.

This is the top part of Table 2 from the article. (The other parts provide similar analyses for computer users.)
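For those who like to see the arithmetic, here is a minimal Python sketch of the kind of analysis described above: split people into thirds by usage, average the test scores in each third, and compute a one-way ANOVA F statistic. The data are invented for illustration and are not from the article; the function names are hypothetical, and the authors' actual procedure may differ in detail.

```python
from statistics import mean


def tertile_groups(records):
    """Split (usage, score) records into low/medium/high thirds by usage."""
    ordered = sorted(records, key=lambda r: r[0])
    n = len(ordered)
    cut1, cut2 = n // 3, 2 * n // 3
    return {
        "low": [score for _, score in ordered[:cut1]],
        "medium": [score for _, score in ordered[cut1:cut2]],
        "high": [score for _, score in ordered[cut2:]],
    }


def one_way_anova_f(groups):
    """F statistic: between-group variance divided by within-group variance."""
    all_scores = [s for g in groups for s in g]
    grand_mean = mean(all_scores)
    k, n = len(groups), len(all_scores)
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((s - mean(g)) ** 2 for g in groups for s in g)
    return (ss_between / (k - 1)) / (ss_within / (n - k))


# Hypothetical (usage, test score) pairs, NOT data from the article.
records = [(1, 0.6), (2, 0.5), (3, 0.4),
           (4, 0.5), (5, 0.4), (6, 0.3),
           (7, 0.3), (8, 0.2), (9, 0.1)]

groups = tertile_groups(records)
group_means = {name: mean(scores) for name, scores in groups.items()}
f_stat = one_way_anova_f(list(groups.values()))

print(group_means)
print("F =", f_stat)
```

A larger F means the group averages differ more than the scatter within groups would suggest. Whether a given F is significant depends on the group sizes; that step (comparing F to the F distribution) is not shown here.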


What do we see? A useful place to start is with the asterisks that denote significance. For both tests, the results for overall SP usage and for SP search engine usage are significant. However, the results for SP usage for social media and entertainment are not. That is, whatever it is we are testing here, it relates to search engine use, not the other uses. "Search engine use" might be interpreted as using the SP for "knowledge"; perhaps it is the only one of the uses that we would interpret that way.

So, let's focus on the search engine usage. For both tests, scores decrease as SP search engine usage goes up. What are these two tests?

The cognitive ability test, on the right, is easier to explain. It is a test of one's ability to do certain things, such as some math. It is in the general vein of an IQ test. The trend of cognitive ability score vs SP search engine usage shows a correlation: people who use search engines more have lower test scores. (You just looked something up using your phone? That means you're dumb? No, not at all. We all look things up, but some people are more likely to than others. And so forth. Be cautious about interpreting all this!)

The cognitive style test, on the left, may raise some new issues. Over recent decades, the idea has developed that we have two broad types of thinking. One is generally described as fast and intuitive; the other as slower and analytical. Both of these are normal, and both have advantages. Of course, people vary in their propensity to use one or the other.

The cognitive style test is a measure of how analytical the person is. A higher score means that the person is more toward the side of the spectrum showing slower, more analytical thinking; a lower score means that they are more toward the side showing faster, more intuitive thinking. It would be good to avoid any connotation that one side is better than the other; they are two parts of normal human thinking.

The results for the cognitive style test suggest that those who tend to act fast-and-intuitive are more likely to use their SP search engine.

Taken together, the tests reported here suggest that people vary in how they use the Internet. We look things up, using the Internet as an extended memory. Some people do that more than others; the current work correlates that with certain features. Interesting tests.

The work opens up many questions. For example...

* Are the results reported here reproducible? It is possible that the results of such experiments vary, for various reasons including the nature of the people tested. A single experiment is a step, but not necessarily the final answer.

* What is the cause-effect relationship behind the correlation? Some would suggest that the presence of easy information, on the Internet, is making us less likely to think. There is nothing here that supports such a claim; the authors note that explicitly. It is just as plausible that some people are more inclined to look things up. And maybe, there is more than one reason for what is observed.

* And then there are some "big" questions... Is this good or bad? How should we be using the phone, or the Internet? These may be fun to discuss, but I really would suggest that it is premature to conclude much. It is sad to find that people think having more information available to more people is bad.

News stories:

* iPhone, Therefore I Am. (S Greenfield, Psychology Today, April 2, 2015.)

* Is your smartphone making you stupid? (Kurzweil, March 6, 2015.)

The article: The brain in your pocket: Evidence that Smartphones are used to supplant thinking. (N Barr et al, Computers in Human Behavior 48:473, July 2015.) Check Google Scholar for a copy.

Comment...

The general topic addressed here came up in discussion recently, so a note about the article caught my attention. I'm not sure what to make of all this. But if this post serves to promote some thinking or discussion about the topic, that's good. Just be cautious about jumping to conclusions.

One person responsible for raising these questions in the popular mind is the journalist Nicholas Carr. In 2008, he wrote an article for the Atlantic: Is Google Making Us Stupid? (N Carr, Atlantic, July 2008.) It's available, go have a look. The title is catchy, and gets attention. And the article raises questions. But it is important to distinguish between raising questions and pretending that we have answers.

Carr later expanded the article into a book. The article usefully serves to raise the questions. The book is a long-winded elaboration; I spent much of the time with the book wondering what the point was. Some of the history is fun to read, but not important. Carr is not good at evaluating evidence, and his elaboration is unconvincing to those who think critically about evidence.

Some are using Carr's book to claim that the Internet is making us stupid. That's not justified. I wouldn't conclude much of anything from what Carr wrote, other than that there are questions. We have new tools. More people have more access to information than ever before. Why do some want to paint this as bad?

There is also a Wikipedia article on the topic; its title is the same as that of Carr's article. It tries to provide some balance of views, but is rather messy. If you browse it, you will get a sense of the debate. Don't try to make much more out of it.