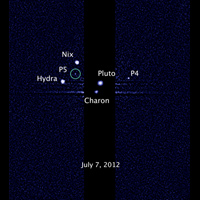

Musings: May-August 2012 (archive)

Musings is an informal newsletter mainly highlighting recent science. It is intended as both fun and instructive. Items are posted a few times each week. See the Introduction, listed below, for more information.

If you got here from a search engine... Do a simple text search of this page to find your topic. Searches for a single word (or root) are most likely to work.

Introduction (separate page).

This page:

2012 (May-August)

August 29

August 22

August 15

August 8

August 1

July 25

July 18

July 11

July 3

June 27

June 20

June 13

June 6

May 30

May 23

May 16

May 9

May 2

Also see the complete listing of Musings pages, immediately below.

All pages:

Most recent posts

2026

2025

2024

2023:

January-April

May-December

2022:

January-April

May-August

September-December

2021:

January-April

May-August

September-December

2020:

January-April

May-August

September-December

2019:

January-April

May-August

September-December

2018:

January-April

May-August

September-December

2017:

January-April

May-August

September-December

2016:

January-April

May-August

September-December

2015:

January-April

May-August

September-December

2014:

January-April

May-August

September-December

2013:

January-April

May-August

September-December

2012:

January-April

May-August: this page, see detail above

September-December

2011:

January-April

May-August

September-December

2010:

January-June

July-December

2009

2008

Archive items may be edited, to condense them a bit or to update links. Some links may require a subscription for full access, but I try to provide at least one useful open source for most items.

Please let me know of any broken links you find -- on my Musings pages or any of my web pages. Personal reports are often the first way I find out about such a problem.

August 29, 2012

An unusual scientific paper

August 29, 2012

At left is a handwritten note from the first page of the article. (It was originally an oral presentation, but later appeared in print.)

Just go look... It is freely available at article.

This was posted in the chemed discussion group. The poster's source: source.

More poetry... The Mudville story, on its 125th anniversary (June 3, 2013).

Deceiving a rival male

August 28, 2012

That is a male mourning cuttlefish (Sepia plangon).

On the left side he has male coloration (the "stripes"); on the right side, he has female coloration.

The cuttlefish is not a fish. It is a cephalopod -- a group that includes the octopus and squid. Cephalopods are known to be able to change their coloration, typically for camouflage.

The figure here is trimmed from the full size figure in the Science Now news story listed below. That figure is probably the same as Figure 1 of the article.

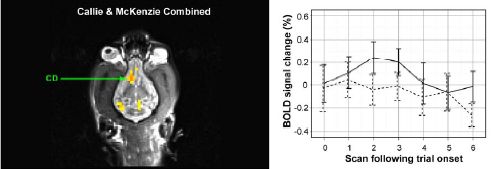

Such males, with mixed-sex coloration, are found only in a specific social context. The mixed-sex male is between a female and another male. That is based on extensive observations of the cuttlefish in a natural setting, according to a new paper. The findings are summarized in the following graph.

The graph is somewhat confusing. The key is to start with the x-axis. It shows several social groupings, with M and F having the usual meanings. For example, the right-most group, MMMF, means three males in a row, with a female to one side.

Two measurements are plotted for each social grouping. One is shown with bars, the other with points and a line. (The line doesn't really mean anything; this is just a bar graph, with two values being plotted for categories shown along the x-axis. It makes no sense to connect the dots between two categories.)

Let's start with the gray bars. Make that, the gray bar. It shows the percentage of animals with the deceptive coloration. Read this bar on the right-hand scale; it is about 40%. For what group? Look at the x-axis: it is MMF -- two males plus a female to one side. Importantly, there are no other gray bars. That is, they observe this coloration pattern only for the one grouping, MMF. (It is not clear exactly what the 40% number refers to. Is this the percentage of males with this mixed coloration, or the percentage of groups with a mixed color male? Fortunately, it doesn't matter much, to get the main idea of the paper.)

The graph line, read on the left-hand scale, shows the percentage of "groups" of each type. (For their purposes here, a "group" contains one or more animals.) You can see that only 10% of the groups they saw are of the MMF type, yet this accounts for all the deceptive displays.

Using the labels on the x-axis and reading the left-hand scale, you can see that about 20% of the groups were just one male (M), 10% were two males (MM), and so forth. But, again, the deceptive coloration was observed only in the MMF groups.

This is Figure 2 from the article.

Why is this happening? The authors suggest that the male is courting the female (showing her his male side), and deceiving a possible rival male on the other side (showing him his female side).

Interestingly, they also suggest that the male cuttlefish is controlling the coloration as a matter of intelligence -- evaluating the situation, and modifying its coloration only when it seems useful. They don't really present much evidence for that interpretation, but they do point out that cephalopods are known for their intelligence. I suggest we keep an open mind on this interpretation. For now, simply establishing the phenomenon is a step forward -- and an interesting finding. Their interpretation may guide further work, but should not be taken as a conclusion.

News story: Two-Faced Fish Tricks Competitors. (Science Now, July 3, 2012.)

The article: It pays to cheat: tactical deception in a cephalopod social signalling system. (C Brown et al, Biology Letters 8:729, October 23, 2012.)

More about cephalopods...

* Aging without memory loss in cuttlefish (October 4, 2021).

* Sleep stages in octopuses -- do they dream? (July 13, 2021). Includes an extensive list of posts about octopuses and other cephalopods.

* Chromatic aberration: is it how cephalopods see color with only one kind of photoreceptor? (October 14, 2016).

* Cuttlefish vs shark: the role of bioelectric crypsis (May 10, 2016).

* How an octopus adapts to the cold -- by RNA editing (March 5, 2012).

* Quiz: What is it? (November 20, 2012). See the answer.

More mollusks... Is clam cancer contagious? (April 21, 2015).

Another camouflage story: The story of the peppered moth (July 9, 2012).

Another animal with a 2-sex appearance -- for a completely different reason: On his right side, he is female (April 24, 2010).

More on deception:

* A "flower" that bites -- and eats -- its pollinator (December 27, 2013).

* A deceptive robot (September 4, 2012).

More about animals that change color... Why chameleons change color (and get thin) (March 31, 2014).

Next post on intelligence: The smartest chimpanzee? (September 29, 2012).

What does "Anopheles" mean?

August 27, 2012

A bit of etymology -- and history.

The article -- just a paragraph or so, freely available: Etymologia: Anopheles. (Emerging Infectious Diseases 18:1511, September 2012.)

For a picture, see the related post: Genes that protect against malaria (January 19, 2010).

More Anopheles... Can Wolbachia reduce transmission of mosquito-borne diseases? 2. Malaria (June 17, 2016).

More on mosquitoes. Mosquitoes are delectable things to eat (August 21, 2010). This post addresses the issue of the good vs bad of mosquitoes, a topic perhaps prompted by the content of this new item.

* Previous history post... Frank Oppenheimer, on his 100th birthday: the Exploratorium (August 14, 2012).

* Next... Silent Spring -- on its 50th anniversary (October 5, 2012). Not unrelated!

My page Internet resources: Miscellaneous contains a section on Science: history. It includes a list of related Musings posts.

Genome sequencing of a human fetus

August 25, 2012

People have been examining the human fetus for many years. In the amniocentesis procedure, a sample of fluid is obtained from within the uterus. This is an invasive procedure, and carries risks of its own. Ultrasound is an example of a non-invasive procedure, but it provides limited information.

The discovery that there is fetal DNA in the mother's blood opens up new possibilities. Obtaining the mother's blood is non-invasive to the fetus, and a routine minor invasion for the mother. Making use of this fetal DNA has moved forward with the development of DNA testing in general. Tests looking for specific genetic abnormalities have been developed and approved. Now, we have the first report of a "complete" genome sequence of a fetus, based on analysis of the blood from the mother.

There is a problem with this approach. The fetal DNA is typically about 10% of the DNA in the mother's blood; that is, the sample being sequenced is mostly (about 90%) maternal DNA. Thus the problem is sorting out what the "raw" sequencing results mean. Of course, the scientists can sequence the mother's genome -- and the father's too. They then collect lots (!) of sequencing data. Computer analysis sorts out which results are for the fetus.

There are a couple of issues in analyzing for the fetal genome. For the most part, the fetal genome follows Mendel's laws. At each spot on the genome, the fetus should have the sequence from the mother or the sequence from the father. This part of the analysis is logically straightforward, if both parental sequences are known. It's just a big computational problem.

In addition, the fetus may carry new mutations -- sequences not in either parent. Although such novel sequences are relatively infrequent, they may be of particular interest. What does the child carry that is unexpected? It's also difficult, because the frequency of mutations is not very different from the error frequency for sequencing.
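The bookkeeping described in the last two paragraphs can be sketched for a single position in the genome. This is a toy illustration, not the authors' method: the function name and the simple `min_reads` cutoff are assumptions made here, whereas the real analysis weighed the read counts statistically across the whole genome.

```python
def classify_plasma_alleles(mother, father, read_counts, min_reads=2):
    """Label each base seen in maternal-plasma reads at one genomic position.

    mother, father: the two bases each parent carries here, e.g. "TT".
    read_counts: dict mapping each observed base to its number of reads.
    min_reads: hypothetical cutoff; a base absent from both parents and seen
        fewer times than this is treated as a likely sequencing error.
    """
    parental_bases = set(mother) | set(father)
    labels = {}
    for base, count in read_counts.items():
        if base in parental_bases:
            # Mendelian case: the fetus got this base from the mother or the father
            labels[base] = "inherited"
        elif count >= min_reads:
            # Not in either parent and seen repeatedly: possible new mutation
            labels[base] = "candidate new mutation"
        else:
            # Too rare to tell apart from sequencing noise
            labels[base] = "possible sequencing error"
    return labels


print(classify_plasma_alleles("TT", "TT", {"T": 90, "C": 3}))
# {'T': 'inherited', 'C': 'candidate new mutation'}
```

As the text notes, new mutations are about as frequent as sequencing errors, so any real analysis needs something more careful than a single read-count cutoff.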

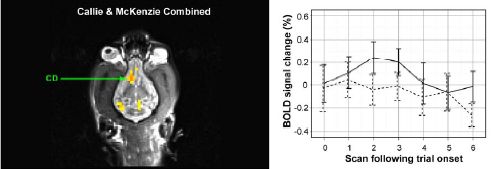

Here is an example of how one might see new mutations -- individual base sequences not carried by either parent. The figure at the right shows the sequencing results for a short segment from the mother, father, and offspring (fetus -- but the data shown are for mother's blood).

Look first at the result for the mother (at left, in the figure). The colored peaks represent raw sequencing data for a particular five-base segment of the genome, with a different color for each base. The result is then shown as base sequence directly underneath. The results for the father (middle) are the same. That is, both parents carry only this one DNA sequence at this site.

We would expect the child (fetus) to show this same result. However, that is not quite so. Look carefully at the result for the DNA from the blood of the pregnant mother (at the right in the figure, labeled "offspring"). The peak for the middle position is more complex: it has two colors. It's not important that you can see all the detail, but the computer records show that out of 93 sequencing "reads" for this position, 90 were T and 3 were C. (In the base sequence, this is shown as Y, the code for "T or C".) What does that mean? Remember, the DNA sampled here is about 90% maternal. In this case, they found that it is 87% maternal and 13% fetal. Of the 93 reads, we might expect 12 to be from the fetus -- six from each of the two fetal copies of the gene. Finding three C reads at this position suggests that some of the fetal DNA has picked up a new mutation. After the child was born, they verified that the child indeed carries this mutation. (From knowing where this site is, they know the mutation would lead to a particular leucine amino acid in a protein being changed to a proline. They are concerned this mutation might be detrimental.)
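The read-count arithmetic in this paragraph can be written out in a few lines. A minimal sketch, assuming (as the text does) that reads are sampled in proportion to each genome's share of the DNA in the mother's blood; the function name is hypothetical.

```python
def expected_read_split(total_reads, fetal_fraction):
    """Split a position's read depth into expected maternal and fetal parts.

    Assumes reads are drawn in proportion to each genome's share of the
    DNA in the sample, and that the fetus carries two copies of the position.
    """
    fetal_reads = total_reads * fetal_fraction
    maternal_reads = total_reads - fetal_reads
    per_fetal_copy = fetal_reads / 2
    return maternal_reads, fetal_reads, per_fetal_copy


maternal, fetal, per_copy = expected_read_split(93, 0.13)
print(f"maternal ~{maternal:.0f}, fetal ~{fetal:.0f}, per fetal copy ~{per_copy:.0f}")
print(f"observed C fraction: {3 / 93:.1%}")
# maternal ~81, fetal ~12, per fetal copy ~6
# observed C fraction: 3.2%
```

Small counts like these fluctuate a good deal from sample to sample, so the sketch gives expectations, not a prediction for any one position.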

The figure here is part of Figure 1 D from the article.

Well, it took several paragraphs there to explain what is going on for a five-base sequence. Hopefully, it gives the idea. In this case, detailed analysis of the DNA from the mother's blood reveals that the fetus carries a novel mutation. Stepping back to the big picture, they have achieved the first sequencing of a child's genome prior to birth, using a non-invasive method. They are at the forefront of using the new DNA technologies, including the computer processing that is so critical to all sequencing. The authors do not suggest that this is very practical at this point, but it certainly opens the door.

News story: Baby's Genome Deciphered Prenatally from Parents' Lab Tests. (Science Daily, June 6, 2012.)

The article: Noninvasive Whole-Genome Sequencing of a Human Fetus. (J O Kitzman et al, Science Translational Medicine 4:137ra76, June 6, 2012.)

This work was made possible by recent developments in DNA sequencing, leading to major cost reductions. A recent post on this topic was: DNA sequencing: an overview of the new technologies (June 22, 2012).

A post on the ethics of learning about the fetus: Let parents decide (May 14, 2010).

Several posts on personalized, genome-based medicine are listed at: Personalized medicine: Getting your genes checked (October 27, 2009).

More about fetal DNA: Male DNA found in human female brains (October 8, 2012).

More about DNA in the blood: A blood test that detects multiple types of cancer (March 30, 2018).

More human genome sequencing: Accumulation of mutations in the sperm of older fathers (November 19, 2012).

There is more about genomes on my page Biotechnology in the News (BITN) - DNA and the genome.

Thanks to Borislav for raising the topic of fetal DNA sequencing.

Fertilizing the ocean may lead to reducing atmospheric CO2

August 24, 2012

The southern oceans are deficient in iron. As a result, few algae -- the major photosynthetic organisms of the oceans -- grow there. What if we added iron to these oceans? We might predict that it would stimulate the growth of algae. Further, if the algae settled to the ocean floor (rather than being quickly recycled), this would result in carbon from the air being deposited at the ocean bottom. This could be a form of carbon sequestration, reducing the CO2 in the air. Some have suggested that this might be worth doing, as a way to combat global warming; it is a type of geoengineering.

Does it work? In fact, some small studies have suggested that adding iron to iron-deficient oceans does stimulate algal growth. A new paper confirms this, and also suggests that the algae settle.

Here is an example of their results, showing the first point.

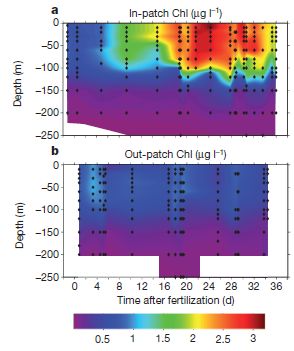

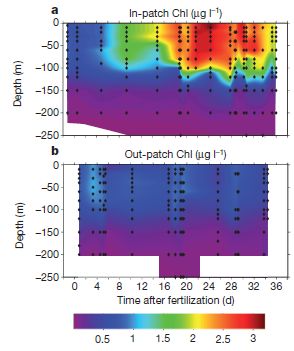

The scientists measure chlorophyll to represent the amount of biomass. The amount of chlorophyll is shown by color, according to the key at the bottom. Each graph shows the chlorophyll as a function of depth (y-axis; meters) and time after the fertilization with iron (x-axis; days). Part a (upper) is for the area within their study patch ("in-patch"); part b (lower) is for the area outside the study patch ("out-patch").

It may be simplest to start with part b. This is the "control" -- the area not fertilized. All the colors shown here are for low levels of chlorophyll, and there is little change over time.

Now look at part a, for the fertilized patch. You can see that the graph has many brighter areas, indicating higher concentrations of chlorophyll. This shows that the iron fertilization stimulated the growth of biomass. If you look more closely, you will see that the chlorophyll began to rise noticeably a few days after fertilization, continued to rise until about day 24, and began to decline near the end of the study time. You will also see that significant increases in chlorophyll were found even at 100 meters depth.

The figure here is Figure 2 parts a and b from the article.

The graph above shows that iron stimulates algal growth. Per se, that is not enough to show that such fertilization would reduce atmospheric CO2. What happens to the algal biomass? The decline in chlorophyll (or biomass) shown above toward the end could be due to the algae settling -- or due to them being eaten. Distinguishing those requires a complex analysis of a huge data set of many parameters. They conclude that over half of the algal biomass sinks.

Thus this work supports the idea that stimulating algal growth by fertilizing iron-deficient oceans with iron could serve to reduce CO2 in the air. More specifically, it provides some evidence for the two steps that are needed: stimulation of algal growth, and settling of the algae. I should caution -- even emphasize -- that most scientists do not feel that enough is known about the system to employ it as a real geoengineering application to combat global warming. The information available is just too limited at this point. The general consensus is that more experimental work should be done, asking more questions about the system -- including carefully looking for unintended side effects. For now, this is interesting science, but should not be considered a practical tool.

News story: Sinking Carbon: Researchers Publish Results of an Iron Fertilization Experiment. (Science Daily, July 18, 2012.)

* News story accompanying the article: Biogeochemistry: The great iron dump. (K O Buesseler, Nature 487:305, July 19, 2012.)

* The article: Deep carbon export from a Southern Ocean iron-fertilized diatom bloom. (V Smetacek et al, Nature 487:313, July 19, 2012.)

A broad overview of geoengineering: Geoengineering: a sunscreen for the earth? (February 20, 2010).

More... Climate engineering: How should we proceed? (March 4, 2015).

Another post dealing with the iron limitation in the southern ocean: The effect of defecation by whales on global warming (August 2, 2010).

and maybe... Why are some icebergs green? (May 11, 2019).

More about CO2: Making use of CO2 (November 10, 2015).

More about iron as a limiting nutrient: The iron war (May 17, 2015).

More about our oceans: 2011: There was less water in the oceans (November 25, 2012).

Posts about diatoms include: Communication in diatoms (February 6, 2022).

More about measuring biomass: The ultimate census: the distribution of life on Earth (June 22, 2018).

August 22, 2012

NASA: It's InSight, not TiME

August 22, 2012

NASA has just announced its choice for the next "low-cost" planetary mission of the Discovery series. It's a mission to explore the core of Mars; the mission is called Interior exploration using Seismic Investigations, Geodesy and Heat Transport -- or InSight.

Among the losers was the proposed mission to visit the lakes of Titan, the mission called Titan Mare Explorer -- or TiME. We note the NASA choice here because the TiME mission was the subject of a Musings quiz (link below).

News story on the NASA announcement: NASA Unveils New Mars Mission to Probe Red Planet's Core. (Space.com, August 20, 2012.)

The Musings quiz about TiME: Quiz: NASA's boat (June 29, 2011).

More about Mars:

* Cows on Mars? (November 7, 2012).

* Mars: craters (August 11, 2012).

TALISE: A better boat for Titan? (October 16, 2012). A new chapter begins?

What about the H5N1 flu?

August 21, 2012

The H5N1 bird flu has been in the news a lot recently, with the controversial publication of two papers on its potential to jump to humans. As this incident winds down, Nature has published a nice short overview of where we are with H5N1 flu. It's worth a browse.

News feature, which is freely available: 5 Questions on H5N1. (E Yong, Nature 486:456, June 28, 2012.)

Posts on flu and flu vaccines are listed on the page Musings: Influenza (Swine flu).

NASA: Life with arsenic -- Follow-up #2

August 20, 2012

The article that was the basis of the original post has been retracted. See the Retraction note at the top of the original post for more information. Added August 28, 2025.

Original post: NASA: Life with arsenic (December 7, 2010). And... NASA: Life with arsenic -- follow-up (June 7, 2011).

The original paper made two main claims. First, they claimed they had a bacterial strain that would grow with arsenic (As) instead of phosphorus (P). Second, they claimed that these bacteria contained biochemicals with arsenic replacing phosphorus. (Those two claims complement each other. The first says that the organism seems to grow with As; the second says that indeed they find As in it, in specific biochemicals they might expect.) The paper was featured at a NASA press conference, which made the extreme claim that they had found (or would make) a new form of life. The basis of that claim was that P is one of the essential elements for all life as we know it; finding or making a life form that did not use P would indeed be novel. That press conference gained extra attention -- and scrutiny -- for the paper.

Among reasons to be suspicious of the original work... First, the growth media they used contained low (residual) levels of P. Second, what is known about the chemistry of As makes it unlikely that As could replace P in biochemistry. (Arsenate analogs of many phosphate compounds tend to be quite unstable.) The paper noted these concerns, and did not make the extreme claims that came from the press conference.

We now have two new papers following up on the original work. The general conclusion from the two papers is that the bacteria cannot grow without P, and that there is little As replacing P in the biochemicals of the cell. They did this by doing experiments similar to those of the original paper, but taking care to lower the residual P level even further. In addition, they extended the analytical measurements, and took extra care in purifying materials before analyzing them.

Thus at the moment there seems to be no basis for the claim that the bacteria can grow without P. Even when fed a very high ratio of As to P, there is little As in the cell biochemicals. Is this the last word? The original authors say they will report new results soon. Let's see what they say.

Despite the negative tone of the new work, the new bacterial strain may be of some interest. It is extremely resistant to As. It grows even when As is present at a level a thousand times higher than the P. Ignoring As at such a high level is itself a trick that may be worthy of further study. Further, there may be interest in studying the low level of As incorporation that is seen.

News story: Scientists say NASA's 'new arsenic form of life' was untrue. (Phys.org, July 9, 2012.)

The articles:

* GFAJ-1 Is an Arsenate-Resistant, Phosphate-Dependent Organism. (T J Erb et al, Science 337:467, July 27, 2012.)

* Absence of Detectable Arsenate in DNA from Arsenate-Grown GFAJ-1 Cells. (M L Reaves et al, Science 337:470, July 27, 2012.)

Earth: craters

August 19, 2012

Some readers may recognize this crater. Some may have been on its floor.

The crater is about 1.2 kilometers across.

That is Barringer Crater, often called Meteor Crater. It is in the US state of Arizona, about 100 miles (160 km) north of Phoenix. This crater was made by a meteor about 50 meters across, impacting with an energy of about 10 megatons of TNT. The impact happened about 50,000 years ago -- well within the human era on Earth -- though there is no evidence for humans on this continent that early.
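The "10 megatons" figure can be checked roughly from kinetic energy, E = ½mv². The post gives only the impactor's size. The density (iron, about 7,800 kg/m³, since the meteorite fragments found at Barringer are iron) and the impact speed (about 12.8 km/s) are assumptions supplied here, and the impactor is treated as a sphere.

```python
import math

MEGATON_TNT_J = 4.184e15  # energy of one megaton of TNT, in joules


def impact_energy_megatons(diameter_m, density_kg_m3, speed_m_s):
    """Kinetic energy (1/2 m v^2) of a spherical impactor, in megatons of TNT."""
    radius = diameter_m / 2
    mass = density_kg_m3 * (4 / 3) * math.pi * radius ** 3
    energy_j = 0.5 * mass * speed_m_s ** 2
    return energy_j / MEGATON_TNT_J


# Assumed inputs: 50 m iron body (7,800 kg/m^3) arriving at 12.8 km/s
print(f"{impact_energy_megatons(50, 7800, 12_800):.1f} Mt")
```

With those inputs the result comes out at roughly 10 megatons. Because energy scales with the cube of the diameter and the square of the speed, modest changes in the assumptions move the answer a lot.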

Last week I posted about a database of craters on Mars -- a database with a few hundred thousand entries (a link is below). Now we have an Earth crater -- from a database of Earth craters. Geologists have been working on this Earth Impact Database since 1955. It has 182 entries.

The young Earth should have been bombarded about as hard as Mars and the Moon. Why are there so few craters found on Earth? The major reason is that the Earth is so geologically active. Plate tectonics has erased most of our early history. The news story with the Mars crater post discusses this; that news story is just as relevant here.

The database: Earth Impact Database. I suggest you browse the home page and then look at the FAQ -- listed in the main text at the right. (The menu at the left is for the institute, not for this database.) Or explore other options, as you wish. For example, you might sort the list by crater size or age, to put the Barringer crater in perspective. The database is maintained by the Planetary and Space Science Centre, University of New Brunswick, Canada.

The photo above is reduced from figure #3 on their page for Barringer crater: Earth Impact Database: Barringer.

Mars database: Mars: craters (August 11, 2012).

A post on collisions of asteroids with Earth: Gravity tractor: protection from asteroid collisions (October 26, 2009).

For more about meteorites: An extraterrestrial god (October 9, 2012).

A new approach for testing a Llullaillaco mummy for lung infection

August 17, 2012

"The Maiden", as she is known. Died, age 15 -- sacrificed to Pachamama, the Inca earth goddess, about 500 years ago. She was discovered, as an extraordinarily well-preserved mummy, in 1999 near the 6,739 meter (about 22,000 feet) summit of Llullaillaco, in Argentina.

This is Figure 1A from the article. Mummies of two other children were also discovered there. One of them was analyzed by the same methods discussed here, and was negative on all tests.

Previous radiological examination of the Maiden had revealed various lesions, including in the lungs. Visual inspection revealed nasal mucus under the nostrils.

The key new step in this work was to apply a method that is just emerging: total analysis of all the proteins, or "shotgun proteomics". This is done by mass spectrometry; as with so many modern methods, it provides a "computer full" of data that is beyond ordinary comprehension. In this case, analysis of the proteins from the Maiden's mouth showed proteins of the immune system, which would be expected for someone with an active infection. Some of these proteins were characteristic of mycobacterial infections. Mycobacteria include the species that causes tuberculosis (TB), Mycobacterium tuberculosis.

With evidence of lung damage and evidence of infection, perhaps mycobacterial, the authors did an additional test. They looked at the DNA in the same samples from the Maiden. Preliminary DNA analysis seems to show DNA from mycobacteria, but is insufficient to point to a specific member of the group. All the evidence together suggests that the Maiden had an active mycobacterial infection.

An important point is the value of having the different kinds of evidence. DNA evidence is "in vogue", but simply finding DNA from an organism would be insufficient to indicate active disease. Many people carry the TB bacterium, for example, without active disease. The key contribution here is the protein work: analyzing the proteins from the mouth shows that there is an immune reaction to a pathogen. They then couple the protein findings with the radiological examination of the lung and the DNA evidence to suggest a diagnosis.

News stories:

* 'Maiden' Inca Mummy Suffered Lung Infection Before Sacrifice. (Live Science, July 25, 2012.)

* Disease Diagnosed in a 500-Year-Old Mummy. (New York Times, July 30, 2012. Now archived.)

The article, which is freely available: Detecting the Immune System Response of a 500 Year-Old Inca Mummy. (A Corthals et al, PLoS ONE 7:e41244, July 25, 2012.)

More about analyzing ancient diseases...

* A look at Chopin's heart (January 9, 2018). Not so ancient, but still...

* Musici Ambulanti: Ancient art and ancient microbiology (January 17, 2012).

* Diagnosis of prostate cancer in a 2100 year old man (November 8, 2011).

More mummies: How to make a mummy (May 7, 2023).

Analyzing ancient proteins: Dinosaur proteins (July 6, 2009). Caution... They refer to this work as if it were well accepted. I'm not at all convinced it is. The uncertainty about the analysis of dinosaur proteins in no way diminishes the new work.

More about mycobacteria:

* How did tuberculosis get to the Americas? (January 24, 2015).

* Leprosy: the armadillo connection (May 14, 2011).

More lungs... A better way to collect a sample of whale blow (November 28, 2017).

More mucus: Effectiveness of alcohol-based hand sanitizers? (September 28, 2019).

More from the Incas: Skull surgery: Inca-style (August 21, 2018).

Thanks to Borislav for suggesting this item.

August 15, 2012

Frank Oppenheimer, on his 100th birthday: the Exploratorium

August 14, 2012

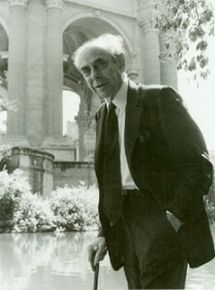

Frank Oppenheimer, founder and long-time director of the Exploratorium, San Francisco.

Born August 14, 1912; died February 3, 1985.

Shown here in front of the Palace of Fine Arts, the original home of the Exploratorium.

This figure is from The Aesthetic of Frank Oppenheimer. (Exploratorium. Now archived.)

For a sense of Frank Oppenheimer, and his view of science education: The Exploratorium: A Playful Museum Combines Perception and Art in Science Education. (F Oppenheimer, American Journal of Physics 40:978, July 1972.)

The paper is also freely available from the Exploratorium, at Exploratorium copy; now archived. It is available there both as a web page and as a pdf. This page and the page listed above as the figure source are part of a "history" section at the Exploratorium web site.

In addition to his role in developing the world's greatest science museum, Frank Oppenheimer was a physicist, a member of the Manhattan project, a victim of McCarthyism, a rancher, a high school teacher -- and the younger brother of perhaps a more famous Oppenheimer.

* Previous history post... Salvador Luria, on his 100th birthday: the Luria Delbrück experiment (August 13, 2012).

* Next: What does "Anopheles" mean? (August 27, 2012).

Another birthday: Happy birthday, Phil Trans (March 25, 2015).

My page Internet resources: Miscellaneous contains a section on Science: history. It includes a list of related Musings posts.

Salvador Luria, on his 100th birthday: the Luria Delbrück experiment

August 13, 2012

Sal Luria, Nobel prize-winning molecular biologist.

Born August 13, 1912; died February 6, 1991.

This figure is from Wikipedia: Salvador Luria.

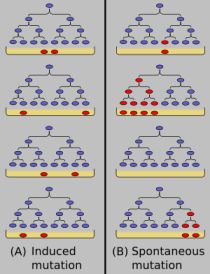

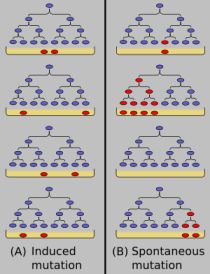

Luria was not just a molecular biologist; he was one of the founders of the field. He is particularly famous for an experiment reported in 1943, an experiment that has its own Wikipedia page. The experiment addressed a very fundamental question in genetics: does a selective pressure for some trait cause the trait to happen, or does it select for rare variants (mutants) that already have the trait? To make that more concrete... Imagine that bacteria are exposed to a drug (e.g., an antibiotic). Most die. A few survive; they are found to be resistant to the drug. The question is: Did the drug cause the resistant variants to occur, or were there some rare resistant variants already present, and they were allowed to dominate when the drug was added?

The Luria-Delbrück experiment, published by Luria with another distinguished scientist (and co-Nobelist) Max Delbrück, addressed this with an elegant experiment. The general idea of the experiment can be described simply, though full evaluation of the real experiment uses mathematical models for the two possibilities being distinguished, and statistical analysis of the experimental results.

Here is the main idea... Imagine that you do the experiment described above -- on challenging bacteria with a drug -- many times, and measure how many drug-resistant mutants you get each time. If the drug is causing (inducing) the mutations, you may expect about the same number each time. On the other hand, if the drug is merely selecting for rare variants that are already there, the number you get may fluctuate wildly -- depending on how many drug-resistant variants happen to be present. (The Luria-Delbrück experiment is sometimes also called the fluctuation test.)

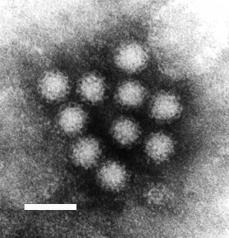

Here is a cartoon of the Luria-Delbrück experiment; it is from Wikipedia: Luria-Delbrück experiment. The general plan is that small samples of bacteria are grown, and then put on petri dishes ("plates") that include a virus. (The virus -- or bacteriophage -- here plays the role of a drug; it is exactly the same idea.) Only bacteria resistant to the virus can grow on the plates. The resistant bacteria are shown in red.

The figure describes the two models and the expected results. On the left is the model that the virus (drug) causes the mutations to occur. In this case, there are no virus-resistant bacteria present during the growth phase, and about the same number occur on each plate when the virus challenge happens. (In the cartoon here, two red colonies appear in each of the four frames.) On the right is the model that resistant bacteria arise -- randomly -- during the growth phase. Then, upon challenge with the virus on the plates, the number of resistant colonies varies widely. (In the cartoon here, the number of red colonies is 1, 4, 0, and 2 in the four frames. And the number of red colonies equals the number of red cells already present before the virus challenge.)
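The two models can be mimicked with a small simulation. This is a rough sketch, not the analysis in the paper: the number of generations, the mutation probability, and the number of cultures are all hypothetical, chosen so that both models give about the same average number of resistant bacteria (about two, as in the cartoon). In the "induced" model each plated cell independently becomes resistant, so the counts follow a Poisson distribution, whose variance equals its mean. In the "pre-existing" model a mutation can happen at any division during growth, and the resistant cell keeps doubling, so an early mutation produces a large "jackpot".

```python
import math
import random
import statistics

GENERATIONS = 20   # hypothetical: grow one cell for 20 doublings (about a million cells)
MU = 1e-7          # hypothetical chance that a new daughter cell is resistant
N_CULTURES = 500   # hypothetical number of parallel cultures


def poisson(rng: random.Random, lam: float) -> int:
    """Poisson random count via Knuth's multiplication method (fine for small lam)."""
    limit = math.exp(-lam)
    k, p = 0, 1.0
    while True:
        p *= rng.random()
        if p <= limit:
            return k
        k += 1


def induced_culture(rng: random.Random) -> int:
    """Model 1: resistance is induced on the plate, independently in each cell,
    so the count is Poisson. Its mean is set to approximately match model 2."""
    return poisson(rng, GENERATIONS * 2 ** GENERATIONS * MU)


def preexisting_culture(rng: random.Random) -> int:
    """Model 2: mutations occur at random during growth, before any virus is present.
    Resistant cells keep doubling, so an early mutation gives a 'jackpot'."""
    sensitive, resistant = 1, 0
    for _ in range(GENERATIONS):
        daughters = 2 * sensitive
        new_mutants = min(poisson(rng, daughters * MU), daughters)
        resistant = 2 * resistant + new_mutants   # existing mutants double, new ones join
        sensitive = daughters - new_mutants
    return resistant


def variance_to_mean(counts: list) -> float:
    return statistics.variance(counts) / statistics.mean(counts)


rng = random.Random(1943)  # fixed seed so the run is repeatable
induced = [induced_culture(rng) for _ in range(N_CULTURES)]
preexisting = [preexisting_culture(rng) for _ in range(N_CULTURES)]
print(f"induced model:      variance/mean = {variance_to_mean(induced):.1f}")
print(f"pre-existing model: variance/mean = {variance_to_mean(preexisting):.1f}")
```

Under these assumptions the variance-to-mean ratio should come out near 1 for the induced model and well above 1 for the pre-existing model; the exact values depend on the random seed.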

As an example... In one experiment they did 12 samples. The numbers of resistant colonies were: 1, 0, 0, 7, 0, 303, 0, 0, 3, 48, 1, 4. I think it is "obvious" that the results vary widely. That wild fluctuation supports the second model: the variants (mutants) already existed, in varying numbers; the virus challenge simply allowed them to dominate, when all the original sensitive bacteria were killed off. Although I have presented a small set of data here for inspection, their full analysis involved many such experiments, and a statistical analysis of the data. (This is experiment 17 from Table 2 of the paper.)
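A quick calculation on those 12 numbers makes "vary widely" concrete. If the virus induced resistance, the counts should follow roughly a Poisson distribution, for which the variance is about equal to the mean. Here the sample variance is roughly 250 times the mean. The sketch also applies the so-called p0 method, which goes back to Luria and Delbrück: if mutations are rare random events, the fraction of cultures with no resistant colonies is e^(-m), where m is the average number of mutation events per culture. A single set of 12 cultures gives only a rough estimate, of course.

```python
import math
import statistics

# Resistant colonies in 12 parallel cultures (experiment 17, Table 2, as quoted above).
counts = [1, 0, 0, 7, 0, 303, 0, 0, 3, 48, 1, 4]

mean = statistics.mean(counts)
variance = statistics.variance(counts)   # sample variance (n - 1 denominator)
ratio = variance / mean                  # about 1 if the counts were Poisson (induced model)

# p0 method: the fraction of cultures with zero resistant colonies is exp(-m),
# where m is the mean number of mutation events per culture.
p0 = counts.count(0) / len(counts)
m = -math.log(p0)

print(f"mean = {mean:.1f}, variance = {variance:.0f}, variance/mean = {ratio:.0f}")
print(f"p0 = {p0:.2f}, estimated mutation events per culture m = {m:.2f}")
```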

The Luria-Delbrück experiment established that mutations occur in the absence of the selective pressure. Selection acts on pre-existing mutants; it does not create them. This is a basic tenet of modern biology, and is generally well understood within the framework of DNA and how it replicates. But this experiment was done before we knew that DNA was the genetic material -- a discovery published the following year.

The original Luria-Delbrück article: Mutations of bacteria from virus sensitivity to virus resistance. (S E Luria & M Delbrück, Genetics 28:491, November 20, 1943.) The paper is freely available through PubMed Central: copy at PMC.

Nobel site: The Nobel Prize in Physiology or Medicine 1969 was awarded jointly to Max Delbrück, Alfred D. Hershey and Salvador E. Luria for their discoveries concerning the replication mechanism and the genetic structure of viruses.

Another Musings post on molecular biology history... The original Watson-Crick paper on the structure of DNA (October 25, 2010).

More about phage: A virus that could treat acne? (October 21, 2012)

* Previous history post...

On a new method of treating compound fracture... (July 11, 2012).

* Next: Frank Oppenheimer, on his 100th birthday: the Exploratorium (August 14, 2012).

My page Internet resources: Miscellaneous contains a section on Science: history. It includes a list of related Musings posts.

Mars: craters

August 11, 2012

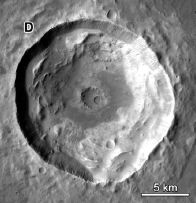

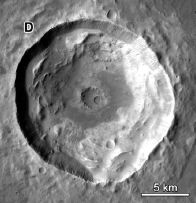

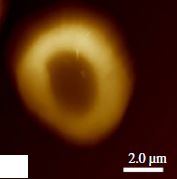

At the right is a crater on the surface of Mars.

This is part of Figure 5 from paper #1.

The scale bar is 5 kilometers.

Here are 384,342 more of them: Martian craters. (From the authors of the papers listed below.)

That's really the point here. A group of scientists have pored through vast amounts of data, from numerous missions, and come up with a catalog of known craters on Mars. (The number listed depends on the specific criteria used, such as size.) Two of their papers are listed below. The first is basically an announcement of the catalog. The second offers some exploration of the craters' properties. Simply browsing the papers and looking at figure legends will lead to admiration of what they have accomplished -- even if there are no clear take-home lessons at this point.

News story: Impact atlas catalogs over 635,000 Martian craters. (American Geophysical Union, June 11, 2012.) Good overview of the background and possible uses, with some broader perspective.

The articles:

* A new global database of Mars impact craters ≥1 km: 1. Database creation, properties, and parameters. (S J Robbins & B M Hynek, Journal of Geophysical Research 117:E05004, May 15, 2012.)

* A new global database of Mars impact craters ≥1 km: 2. Global crater properties and regional variations of the simple-to-complex transition diameter. (S J Robbins & B M Hynek, Journal of Geophysical Research 117:E06001, June 5, 2012.)

Another recently noted catalog... Habitable Exoplanets Catalog (July 27, 2012).

And... Earth: craters (August 19, 2012).

And... 124,993 and counting: cataloguing plant species in the Americas (February 26, 2018).

More about Mars...

* What causes gullies on Mars? (September 8, 2014).

* NASA has announced its choice... NASA: It's InSight, not TiME (August 22, 2012).

* Water at the Martian surface? (August 27, 2011). Includes links to more.

More craters...

* MESSENGER orbits Mercury, shoots Debussy (June 10, 2011).

* Lutetia: a primordial planetesimal? (February 13, 2012).

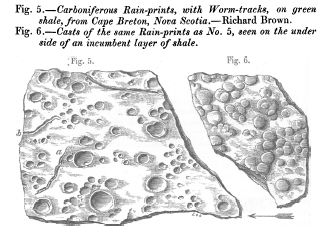

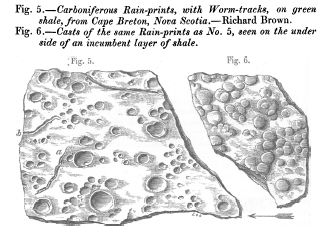

* Fossil raindrops and the density of the ancient atmosphere (May 6, 2012).

Light-dark (day-night) cycles affect pregnancy

August 10, 2012

Under natural conditions, we are exposed to alternating blocks of light and dark, approximately 12 hours each. Body rhythms are coordinated with that. If the light-dark signals are altered, the body typically continues to behave as if the old signals were still there. That is, the body has an endogenous cycling system, which is coordinated with the external light-dark cycle. This is called the circadian rhythm. (circa dia: about day.) Some aspects of the circadian rhythm are understood, in part by the study of mutations that disrupt the natural cycles.

Some external events disrupt our light-dark cycles. Changes in day length during the year occur slowly, and are generally compensated for. However, quick changes in the light-dark cycle, as occur during fast long-distance travel, cause physiological disturbances for a few days ("jet lag"), until the body's endogenous rhythm adjusts to being coordinated to the new cycle. Further, people whose daily cycle is very different from the common cycle may have problems. So-called shift workers, working at night and sleeping in the day, are an example; changing between schedules is an extra stress.

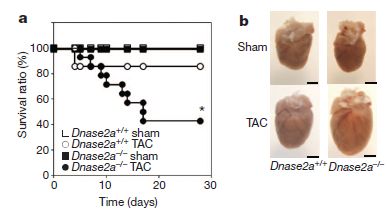

A new study looks at the effects of "jet lag" on pregnancy in mice. The experiment is simple, with one well-controlled variable. They took a group of pregnant mice, and housed them during pregnancy under three different conditions. One set had regular 12 hour light-dark cycles; this is the "control" set. For the other two sets of mice, the light cycles were shifted every four days, with the lights coming on either six hours later or six hours earlier. The results were dramatic. For the pregnant mice on the regular cycles, 90% gave normal birth. For the two groups with altered lighting cycles, only 50% or 22% (respectively) gave normal birth.
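To make the lighting protocol concrete, here is a small sketch of the three schedules. The starting time (lights on at hour 6) and the assumption that the first shift comes at day 4 are hypothetical; only the 6-hour shifts and the 4-day interval come from the description above.

```python
CONTROL, DELAY, ADVANCE = 0, +6, -6   # hours the lights-on time moves at each shift


def lights_on_hour(day: int, shift_hours: int, days_per_shift: int = 4, base_hour: int = 6) -> int:
    """Clock hour (0-23) when the 12-hour light period starts on a given day.
    base_hour (lights on at hour 6 before any shift) is a hypothetical choice."""
    shifts_so_far = day // days_per_shift
    return (base_hour + shifts_so_far * shift_hours) % 24


for name, shift in [("control", CONTROL), ("delay", DELAY), ("advance", ADVANCE)]:
    schedule = [lights_on_hour(day, shift) for day in range(0, 17, 4)]
    print(f"{name:8s} lights on at hours {schedule}")
```

Python's `%` operator always returns a value between 0 and 23 here, even for the negative (advancing) shifts, so the clock wraps around midnight correctly.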

Readers who are not mice may be wondering how this applies to them. What makes this a good experiment is that it was a well-controlled experiment: well-defined conditions, with a clear experimental variable. But it is with mice. If you are intrigued, read the opening section of the paper, which brings together a range of observations with various mammals, including humans, suggesting that disruption of the natural circadian rhythm affects pregnancies. As they note, the problem with many of the observations, especially with humans, is that they are unclear. The current work with mice is clear, and the broader context suggests it might be relevant.

News story: Women trying to have babies face different clock problem. (Medical Xpress, May 23, 2012.)

The article, which is freely available: Environmental Perturbation of the Circadian Clock Disrupts Pregnancy in the Mouse. (K C Summa et al, PLoS ONE 7:e37668, May 23, 2012.)

More on body rhythms:

* Does it matter what time of day you get a vaccine? (October 26, 2012).

* What's a dia? Bumblebees and reindeer don't agree. (December 6, 2010).

* Sleepy teenagers (July 23, 2010).

In plants... Can plants calculate how long their food supply will last? (August 9, 2013).

More about pregnancy... Pregnancy in males: It's similar to pregnancy in females (February 22, 2016).

August 8, 2012

The genome of Musa acuminata

August 8, 2012

I don't really have much to say about this. I just wanted to re-use the picture shown above.

Seriously, we are in an era of an explosion of genome information, as we have noted regularly. The information reported here will be useful to those working on bananas, and to those studying evolution of plants. However, there are no immediate exciting messages for most of us from the work.

News story: Full genome reveals banana crop secrets. (Futurity, July 13, 2012. Now archived.)

The article, which is freely available: The banana (Musa acuminata) genome and the evolution of monocotyledonous plants. (A D'Hont et al, Nature 488:213, August 9, 2012.)

Previous post featuring the same figure...

Measuring radiation: The banana standard (April 17, 2011).

Previous genomics post...

In humans, rare mutations are common (July 24, 2012).

There is more about genomes and sequencing on my page Biotechnology in the News (BITN) - DNA and the genome. It includes an extensive list of Musings posts on the topics.

Do animal bones have something like annual growth rings?

August 7, 2012

The most familiar annual growth rings are probably those in trees. Perhaps you have seen them. A recent post on carbon (C-14) dating talked about tree rings, since comparing C-14 dating against tree ring counts is a good way to calibrate the C-14 dating method. (Link at end.) The growth rings reflect seasonal variation in growth rates, due to favorable vs unfavorable conditions, such as temperature. Careful analysis of the rings even provides information about the climate!

What about animals -- animals that grow over many years, and thus encounter the same range of conditions as the trees? Do their bones show annual growth rings?

It's not that obvious what one should predict. After all, animals are not trees. But so-called cold-blooded animals share one feature with the trees: their body temperature is largely determined by the external environment. So we might predict that cold-blooded animals, such as reptiles, might show annual growth rings in their bones.

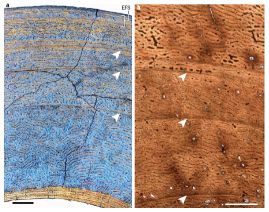

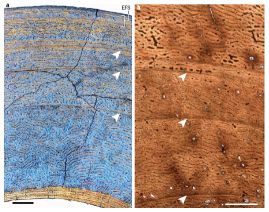

Here are a couple of pictures of animal bones.

Just look at part a (left side of the figure) for now. The arrows point to annual growth rings in these animal bones.

This is Figure 1 of the Nature news article (Padian). The scale bar for the left side (black bar, lower left) is 0.5 millimeter. The scale bar for the right side (white bar, lower right) is 1.0 millimeter.

What kind of animal is this bone from? A deer -- a mammal, warm-blooded. In fact, a new paper has analyzed the bones of a wide range of ruminant mammals (cows, deer, and such -- animals that digest tough, fibrous plant material in a rumen). The key finding is that they all contain such growth rings in the bones. It isn't the first finding of growth rings in warm-blooded animals, but it is the first thorough systematic study.

What about that other picture above -- part b, on the right? It also has growth rings, again marked with arrows. It's from a dinosaur fossil.

That brings us to the context of the new work. There is an ongoing debate about whether dinosaurs were cold-blooded or warm-blooded. The old idea is that they were cold-blooded, as typical of reptiles. Newer evidence has been mixed, but there is a trend toward the view that the dinosaurs were warm-blooded. However, one piece of evidence that seemed to favor the conclusion that dinosaurs were cold-blooded was the presence of growth rings in the fossilized dinosaur bones. The new work shows that this is not a valid argument. Growth rings are found in warm-blooded animals, too -- in all of the wide range of ruminants in this careful study. Caution... The new work does not show that dinosaurs were warm-blooded; it merely shows that a particular argument suggesting they were cold-blooded is invalid. The presence of growth rings per se is not an argument one way or the other.

That warm-blooded animals show growth rings in their bones is interesting. Sometimes we just naively talk about warm-bloodedness as if it meant complete and perfect control of body temperature, oblivious to the outside environment. But it is not. Warm-blooded animals sense the outside environment, and respond to it. Making heat is expensive. It is reasonable that a warm-blooded animal allocates resources differently depending on the outside temperature. Thus this work is a reminder that warm-bloodedness is not a simple phenomenon.

News stories:

* Dinos Not Necessarily Cold-Blooded. (The Scientist, June 27, 2012.) The featured picture is quite interesting.

* How Sweet! Dinosaurs May Have Been Warm-Blooded After All. (Live Science, June 27, 2012.)

* News story accompanying the article: Evolutionary physiology: A bone for all seasons. (K Padian, Nature 487:310, July 19, 2012.) This is an excellent overview of the new work and its implications. (It is by UC Berkeley biologist Kevin Padian.)

* The article: Seasonal bone growth and physiology in endotherms shed light on dinosaur physiology. (M Köhler et al, Nature 487:358, July 19, 2012.) The article is difficult reading!

More about growth zones:

* Briefly noted... Dinosaur extinction: What time of year was it? (May 10, 2022).

* Tree rings, carbon-14, cosmic rays, and a red crucifix (July 16, 2012).

* Barium, breast milk, and a Neandertal (June 17, 2013).

More about bones:

* Human gracility (June 26, 2015).

* A new, simple way to measure bone loss? (September 14, 2012).

More about dinosaurs:

* T rex census (May 25, 2021).

* A tiny titan (May 9, 2016).

* Were dinosaurs cold-blooded or warm-blooded? (August 23, 2014).

* The oldest dinosaur embryos, with evidence for rapid growth (May 7, 2013).

* The Obama lizard (March 20, 2013).

* A dinosaur in color (April 5, 2010).

More about maintenance of body temperature (warm-bloodedness): Mammoth hemoglobin (February 1, 2011).

Also see... Global warming trend? Independent evidence (March 22, 2013).

For a video of a talk by Kevin Padian (who wrote the Nature news story) on dinosaur growth and vertebrate flight... Lecture videos: Berkeley City College (July 14, 2013).

Thanks to Borislav for suggesting this item.

Getting along: animals and bacteria

August 6, 2012

We may naively tend to think about individual organisms in isolation. Yet that is not how nature works. Nature involves communities of organisms, with complex interactions. In some cases, the associations are intimate and essential. As examples in humans...

* Our mitochondria, which are derived from bacteria, are essential components of our energy metabolism -- and are involved in disease processes.

* Our gut bacteria are essential to our well-being, in an emerging area we understand poorly.

A recent feature article in Microbe, the news magazine of the American Society for Microbiology, provides a nice, gentle, readable overview of animal-bacteria relationships. Recommended!

The article, which is freely available: "Can't Live without You:" Essential Animal-Bacterial Relationships. (A E Douglas, Microbe 7:273, June 2012.)

Also see:

* Heart damage: role of mitochondrial DNA (June 1, 2012).

* Sushi, seaweed, and the bacteria in the gut of the Japanese (April 20, 2010).

There are similar associations beyond those involving bacteria and animals. Examples...

* A photosynthetic salamander? (August 24, 2010). This one involves a eukaryotic alga with an animal. This post links to other possibilities.

* A new organelle "in progress"? (September 13, 2010). This one involves a bacterium with a fern (a simple plant).

* Plants need bacteria, too (October 9, 2010).

And perhaps more speculatively... Bacteria induce simple "pre-animal" to become colonial (September 8, 2012).

Titan: tides, and the possibility of a sub-surface water ocean

August 4, 2012

As the Moon goes around the Earth, its gravitational pull on a particular place varies. We see this as the tides: water levels rise and fall due to the varying effect of the Moon. Of course, the effect works both ways. The Earth's gravitational pull on a particular place on the Moon also varies, though we usually pay no attention to this.

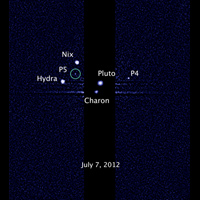

Similarly, the planet Saturn and its moon Titan exert varying gravitational pulls on each other. Now, NASA scientists report that measurements made by the Cassini spacecraft in orbit around Saturn allow them to begin to describe the tides on Titan. Further, they use their estimates of the tides on Titan to make inferences about the structure of Titan. Interestingly, they suggest that the tides are best explained if Titan contains a sub-surface ocean -- a water ocean.

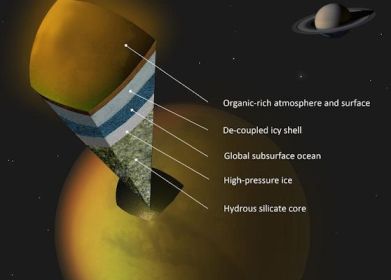

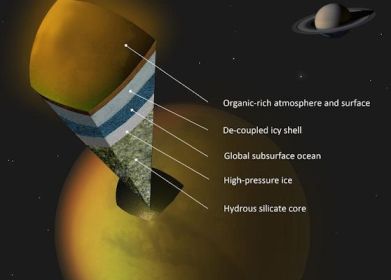

The figure shows a model of what they think Titan might look like. Note the darker blue layer, labeled "Global subsurface ocean".

It's important to distinguish what they actually measured, and what is model or hypothesis. They measured the tides -- the changes in shape of Titan as it orbits Saturn. This is a remarkable technical achievement. They measured tides of 10 meters (30 feet) -- ten times more than expected if Titan were solid rock. Thus it seems that Titan is "squishy" -- more deformable than they expected. They interpret this as indicating that Titan contains some highly deformable innards. And they suggest a sub-surface water ocean as that deformable layer. That is their working hypothesis for now. But it would be improper to say that they have shown there is such an ocean.

The figure is reduced from one in the NASA story listed below.

This work represents a small step toward understanding the structure of another solar system body. As the news stories below note, it has implications for understanding the Titan atmosphere, which is rich in methane, a chemical that is unstable there (it is broken down by sunlight).

News story: Titan's Underground Ocean. (NASA, June 28, 2012.)

* News story from the journal in advance of the article: Planetary science: Cassini Spies an Ocean Inside Saturn's Icy, Gassy Moon Titan. (R A Kerr, Science 336:1629, June 29, 2012.)

* The article: The Tides of Titan. (L Iess et al, Science 337:457, July 27, 2012.) The article itself is mostly a technical analysis of the results. The final part includes a brief discussion of some alternative interpretations.

More about Titan...

* Briefly noted... A river map for Titan (February 9, 2022).

* TALISE: A better boat for Titan? (October 16, 2012).

* Weather forecast: Clouds will form near North Pole within two years (April 9, 2012).

The following post notes that the tidal interactions between Jupiter and its moon Europa may be the moon's primary heat source. Steppenwolf: Life on a planet that does not have a sun? (July 2, 2011).

More from Cassini: Venus: an unusual view (March 18, 2013).

More about tides... Does the moon affect earthquakes? (October 21, 2016).

More about oceans: 2011: There was less water in the oceans (November 25, 2012).

Another titan... A tiny titan (May 9, 2016).

Scorpion venom: a source of a novel antibiotic?

August 3, 2012

Antibiotics are our miracle drugs that protect us against bacteria. However, the bacteria "fight back": antibiotic resistance is undermining our use of antibiotics. We need new antibiotics.

Biologists at Wuhan University in China have taken a new approach to combat the problem.

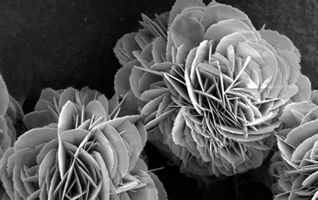

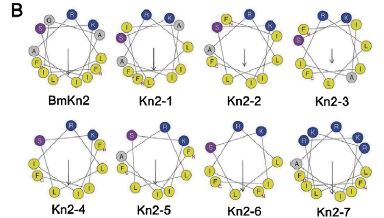

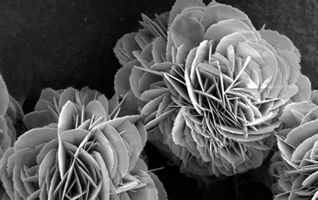

Their approach is shown at the right.

The figure is trimmed and reduced from the feature figure of the Wired news story.

A scorpion sting could help cure an infection? Sort of. Among the things a scorpion injects with its sting are antibiotics. (Some speculate that the purpose of the antibiotics is to protect the animal's dinner from decay before it gets to eating it.) In the new work, the scientists have taken a scorpion venom antibiotic -- and then improved it. There is some "theory" behind this, but let's start by looking at the result.

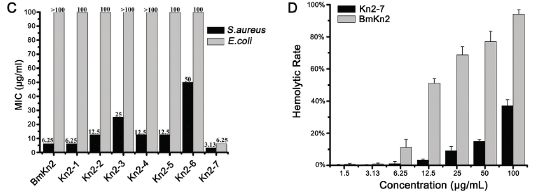

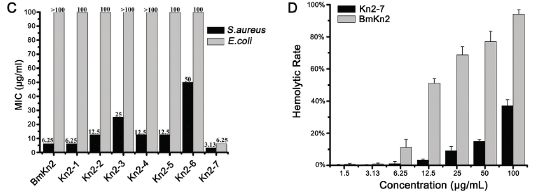

The left frame (part C) shows the effectiveness of several antibiotics against two test bacteria (dark and light bars). The y-axis is the concentration of antibiotic needed to prevent the bacteria from growing; lower is better. (MIC = minimal inhibitory concentration.) The left hand pair of bars is for the original antibiotic from the scorpion (called BmKn2); the right hand pair of bars is for the improved antibiotic (called Kn2-7). (There are also results for some others they tried; we'll ignore those.)

Compare the bars for the two kinds of bacteria for the original and improved antibiotic... Both bars are lower for the improved Kn2-7; one of them is much lower (for E. coli).

So far, so good. But there is often a problem with this type of antibiotic: it tends to kill our cells, too. A practical test of this is to see if it lyses red blood cells, a test called hemolysis. The right hand frame (part D) compares the original and improved antibiotic in a hemolysis test. The y-axis shows the rate of hemolysis; smaller is better. The x-axis is the level of the antibiotic. The light and dark bars are for the two antibiotics. For each, hemolysis increases with higher antibiotic levels; this would be expected. However, at any level, the improved antibiotic (dark bars; Kn2-7) shows less hemolysis than the original antibiotic. Thus the improved antibiotic, which is more effective against two kinds of bacteria (frame C), is also less toxic (frame D). (You can't tell from the graph whether it is good enough.)

The figure here is part of Figure 1 from the article.
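The two measurements in Figure 1 can be illustrated with a small sketch. A common way to find an MIC is to test a twofold dilution series and record the lowest concentration at which no growth is seen. A common way to express hemolysis is as a percentage between a buffer-only control (0%) and a fully lysed control (100%), based on the hemoglobin released. The function names and all the numbers below are hypothetical, and the article's exact procedures may differ. The MIC function also ignores practical complications, such as wells that show growth out of order.

```python
from typing import List, Optional


def twofold_series(top: float, steps: int) -> List[float]:
    """Concentrations in a twofold dilution series, highest first."""
    return [top / 2 ** i for i in range(steps)]


def mic(concentrations: List[float], grew: List[bool]) -> Optional[float]:
    """Minimal inhibitory concentration: the lowest tested concentration with no growth."""
    no_growth = [c for c, g in zip(concentrations, grew) if not g]
    return min(no_growth) if no_growth else None


def percent_hemolysis(a_sample: float, a_negative: float, a_positive: float) -> float:
    """Hemolysis from absorbance of released hemoglobin, scaled between a
    buffer-only control (0%) and a fully lysed control (100%)."""
    return 100.0 * (a_sample - a_negative) / (a_positive - a_negative)


# Hypothetical readings, not data from the article.
conc = twofold_series(64.0, 7)   # e.g. micrograms per mL
grew = [False, False, False, True, True, True, True]
print("MIC:", mic(conc, grew))
print("hemolysis (%):", round(percent_hemolysis(0.30, 0.05, 1.05), 1))
```

Lower is better for both numbers: a lower MIC means less drug is needed to stop the bacteria, and lower percent hemolysis means less damage to red blood cells.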

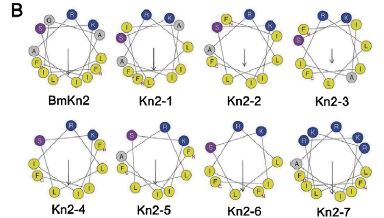

Do we understand why their new antibiotic is better? Not completely, but there are some ideas that guided them in making Kn2-7. Here is an example.

The figure here shows one view of the various antibiotics discussed here. (This shows the complete set, as in frame C above; again, we'll discuss only two of them.) These antibiotics are small proteins (peptides), and they have a tendency to fold up into a helical (spiral) chain. The view here is looking at the helix end-wise. About all you see is which amino acids are sticking out in which direction. (You can try to follow the line showing their order, but it really doesn't matter here.)

One feature thought to be good for this type of antibiotic is to have the basic amino acids (those with a positive charge) all on one side. In this figure, those amino acids are coded as dark blue dots. You can see here that the original antibiotic (BmKn2, upper left) has two of those near the "top"; the improved antibiotic (Kn2-7, lower right) has five of them. It's also good to have a separation between those basic amino acids (blue dots) and the hydrophobic amino acids, which tend to avoid water (shown as yellow dots). Again, you can see that Kn2-7 looks better by this criterion, too.

This figure is also part of Figure 1 from the article.
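The helical-wheel idea can be sketched numerically. In an ideal alpha helix there are about 3.6 amino acids per turn, so each residue sits about 100° around the helix from the previous one. The sketch below places each residue on such a wheel and scores how tightly the basic residues (here lysine, K, and arginine, R) cluster on one face: 1.0 means all on one side, and values near 0 mean spread around the helix. The two peptides are made-up toy sequences, not BmKn2 or Kn2-7, and this simple score is only an illustration, not the analysis used in the article.

```python
import math

BASIC = set("KR")          # lysine and arginine; histidine left out (mostly uncharged at pH 7)
DEGREES_PER_RESIDUE = 100  # ideal alpha helix: about 3.6 residues per turn


def wheel_angle(index: int) -> int:
    """Angle (degrees) of residue number `index` on an idealized helical wheel."""
    return (index * DEGREES_PER_RESIDUE) % 360


def basic_clustering(sequence: str) -> float:
    """Mean resultant length of the basic residues' wheel angles:
    1.0 = all on one face of the helix, near 0 = spread evenly around it."""
    angles = [math.radians(wheel_angle(i)) for i, aa in enumerate(sequence) if aa in BASIC]
    if not angles:
        return 0.0
    x = sum(math.cos(a) for a in angles)
    y = sum(math.sin(a) for a in angles)
    return math.hypot(x, y) / len(angles)


# Hypothetical toy peptides, each with four lysines (K) and eight leucines (L).
one_face = "KLLLKLLKLLLK"   # lysines at positions 0, 4, 7, 11
scattered = "KKKKLLLLLLLL"  # lysines at positions 0, 1, 2, 3

for name, seq in [("one_face", one_face), ("scattered", scattered)]:
    print(name, round(basic_clustering(seq), 2))
```

Note that the two toy peptides have exactly the same composition; only the order differs. That is the point of the wheel view: where the charged residues end up on the helix depends on their spacing in the sequence.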

Thus they have made an improved antibiotic, starting with one found in scorpion venom. It is improved by two tests (top figure, above). They also have some understanding of why it is improved (second figure). None of that ensures that the new drug is actually useful; there are many drug candidates that pass early tests and fail later ones. In this case, they do a test with mice, and show that the new drug is effective for treating skin infections. This is encouraging. They propose that further work should be done with the new drug.

News stories:

* Scorpion Venom Heals Drug-Resistant Bacteria Infection. (Wired, July 11, 2012.)

* Study finds scorpion venom able to heal bacterial infections in mice. (Phys.Org, July 13, 2012.)

The article, which is freely available: Antibacterial Activity and Mechanism of a Scorpion Venom Peptide Derivative In Vitro and In Vivo. (L Cao et al, PLoS ONE 7:e40135, July 5, 2012.)

More about antibiotics and antibiotic resistance...

* Restricting excessive use of antibiotics on the farm -- follow-up #2 (April 16, 2012)

* Antibiotics and obesity: Is there a causal connection? (October 15, 2012).

Posts about other venoms include... Snake venom gland organoids (March 17, 2020). Links to more, including a book.

More on antibiotics is on my page Biotechnology in the News (BITN) -- Other topics under Antibiotics.

August 1, 2012

TIGER discovers smallest known fly; does it live in the head of tiny ants?

July 31, 2012

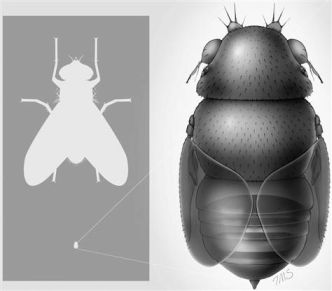

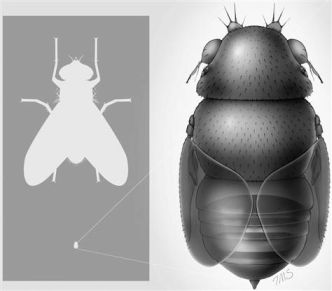

A scientist at the Los Angeles County Natural History Museum reports the smallest known fly.

The right hand part of the figure here shows a drawing of the fly. But its key feature is its size. The left hand frame shows an ordinary house fly at the top and this new fly at the bottom -- to scale. The new fly is about 1/15 the size of the house fly -- about 0.4 millimeters long.

The report goes on to talk about the lifestyle of the fly.

The figure at the right is reduced from one in the MSNBC news story listed below. It is probably the same as Figure 2 of the article.

This is an interesting story, but one that also illustrates the importance of being careful to distinguish what was actually found or done from what is suspected or hypothesized.

The real facts here are minimal. A single adult fly was discovered -- during a training course for TIGER, the Thailand Inventory Group for Entomological Research. The article listed below describes the fly. Since it seems distinct from all previously known flies, the author assigns it to a new species, which he calls Euryplatea nanaknihali. He discusses some of the features of this fly, and speculates on more.

The fly is of a type known to parasitize ants. Does this one parasitize ants? That's an interesting question. The author notes that there are some tiny ants, which were thought to be too small for flies to parasitize. But this new fly could reasonably do so. This reasoning leads to the part of the title of the article about small size not being sufficient to protect the ants (from parasitism by flies). The news stories pick up on this. Importantly, there is no information about whether this new fly parasitizes ants. There is only one specimen of the fly, found as an adult. It's the larval stage that parasitizes ants; no one has ever seen a larval stage for this new fly. How it develops is completely unknown. All the discussion of what it might do is interesting. Such ideas, even speculations, can guide further work. However, it is beyond what is now known. The title of the article emphasizes the speculation, and the titles of the news stories state things that are not known. (Remember that headlines are often written by someone other than the author. Headlines are to get attention. Always be careful about accepting them as factual. That includes titles of posts in Musings.)

News stories. Beware the hyped titles (as noted above), but otherwise these are both good overviews of the work and its implications.

* World's tiniest fly decapitates ants -- then lives in their heads. (J Welsh, MSNBC, July 2, 2012.)

* Tiny fly decapitates insect after growing inside of them. (Bunsen Burner, July 3, 2012.) Now archived.

The article, which is freely available: Small Size No Protection for Acrobat Ants: World's Smallest Fly Is a Parasitic Phorid (Diptera: Phoridae). (B V Brown, Annals of the Entomological Society of America 105:550, July 2012.) The article contains several drawings, but only one photo -- and that is of surprisingly poor quality.

An earlier post about a phorid fly -- also a parasite: A parasitic fly that causes hive abandonment in bees: Is this relevant to CCD? (January 27, 2012).

Another parasite of ants... Death-grip scars from zombie ants, 48 million years ago (November 9, 2010).

More about being small... What to do if your brain won't fit in your head (February 18, 2012).

More flies... A superhydrophobic fly -- that can survive in highly alkaline water (February 25, 2018).

And more...

* Ants: nurses, foragers, and cleaners (May 24, 2013).

Thanks to both Thien and Borislav for alerting me to this item, and sending the news stories listed above.

Metallic hydrogen? -- follow-up

July 30, 2012

Original post: Metallic hydrogen? (March 16, 2012). The post reported recent work which claimed to make metallic hydrogen. As we noted there, such claims are not new, and are likely to be controversial. Indeed, this claim has proved controversial. Nature has a recent "news feature" on the article and the controversy surrounding it. I should stress that there is no further solid information, thus there is no confirmation or disproof. However, those who find the story of metallic hydrogen intriguing may find this news feature worth a look. As with so much of what Musings presents, this is science in progress.

News story, which is freely available: Metallic hydrogen: Hard pressed -- Two physicists say they have forced hydrogen to become an exotic metal thought to exist only in the hearts of giant planets. Now they must face their critics. (Nature 486:174, June 14, 2012.)

Silk: Stabilizing vaccines and drugs

July 29, 2012

Many biological materials need to be stored cold. This adds cost. Further, in some cases, simply maintaining long term cold storage is difficult. Delivering vaccines to remote areas would be an example. A new article offers a simple solution: store the materials adsorbed onto silk.

Here is an example of the results. This is for measles vaccine, prepared in three different ways and tested for stability at two temperatures. The y-axis shows the "residual potency" (i.e., the stability); the x-axis is storage time.

First, compare the general pattern for the two temperatures: 25° C (upper) and 37° C (lower). All three preparations are fairly stable at 25° C, but distinct differences are clear at 37° C.

The three preparations? Squares are for the normal vaccine preparation, circles and diamonds are for two variations of vaccine combined with silk. It's clear that the normal prep is much less stable at 37° than either of the silk-treated preps. (Perhaps silk also helps at 25°, though the effect is small.) The "circles" treatment is for vaccine simply adsorbed onto silk fibers. The "diamonds" treatment includes a lyophilization (freeze-drying) step, which seems beneficial.

The figure here is part of Figure 2 from the article.
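One simple way to summarize a stability curve like this is as a half-life. The sketch below assumes that activity is lost by simple first-order (exponential) decay, which may not describe these vaccines well, and the potency values in the example are hypothetical, not read from the figure.

```python
import math


def first_order_half_life(fraction_remaining: float, elapsed: float) -> float:
    """Half-life implied by one residual-potency measurement, assuming
    first-order loss of activity: fraction_remaining = exp(-k * elapsed)."""
    if not 0.0 < fraction_remaining < 1.0:
        raise ValueError("fraction_remaining must be strictly between 0 and 1")
    k = -math.log(fraction_remaining) / elapsed   # decay constant per unit time
    return math.log(2) / k


# Hypothetical example: two preparations held at the same temperature for 4 weeks.
for label, remaining in [("keeps 80% of potency", 0.80), ("keeps 20% of potency", 0.20)]:
    print(f"{label}: half-life about {first_order_half_life(remaining, 4.0):.1f} weeks")
```

Under this assumption, a modest-looking difference in residual potency after a few weeks corresponds to a large difference in half-life, which is one way to see why the differences at 37° C matter.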

That is a typical result from the paper: storage of the vaccine with silk, preferably lyophilized, greatly increases the stability of the vaccine. The article includes data up to 45° C, with similar results. Results for two other vaccines they tested are similar. And they also tested two antibiotics; both showed enhanced stability when stored on silk.

Do they understand why this works, why silk stabilizes the materials? Yes and no. For the vaccines, which are proteins, it is likely that the silk immobilizes the material, protecting against heat-induced changes of shape that would cause the vaccine proteins to lose activity. It's also likely that the binding to silk puts the materials in a water-free environment, which may be protective. Even if these general ideas are correct, the scientists do not understand the details, and cannot predict which materials will benefit. Nevertheless, the results so far are encouraging, and the method may well be practical.

News story: New silk technology stabilizes vaccine and antibiotics so refrigeration is not needed. (Phys.org, July 9, 2012.)

The article: Stabilization of vaccines and antibiotics in silk and eliminating the cold chain. (J Zhang et al, PNAS 109:11981, July 24, 2012.) The Introduction is a very readable overview of the work, including the background about the problem of loss of biomaterials due to heat inactivation.

More on vaccines:

* Does it matter what time of day you get a vaccine? (October 26, 2012).

* A better way to deliver a vaccine? (July 25, 2010). This post is on the development of a vaccine delivery system that avoids the use of the traditional needles. I note it here because the authors of the current paper are also working in that area -- and they think the silk-based vaccines would be quite compatible with their delivery system.

The measles vaccine was noted in the post Ten Great Public Health Achievements, 2001-2010 (June 26, 2011).

More measles: What if Mickey Mouse got measles? (January 27, 2015).

More on silk:

* Silk-clothed electronic devices that disappear when you are done with them (October 19, 2012).

* Spiders and violins (May 4, 2012).

Several Musings posts about silk are listed on my page Internet Resources for Organic and Biochemistry under Amino acids, proteins, genes.

More on vaccines is on my page Biotechnology in the News (BITN) -- Other topics under Vaccines (general). There is also a section on that page on Measles.

Habitable Exoplanets Catalog

July 27, 2012

This post is of interest for a couple of reasons. First, the Habitable Exoplanets Catalog exists!

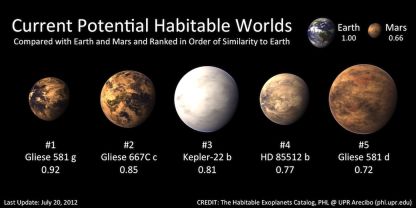

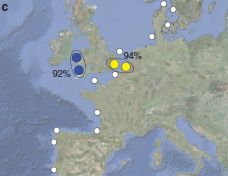

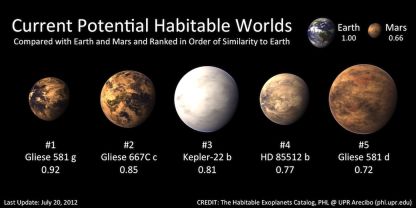

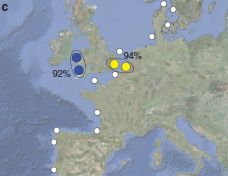

The following figure shows the main part of the Habitable Exoplanets Catalog (HEC). (It is reduced from a figure featured on the HEC main page, listed below. The figure is found at many other pages, both at the HEC site and in stories about HEC.)

The top row provides some background: Earth and Mars, with scores of 1.00 and 0.66, respectively. Scores for what? The Earth Similarity Index (ESI), a measure of habitability. It reflects features that matter for life, such as whether liquid water could be present. The Earth, at 1.00, is the reference point.

The second row shows the only five extra-solar planets now considered to be in the habitable zone -- in order by their ESI score. They have scores from 0.92 to 0.72 -- all better than Mars.

There are only five planets outside our solar system now considered potentially habitable. That is out of 777 confirmed exoplanets, according to a table on the HEC main page. Only five! But remember, only two decades ago, the number of exoplanets we knew about at all was zero. We now know of 777 (confirmed), and 5 of those seem potentially habitable. Look at the table further, and you will see that there are about 2500 exoplanet candidates that have not yet been confirmed; 29 of these may also be candidates for habitability. (It seems that about 1% of exoplanets are being rated as potentially habitable.) Further, they predict there may be 41 habitable moons around those exoplanets. All in all, the catalog suggests there may be 75 habitable exoplanets or exomoons. Remember, this is based on exoplanets that we have some evidence for -- and only a tiny region of space has been carefully examined.
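The arithmetic in the paragraph above can be checked with a few lines of Python. This is a minimal sketch using the counts quoted from the HEC table (July 2012); the 2500 figure for unconfirmed candidates is itself approximate, so the candidate percentage is approximate too.

```python
# Counts as quoted from the HEC main page table (July 2012).
confirmed_exoplanets = 777
confirmed_habitable = 5
unconfirmed_candidates = 2500  # "about 2500", so this is approximate
candidate_habitable = 29
predicted_exomoons = 41  # predicted habitable moons around those exoplanets


def percent(part, whole):
    """Return part as a percentage of whole."""
    return 100.0 * part / whole


confirmed_pct = percent(confirmed_habitable, confirmed_exoplanets)
candidate_pct = percent(candidate_habitable, unconfirmed_candidates)
total_habitable = confirmed_habitable + candidate_habitable + predicted_exomoons

print(f"Confirmed exoplanets rated habitable: {confirmed_pct:.2f}%")
print(f"Unconfirmed candidates rated habitable: {candidate_pct:.2f}%")
print(f"Possible habitable exoplanets + exomoons: {total_habitable}")
```

Both fractions land near 1% (a bit under for the confirmed planets, a bit over for the candidates), and 5 + 29 + 41 gives the total of 75.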

The second reason this is so interesting? Look at the first exoplanet listed. It is Gliese 581g. That exoplanet has already made Musings twice (links at the end). The first time was to report the claim for its discovery, and noting that it may be in the habitable zone. The second time was to report that other scientists questioned whether it existed.

There is a new paper on Gliese 581g, by those who originally claimed its discovery. They address the criticisms, and present more evidence to support their claim. Is this the last word? I doubt it. Thus we are left with an interesting situation: Gliese 581g may be the most Earth-like exoplanet known, or it may not exist. We'll see.

The HEC folks do not pretend to judge this dispute. They catalog what has been reported. The catalog will evolve as new results come in. That there is a catalog of habitable exoplanets is an interesting development. That we are not at all sure what belongs in it is a reflection of the early stage of the field.

HEC web site: Habitable Exoplanets Catalog. (Planetary Habitability Laboratory, University of Puerto Rico, Arecibo.) From the main page: "The Habitable Exoplanets Catalog (HEC) is an online database for scientists, educators, and the general public focused on potential habitable exoplanets discoveries. The catalog uses various habitability indices and classifications to identify, rank, and compare exoplanets, including potential satellites, or exomoons."

Press release about the current work: Five Potential Habitable Exoplanets Now. (HEC, July 19, 2012. Now archived.) This discusses both the catalog and the new paper.

The new article about Gliese 581g. GJ 581 update: additional evidence for a Super-Earth in the habitable zone. (S S Vogt et al, Astronomische Nachrichten (Astronomical Notes) 333:561, August 2012.) There is a preprint, probably in near-final form, freely available at arXiv: copy at arXiv. The paper is, in part, from the Lick Observatory; the Lick, in the San Francisco Bay Area, is associated with the University of California Santa Cruz.

Previous posts about Gliese 581g:

* The first truly habitable exoplanet? (October 12, 2010).

* The first truly habitable exoplanet? Follow-up. (October 26, 2010). (The content of this post has also been integrated into the post listed above.)

Recent post about Kepler mission: A new trick for the Kepler planet-hunters (June 25, 2012).

Another catalog... Mars: craters (August 11, 2012).

And... 124,993 and counting: cataloguing plant species in the Americas (February 26, 2018).

Also see...

* Habitable planets very close to a star (June 19, 2016).

* Most Earth-like (habitable) planets haven't formed yet (October 27, 2015).

* Exoplanet Travel Bureau (February 21, 2015).

July 25, 2012

A Lovelock update

July 25, 2012

We have noted the contributions and views of biologist James Lovelock in previous posts, which are consolidated on the supplementary page Gaia and James Lovelock.

As he approaches his 93rd birthday (tomorrow -- July 26), Lovelock is in the news again. He is writing another book and giving talks and interviews. Among the events was an interview with Jim al-Khalili, a UK physicist who runs the BBC Radio show A Life Scientific. The interview is broad -- about Lovelock's long career. It presents Lovelock as a real person, in his own voice. He is fun -- and provocative.

The BBC interview with Lovelock is available as an MP3 podcast: Lovelock interview. (Jim al-Khalili, BBC, May 8, 2012.) It's a half-hour interview; listening to even part of it is a useful introduction to Lovelock. (You can also go to the web page for the program series and scroll down to the Lovelock interview of May 8, 2012: A Life Scientific.)

Lovelock has been getting recent media attention for modifying some of his views on climate change. Since we alluded to some of his earlier views, it seems proper to note the update. However, I am not sure that one should make much of this. As noted, Lovelock enjoys being provocative. If he brings people into the debate, that is good. But if he becomes the subject of the debate, then that is less good. Lovelock's conclusions, then and now, are his opinions -- opinions of one with much knowledge of the field. But the field is one of great complexity and great uncertainty, and any firm conclusions are questionable. So, get involved, listen to Lovelock's reasons -- and to those of others. Don't put much weight on the opinions of any single person.

News story: Gaia creator rows back on climate. (BBC, May 8, 2012.) One attempt to note Lovelock's views. This story coincides with the above interview, but is based on much more. (The BBC interview noted here actually has very little on climate change.) Again, don't worry much about Lovelock's opinions, but use his provocative pronouncements as an excuse to get more into the complex debate.

This post is listed on the supplementary page Gaia and James Lovelock.

In humans, rare mutations are common

July 24, 2012

That does sound a bit odd, doesn't it? But it makes an important point. It's based on a trio of recent papers, all made possible by the rapidly increasing practicality of sequencing human genomes. The new work reveals some new features simply because there is so much more data than before; we can now see things that are rare.

It is common knowledge that some diseases are caused by our genes. Sickle cell anemia and cystic fibrosis are a couple of examples. In these cases, we know the specific gene variants (alleles) that are responsible, and we can sometimes follow the inheritance of the mutant alleles through families. The frequency of the mutant allele can be substantial -- several percent.

What has been less clear is the importance of alleles that are at very low frequency in the population -- so-called rare alleles. How often do rare alleles cause disease? How much do rare alleles, in combination, contribute to some effect? A broader form of the question might be: how many rare alleles are there? Or, how common are rare alleles?

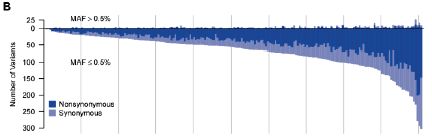

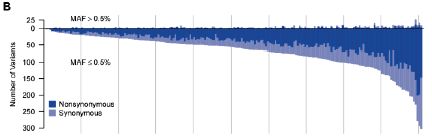

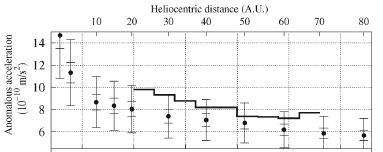

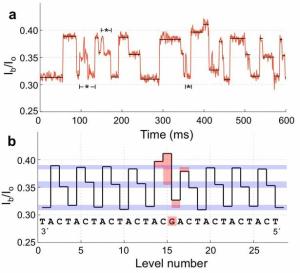

The new papers address the question. The basic approach is massive, detailed sequencing. Here is an example of one of the analyses. In this work, the scientific team analyzed 202 genes from 14,002 people. They also took great care to get high-quality sequencing data; when looking for rare mutations, it is easy to be misled by sequencing errors. The following graph summarizes a massive amount of data from this study.

The graph shows the number of variants (mutations) found in each gene (y-axis) plotted against gene number (x-axis).

The y-axis is a bit unusual. It has a "zero" point, but then the numbers are positive in both directions from 0. It's really a double graph. One thing is plotted upward from 0, and one thing is plotted downward from 0. But what is plotted is itself a simple positive number, in both cases. It is the number of variants (mutations) found in each gene.

Start with the downward part of the graph, which is most visible. We'll look at the total number of mutations found, ignoring the two colors they show.

What they did was to measure the number of variants in each gene, and then list the genes in order by that number. The graph shows how many variants they found in each gene, from genes with few variants on the left to genes with around 300 variants on the right. That is, the x-axis is simply the rank of the gene, in order by number of mutations found. The curve is smooth because they designed it that way. It gives a quick visual impression of how many mutations they found.

Now let's look at what the top and bottom parts of the graph are for. They are labeled MAF > 0.5% and MAF ≤ 0.5%. MAF means "minor allele frequency." That is, the lower curve is for rare alleles -- and there are a lot of them. The upper curve is for more common alleles -- and there are few of them. (The cutoff at 0.5% is arbitrary, but is common. Note that if a particular allele is present at 0.5%, it means that about 1 in 100 of us carry it -- since we each have two copies of each gene.)
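The "1 in 100" figure follows from each of us having two copies of each gene. Here is a minimal sketch in Python, assuming Hardy-Weinberg proportions (random mating, so the two copies are independent); that assumption is ours, not the paper's. The chance that neither copy is the allele is (1 - p)², so the fraction of people carrying at least one copy is 1 - (1 - p)², which is close to 2p when p is small.

```python
def carrier_fraction(allele_freq):
    """Fraction of people carrying at least one copy of an allele.

    Assumes Hardy-Weinberg proportions: each person has two copies of the
    gene, and each copy is independently the allele with probability p.
    """
    return 1.0 - (1.0 - allele_freq) ** 2


p = 0.005  # a minor allele frequency (MAF) of 0.5%
carriers = carrier_fraction(p)
print(f"Carriers: {carriers:.4%} (about 1 in {round(1 / carriers)})")
```

The exact value, 2p - p², is just under 1%, which is why "about 1 in 100" is a fair summary.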

As an example... Look at the gene at the extreme right; that would be gene #202 of their study. There are approximately 300 "rare" variants (read the graph downward), but only "a few" of the common variants (read the graph upward). There are far more rare variants than common variants. And that's the point. That general pattern holds for almost every gene in the study.
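The way the graph is built can be sketched in a few lines of Python. This is a minimal illustration, not the authors' analysis: the gene names and allele frequencies below are invented toy data. Each gene's variants are split at the 0.5% MAF cutoff, genes are ranked by total number of variants (fewest first, as on the x-axis), and the common and rare counts become the upward and downward bars.

```python
# Hypothetical toy data: gene -> list of variant MAFs (as fractions, not percent).
# These numbers are invented for illustration; they are not from Nelson et al.
variants_by_gene = {
    "geneA": [0.0001, 0.0002, 0.03],
    "geneB": [0.0001],
    "geneC": [0.0001, 0.0003, 0.0004, 0.0001, 0.2],
    "geneD": [0.001, 0.0002],
}

RARE_CUTOFF = 0.005  # MAF <= 0.5% counts as "rare", matching the figure labels


def rank_genes(variants):
    """Return (rank, gene, n_rare, n_common) rows, ordered by total variant count."""
    rows = []
    for gene, mafs in variants.items():
        n_rare = sum(1 for m in mafs if m <= RARE_CUTOFF)
        n_common = len(mafs) - n_rare
        rows.append((gene, n_rare, n_common))
    # Fewest total variants first, i.e., left end of the x-axis.
    rows.sort(key=lambda row: row[1] + row[2])
    return [(rank, *row) for rank, row in enumerate(rows, start=1)]


for rank, gene, n_rare, n_common in rank_genes(variants_by_gene):
    # Common variants are plotted upward from 0, rare variants downward.
    print(f"rank {rank}: {gene}  up (common) = {n_common}  down (rare) = {n_rare}")
```

Even in this toy set, the rare counts dominate the common ones for every gene, which is the same pattern the real figure shows on a much larger scale.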

If you want to study the importance of variants of gene #202, it is easy to follow a few major alleles. It is hard to follow alleles that are rare. Yet most of the variation consists of rare alleles. If you carry a mutation in gene #202 (whatever that gene may be), it is likely that your mutation is rare -- and that it is not well-studied.

The figure above is Figure 1B from the paper by Nelson et al.

There are three independent papers on this, all appearing at about the same time. Each has its own approach to generating a large amount of data, but they all end up with about the same conclusions, at least for the basic points. I list here all three papers, with a news story for each; the numbers correspond for the stories and papers. For the basic idea, reading any one of the news stories is good; if you read more of them, all the better. You will see that what I have presented above is just the tip of the iceberg of what these papers say. In addition to the simple conclusion that rare variants are common, they interpret the development of human populations in the light of this information. Be forewarned: it gets pretty heavy! (But it is also fascinating.)

News stories.

1) 'Rare' Genetic Variants Are Surprisingly Common, Life Scientists Report. (Science Daily, May 18, 2012.)

2) Slew of Rare DNA Changes Following Population Explosion May Hold Clues to Common Diseases. (Science Daily, May 17, 2012.)

3) As population exploded, more rare genes entered human genome. (Medical Xpress, May 11, 2012.)

News story accompanying articles 1 & 2: Genetics: Human Genetic Variation, Shared and Private. (F Casals & J Bertranpetit, Science 337:39, July 6, 2012.)

The articles:

1) An Abundance of Rare Functional Variants in 202 Drug Target Genes Sequenced in 14,002 People. (M R Nelson et al, Science 337:100, July 6, 2012.) The example above is from this article.