Home

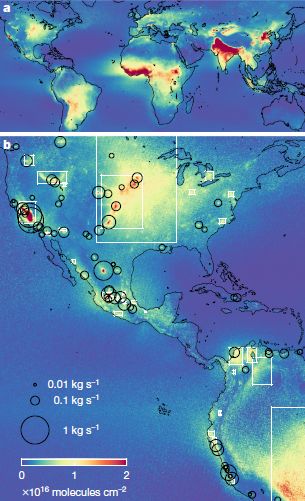

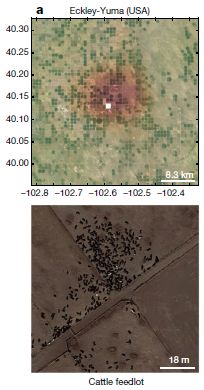

> Musings: Main

> Archive

> Archive for January-April 2019 (this page)

| Introduction

| e-mail announcements

| Contact

Musings: January-April 2019 (archive)

Musings is an informal newsletter mainly highlighting recent science. It is intended as both fun and instructive. Items are posted a few times each week. See the Introduction, listed below, for more information.

If you got here from a search engine... Do a simple text search of this page to find your topic. Searches for a single word (or root) are most likely to work.

Introduction (separate page).

This page:

2019 (January-April)

April 30

April 24

April 17

April 10

April 3

March 27

March 20

March 13

March 6

February 27

February 20

February 13

February 6

January 30

January 23

January 16

January 9

Also see the complete listing of Musings pages, immediately below.

All pages:

Most recent posts

2026

2025

2024

2023:

January-April

May-December

2022:

January-April

May-August

September-December

2021:

January-April

May-August

September-December

2020:

January-April

May-August

September-December

2019:

January-April: this page, see detail above

May-August

September-December

2018:

January-April

May-August

September-December

2017:

January-April

May-August

September-December

2016:

January-April

May-August

September-December

2015:

January-April

May-August

September-December

2014:

January-April

May-August

September-December

2013:

January-April

May-August

September-December

2012:

January-April

May-August

September-December

2011:

January-April

May-August

September-December

2010:

January-June

July-December

2009

2008

Links to external sites will open in a new window.

Archive items may be edited, to condense them a bit or to update links. Some links may require a subscription for full access, but I try to provide at least one useful open source for most items.

Please let me know of any broken links you find -- on my Musings pages or any of my web pages. Personal reports are often the first way I find out about such a problem.

April 30, 2019

Briefly noted...

April 30, 2019

Ricequakes. Studying the real world is important. But studying model systems can be easier, cheaper, and safer. A good model system reveals at least some aspects of the real world system. Example... Studying how rock-filled dams may fail is important. Studying the structure of a bowl of cereal is easier and cheaper (and presumably safer). A recent article is about the latter. It's fun -- and good science.

* News story: Using puffed rice to simulate collapsing ice shelves and rockfill dams. (B Yirka, Phys.org, October 15, 2018.) Links to the article, which is freely available. Included there (as "Supplementary materials") are an audio file and a video file. The audio file lets you listen for two minutes to a model dam collapse system. The five-minute video is speeded up 15x (no sound there). Be patient.

High-voltage thunderstorms: how high?

April 29, 2019

How about 1.3 billion volts?

That's the claim in a recent article. It is based on observations during a major thunderstorm on December 1, 2014, near Ooty, India (elevation 2200 meters, or about 7200 feet). Observations made by GRAPES. Observations of muons.

Here are some results...

The left-hand frame (Fig 2) shows a map of the sky. It is color coded to show the intensity of the muon stream detected during the storm; the intensity is given here as the difference from normal; see the color key at the right. Briefly, green means that the muon stream is normal. Blue means it is low, by as much as 2%. Red would mean an enhanced muon stream, but there are no such readings.

The big picture... There is a region to the right side of the map that is, in general, blue. A region of the sky where the muon stream was less than normal.

As to the sky-map... It is shown as a 13x13 array, giving 169 measurements.

The right-hand frame (Fig 3) summarizes the results over time. The y-axis is the intensity of the muon stream; as in Fig 2, it is shown as the difference from normal: ΔIμ. The x-axis is clock time, shown as universal time (UT) on the day of the big thunderstorm.

You can see that the muon intensity was markedly low for about 20 minutes, from 10:40 to 11:00.

These are Figures 2 (left) & 3 (right) from the article.


That's the muon intensity. This is a muon-observing station. GRAPES = Gamma Ray Astronomy at PeV EnergieS. (It's actually GRAPES-3, for Phase 3 of the project.)

Why? The muons are from cosmic rays; their energy is altered by the electric potential of the thunderstorm cloud. There is a lot of theory behind that, and some empirical calculations. Bottom line... The scientists argue that the observed drop in muon intensity corresponds to a voltage drop of about 900 million volts from top to bottom of the thunderstorm; about 0.9 GV (gigavolts).

Further analysis at high resolution suggested that the voltage drop was as high as 1.3 billion volts (1.3 GV).

Voltage differences in the gigavolt range have long been predicted for such storms, but never observed. In fact, the 1.3 GV observed here is about 10-fold higher than the previous high voltage measurement.

The finding here is certainly interesting. But importantly, the current article opens the door to much further work. The modeling done to relate the observed muon intensity to the structure of thunderstorms will undoubtedly be developed further. And the connection between this kind of measurement, which integrates information over an entire cloud, and the traditional localized measurements from balloons will be explored.

News stories:

* Indian Scientists Measure 1.3-Billion-Volt Thunderstorm, the Strongest on Record. (R F Mandelbaum, Gizmodo UK, March 15, 2019.)

* Focus: Muons Reveal Record-Breaking Thunderstorm Voltage. (M Rini, Physics 12:29, March 15, 2019.)

* How a Space Telescope's Accidental Discovery Overturned Everything we Thought we Knew About Lightning Storms. (E Hook, Physics Central - Physics Buzz Blog, March 11, 2019. Now archived.) Relatively technical, but still very readable. (We also note that this blog item was posted by a Positron.)

The article: Measurement of the Electrical Properties of a Thundercloud Through Muon Imaging by the GRAPES-3 Experiment. (B Hariharan et al, Physical Review Letters 122:105101, March 15, 2019.)

Reference 1 of the current article is an article they note as the "first authoritative study of thunderstorms". Musings, too, has noted that article (though not in a timely manner): Benjamin Franklin and the electrical kite (November 22, 2011).

A recent post about thunderstorms... Lightning and nuclear reactions? (January 28, 2018). This post deals with gamma rays made during thunderstorms. The current article notes that the production of high energy gamma rays requires the high voltages that they have observed.

More about detecting muons... Using your smartphone to detect cosmic rays (April 7, 2015).

Also see... What do we learn from the sulfur isotopes in the California vineyards? (June 28, 2022).

Using caffeine to treat premature babies: risk of neurological effects?

April 27, 2019

Premature babies are at risk for a variety of problems, physical and neurological. The risk increases with earlier delivery.

One risk is apnea, a stoppage of breathing. Apnea is perhaps best known for the form called sleep apnea. The current issue is "apnea of prematurity". A standard treatment for apnea of prematurity is caffeine.

There has been little information about the long term effects of caffeine on neurological development. A new article addresses this issue. The article compares the neurological outcomes depending on whether caffeine was given in the first two days after birth or only after that.

The following table summarizes some key findings...

The table shows the outcomes for two groups of babies, depending on when caffeine treatment was started. "Early" caffeine means that caffeine treatment was started within two days after birth. Each entry is shown as a number and a percentage of the group. For example, the first data entry is 230 (14.9). That means that 230 babies in the early-caffeine group showed the outcome sNDI. That is 14.9% (of the total group of 1545).
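The percentage in that example is easy to check. Here is a minimal sketch in Python; the function name `percent_of_group` is made up for illustration, and the two numbers are the ones quoted just above.

```python
def percent_of_group(count, group_size):
    """Return count as a percentage of group_size, rounded to one decimal place."""
    return round(100 * count / group_size, 1)

# 230 babies with the outcome sNDI, out of 1545 in the early-caffeine group
# (both numbers are from the example in the text).
early_sndi = percent_of_group(230, 1545)
print(early_sndi)
```

The same function can be applied to any other entry in the table, given its count and group size.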

For a quick overview, compare the percentage numbers in the two columns. For each outcome, they are somewhat smaller for the early-caffeine group.

What are the outcomes measured? NDI = neurodevelopmental impairment. That's an umbrella term; it's not clear from looking at the table, but all the other outcomes listed are sub-types of NDI. sNDI = significant neurodevelopmental impairment. CP = cerebral palsy.

The premature births considered here were those occurring at less than 29 weeks.

The analyses here were carried out at about two years of age.

This is trimmed from Table 3 of the article.


The big picture, then, is that premature babies with early caffeine treatment fared slightly better than those who were treated only later. The effect is small, and at least some of the results are not statistically significant.

The work reported here is not from a controlled double-blind study. It is based on analyzing the records available.

Considering the two previous points, the authors are positive but cautious. They conclude that the early caffeine appears helpful; importantly, it is not harmful. Early intervention with caffeine is clearly helpful for lung function; there is no indication it is harmful to neurological development. Additional, more controlled, studies would be welcomed.

News stories:

* Developing Brains of Preterm Babies Benefit From Caffeine Therapy. (Neuroscience News (University of Calgary), December 12, 2018.)

* Caffeine. Give it and give it early. (All Things Neonatal, January 10, 2019.) This is a blog page by an anonymous author who is clearly in the field. The page includes earlier posts on the topic by the same person, dating back to 2015. The current article is the subject of the first blog (at least for now).

The article: Early Caffeine Administration and Neurodevelopmental Outcomes in Preterm Infants. (A Lodha et al, Pediatrics 143:e20181348, January 2019.)

More about premature birth...

* Kangaroo mother care for premature babies: start immediately (June 27, 2021).

* Association of mother's sleep disorders with premature birth? (October 13, 2017).

* Lamb-in-a-bag (July 14, 2017).

* Imaging of fetal human brains: evidence that babies born prematurely may already have brain problems (March 10, 2017).

* When should the eggs hatch? (June 11, 2013).

* The problem of human birth (July 8, 2011).

Among posts on caffeine... How caffeine interferes with sleep (December 11, 2015).

More... Caffeine: is it good for solar cells? (May 13, 2019).

More about brain-related issues is on my page Biotechnology in the News (BITN) -- Other topics under Brain (autism, schizophrenia). It includes a list of related Musings posts.

April 24, 2019

Briefly noted...

April 24, 2019

Cassava poisoning. Cassava is a major starch crop. The part that is eaten is a tuber, similar to a potato. It contains high levels of cyanogenic glycosides (cyanide attached to sugars). Those compounds are part of why cassava is a relatively pest-resistant crop. They are also why cassava is quite poisonous. Even modern low-cyanide varieties must be treated to remove toxins before being eaten. The article noted here is about an incident where that obviously did not happen. (Cassava is perhaps best known to Americans in the form of tapioca.)

* I have no news story, but the article is short, quite readable, and freely available: Outbreak of Cyanide Poisoning Caused by Consumption of Cassava Flour -- Kasese District, Uganda, September 2017. (P H Alitubeera et al, Morbidity and Mortality Weekly Report (MMWR) 68:308, April 5, 2019.) The article contains a photo of cassava tubers, and also a nice figure with some important epidemiological data.

* More cyanide from our food: Domestication of the almond (August 26, 2019).

Can Google Translate properly translate medical instructions?

April 23, 2019

A patient receives instructions upon leaving the hospital. What if the discharging physician doesn't speak the patient's language? One possibility is to run the doctor's instructions through a computerized translation system, such as Google Translate (GT).

A recent article examined how well GT works. The current analysis was stimulated by a recent upgrade to GT.

The general approach was to take actual English instructions, from recent experience in the authors' hospital, and have GT translate them into Spanish and Chinese. Experienced humans then translated the GT translations back into English. Each resulting back-translation was compared to the original.

Here is a summary of what was found...

Two results are shown for each language tested: the frequency of inaccurate translations, and the frequency of translations that could be harmful.

The overall result is that most, but not all, of the instructions were translated accurately. And some of the inaccurate translations could be harmful.

For example, out of 647 sentences translated to Spanish, 53 (8%) were judged to be inaccurate. About a quarter of those (15; 2% of the total) were judged to be potentially harmful.

The data set examined was 100 discharge instructions, consisting of 647 sentences.
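Those Spanish numbers can be reproduced with a few lines of arithmetic. This is only a sketch in Python, using the counts quoted above; the variable names are my own.

```python
TOTAL_SENTENCES = 647   # sentences in the 100 discharge instructions
inaccurate = 53         # Spanish translations judged inaccurate
harmful = 15            # of those, judged potentially harmful

# Both rates are expressed as a percentage of all sentences translated.
inaccurate_pct = 100 * inaccurate / TOTAL_SENTENCES
harmful_pct = 100 * harmful / TOTAL_SENTENCES

# Fraction of the inaccurate translations that were potentially harmful.
harmful_share_of_inaccurate = harmful / inaccurate

print(f"{inaccurate_pct:.0f}% inaccurate, {harmful_pct:.0f}% potentially harmful")
print(f"{harmful_share_of_inaccurate:.2f} of the inaccurate ones were potentially harmful")
```

Note that the "about a quarter" is a share of the 53 inaccurate sentences, whereas the 8% and 2% are both shares of all 647 sentences.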

This is the top of Table 1 from the article.


What is the conclusion? The authors are positive, with caution. (And the results are better than in previous tests, with earlier versions of GT.) But perhaps focusing on the numbers misses the point. Do we really want the discussion to be about what level of harm due to translation errors is acceptable?

In fact, the authors go on and look at the nature of the errors. The rest of the Table classifies them. A second table gives some examples (some of which are included in the news stories listed below).

What's striking in the analysis is how many of the translation problems originate from poorly written originals. Problems range from simple typos (GT translates what it sees, without judgment) to complex, jargon-laden sentences.

Perhaps Google Translate should include a probability score giving its confidence in its translation. A low score would trigger a re-examination of the original. Perhaps at times the translating computer should even say... Doc, I have no idea what you are trying to say. Perhaps even instructions for English speakers should be run through Google Translate simply as a clarity check.

News stories:

* Can Google Translate be trusted for medical advice? (SiliconIndia, February 27, 2019.)

* Google Translates Doctor's Orders into Spanish and Chinese with Few Significant Errors -- Study Finds Most Errors Occur When Doctors Write Long, Jargon-Filled Sentences. (L Kurtzman, University of California San Francisco, February 25, 2019.) From the university.

The article: Assessing the Use of Google Translate for Spanish and Chinese Translations of Emergency Department Discharge Instructions. (E C Khoong et al, JAMA Internal Medicine 179:580, April 2019.)

Why some citrus fruits are so sour

April 22, 2019

You may think you know why (some) citrus are very sour. They are acidic, and the acidity is due to citric acid. That's not incorrect, but it is insufficient to explain what is observed. The pH of some citrus fruits, such as sour oranges or lemons, is lower than can be maintained by ordinary cells.

A recent article explores the basis of acidity of citrus. The scientists find that the very sour fruits pump hydrogen ions (protons) into the cellular vacuoles.

The following figure provides some of the evidence...


This pair of figures is for seven varieties of oranges.

Part b (top) shows the pH of the fruits. They fall into two groups: low pH (near 3) and high pH (near 6). Those are for oranges known to be sour or sweet, respectively.

Part c (bottom) shows the level of expression for three genes. These are labeled across the top, and the results are shown in three shades of purple. For now, just consider them all together. All three of these genes show high expression in the first four oranges (to the left), and extremely low expression in the last three (to the right).

The level of gene expression was determined by measuring the amount of messenger RNA.

The high pH for some oranges means that they are "not sour". They are often called sweet, by comparison, simply meaning non-sour. (Some have a high sugar content.)

This is part of Figure 5 from the article.


What are these three genes? Two of them (CitPH1 and CitPH5) are known to code for "proton pumps". These proteins use energy to lower the pH of the cellular vacuoles. (One of the three genes is not well characterized.)

It's interesting that the sweet oranges have near zero levels of all three of the genes being examined. How does a single mutation do that? It involves a regulatory mutation. A low level of a protein required to activate all three genes could give such a pattern. In fact, the article provides evidence to identify the regulatory genes that are affected.

The article contains similar data sets for lemons, pummelos, and limes. The general pattern holds for each case. The same genes seem to be involved in all citrus groups.

All the sweet citrus variants have a low content of citric acid. The emerging story is that low pH is caused by the proton pumps, which acidify the vacuoles. The steep pH gradient then promotes entry of citrate. That is, the high content of citric acid in sour citrus is a consequence of acidification, not the cause.

This story may sound familiar. The same proton transporters are involved in determining the color of petunia flowers. That was the subject of an earlier Musings post [link at the end]. The current citrus work was guided by earlier petunia work in the same lab. (The regulatory genes for these proton pumps, too, seem to be the same in petunia and citrus. Various mutations can occur to reduce proton pump activity, leading to higher pH.)

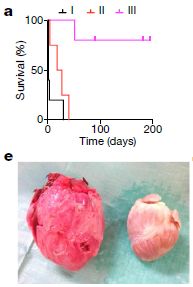

News stories:

* UvA biologists solve the long-standing riddle how lemons can be so extremely sour. (University of Amsterdam, February 26, 2019.) From the lead institution.

* Source of citrus' sour taste is identified. (Science Daily (University of California Riverside), March 5, 2019.)

The article, which is freely available: Hyperacidification of Citrus fruits by a vacuolar proton-pumping P-ATPase complex. (P Strazzer et al, Nature Communications 10:744, February 26, 2019.)

Background post: The petunia connection... pH and the color of petunias (March 26, 2014).

More about citrus...

* Caffeine boosts memory -- in bees (April 12, 2013).

* Grapefruit and medicine (March 26, 2012). Links to more.

More citric acid... Using mass spectrometry to analyze a poem (October 14, 2018).

The effect of prior dengue infection on Zika infection

April 20, 2019

Dengue and Zika are related viruses (of the flavivirus group). It is known that their antibodies cross-react. Thus one might wonder whether prior infection with dengue would protect against Zika. But the story is more complicated, because of an unusual phenomenon in dengue: infection with one type of dengue virus can make a subsequent infection with another type of dengue worse. Does this phenomenon carry over to Zika? If so, how?

Musings has noted some relevant lab work on these questions [link at the end]. However, ultimately what matters is what happens in actual human populations. A recent article addresses that. A team of scientists was studying the health of the population in a small neighborhood in a Brazilian city when Zika came along. They already had a large collection of serum samples with high coverage of the population. A study of the dengue-Zika interaction built on that collection. (Dengue was already common there.)

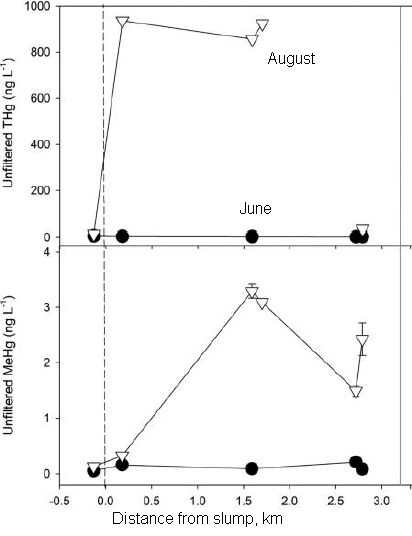

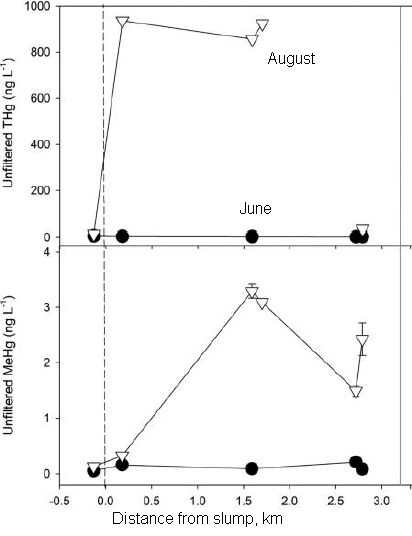

The following graph summarizes what they found. I should emphasize that the graphs here are not experimental data, but modeling results that followed analysis of the large and complex data set.

The y-axis for all the graphs is the probability of Zika infection. Zika infection is judged here by the presence of Zika-specific antibodies.

The left-hand graph shows the expected age distribution. Not particularly interesting.

The other two graphs show the probability of Zika infection as a function of one or another measurement of pre-existing antibodies against dengue. The two curves are very different! One shows dengue antibodies correlating with reduced Zika; the other shows the dengue antibodies correlating with increased Zika infection.

This is slightly modified from Figure 4A of the article. I have added back the x-axis labeling (which was below Part B).

Note that both scales for levels of antibodies against dengue are log scales.


What are these two dengue measurements? One is the overall level of antibody against a particular viral protein. That's what the middle graph is about. Overall, having antibodies against dengue correlates with protection against Zika. It is likely this is due to the dengue antibodies directly protecting against Zika.

The right-hand graph is for a particular dengue antibody, called IgG3. Higher levels of this antibody correlate with a higher probability of Zika infection.

What does this mean? We don't know. It's not even clear that it relates to the already-known phenomenon of interaction between dengue strains. For now, the antibody IgG3 is a biomarker: it correlates with something interesting, but we don't know why. The authors note that this antibody is a marker for a recent dengue infection. Again, the relevance of that observation is not clear. (For example, it might mean that people with recent dengue infections have a higher exposure to mosquitoes, and hence to mosquito-borne diseases.)

The work here shows us, once again, that the dengue-Zika system is complex.

The analysis produced one further result, one not entirely unexpected. Most people are now immune. That's why the Zika epidemic in that area is largely over.

The current article does not address the severity of the Zika infections. That aspect will be addressed in future analysis of the study group.

News stories:

* Prior dengue infection protects against Zika. (Science Daily (University of Pittsburgh), February 7, 2019.)

* Dengue Immunity Provided Protection Against Zika Virus. (SciTechDaily (M Greenwood, Yale University), February 11, 2019.)

The article: Impact of preexisting dengue immunity on Zika virus emergence in a dengue endemic region. (I Rodríguez-Barraquer et al, Science 363:607, February 8, 2019.)

A background post about the interaction of dengue and Zika viruses, in an animal model: Can antibodies to dengue enhance Zika infection -- in vivo? (April 15, 2017).

Exploring another possible interaction between different flaviviruses: Does vaccination against yellow fever affect incidence of severe dengue? (October 4, 2019).

More about the viruses is on my page Biotechnology in the News (BITN) -- Other topics under Dengue virus (and miscellaneous flaviviruses) and Zika. Each of those sections includes a list of Musings posts.

April 17, 2019

Briefly noted...

April 17, 2019

A new form of calcium carbonate. It's a well-known chemical, and yet we now learn of a new form of it. Anhydrous CaCO3 forms three types of crystals (calcite, aragonite, and vaterite). Two hydrates are also known, with one or six waters per CaCO3 unit. Now, scientists report a third hydrate, called a hemihydrate, with one water molecule per two CaCO3 units: CaCO3.(1/2)H2O. (They even have a hint of another hydrate, CaCO3.(3/4)H2O, but with limited evidence so far.)

* News story: A new calcium carbonate crystalline structure. (Max Planck Institute of Colloids and Interfaces, Potsdam-Golm, March 1, 2019.) Refers to the article; here is the article link.

How hot is a landslide?

April 16, 2019

In 2008, a chunk of rock slid down Daguangbao mountain (China), triggered by a major earthquake. The following figure, from a recent article analyzing what happened, summarizes the event...

The figure is a side-view diagram of the event. Elevation is shown on the y-axis, horizontal position on the x-axis.

The simple story is that a chunk of land, shown in color, slid down to where it is shown here -- from the position immediately "above" that (and to the left) in the figure. (The source region, roughly oval, is outlined.)

Much of the chunk is substantially intact; that is coded here by the regular brick pattern.

This is Figure 2 from the article.


There is nothing particularly unusual about the general picture; that's the nature of landslides. But this event was an unusually large landslide, with over a cubic kilometer (10^9 cubic meters) of material sliding down.

In an effort to understand how such mega-landslides work, a team of scientists has developed a lab model, in which they can observe lab-scale events under their control.

The following figure shows an example of their results. The experimental conditions were chosen to mimic the forces thought to be involved in a mega-landslide such as the one shown above.


The figure shows two types of measurements during one such simulated landslide.

The x-axis, labeled "shear displacement", records the course of the event.

Two of the curves are for the temperature (T) at two locations. These are the two higher curves (blue and green). See the right-hand y-axis for the T scale.

One curve (the lower one, in red) is for the concentration of carbon dioxide. See the left-hand y-axis for the CO2 scale.


You can see that T rises to 1200 °C. And that CO2 rises.

This is slightly modified from Figure 8c of the article. I added the label T for the right-hand y-axis scale.


Such an event generates heat. It comes from the friction, of course. But the amount of heat generated is perhaps surprising: enough to decompose the carbonate-containing rock (limestone and dolomite), releasing CO2.

From the lab and field observations together, the scientists estimate that the actual T at the sliding surface during the event was at least 850 °C.

Such information led the scientists to suggest that minerals are decomposing -- and re-crystallizing -- during the sliding. That led them to examine some of the material from the actual event; they saw clear signs of such mineral changes.

The scientists suggest that superheated steam, the CO2 (supercritical) fluid, and the complex process of mineral decomposition and re-crystallization are all part of what reduces the friction, promoting further movement of the landmass.

That is, one can begin to put together a story of what happens in such an event... The earthquake knocks loose a piece of rock. It slides down, with considerable friction, generating heat. The heat is enough to cause changes in the rock, which lead to reduced friction -- and enhanced acceleration of the sliding rock. It is the special nature of a large landslide that it generates enough heat to cause these changes, and thus lead to the additional acceleration -- and devastation if anything is in the way.

Studying landslides is not easy. The article here is pioneering work to develop a lab model. It's fascinating, and apparently productive.

News story: The giant Daguangbao landslide: superheated steam and hot carbon dioxide. (D Petley, Landslide Blog (AGU), February 18, 2019.) Excellent overview.

The article: Superheated steam, hot CO2 and dynamic recrystallization from frictional heat jointly lubricated a giant landslide: Field and experimental evidence. (W Hu et al, Earth and Planetary Science Letters 510:85, March 15, 2019.)

A previous post that mentioned landslides: Lutetia: a primordial planetesimal? (February 13, 2012).

A possible cause of certain unusual earthly landslides is presented in the post: Briefly noted... item #1 (August 15, 2018).

The rising rate of caesarean section births: an intriguing correlation

April 15, 2019

Caesarean section (C-section) birth allows a baby to be born when the natural process would be medically impossible or unwise. It also allows birth to be arranged for the convenience of those involved (other than the baby).

The frequency of C-section births has risen over recent decades. The reasons are not entirely clear, and may be complex.

A recent article makes an interesting observation about the frequency of C-section births. The authors show that it correlates with the rate of change of height in the (adult) population.

The following graph shows the trend, and gives us a chance to explain what the height variable means.


The graph shows the frequency of C-section births (y-axis) vs (adult) body height change 1971-1996, in centimeters per year (x-axis).

Each country's data is shown as a single point. Country names are shown, though you can't read most of them here.

The striking observation is that there really is a trend, which holds for almost all countries examined.

There is no formal definition of outliers here. One could make the case that only one country is far off the main trend line. Or perhaps three -- all with higher than expected rates of C-section births.


The data for frequency of C-section births is for 2005-2017. At least approximately, women giving birth during that time were born during the period of the growth data.

This is reduced from Figure 2 of the article.

If you want to see more detail, here is the full-sized Figure 2 [link opens in new window].

In the article pdf, the text labels on the figure (i.e., the country names) are searchable.


What does the x-axis variable mean? It is about the average height of the (adult) population. For example... Consider a country where the average height of its people increased by 2.5 cm (about 1 inch) over the period examined. That is a 25 year period, so the population height increase is 0.1 cm/yr.

In some countries, the average population height is decreasing, by as much as 0.17 cm/yr. If that trend continues, the people of such a country would be 1 meter shorter in about 600 years -- and would reach height zero in about a thousand years. Extrapolation is fun!
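For those who want to play with the extrapolation, here is a minimal sketch in Python. The function name is made up, and the starting average height of 170 cm is my assumption for illustration; it is not a number from the article. The rates are the ones given above.

```python
def years_to_change(height_change_cm, rate_cm_per_year):
    """Years needed for average height to change by height_change_cm at a constant rate."""
    return height_change_cm / rate_cm_per_year

# The example country: 2.5 cm gained over the 25 years from 1971 to 1996.
rate_up = 2.5 / 25                         # cm per year

# The fastest decline noted above: 0.17 cm per year.
years_1m = years_to_change(100, 0.17)      # time to become 1 meter (100 cm) shorter

# ASSUMPTION: a starting average adult height of 170 cm (illustrative only).
years_zero = years_to_change(170, 0.17)    # time to reach height zero

print(f"rate of increase: {rate_up:.2f} cm/yr")
print(f"1 meter shorter in about {years_1m:.0f} years")
print(f"height zero in about {years_zero:.0f} years")
```

A constant rate is, of course, the whole (unrealistic) premise of the extrapolation.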

What's going on? Why is the rate of C-section births related to population height? One might guess that it has something to do with the development status of the country. Increasing height might reflect better nutrition, for example.

The authors do some statistical manipulations, to try to sort out other possibly relevant factors. They correct their data for some variables relating to the country status. (These include, without details here... "obesity and diabetes rates, mean age of the mother, average female body height, HDI, HAQ Index and national HE"; from the second paragraph of "Results".)

That leads to Figure 3, which is linked here in the full-size version: Figure 3 [link opens in new window].

Figure 3 shows what is left after correcting for other known variables. You can see that there is still a similar trend line. The statistics suggest that the current variable, change in population height, accounts for about a third of the change in C-section frequency.

The authors suggest a reason for the effect. In a time of improving conditions, the fetus will be "more improved"; it is one generation younger than the mother. Thus a mismatch between the size of the fetus and the mother (the birth canal) is more likely. Note the distinction here between good conditions and improving conditions; the latter results in the mismatch between mother and fetus.

We should clarify and caution... What the re-analysis shows is that about 2/3 of the effect can be accounted for by other variables. About 1/3 is not accounted for by the other variables they examine. Since height is their focus, for the moment the effect seems correlated with height. But maybe there is something else, correlated with height, that is actually the more relevant factor. The statistical analysis helps us sort out the importance of some variables, to the extent we have good data for them. But it can't say anything about variables that are not tested.

Data. Statistics. Correlation. What does it mean? Scientific articles usually offer interpretation, not just data. Indeed, the authors here have an interpretation, as we noted. Whether their interpretation is correct or not, the data and the correlation are there. People will be intrigued by them, and will explore what they mean.

News stories:

* Caesarean rates related to better maternal nutrition, study finds. (NutraIngredients, February 12, 2019.)

* Changes in Height Linked to Increased C-section Rates -- Countries with populations whose average adult height grew late last century are more likely to have high rates of babies delivered surgically. (A Olena, The Scientist, February 6, 2019. Now archived.) Includes a good discussion of what it might mean.

The article: Secular changes in body height predict global rates of caesarean section. (E Zaffarini & P Mitteroecker, Proceedings of the Royal Society B 286:20182425, February 6, 2019.)

More about birth problems: The problem of human birth (July 8, 2011).

Posts on the implications of C-section delivery for the babies' gut microbiome:

* The microbiome of babies born by C-section (November 2, 2019).

* Your gut bacteria: where do you get them? (July 30, 2010).

More about human height: Is being tall bad for your health? (July 12, 2022).

Restoring ion transport in cystic fibrosis patients -- using a pore-forming drug?

April 12, 2019

Cystic fibrosis (CF) is a genetic disease, caused by mutations in the gene CFTR. It's sometimes said that CFTR codes for a channel for chloride ions; in fact, a common feature of CF patients is that their sweat is quite salty. But the CFTR channel does more than transport chloride. It seems to be a fairly general channel for anions. Of particular importance, it transports bicarbonate ion (HCO3-); CF patients have an altered pH of their cellular secretions because of the lack of bicarbonate transport.

CFTR? That's cystic fibrosis transmembrane conductance regulator.

A new article offers a new approach for treating CF. It's almost as simple as... punch some holes in the cell membranes so the anions can get through.

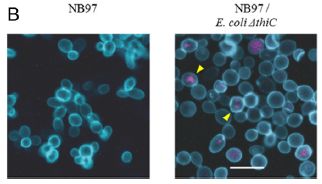

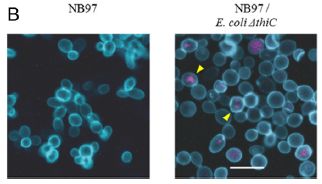

The following figure illustrates the problem -- and the suggested approach. The experiment here is in vitro, with cultured lung tissue from people with a particular form of CF.

The figure shows the pH of the ASL for such CF tissue, under different conditions. ASL? That's airway surface liquid.

You can see that the pH for the first (left-hand) condition is low, whereas the next two have higher pH.

The first is with no treatment (both "minus" in the key at the bottom).

The middle condition involves a drug combination labeled "Iva + fsk"; the right-hand condition involves "AmB". Both work. Both raise the pH by about 0.2 units.

This is Figure 1a from the article.
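For a sense of scale: pH is a logarithmic measure, pH = -log10 of the hydrogen ion concentration (strictly, its activity). The sketch below shows what a rise of about 0.2 pH units means in concentration terms. The function name is only for illustration.

```python
# pH = -log10([H+]), so a rise of delta_ph divides [H+] by 10**delta_ph.

def h_ion_fold_decrease(delta_ph):
    """Factor by which [H+] drops when pH rises by delta_ph units."""
    return 10 ** delta_ph

fold = h_ion_fold_decrease(0.2)        # roughly 1.6-fold
percent_drop = (1 - 1 / fold) * 100    # a drop of somewhat over a third

print(f"{fold:.2f}-fold lower [H+], a {percent_drop:.0f}% decrease")
```

So a seemingly small shift on the pH axis corresponds to a substantial change in the acidity of the airway surface liquid.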

Both work. But there is an important difference, which you cannot tell from what has been presented so far. The first of those drugs is an established treatment for CF -- but it works only for people with certain specific CF mutations. The second drug is what they are focusing on here. It might be expected to treat CF regardless of the mutation. Indeed, further work in the article supports that prediction.

The header for the graph identifies the cell line and the CF mutations it carries. CuFi-4 is the cell line. It is heterozygous for each of two CF mutations: G551D/ΔF508. The CFTR protein resulting from the first of those mutations can be rescued by the Iva drug.

That second drug, AmB, is amphotericin B. It is a well-known drug, for reasons that have nothing to do with CF; it's an anti-fungal agent. It's known to make membranes leaky, though its effect on fungi is more complicated than that. It's also known to be very toxic, and must be given with great care.

Can a drug that makes membranes leaky be used to treat CF patients? There is some logic, but it should also be clear that this could be a risky approach. The preliminary tests, such as the one shown above, are encouraging.

The following figure shows a test in an animal model...

In this test, pigs carrying mutations for cystic fibrosis were used. The drug tested here is AmBisome, a commercial formulation of amphotericin B.

Data for ASL pH is shown for each pig separately. There is a red point before giving the drug and a yellow point after the drug; the two points for each pig are connected by a line.

You can see that the pH increased in each pig tested, by an amount consistent with the in vitro experiments. (The black bars show the mean for each treatment.)

This is Figure 3d from the article.

These are interesting and encouraging results. Drugs such as ivacaftor ("iva" in the top figure) have established the possibility of restoring channel function in CF patients. But drugs of this type act by stabilizing a particular mutant form of the CF protein. The current work opens up the possibility of a general stimulation of ion transport, independent of the specific defect that caused the problem.

I have focused on the pH effect here. Some of the discussion of the work is about restoring anti-bacterial defenses. That's important, of course; it follows from restoring the pH.

Whether amphotericin B itself will turn out to be a practical drug for this use remains to be seen. It is a drug we know a lot about, including its toxicity. The type of long-term use needed for treating CF patients must be of some concern. The authors suggest that our understanding of the toxic effects of AmB has progressed to the point where it can be managed. But whether AmB itself is the answer, it seems to be at least a clue, which can guide the development of better pore-forming drugs.

News stories:

* Scientists find new approach that shows promise for treating cystic fibrosis -- NIH-funded discovery uses common antifungal drug to improve lungs' ability to fight infection. (NIH, March 13, 2019.) From the major funding agency. (The page gives incorrect authorship for the article, referring to the group leader rather than the lead author. The information and link are otherwise fine.)

* Amphotericin Holds Promise as Treatment for All CF Patients, Preliminary Study Shows. (A Pena, Cystic Fibrosis News Today, March 19, 2019.)

* News story accompanying the article: Medical research: Fighting cystic fibrosis with small molecules. (D N Sheppard & A P Davis, Nature 567:315, March 21, 2019.)

* The article: Small-molecule ion channels increase host defences in cystic fibrosis airway epithelia. (K A Muraglia et al, Nature 567:405, March 21, 2019.)

More on cystic fibrosis:

* How our immune system may enhance bacterial infection (September 19, 2014).

* Cystic fibrosis: treating the underlying cause -- for some people (November 13, 2011). This post is about the type of mutation-specific CF drug used as the control in the first figure here. In fact, it is about the specific drug used here, ivacaftor.

April 10, 2019

Briefly noted...

April 10, 2019

Denisova Cave. It's best known to many as the source of the finger bone that still defines the Denisovan line of man. In fact, it has proven to be the source of diverse human fossils, yet much about the cave and about the bones still is mysterious. A recent news feature explores the significance of the cave.

* News feature, freely available: Siberia's ancient ghost clan starts to surrender its secrets -- A mysterious group of extinct humans known as Denisovans is helping to rewrite our understanding of human evolution. Who were they? (E Callaway, Nature News, February 27, 2019.) In print, with a different title: Nature 566:444, February 28, 2019.

* Among posts about Denisovans... The Siberian finger: a new human species? -- A follow-up in the story of Denisovan man (January 14, 2011).

* and... Denisovan man: beyond Denisova Cave (May 7, 2019).

GO dough

April 9, 2019

You know what you can do with bread dough. Now just think what you might be able to do with GO dough.

For many years, graphene has seemed to be a wonder material. However, it has achieved limited use, partly because it is so hard to handle. Now, a team of scientists reports making a dough form of graphene oxide (GO).

The following figure shows some properties of mixtures of GO and water, over a range of concentrations.

The left side (part e) shows the viscosity of the mixtures. The right side (part f) shows the stiffness. In both cases, the x-axis is the mass percent of GO in the mixture, but the two graphs are for different concentration ranges.

The viscosity graph shows a rapid rise, starting at about 2% GO. Instead of having a free-flowing mixture, a gel is formed at higher concentration of GO.

The stiffness graph shows a second rise, starting at about 50% GO. The mixture becomes solid above 60% GO.

Between about 20% and 60% GO the mixture is effectively a "soft dough".

This is part of Figure 1 from the article.
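The concentration ranges described above can be collected into a simple lookup. This only summarizes the approximate numbers quoted in this post. The boundaries are read off graphs and are not sharp transitions, and the regime names other than "soft dough" are loose labels for illustration, not terms taken from the article.

```python
def go_water_regime(go_mass_percent):
    """Rough physical form of a GO/water mixture at a given GO mass percent.

    Boundaries (about 2%, 20%, 60%) are the approximate values quoted
    in the text; treat them as fuzzy.
    """
    if not 0 <= go_mass_percent <= 100:
        raise ValueError("mass percent must be between 0 and 100")
    if go_mass_percent < 2:
        return "free-flowing dispersion"
    if go_mass_percent < 20:
        return "gel"
    if go_mass_percent <= 60:
        return "soft dough"
    return "solid"

for pct in (1, 10, 40, 80):
    print(pct, go_water_regime(pct))
```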

Dough. GO dough.

You know what you can do with dough.

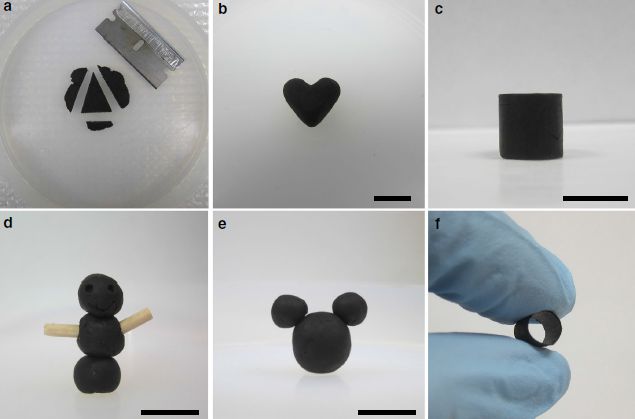

The following figure shows some examples, with the authors' own figure legend as explanation...

This is Figure 3 from the article.

Here is the figure legend from the article... "Fig. 3 GO doughs are highly processable and versatile. GO doughs can be readily reshaped by a cutting, b pinching, c molding, and d carving. GO doughs can be easily connected together d, e or with other solid materials d using the wooden sticks as an example. f A tubular GO structure can be prepared by molding a GO dough around a rod, demonstrating the versatility of using GO doughs to make 3D architectures that are otherwise challenging to obtain. Scale bars in b, c, d, and e are 1 cm."

If you don't see a couple of dark dots near the top of the structure in part d, try a different viewing angle.

GO dough has no ingredients other than the GO and water. There are no "binders" that need to be removed for later use.

Making GO dough is not quite as simple as it looks above. It required some development to learn good procedures for moving between the various GO forms. But now it should be practical and useful. GO is a common precursor for graphene itself, and GO dough is a step toward making it convenient to handle.

News stories:

* Graphene Play-Doh: New Plasticine-Like Formulation Could Significantly Boost Graphene Industry. (D O'Donnell, Evolving Science, January 29, 2019.) Some of the writing is awkward, but overall this is a good overview of the new work, with good context.

* GO dough stands poised to bring graphene and its awesome properties into your life. (A Micu, ZME Science, January 31, 2019.)

The article, which is freely available: Binder-free graphene oxide doughs. (C-N Yeh et al, Nature Communications 10:422, January 24, 2019.)

Recent posts on graphene and GO include...

* Coloring with graphene: making a warning system for structural cracks? (June 2, 2017).

* Water desalination using graphene oxide membranes? (April 29, 2017).

Also see: How to mold glass (June 19, 2021).

Posts about graphene are listed on my page Introduction to Organic and Biochemistry -- Internet resources in the section on Aromatic compounds.

That section also notes other graphene work from the same lab, specifically the development of graphene for use as a hair dye. That lab, led by Jiaxing Huang, Professor of Materials Science at Northwestern University, has a knack for doing things that are both "fun" and serious science. (That work was also noted in Musings: Briefly noted... (September 26, 2018).)

What if we gave mosquitoes anti-malarial drugs?

April 7, 2019

Reducing the incidence of malaria in mosquitoes may not strike you as a priority issue. I'm not even sure it is a problem for the mosquitoes. But they do get infected -- and they then transmit the parasite to people. If we could reduce growth of the parasite in the mosquitoes, it should reduce disease transmission.

A new article explores the possibility, with some encouraging results. For example...

The figure shows the results from two experiments to test whether an anti-malaria drug could reduce the growth of the parasite in the mosquitoes.

Let's jump to the results; we'll fill in the experimental details later.

Look at the main graph for part c (left side; labeled "24 h pre-infection"). There are two sets of data points there, one for the control (left set), and one for a treatment with an anti-malaria drug ("ATQ" = atovaquone). The data points are for counts of the parasite in individual mosquitoes; the y-axis is labeled, a bit cryptically, "oocyst intensity".

There are a lot of parasite cells (the oocysts) in the control mosquitoes. There are none in the treated group.

Underneath the graph are pie charts. They show the "prevalence": the percentage of the mosquitoes with parasite oocysts. 87% in the control, zero in the treated mosquitoes.

Counting oocysts, in the mosquito gut, is for convenience. That's not the form of the parasite that is transmitted to humans by the bite.

This is part of Figure 2 from the article.

Treatment of the mosquitoes with an anti-malaria drug worked.

Part d (right side) shows a second experiment; it is similar, but a little different. The results are qualitatively the same.

What did the scientists do in the experiment discussed above? And what was the difference between the two experiments?

Each part of the figure has a little cartoon at its left side, outlining the experiment. For part c, it starts with a mosquito and a green dish with some material treated with the drug. The mosquitoes are exposed to the drug-treated surface for six minutes. 24 h later, the mosquitoes get some malaria-infected blood (red dish). And 7 days later they are analyzed for the load of parasites.

The experiment for part d was similar, except that the order of the two dishes is reversed. (The timings are a little different.) The mosquitoes are first fed the infected blood. 12 h later, they get exposed to the drug-treated surface.

The headings for the two graphs describe the drug treatment: 24 h pre-infection in part c; 12 h post-infection for part d. Both work, about equally well. That is, these results suggest that treatment with the drug before or after the mosquito is infected can be effective.

What do the scientists have in mind for making use of this information? People in areas where there is high transmission of disease by mosquitoes often use bed nets. The nets physically limit the mosquitoes' access to their blood meal. Effectiveness of the bed nets is enhanced by impregnating them with insecticides, to kill the mosquitoes. That helps, but it also leads to the development of resistance by the mosquitoes. What the scientists envision is adding the anti-malaria drug to the bed nets. The current work doesn't use bed nets, but it tests the approach -- and suggests it is worth pursuing further.

One can imagine limitations of this approach; the authors discuss several of them. It's not offered as "the answer", but as a step toward one more tool in the battle against mosquito-borne disease.

Some details...

- The malaria parasite studied here is Plasmodium falciparum.

- The mosquito is Anopheles gambiae.

- The drug ATQ is an inhibitor of mitochondrial cytochrome b.

News stories:

* Promising New Bed Net Strategy To Zap Malaria Parasite In Mosquitoes. (J Lambert, NPR, February 27, 2019.) Good perspective.

* Malaria Bed Nets Could Hold Disease Cure. (GEN, March 12, 2019.)

* News story accompanying the article: Medical research: Malaria parasite tackled in mosquitoes. (J Hemingway, Nature 567:185, March 14, 2019.)

* The article: Exposing Anopheles mosquitoes to antimalarials blocks Plasmodium parasite transmission. (D G Paton et al, Nature 567:239, March 14, 2019.)

A recent post about mosquito control: What if one gave appetite-suppressing pills to mosquitoes? (March 15, 2019).

A post that discusses the role of bed nets: Can chickens prevent malaria? (August 12, 2016).

More anti-malaria posts:

* Biological control of mosquitoes, using a modified fungus (July 8, 2019).

* Artemisinin: an improved source? (June 4, 2019).

There is a section of my page Biotechnology in the News (BITN) -- Other topics on Malaria. It includes a list of related Musings posts, including posts more generally about mosquitoes.

Air pollution: progress towards a process for ammonia oxidation

April 5, 2019

Ammonia is a very noticeable pollutant. It can be removed by oxidation, but that can yield various products. It would be nice to oxidize ammonia (NH3) to nitrogen gas (N2). (The H? It will end up as water.) But some oxidation conditions lead to oxides of nitrogen, such as N2O. (Oxides of N are sometimes collectively called NOx.) That's not so good; we have just traded one pollutant for another. Another consideration is temperature (T). At least for some purposes, it would be ideal to be able to get rid of ammonia at room T.

That is, what we want is a process to oxidize NH3 to N2 at room temperature.
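For reference, the overall equations behind those two outcomes can be checked by counting atoms. The sketch below is just bookkeeping. The equations are standard stoichiometry rather than anything taken from the article, and N2O stands in here for the unwanted oxides.

```python
from collections import Counter

# Atom counts per molecule.
SPECIES = {
    "NH3": {"N": 1, "H": 3},
    "O2": {"O": 2},
    "N2": {"N": 2},
    "N2O": {"N": 2, "O": 1},
    "H2O": {"H": 2, "O": 1},
}

def atom_totals(side):
    """Total atoms of each element on one side, given {species: coefficient}."""
    totals = Counter()
    for species, coeff in side.items():
        for element, n in SPECIES[species].items():
            totals[element] += coeff * n
    return totals

def is_balanced(reactants, products):
    """True if both sides contain the same number of each kind of atom."""
    return atom_totals(reactants) == atom_totals(products)

# Desired: 4 NH3 + 3 O2 -> 2 N2 + 6 H2O
desired = ({"NH3": 4, "O2": 3}, {"N2": 2, "H2O": 6})
# Unwanted: 2 NH3 + 2 O2 -> N2O + 3 H2O
unwanted = ({"NH3": 2, "O2": 2}, {"N2O": 1, "H2O": 3})

print(is_balanced(*desired), is_balanced(*unwanted))
```

Per molecule of NH3, the unwanted route consumes more oxygen (1 O2 versus 0.75 O2), which fits the general idea that harsher oxidation conditions push toward the nitrogen oxides.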

Here are some results from a new article...

The two frames show results from a series of experiments testing four possible catalysts for the reaction, over a range of temperatures. (The T-scale, on the x-axis, is the same for both frames.)

The left frame (part a) shows the percent conversion of the NH3. Three of the catalysts show significant conversion even at the lowest T (about 20 °C). Two (red and green circles) show about 20% conversion at that T. All four of them give (near) 100% conversion at higher T. The black curve at the bottom is for an ineffective material. (We'll come back to what the catalysts are in a moment.)

The right frame (part b) shows what is called the selectivity of the reaction: what percentage of the product is the desired N2. All of the catalysts show near 100% selectivity, making only N2, at low T -- up to about 100 °C. One of them (red circles) continues to make (almost) only N2 even up to about 200 °C.

This is Figure 2 from the article.
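The two frames combine in a simple way: the fraction of the incoming NH3 that ends up as N2 is the conversion times the selectivity. A small sketch, using the approximate room-temperature values described above (about 20% conversion, near 100% selectivity). The second call uses made-up numbers, purely to show why both measures matter.

```python
def n2_yield_percent(conversion_percent, selectivity_percent):
    """Percent of the incoming NH3 that leaves as N2."""
    return conversion_percent * selectivity_percent / 100

# Approximate room-temperature values for the better catalysts.
room_temperature_yield = n2_yield_percent(20, 100)

# Hypothetical case: everything is converted, but only half of it to N2.
hypothetical_yield = n2_yield_percent(100, 50)

print(room_temperature_yield, hypothetical_yield)
```

A catalyst with high conversion but poor selectivity would simply be trading ammonia for nitrogen oxides, which is why the article reports both.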

Those are encouraging results. They suggest that we can convert NH3 selectively to N2, with minimal NOx, over a wide range of T. And that we can achieve modest rates for doing so even at room T.

So what are these catalysts? They are based on gold nanoparticles, on a support of niobium oxide, Nb2O5. There are three physical forms of niobium oxide, shown by the suffixes DO, T and A; see the key in part b. The one sample without gold is the ineffective control at the bottom of part a. And there are two different loadings of the Au onto the Nb2O5: 1% (in most cases) and 2.5% (the red circles).

The suffixes for the forms of niobium oxide stand for amorphous (A), orthorhombic (T), and deformed orthorhombic (DO).

The niobium oxide should formally be called niobium(V) oxide.

Not only does the Nb2O5 support work in general, there is a clear ranking of the forms. Nb2O5-DO is the best.

The niobium oxide has different kinds of acidic sites. Investigation suggested that some of those acidic sites were especially important for initiating the reaction pathway that led to the desired product N2. In particular, Brønsted acid sites and Lewis acid sites seem to promote different pathways of oxidation. Such information may be useful in further catalyst development.

This work could open a pathway towards developing simple canisters for removing ammonia pollution from buildings or other localized environments with high ammonia levels. Further work is needed. The 20% conversion seen at room T is encouraging but inadequate for a real process. (There is nothing in the current article about the lifetimes of the catalyst, which would be an important consideration for its cost.)

News story: Breakthrough in air purification with a catalyst that works at room temperature. (Nanowerk News (Tokyo Metropolitan University), March 23, 2019.)

The article: Role of the Acid Site for Selective Catalytic Oxidation of NH3 over Au/Nb2O5. (M Lin et al, ACS Catalysis 9:1753, March 1, 2019.)

More about niobium...

* Would NbP be better than Cu? (March 5, 2025).

* Windows: independent control of light and heat transmission (February 3, 2014).

A post about the opposite reaction: Using light energy to power the reduction of atmospheric nitrogen to ammonia (May 20, 2016).

A recent post about (non-enzymatic) catalyst development: Breaking C-F bonds? (October 26, 2018).

More gold...

* A light-activated coating that can kill bacteria on surfaces (July 14, 2020).

* Prospecting for gold -- with help from the little ones (March 1, 2013). Includes a list of gold-related posts.

* A simpler assay for detecting low levels of HIV, using gold nanoparticles (January 3, 2013).

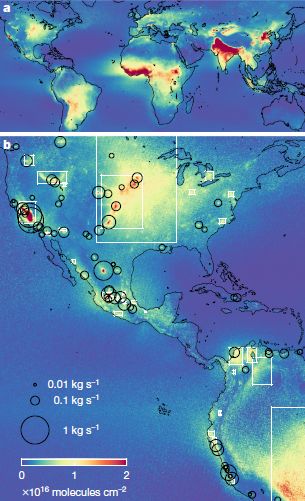

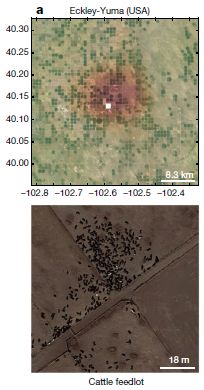

More about ammonia pollution: Global map of ammonia emissions, as measured from space (January 22, 2019).

April 3, 2019

Briefly noted...

April 3, 2019

Who is eating the world's biggest organism? Perhaps more interesting for the moment... What is the world's biggest organism? Its name is Pando. It may weigh as much as six million kilograms; it covers about 43 hectares (just over 100 acres) in the US state of Utah. To a casual visitor, it looks like a grove of trees. However, biologists have evidence that all the "trees" are part of one large organism, genetically identical "sprouts" off a common root system. Plants do things like that; it's not so much that Pando does novel biology, just that it is big. Unfortunately, Pando is an herbivore's delight.

* Two news stories, focusing on different issues...

- Massive organism is crashing on our watch -- First comprehensive assessment of Pando reveals critical threats. (Science Daily (Utah State University), October 17, 2018.) Links to the article, which is freely available.

- Pando, the World's Heaviest Organism, is an Ever-Growing Witness of an Ancient Earth. (Naturalis Historia, September 18, 2018.) Focuses on the history and nature of Pando. Links to much information, including the current article, which is Rogers & McAvoy (2018).

What happens to a snow-based water supply as the climate warms?

April 2, 2019

In California, there is a poor match between water supply and demand. A major part of the supply is from snow, which falls largely in the mountains in the north and east of the state -- in the winter. Water usage is greatest in the regions with high levels of agriculture or population, in the central and western parts of the state -- all year (but especially in the summer).

The state has complex systems for managing its water; included are numerous large dams. For our story here, the purpose of the dams is to store water. But the dams are only one part of the water storage system.

A recent article looks at what will happen to a snow-based water supply upon global warming.

The article is about the California water supply, but we will minimize the local details, and emphasize the main general point.

The graph shows the snowpack in the mountains (y-axis) vs time of year (x-axis), for three different time periods.

The top curve (black) is the typical current snowpack. The other two curves are modeling estimates for the snowpack at about mid-century (orange) and the end of the century (red).

The results summarized here are averaged over several climate models and over the state as a whole. Results from individual models are shown in the article; qualitatively, they are all similar. The full analysis in the article also shows results for several regions throughout the state, examining, for example, the role of elevation.

This is the upper left frame of Figure 1 from the article.

The main observation is simple: the snowpack will decrease as the climate warms. Also, the peak snowpack comes a little earlier.

Why? The warming may affect the amount of precipitation. In addition, it may affect how the precipitation is received, with more of it coming as rain rather than as snow. (The article here focuses on the snowpack itself, and does not address how much of the missing snow will be replaced by rain.)

Does it matter? The snowpack is itself a storage device. It collects water during the winter, and releases it during the spring.

Planning of water systems, including dams, takes the storage effect of the snowpack into account. Global warming will require rethinking the water storage system, even if the amount of precipitation doesn't change. (Dams, of course, are also designed to prevent flooding during the melt part of the cycle. That's not relevant to the main issue here.)

Global warming may affect our water supply by changing not only the amount but also the form of precipitation. In California, or anywhere else with a snow-based water system.

News stories:

* The Changing Character of the California Sierra Nevada as a Natural Reservoir -- Understanding how mountain snowpack may change upstream of California's major surface reservoirs. (A D Jones, Earth and Environmental System Modeling, US Department of Energy, December 7, 2018. Now archived.) A brief summary from one of the authors.

* Sierra Snowpack Could Drop Significantly By End of Century -- Berkeley Lab working with water managers to produce "actionable science". (J Chao, Lawrence Berkeley National Laboratory, December 11, 2018.)

The article: The Changing Character of the California Sierra Nevada as a Natural Reservoir. (A M Rhoades et al, Geophysical Research Letters 45:13008, December 16, 2018.)

More about California's water and mountains: Groundwater depletion in the nearby valley may be why California's mountains are rising (June 20, 2014). Links to more, including some more generally about water resources.

More about atmospheric rivers: Atmospheric rivers and wind (May 9, 2017). A substantial fraction of California precipitation comes from "atmospheric rivers", which are exceptionally wet storms that come in from the tropical Pacific. As that might suggest, these storms are also rather warm; whether they yield snow or rain in the mountains is quite sensitive to the ambient temperature -- and therefore quite sensitive to climate change.

More about the effect of global warming on snowfall: Is Arctic warming leading to colder winters in the eastern United States? (May 11, 2018).

The genetic basis of why parrots seem so human

March 31, 2019

One feature that makes the parrots stand out among the birds is shown in the following figure...

The graph shows the life expectancy (y-axis) vs size (x-axis) for a number of birds. It's a log-log scale, but don't worry about that.

Life expectancy here seems to be longest life observed. (The details behind the graph are actually in other work.) That is, the intent here is to show the potential of each bird, not its average survival success. This graph is for birds in captivity. The full figure contains a similar graph for birds in the wild. We won't consider it here; it doesn't impact anything below.

The main point is that there is a general trend: bigger birds tend to have longer life expectancies. And then there are a few birds that are above the main range. Four of them are shown here, each marked with an asterisk.

Those are long-lived birds. And three of the four long-lived birds shown here are parrots. (The pigeon is not a parrot.)

The authors define long life expectancy here as being more than 20% above what is expected from the weight. The upper dashed line shows the cut-off.

This is Figure 1C from the article.
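The cut-off idea can be sketched as follows, with the assumptions flagged loudly. The trend is taken to be a power law (a straight line on a log-log plot), and "20% above" is read as 1.2 times the expected value on the ordinary scale. The coefficient, the exponent, and the example birds are invented placeholders, not values from the article.

```python
# Hypothetical power-law trend: expected_lifespan = A * mass_g ** B.
A = 5.0       # placeholder coefficient (years), not from the article
B = 0.2       # placeholder exponent, not from the article
CUTOFF = 1.2  # "more than 20% above" the expectation, read on a linear scale

def expected_lifespan(mass_g):
    """Lifespan predicted from body mass by the placeholder trend."""
    return A * mass_g ** B

def is_long_lived(mass_g, observed_years):
    """True if the observed maximum lifespan lies above the cut-off line."""
    return observed_years > CUTOFF * expected_lifespan(mass_g)

# Invented examples: (mass in grams, maximum observed lifespan in years).
print(is_long_lived(400, 60))  # far above the trend for its size
print(is_long_lived(400, 17))  # close to the trend
```

If the authors instead measure the 20% in log units, the cut-off line would sit in a different place, so this is only one reading of the definition.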

As a group, parrots are long-lived -- as compared to other birds of similar size. In fact, the blue-fronted Amazon parrot (Amazona aestiva) shows the biggest discrepancy on the graph of any of the birds here. It has almost the longest life of any bird here, just a bit less than the much-larger ostrich (which lives considerably less than expected).

A recent article reports sequencing the genome of the blue-fronted Amazon parrot. It is the most complete parrot genome so far. Much of the article, then, is about looking for genes involved in longevity. This involved comparing this genome with what information is available for other birds, with both normal and long life expectancy. The authors also focus on another trait that distinguishes the parrots: their cognitive skills.

The article leads to a list of genes (and other sequences, such as regulatory sites) that appear to correlate with long life expectancy or cognition. Many of the genes found here are new in this context. Not all of these candidates will turn out to be significant; this kind of correlational work generates candidates for further study.

The title of the post suggested a broader comparison than simply the parrots among birds. It turns out that some of the genes that make the parrot distinctive among the birds match those thought to be involved in longevity and cognition for humans. Parrots and humans are not closely related. That they may share certain solutions is an example of convergent evolution, where more than one organism has independently "discovered" the same solution to a problem.

There is a fair amount of speculation or even hype in the commentary about this article. Genome articles tend to do that. There are a lot of facts, largely poorly understood. And yet, genome analysis ultimately will tell us so much. Seeing parallels between the parrots and us is just for fun and a little perspective for now. But the article is a step toward understanding another fascinating group of organisms. It may also lead to some general ideas about how cognition develops.

News stories:

* Parrot Genes Reveal Why the Birds Are So Clever, Long-Lived. (M Solly, Smithsonian, December 10, 2018.)

* Parrot genome analysis reveals insights into longevity, cognition -- Genome of blue-fronted Amazon parrot compared with 30 other long-lived birds. (Science Daily (Carnegie Mellon University), December 6, 2018.)

The article: Parrot Genomes and the Evolution of Heightened Longevity and Cognition. (M Wirthlin et al, Current Biology 28:4001, December 17, 2018.)

A post about parrot virtues... Bird brains -- better than mammalian brains? (June 24, 2016). Links to more.

More parrots: Briefly noted... How parrots use social media (June 27, 2023).

There is more about genomes on my page Biotechnology in the News (BITN) -- DNA and the genome. It includes an extensive list of related Musings posts.

My page for Biotechnology in the News (BITN) -- Other topics includes sections on Aging and Brain (autism, schizophrenia). Each of those includes a list of related posts.

The smallest tweezers -- for pulling single molecules out of cells

March 30, 2019

Look...

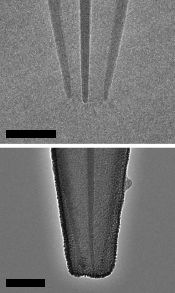

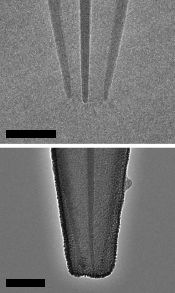

Two images of a newly designed set of tweezers, as described in a recent article.

The main thing you need to know for now is that the scale bars are 20 nanometers. The images here are from electron microscopy.

This is part of Figure 1b from the article.

Tweezers? The article uses the term tweezers in a general sense: a device to pick up something small. This device may look more like a pipet or eye-dropper, which can play the same role in retrieving a small item from liquid. But it doesn't work that way; it doesn't suck.

How does it work? It works by using an electric field to attract small things, which bind at the tip (rather than inside). The top picture, above, shows the basic structure; it is made of quartz. The bottom picture shows it after deposition of the carbon electrode material.

What is it for? Retrieving little things from inside cells. The scientists guide the tweezers to a desired site, watching what they are doing with a microscope. (The sample may be stained, to help them see particular kinds of molecules or structures.) They then push the tip through the cell membrane, turn on the electric field, and capture the desired object. The cell membrane recovers just fine, and the cell seems generally fine. In fact, one important point is that the same cell can be sampled over and over.

What have they done so far? Retrieved pieces of DNA -- and shown that they are intact and unaltered. Retrieved proteins -- and even mitochondria. The work so far is with cells in culture dishes. It should be possible to apply the method to cells in animals.

The current article presents a new tool. There are no findings of significance here. We now await "real" use of the new tool.

News stories:

* Electro-tweezers let scientists safely probe cells -- They allow repeated sampling of materials from the same living cell over time. (M Temming, Science News Explores (formerly Science News for Students), December 10, 2018.)

* Nanoscale tweezers can perform single-molecule 'biopsies' on individual cells. (Phys.org (H Dunning, Imperial College London), December 3, 2018.)

* Nanotweezers Allow For Extraction of Single Molecules From Living Cells. (Bioscription, December 9, 2018.) Includes useful perspective.

Video: Nanoscale Tweezers for Single Cell Biopsies. (Promotional video from the journal and the authors; 3 minutes; narrated. At YouTube.) Useful overview of the work, despite the immaturity of the narrators. (You can figure out who they are, I think, from the credits page at the end of the video. Maybe it is just that the narrator image is distracting.)

The article: Nanoscale tweezers for single-cell biopsies. (B P Nadappuram et al, Nature Nanotechnology 14:80, January 2019.)

More tweezers: The golden ear: A nano-ear based on optical tweezers (July 13, 2012).

More about examining the insides of a cell: Where is the hottest part of a living cell? (September 23, 2013).

Also see:

* Clippane (March 7, 2022).

* How to fill a small pipet (February 24, 2020).

March 27, 2019

Transmission of mitochondria from the father -- in humans

March 26, 2019

It's well known... Mitochondria are transmitted only by the mother.

There are some exceptions, especially with "simple" organisms. Occasional examples of transmission of paternal mitochondria have been reported in higher animals (mice and sheep). But for humans, the story has remained nearly absolute (with occasional reports of exceptions usually considered as lab errors).

Until now. A recent article reports studies of three unrelated families in which there are multiple cases of paternal mitochondria being transmitted, at high levels.

The following figure shows a genealogy chart for one of those families...

The chart shows individuals from four generations, numbered at the left I to IV. The black symbols are for individuals who carry both paternal and maternal mitochondria, as judged by analysis of the mitochondrial DNA (mtDNA). There are four such individuals here.

There are two people in generation I. They are unrelated, so one has a 1-digit "name" and the other a 2-digit one. Since these numbers get re-used, an individual is designated by a two-part name, generation and individual. Thus the two parents are I-1 and I-10.

They have four children; each child has a single-digit name to show that they are a descendant from the previous generation. But they all have II in their name, showing that they are second generation. That is, the four children of the first generation parents are II-1 through II-4. Three of them have black symbols, meaning that they have mitochondria from both parents. High levels -- roughly half -- of their mitochondria are from each parent.

Each of those (1-digit) children has an unrelated (2-digit) partner. In particular, II-4, with unrelated partner II-40, has two children. One of those, III-6, also has biparental mitochondria.

Some of the symbols shown above are "hatched" (striped). The individuals with hatched symbols had a different, but related, condition. They had two types of mtDNA, but not the two expected by getting mtDNA from both parents. Instead, they had the two types from the parent who had biparental mitochondria. (In fact, in each case, that parent was female (circle symbols); thus the mitochondria here showed simple maternal transmission.)

This is Figure 1A from the article.

In summary, to see the main points...

- Focus on people with single-digit names; they are part of the main family lineage.

- There are four individuals with black symbols, meaning that they have mitochondria from both parents. All of these are descended from a male of the main family.

- There are other individuals with hatched symbols, meaning they have mitochondria from two parents of the main family, but from an earlier generation. All of these are directly from females of the main family. They did not themselves get mitochondria from their two parents, but they reflect an earlier such event.
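The naming scheme used in the chart can be sketched in a few lines of Python. This is only an illustration of the convention as described above, not anything taken from the article. The names `PedigreeId` and `parse_id` are made up for this sketch, and the rule "single-digit number means main family line" is an assumption based on the description of the chart.

```python
from dataclasses import dataclass

# Roman numerals for the four generations shown in the chart.
ROMAN = {"I": 1, "II": 2, "III": 3, "IV": 4}


@dataclass(frozen=True)
class PedigreeId:
    """A two-part name such as III-6: generation, then individual number."""
    generation: int
    number: int

    @property
    def main_lineage(self) -> bool:
        # Assumption: single-digit numbers mark the main family line;
        # two-digit numbers mark unrelated partners who married in.
        return self.number < 10


def parse_id(label: str) -> PedigreeId:
    """Split a label like 'II-40' into generation and individual number."""
    roman, number = label.split("-")
    return PedigreeId(ROMAN[roman], int(number))


# Individuals named in the text above.
for label in ["I-1", "I-10", "II-4", "II-40", "III-6", "IV-2"]:
    person = parse_id(label)
    role = "main family line" if person.main_lineage else "unrelated partner"
    print(f"{label}: generation {person.generation}, {role}")
```

The sketch covers only the labels; it does not encode who has biparental mitochondria, since the text identifies only some of those individuals by name.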

The main result is the evidence that some people have received mitochondria from the father, as well as from the mother. Similar data for two other families are presented in the article. It is the first clear documentation of biparental transmission of mitochondria in humans.

The scientists now estimate that transmission of paternal mtDNA may occur once in about 5,000 births.

Why did they do this work? It started with testing person IV-2 from the chart above; there was some suspicion that he might have mitochondrial disease. In fact, he did have an unusual combination of mtDNAs, but did not himself inherit those from his two parents. That is, the "index case" here pointed to something unusual in the family history.

That index case, IV-2, is noted with an arrow in the figure above. You can see that his box is hatched, indicating a mixture of mtDNAs. But further analysis showed that it was his mother and her father who actually received paternal mitochondria.

At this point, there is no indication of any pathology associated with having biparental mitochondria, either for that child or for any of the other black-symbol individuals in any of the families.

How does paternal transmission of mitochondria happen? The simple answer is that they don't know. Little is known about how paternal mitochondria are eliminated normally, and the details are thought to be different in different organisms.

It is interesting that paternal transmission may occur in consecutive generations. Three of the children in generation II, above, have paternal mtDNA. One of those is male (square symbol); he then shows paternal transmission to the following generation. Other examples were seen in the other two families studied. This may hint at a mutation that allows for such paternal transmission.

Could this be useful? Well, one might imagine... Mother has defective mitochondria. Why not turn on paternal transmission? It's plausible, but for now it is just a speculation. Studying what is behind the cases reported here may reveal how paternal transmission of mitochondria occurs, and may allow some control of the process.

News stories:

* Not Your Mom's Genes: Mitochondrial DNA Can Come from Dad. (K J Wu, WGBH (public television), November 26, 2018.) There is a small mix-up with some of the scientific details about the first case, but overall this is a useful story.

* Fathers Can Pass Mitochondrial DNA to Children. (A Azvolinsky, The Scientist, December 4, 2018.) Now archived.

* Opinion: The Central Dogma of Mitochondrial Genetics Needs Rewriting. (J D Loike, The Scientist, December 12, 2018. Now archived.) A discussion of the implications.

The article: Biparental Inheritance of Mitochondrial DNA in Humans. (S Luo et al, PNAS 115:13039, December 18, 2018.) Check Google Scholar for a freely available copy.

Update March 30, 2019...

Guess what... The current article has been challenged. The journal web page for the article notes, at the very top, that there is a letter there challenging the new finding, along with a reply from the original authors. The challenge offers another interpretation: that the mtDNA observed was actually integrated into the nuclear genome. In their reply, the authors argue against that suggestion, but they would agree that further data would help.

The challenge interpretation is itself interesting. There is precedent for finding mtDNA in the nuclear genome. (Historically, such transfer events must have occurred during mitochondrial evolution, but that is a different time scale.) But the specifics here make it seem an unlikely explanation for the current evidence.

It is a good dialog. Science proceeds by testing and rejecting odd ideas. But sometimes they are not wrong, and sometimes the testing itself leads to more that is of interest.

The challenge and the reply are each about one page, and fairly readable. You can get to them from the article web page in the post.

A post about the elimination of paternal mitochondria -- in a worm: How are mitochondria from the father eliminated? (September 20, 2016). The article discussed in this earlier post is reference 28 of the current article.

Another approach for dealing with mitochondrial disease: Tri-parental embryos for preventing mitochondrial diseases (September 23, 2016).

Another claim about mitochondria... Mitochondria in the blood? (April 5, 2020).

Role of a receptor for HIV in stroke recovery

March 23, 2019

This post is about recovery from a stroke, and the role of a protein called CCR5 in that recovery.

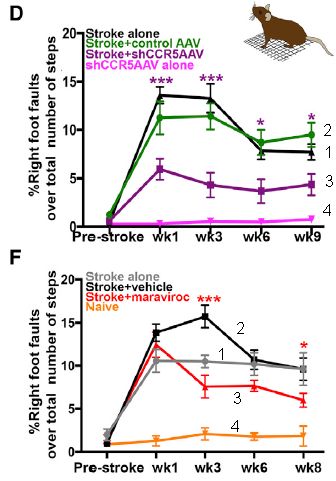

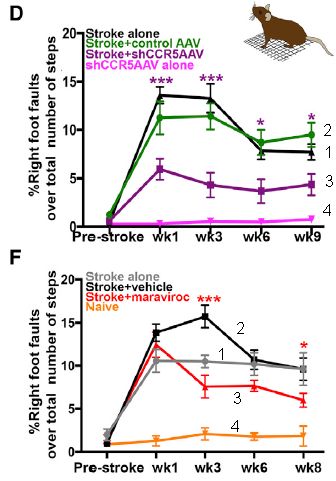

We'll start with some data showing that there is a connection -- and that we might be able to help...

The graphs show the results from two, related, experiments. We'll start by emphasizing the similarities.

In both cases, mice are given an experimental stroke. There is also a treatment, which is different for the two cases. The mice are tested for their physical agility. The y-axis is a measure of damage: foot faults. The x-axis is time: mostly, weeks after the stroke.

Curve 4 (bottom of each graph) shows the error rate of normal mice: near zero.

Curve 1 ("stroke alone") shows the effect of the stroke. In both cases, the stroke leads to major loss of agility: more foot faults.

Curve 3 ("stroke +" the treatment, the nature of which we have not yet described) shows the results for the treated mice. The treatment leads to improvement, as judged by this test. Curve 3 is below curve 1 at most time points following the stroke (7 out of 8).

Curve 2? It is for a mock treatment. It's hard to explain at this point, since I have not yet described the treatments. The point is that the qualitative statements above still hold.

The graphs have some asterisks on them. These are presumably to show that certain pairs of points test as significantly different. Oddly, the article does not seem to say exactly what the asterisks mean. It is a reasonable guess that they are for the significance of the points for curves 1 and 3.

This is slightly modified from parts of Figure 2 from the article. I have added numbers to the curves, for ease of referring to them.

Overall, the graphs suggest that the scientists are doing something that helps the mice recover from the stroke. The article contains other tests, including one of cognitive function. The general picture is the same as above.

So what's this about? CCR5. Both treatments involve reducing the activity of CCR5 following the stroke.

CCR5? You may have heard of it. It is the receptor for HIV. People lacking CCR5 are resistant to being infected by HIV. What does that have to do with stroke? Nothing. A virus receptor is often an "ordinary" protein that some virus has hijacked to use as a receptor to gain entry into cells. In the case of the HIV receptor CCR5, we know little about what the protein normally does.

What are the treatments shown above? Both treatments decrease the activity of CCR5 in neurons, but by different methods. The first uses a virus to deliver an RNA that inhibits the gene function -- and does so specifically in neurons, effectively knocking down the gene there. The second uses a drug treatment. Since CCR5 is involved in HIV infection, scientists have developed drugs that act at the first stage of infection, binding to CCR5. Thus the second test above (part F; bottom) shows that a drug we already have, developed for another purpose, may be useful in treating stroke. The drug is maraviroc.

The article also shows that reducing CCR5 activity helps with recovery from traumatic brain injury.

This is all in lab mice. Will it work in humans? The only way to know is to try it. That the drug used here is already an approved drug facilitates its testing for a new use.

It's an interesting lead.

A couple of questions that may occur to you...

We noted above that people lacking CCR5 are resistant to HIV. How do they do with strokes? The article includes some data showing that people lacking normal CCR5 recover better from strokes than do people with wild type CCR5. The data are limited at this point, but it is an intriguing claim.

What does CCR5 do? Why does blocking it affect stroke recovery? We don't really know. What seems to be happening is that the brain injury induces CCR5 activity, and that increased CCR5 reduces the formation of brain connections. You can see, then, why blocking CCR5 is good for recovery from stroke. But our understanding of CCR5 is very limited. We mainly know about its bad effects.

News stories:

* Human Gene Linked to a Better Recovery From Stroke. (Technology Networks (UCLA), February 22, 2019.)