
Musings: January-April 2014 (archive)

Musings is an informal newsletter mainly highlighting recent science. It is intended as both fun and instructive. Items are posted a few times each week. See the Introduction, listed below, for more information.

If you got here from a search engine... Do a simple text search of this page to find your topic. Searches for a single word (or root) are most likely to work.

Introduction (separate page).

This page, 2014 (January-April), by posting week: April 30, April 23, April 16, April 9, April 2, March 26, March 19, March 12, March 5, February 26, February 19, February 12, February 5, January 29, January 22, January 15, January 8.



Archive items may be edited, to condense them a bit or to update links. Some links may require a subscription for full access, but I try to provide at least one useful open source for most items.

Please let me know of any broken links you find -- on my Musings pages or any of my regular web pages. Personal reports are often the first way I find out about such a problem.

April 30, 2014

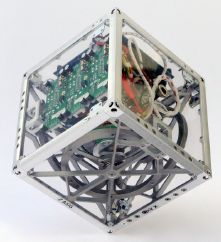

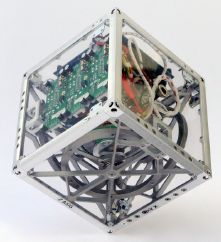

3D printing for space: a titanium woov, and more

April 29, 2014

There have been several recent posts on the emerging technology of 3D printing, or additive manufacturing, as it is also called. A recent story caught my attention in this context. It's not a scientific journal article at all, but a feature web page from the European Space Agency (ESA), describing potential applications of the technology for the space program. The page is both fun and instructive.

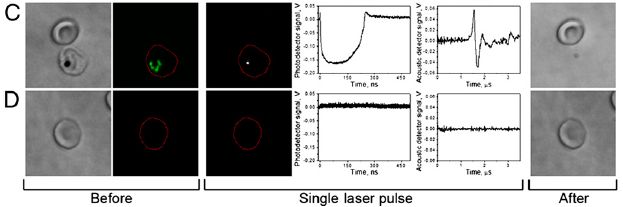


A titanium woov, made by 3D printing. It has been used in space.

This is part of the figure accompanying point 6 on the web page. That figure also shows a regular woov.


The web page: Ten ways 3D printing could change space. (ESA, April 15, 2014.) There is a list of 10 points, with many pictures included. (My favorite picture is the one with point 4, but it has no obvious scientific value.) If you don't get 10 points, you may get a "Continue" button. If you still don't get 10, try another browser. Strange page.

Most recent post about 3D printing: 3D printing: simple inexpensive prosthetic arms (January 29, 2014).

More:

* 3D printing: Make yourself a model of the universe (December 19, 2016).

* Can you make a 777 by printing it? (May 9, 2015).

More titanium: The major source of positrons (antimatter) in our galaxy? (August 13, 2017).

Salmonella and food contamination; the biofilm problem

April 28, 2014

Salmonella is a common food poisoning bacterium. It's one you can easily bring home - with a whole chicken. A new article addresses the issue of established Salmonella contamination in the environment. The main concern in this article is food processing plants, not the home, but the idea is the same. If allowed to stay, Salmonella grows on a surface in a complex structure called a biofilm. Importantly, the biofilm helps to protect the bacteria from antibiotics and disinfectants.

Here is an example of what the scientists did...

In this experiment, the scientists established model Salmonella biofilms on concrete surfaces. At two times during the development of the biofilms, they treated portions of the surface with various disinfectants, following standard food industry procedures. The table below shows how many bacteria were found after each treatment.

| Disinfectant | 2 day biofilm | 7 day biofilm |
|---|---|---|
| none | 4.3 x 10^7 | 5.5 x 10^9 |
| sodium hydroxide (1 M) | 0 | 5.2 x 10^7 |
| sodium hypochlorite (500 mg/liter) | 3.3 x 10^6 | 2.5 x 10^9 |
| benzalkonium chloride (0.02%) | 6.3 x 10^6 | 4.4 x 10^9 |

The data above are extracted from parts of Tables 3 & 4 from the article. The data shown here are for one strain and for 90 minutes of treatment, the longest time they used.

You can see that with the 2-day-old biofilm, only the 1 M sodium hydroxide was able to kill it. With the 7-day-old biofilm, no treatment was very effective.
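One way to make that comparison concrete is to express each treatment as a log10 reduction relative to the untreated biofilm, a common way of reporting disinfectant performance. The following is only a sketch using the numbers from the table in this post; the names `counts`, `log_reduction` and `summarize` are mine, not from the article, and the article's own analysis may differ.

```python
import math

# Bacterial counts copied from the table in this post
# (one strain, 90 minutes of treatment).
counts = {
    "none": {"2 day": 4.3e7, "7 day": 5.5e9},
    "sodium hydroxide (1 M)": {"2 day": 0.0, "7 day": 5.2e7},
    "sodium hypochlorite (500 mg/liter)": {"2 day": 3.3e6, "7 day": 2.5e9},
    "benzalkonium chloride (0.02%)": {"2 day": 6.3e6, "7 day": 4.4e9},
}


def log_reduction(untreated, treated):
    """Return the log10 drop in count relative to the untreated control.

    A count of zero is reported as infinity, meaning no survivors were found.
    """
    if treated == 0:
        return math.inf
    return math.log10(untreated / treated)


def summarize(table):
    """Compute the log10 reduction for every disinfectant and biofilm age."""
    baseline = table["none"]
    result = {}
    for name, by_age in table.items():
        if name == "none":
            continue
        result[name] = {
            age: log_reduction(baseline[age], count)
            for age, count in by_age.items()
        }
    return result


if __name__ == "__main__":
    for name, by_age in summarize(counts).items():
        for age, reduction in by_age.items():
            print(f"{name:38s} {age}: {reduction:.2f} log10 reduction")
```

On this scale, each whole unit is a ten-fold drop in the count. For the 7-day biofilm, even the best treatment (sodium hydroxide) gives only about a 2-log reduction, which still leaves tens of millions of bacteria.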

It is a dramatic demonstration of how tenacious bacteria can be if they get established; the biofilm is their way to "get established". The implication is that if a small amount of Salmonella gets onto a surface, it will quickly establish itself in a form that is difficult to eradicate, but which can still shed infectious bacteria into the plant.

The article has some limitations. For example, the measurements here are for a concrete surface, which seems like a material that would help bacteria stick. The authors note that such surfaces are common in food processing plants, so they are relevant. However, it would be nice to see what happens with smoother surfaces, such as stainless steel. (They do some work with such other surfaces, but don't do killing curves with them.) Second, they tested only one level of the disinfectants here, and it was chosen based on a quite different type of experiment. The level of bleach, for example, is quite low. We need to know whether higher levels could successfully penetrate the biofilm. These are difficult experiments to carry out, and what is reported is a useful contribution. The article adds to our appreciation of the difficulty of eradicating biofilms, but it is also known that biofilms vary.

News stories:

* Food processors beware: Salmonella biofilms incredibly resistant to powerful disinfectants. (Science Daily, January 15, 2014.)

* Salmonella Biofilms Extremely Resistant to Disinfectants. (Food Safety News, January 23, 2014.) This contains a photo showing biofilms on various kinds of surfaces. It shows that biofilms vary. (The source of the photo is not stated; it's not from the article.)

The article: Commonly Used Disinfectants Fail To Eradicate Salmonella enterica Biofilms from Food Contact Surface Materials. (M Corcoran et al, Applied and Environmental Microbiology 80:1507, February 2014.)

A post about food contamination, including Salmonella: Killer chickens (December 2, 2009). Links to several related posts, on a range of food contamination issues.

More about biofilms -- for better or worse...

* Does E coli grow in a multicellular form? (January 31, 2023).

* Shark skin inspires design of a new material to reduce bacterial growth (March 13, 2015).

* Killing persisters -- a new type of antibiotic (January 3, 2014).

* On sharing electrons -- II (June 9, 2013). Remember, biofilms are part of nature.

Also see: Perchlorate on Mars surface, irradiated by UV, is toxic (July 21, 2017).

My page for Biotechnology in the News (BITN) -- Other topics includes a section on Antibiotics. It includes a list of Musings posts on the topic, including disinfectants.

Do elephants suffer long term harm if their social groups are disrupted by human intervention?

April 27, 2014

Elephants live in complex societies. How well do the animals themselves understand their society? What happens if the social structure is disrupted? A recent article offers some interesting insights into such questions. Caution... The experiments and the results are interesting. The interpretation is less clear. There is considerable news hype about this work; it is not clear it is all justified at this point.

The basis of the work in the new article is comparing two populations of elephants -- comparing how they respond to socialization tests administered by the scientists. Here is an example...

The graph shows a behavioral response of two groups of elephants. Before we get to the details, note that the two graphs are different: one shows a trend, whereas the other does not. Something is going on. Let's look at what is being measured.

In this test, family groups of elephants were observed while recorded elephant calls were played to them. The calls were from elephants of different ages, representing different levels of social dominance. This is the "age" referred to along the x-axis. What's observed is how the family groups hearing the sounds bunched together, in what is considered a defensive response; the y-axis shows this as the "probability of bunching". The two groups of elephants studied here are in two national parks: Amboseli (Kenya) and Pilanesberg (South Africa); the labels at the top of the two frames identify the elephant populations.

You can see from the graphs that the elephants at Amboseli (frame A) behaved differently depending on the age of the elephants whose calls they heard. In particular, they bunched more in response to calls from older elephants; this is the expected behavior. In contrast, the elephants at Pilanesberg (frame B) did not distinguish the social significance of the elephants whose calls they heard.

This is Figure 2 Parts A & B from the article.


Why are the elephants at Pilanesberg less responsive to social cues? The authors interpret the work in terms of the history of the two elephant populations. Amboseli is considered a rather undisturbed park. In contrast, Pilanesberg was populated by elephants orphaned during culls in another park during the 1980s and 1990s. The authors suggest that the poor response of the Pilanesberg elephants is due to the severe social trauma that some of their members suffered 30 years ago.

The article used interesting methodology to probe elephant social structure, and the possible effect of social trauma caused by human intervention. Are there alternative ways to explain the results? What they claim is important if true, and it is "reasonable". However, I would be cautious about the interpretation; it's easy to think of other factors that might be involved. The scientists study two different populations of elephants, and assume that the key difference, the one responsible for the observed behavior, is the one they note. Perhaps, but that is an assumption. The authors do provide considerable discussion of other work that may be relevant, but it does not address this concern.

We need to distinguish how we might interpret this for immediate application to policy and how we understand it as science. There may well be enough here that we want to act with more caution in disturbing natural populations. That's fine. However, it is another matter to claim that we understand what is going on. This is a scientific paper; we should judge it by high scientific standards. We should distinguish whether an article provides conclusive proof of something, or whether it "merely" leads to an interesting hypothesis. We also have to accept that we make policy decisions on the basis of imperfect information.

News stories:

* Orphan Elephants Lack Social Knowledge Key for Survival -- Psychological impact from loss of family structure parallels PTSD in people. (Christy Ullrich Barcus, National Geographic Society, October 31, 2013.) Now archived.

* Mass Killings Can Haunt Elephants for Decades. (Science Now, November 1, 2013.)

The article, which is freely available: Effects of social disruption in elephants persist decades after culling. (G Shannon et al, Frontiers in Zoology 10:62, October 23, 2013.) A very readable article. I encourage you to look it over, especially the parts where they discuss the significance of the results.

Previous post about elephants... Was Linnaeus's original elephant African or Asian? (December 7, 2013).

Another psychology post: Can growing rice help keep you from becoming WEIRD? (July 22, 2014).

Another post about the effects of humans on other animals: Why male scientists may have trouble doing good science: the mice don't like how they smell (August 22, 2014).

The oldest known piece of cheese

April 25, 2014


The figure shows a mummy, about 3800 years old, from China. Around her neck are some pieces of cheese; some are marked with white arrowheads. The inset (lower right) shows an enlarged view of one piece of cheese.

This is from the abstract at the journal web site. It is probably equivalent to Figure 1D from the article. In the article, the scale bar for the inset has been partially cut off. There is a scale bar, 3 centimeters, for the main figure at the upper left; it's not easy to see, because of the colors.


It's the oldest known piece of cheese.

Why the interest in old cheese? Is there more to the story?

In a new article, scientists report that they can analyze some of the proteins in the cheeses from this archaeological site. They are able to reconstruct a recipe for how the cheese was made. The protein analysis shows that the cheese was made by fermentation with bacteria and yeast; there is no evidence for the use of enzymes from animals.

The process used for making this cheese was probably similar to the one now used to make kefir. The kefir process can give a milk product and a cheese, both low in lactose, a sugar that people in that part of the world digest poorly. Thus this cheese, found around the neck of a mummy, provides evidence that humans had learned to transform milk into a more digestible food nearly 4,000 years ago. The authors even speculate how learning to make cheese might have driven the development of cattle farming.

There are many levels here. The find of the mummy with cheese was something of an accident, though it is also a tribute to the Chinese archaeologists. The ability to analyze the proteins in the old cheese reflects leading edge technology and leads to some understanding of the cheese. The data are limited, but they lead to a story. Much of the story is rough, even speculation, but it may guide further work.

News story: Researchers reconstruct a cheese recipe from the Early Bronze Age. (Phys.org, March 12, 2014.) Good overview.

The article: Proteomics evidence for kefir dairy in Early Bronze Age China. (Y Yang et al, Journal of Archaeological Science 45:178, May 2014.) It's a fun paper to browse, even if you skip over the more technical aspects of the analyses.

To be clear... There has been evidence for cheesemaking earlier than this cheese, by a few thousand years. What's new here is having the cheese -- and being able to analyze it.

Musings notes old things from time to time. Most recently... Chromosomes -- 180 million years old? (April 18, 2014).

and old proteins... Dinosaur proteins (July 6, 2009).

and old milk...

* Old baby bottles (November 11, 2019).

* Got milk? (October 13, 2008).

and more old food... The oldest known bread (August 28, 2018).

More about milk history... Barium, breast milk, and a Neandertal (June 17, 2013).

More cheese...

* Cheese-making and horizontal gene transfer in domesticated fungi (January 19, 2016).

* What's the connection: blue cheese, rotten coconuts, and the odorous house ant? (August 24, 2015).

More dairy... A clinical trial of ice cream (June 2, 2015).

More mummies: How to make a mummy (May 7, 2023).

April 23, 2014

The role of a government science adviser

April 22, 2014

I recently came across a delightful little "Comment" piece by the chief science adviser to the Prime Minister of New Zealand. What's it about? It's about what it means to be a science adviser to a government.

We have a science adviser to the President in the US. The role -- and the power -- of the adviser varies, depending on various circumstances, including the instincts of the President toward science. It was interesting to read the perspective of an equivalent in another country.

I encourage you to have a look.

The article, which is freely available: The art of science advice to government. (Peter Gluckman, Nature 507:163, March 13, 2014.) The subtitle: "Peter Gluckman, New Zealand's chief science adviser, offers his ten principles for building trust, influence, engagement and independence."

Another government science adviser: Science in the White House (June 11, 2009).

Also see: US Presidential candidates weigh in on science questions (October 18, 2016).

Targeting growth factors to where they are needed

April 21, 2014

Growth factors do what the name implies. One might think that using growth factors could be useful in promoting healing of wounds. However, it has proven difficult in practice, because it is hard to target the growth factors to where you want them to act.

A new article tries a new approach to targeting the growth factors, and reports some encouraging results.

Wounds are accompanied by exposed extracellular matrix (ECM). We can think of ECM as a type of structure that is around cells; it includes some common proteins, such as collagen. Growth factors typically work after binding to ECM. In the new work, scientists decided to try targeting the growth factors they wanted to use to the exposed ECM. More precisely, they wanted to enhance the binding to make it helpful during therapeutic use of growth factors.

The work had two phases. The first was to learn how to target things to the ECM. The second was to apply that to targeting growth factors in a wound-healing test.

Here is an example of what they found in the first phase. They tested many proteins, listed along the left side of the following figure. (Many have GF, for growth factor, in their name.) They tested them to see how well they bound to various ECM proteins, listed across the top. The familiar collagen is one of those they tested. In the figure, the length of each bar is a measure of how well the protein at the left bound to the particular ECM protein.

There is no need to look at details. All we need is the general pattern.

You can see that the results vary: there are long bars and there are short bars. Of particular interest is PlGF-2, which they have highlighted with reddish bars. It binds well to all the ECM proteins tested. That's what they wanted. They chose to use PlGF-2 (placenta growth factor-2). They were then able to find a small portion of this protein that was responsible for the binding to ECM.

This is Figure 1 from the article.


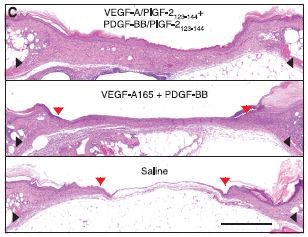

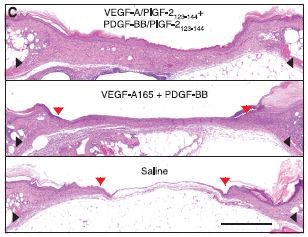

Here is an example of trying to use PlGF-2 to target growth factors. The test involves looking for healing of controlled wounds made in the skin of mice. The mice used are mutant mice, with poor wound healing. The growth factor treatment is applied directly to the wound. The pictures in the following figure show what the wounds look like after 15 days of healing with various treatments.


In the bottom frame, the "treatment" is a control; only saline solution was applied. In the middle frame, two growth factors were applied. In the top frame, these same two growth factors were used, but in a modified form: both were engineered to include the part of PlGF-2 that provided targeting to the ECM.

The general pattern is clear... The growth factors (middle frame) promote more healing than does the saline, and the targeted growth factors (top frame) are even better.

This is Figure 2c from the article. The scale bar (lower right) is 1 millimeter.


So, the scientists proposed targeting growth factors to where they are needed, they worked out a way to do that, and they showed that it works in a simple mouse model. It's a long way to the clinic, but this is an interesting article, worth pursuing.

News stories:

* Engineered 'Glue' Called Growth Factors Helps Heal Wounds Faster. (Medical Daily, February 22, 2014.) This is a useful brief overview of the work. However, there may be some confusion about their term "glue". Growth factors are normal proteins. What is new here is to work out a way of attaching them where they are needed; that is the "glue" idea.

* Bioengineered growth factors lead to better wound healing. (MedicalXpress, February 20, 2014.)

The article: Growth Factors Engineered for Super-Affinity to the Extracellular Matrix Enhance Tissue Healing. (M M Martino et al, Science 343:885, February 21, 2014.)

More about growth factors: Is it possible that mental retardation could be prevented by a simple prenatal treatment? (January 14, 2013).

More about the ECM: Zebrafish reveal another clue about how to regenerate heart muscle (December 11, 2016).

More about wound healing...

* An improved bandage, based on superhydrophobic carbon fibers (January 21, 2020).

* Fixing the heart with some glue and light (July 27, 2014).

* Smart sutures (November 3, 2012).

* On a new method of treating compound fracture... (July 11, 2012).

* Print yourself new body parts (April 16, 2010).

And a wound that did not heal... To kill a mastodon (November 15, 2011).

Development of insects resistant to Bt toxin from "genetically modified" corn

April 19, 2014

Bt toxin is an insecticidal protein, originally from the bacterium Bacillus thuringiensis (nicknamed "Bt"). Plants have been genetically modified to contain Bt toxin. Such plants are resistant to certain insects; the use of the plants means that it is not necessary to apply insecticides against those insects. (There are actually various types of Bt toxin, but we won't worry about that here.)

The question at hand is whether insects will develop resistance to Bt toxin after exposure to plants with Bt toxin.

The following figure illustrates the phenomenon, as reported in a new paper.


The bars on the graph show the survival of western corn rootworm larvae when exposed to corn (maize).

There are four bars, representing all possible combinations of two types of worm larvae and two types of corn.

You can see that three of the four bars are relatively high (high survival), and one is low (low survival). The low bar is for "normal" worms on Bt corn; that is, Bt corn reduces the survival of normal worms. That's what Bt corn is supposed to do.


The high bars? Two of them are for corn without Bt. Those are controls; we expect that worm survival is high without Bt. But one high bar is with Bt corn... it tests worms that had been isolated off of a recent crop of Bt corn. This bar shows that these worms have developed resistance to Bt toxin.

If you want to work through the graph carefully... The two types of corn are labeled at the bottom. They differ in whether or not they contain a gene for Bt toxin. One corn has no Bt toxin; it is labeled "Bt absent". The other corn has a Bt toxin; it is labeled "mCry3A", for the particular Bt toxin.

The two types of worm are denoted by the two bar colors. The dark bars (at the left of each pair) are for worms from a recent population recovered from a Bt corn crop. The light bars (right of each pair) are for "control" (or "normal") worms.

The A at the top of the three high bars? It means that those three results are statistically the same.

This is Figure 2B from the article.


That insects are developing resistance to Bt corn is neither new nor surprising. (What is new here is that the resistance is expanding; worms that developed resistance to one form of Bt toxin are showing cross-resistance to another form.) In fact, there are precautions that are to be followed to reduce the chances of development of resistance. They aren't being followed. The problem was predictable; it's now in front of us. Perhaps it would be good to follow the precautions?

Lest we over-interpret that... There is no guarantee that we understand the problem completely or that the declared precautions are adequate. There certainly is room for further understanding, which will come with experience. The point is that we aren't fully using the understanding we do have.

News story: Pests worm their way into genetically modified maize. (Nature News, March 17, 2014.)

The article: Field-evolved resistance by western corn rootworm to multiple Bacillus thuringiensis toxins in transgenic maize. (A J Gassmann et al, PNAS 111:5141, April 8, 2014.)

Recent post about a genetically modified crop plant: Rotavirus: passive immunization via food (January 10, 2014).

More about resistance to Bt toxin:

* Alternative microbial sources of insecticidal proteins (December 9, 2016).

* Resistance to Bt toxin: What next? (July 15, 2016).

For more on GM crops, see my Biotechnology in the News (BITN) page Agricultural biotechnology (GM foods) and Gene therapy.

More about Bt toxin: How to administer Bt toxin to people? (May 16, 2016).

More about corn...

* What can we learn from a five thousand year old corn cob? (March 21, 2017).

* Atmospheric CO2 and the origin of domesticated corn (February 14, 2014).

More about worms... Extending lifespan -- five-fold (January 12, 2014).

Chromosomes -- 180 million years old?

April 18, 2014

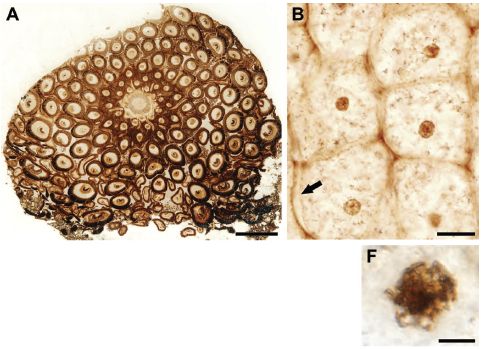

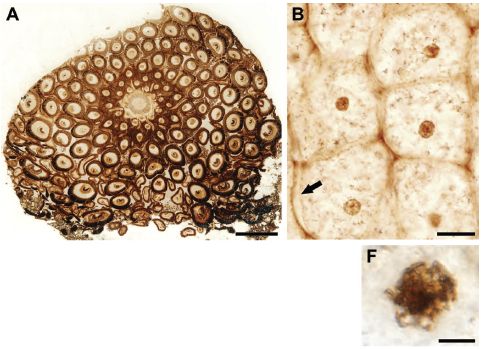

At the right are some pictures of part of a fern, from a recent article.

Part A (upper left) shows a cross section from the rhizome (root-like) structure. You can see individual cells. For example, there are two cells in the top "row", and six in the second row (depending on what you call a row there). There are small dark regions in most of the cells; these are nuclei. Scale bar = 500 µm.

Part B (upper right) shows a group of six cells at higher magnification. You can see cell walls, and even perhaps a cell membrane (at the arrow). You can see nuclei, and some have nucleoli visible within them. Scale bar = 20 µm.


Part F (lower right) shows the nucleus of one cell at even higher magnification. This cell seems to be in the process of mitosis; with some imagination, you can see the condensed chromatin. Scale bar = 5 µm.

This is part of Figure 1 from the article. The parts above are independent; they are not different magnifications of the same regions. The full figure contains more parts. In particular, there are several pictures of cells in various stages of mitosis.


The fern shown above is 180 million years old; this is a fossil fern. Aside from the beautiful pictures, what is interesting here is that this looks very much like a modern fern. That motivated the scientists to do an additional test. They measured the size of the nucleus in cells of this fossil fern and of a similar modern fern. The following figure shows the results.


The graph shows the size distribution of nuclei found in the 180 million-year-old fossil fern (brown bars) and in the extant (modern) fern (blue bars).

Two properties of the nuclei are measured: perimeter (left) and area (right). (Of course, these are not independent features; the scientists are simply trying to measure what they see in two ways.)

This is part of Figure 2 from the article. I have added the labeling at the bottom.


The old and new ferns have the same size nuclei! Based on what is known about the size of nuclei of plant cells, it is likely that the old and new ferns have about the same amount of genetic material. For example... Genome duplications are common in plants; had there been a duplication between the old and new ferns, it should have been very obvious here.

There is no claim of finding DNA here, though there is a claim that we can see chromosomes. (If you look at many of the pictures, that's quite plausible.) Nevertheless, the scientists are able to offer some information about the genome of a 180-million-year-old fern. That's noteworthy.

News story: Spectacular fossil fern reveals Jurassic-era chromosomes -- Fern's genome appears to have been stable for at least 180 million years. (Ars Technica, March 21, 2014.)

The article: Fossilized Nuclei and Chromosomes Reveal 180 Million Years of Genomic Stasis in Royal Ferns. (B Bomfleur et al, Science 343:1376, March 21, 2014.)

Previous post about a fern: A new organelle "in progress"? (September 13, 2010).

More... Hybrid formation between organisms that diverged 60 million years ago (May 8, 2015).

More about cell division... A gene that reduces the chance of successful pregnancy: is it advantageous? (May 18, 2015).

A post about an ancient DNA sequence: The oldest DNA: the genome sequence from a 700,000-year-old horse (August 4, 2013). At the time of posting, this was the oldest reported genome sequence; it may still be. The current post is from material that is about 250 times older -- 180 million years old. It makes no claim to showing DNA, but it does make a claim about the nature of the genome.

And... A claim of finding dinosaur DNA (May 31, 2020).

There is more about genomes on my page Biotechnology in the News (BITN) - DNA and the genome. It includes an extensive list of Musings posts on sequencing and genomes.

Ancient proteins... Blood vessels from dinosaurs? (April 22, 2016).

More old things... The oldest known piece of cheese (April 25, 2014).

April 16, 2014

Can you see your hand in total darkness?

April 14, 2014

Try it. Go into an absolutely dark place (a closet, perhaps, with no light leaks). Close your eyes. Put on a perfect blindfold. Then move your hand in front of your face. Can you see it? The question is not whether you are aware of your hand in front of your face, but whether you think you see it. Some people say they can.

It's not easy to do that test just right. Perhaps there is a bit of light. One "control" is for two people to do this together. Move your hand in front of your face, then have your partner move his/her hand in front of your face. If you report that you can see your hand but not your partner's hand (even though it was in the same place doing the same thing), that is what we are talking about here.

What's going on? As noted, it is not a surprise that you know where your hand is. After all, it is your hand. What's a surprise is that you think you are seeing the hand. Your brain knows where the hand is; that's fine. However, for some reason it (your brain) reports to you that your eyes saw it. At least, that's what happens for some people.

Even with the control suggested above, this all seems quite subjective. Is it possible that someone could manipulate the results (consciously or otherwise)? A new article does further testing of the phenomenon, with that concern in mind.

In one set of experiments, two consecutive trials were done with the same people -- and with an attempt to trick them. In each trial, the participants were given a blindfold. However, they were told that one of the blindfolds would have small holes -- and that the other would give complete darkness. They were not told which was which. In fact, the two blindfolds were identical: both were light-tight. As a result, the experimental design might have created an expectation for Trial 2. For example, if the participants were somehow imagining hand motion in Trial 1, and thought that might be due to the light leak, they might report less in Trial 2.

Here are some of the results...

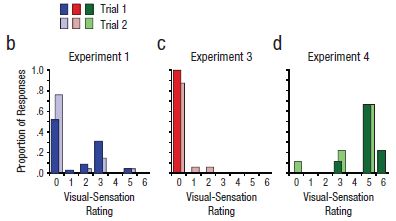

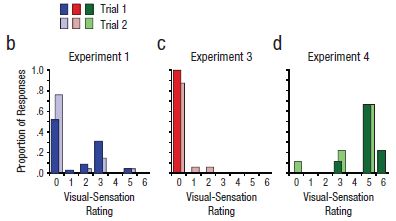


The basic design of the test is that a person was blindfolded and asked to move their hand in front of their face. (We'll note some variations of the basic design as we go.) They were then asked to tell what they "saw", which was coded on a scale from 0 to 6. This scale, called "Visual-sensation rating", is shown on the x-axis of the graphs. For example... 0 = no visual sensation at all, 3 = visual sensation of motion with direction, 6 = the visual shape looked like the outline of a moving hand.

The y-axis is the proportion of people who reported a particular score. A score of 3 or higher is considered to be significant.


A key part of the testing here was the blindfold, as noted above. By telling the participants that there were two different kinds of blindfold, they built a deception into the test. The participants thought that they were using different blindfolds for the two trials, and this might have led to a difference between the two trials.

A quick glance at the results for the three experiments, above, shows very little difference between Trial 1 and Trial 2 within each experiment.

The three experiments? Experiment 1 (frame b of the figure) was the basic test. You can see that some of the participants, say about 20%, reported seeing their hand (score 3 or higher). The people who reported a positive response in the two trials were largely (though not entirely) the same people. (There is, in fact, some trend toward less positive response in Trial 2; this may be a clue that what is being studied is complex.)

Experiment 3 (frame c) was done differently in one key regard. Instead of the participant moving their own hand in front of their face, the experimenter moved his or her hand in front of the participant's face. You can see that this resulted in almost no response (none meeting the cut-off criterion of score 3).

The comparison of Experiments 1 & 3 makes the basic point of the phenomenon, and the difference between "my" hand and "your" hand. That general difference does not depend on the cutoff score used. Further, the deceptive test here helps to establish the validity of the phenomenon. Most participants reported the same visual sensation despite the attempt to deceive them.

This is Figure 1 parts b-d from the article.


What about Experiment 4 (frame d)? It's the same basic experimental design as with Experiment 1, but this time there is a huge positive response. Most of the people report a 5 or 6; those scores were rare in the other experiments. What's different with Experiment 4? The subjects of this experiment have synesthesia. People with synesthesia report unusual connections between the senses. For example, some may report associating a particular color with a particular number. It's intriguing that people with one type of unusual cross connection, synesthesia, are likely to report the type of cross connection of the current work. It's intriguing -- but we have no idea what it means.

The article contains one very different kind of experiment, which also helps to establish that the phenomenon reported here is real. The scientists do eye-tracking experiments. The simple answer is that people who report seeing their hand move in total darkness are more likely to show eye-tracking of the moving hand than those who do not report seeing the hand. Apparently, the brain not only attributes vision to the eyes, but tells the eyes where to look -- even in total darkness.

News story: 'Kinesthesia' Lets Us See, Perceive Our Own Actions In The Dark Without Even Noticing. (Medical Daily, October 31, 2013.)

Video. There is a promotional video from the University of Rochester. It's called What Synesthesia Can Tell Us About Connections in the Brain. It is available directly at YouTube. (4 minutes)

The article: Kinesthesis Can Make an Invisible Hand Visible. (K C Dieter et al, Psychological Science 25:66, January 2014.) (Put the title into Google Scholar, and you may find a copy from the authors at Univ Rochester. At last check, there were multiple links there, with various outcomes. Look around; one is the final published article.)

More about synesthesia:

* Can people learn to be synesthetic? (January 7, 2015).

* Synesthesia: the good side? (January 14, 2012).

More on the interrelationships of the senses: Connecting the senses (April 26, 2011).

More about eye tracking: Signs of autism in 2-month-old babies (February 7, 2014).

Whales in the Chilean desert -- the oldest known case of a toxic algal bloom?

April 13, 2014

A construction crew working on a highway through the Chilean desert found a whale. Paleontologists have investigated the site, and it's rather interesting.

The photo above shows one of the observations.

It is reduced from the figure in the Science Daily news story.


Finding marine animal skeletons in the desert or in mountains is not novel. This is land that used to be oceanic or nearby; it has been uplifted over the eons. Chile is one of the most geologically active areas in the world, as the major earthquakes in early April reminded us. But there is much more to this particular story, as reported in a new article.

Among the striking observations...

- There were skeletons of many animals -- various types of whales and other marine animals.

- Collections of animals were found at four different elevations in the area, corresponding to four different dates.

- The arrangement of the fossils suggested that the animals had died at sea, and later were washed ashore.

A simple interpretation of the findings is that each layer of bones represents a mass stranding event. The multiple layers represent distinct mass stranding events, probably over several thousand years. Nothing like this had ever been seen in the fossil record.

What caused the mass strandings? The scientists consider some possibilities, and end up suggesting that the mass strandings were due to blooms of toxic algae, much like what we now call red tides. We know such events occur now, and we know that whales can be the victims. The scientists take the assemblages of whales in the Chilean desert as evidence for algal blooms. The site is estimated to be 6-9 million years old -- older than any algal bloom previously known.

As you read this, be sure -- as always -- to distinguish what is fact from what is interpretation. The facts are collections of dated fossils, with the figure above an example. The algal bloom is an interpretation, one made after considering possible causes and the evidence at hand. However, interpretations are subject to change. In fact, one purpose of offering interpretations (which includes evidence against other interpretations) is to stimulate further work. The facts here are remarkable; the interpretation is quite tentative.

News stories:

* Mass strandings of marine mammals blamed on toxic algae: Clues unearthed in ancient whale graveyard. (Science Daily, February 25, 2014.)

* The Tiny Culprit Behind A Graveyard of Ancient Whales. (E Yong, Not Exactly Rocket Science (National Geographic blog), February 25, 2014. Now archived.) More pictures. And a good summary of the argument that the assemblages are due to algal blooms.

The article, which is freely available: Repeated mass strandings of Miocene marine mammals from Atacama Region of Chile point to sudden death at sea. (N D Pyenson et al, Proceedings of the Royal Society B 281:20133316, April 22, 2014.)

More about whale bones: A quasi-quiz: The fate of bone and wood on the Antarctic seafloor -- and the discovery of new bone-eating worms (August 20, 2013).

More about whales:

* If it quacks like a whale... (August 25, 2014).

* Effect of simulated sonar on whale behavior (March 16, 2014).

More from the Atacama desert of Chile: Life that thrives on hot air (September 5, 2009).

More algal blooms -- even older: A major algal bloom associated with the dinosaur extinction event? (May 13, 2016).

More about mountains being uplifted: Our mountains are growing (May 19, 2012).

More about highways and animals:

* The first Americans: Is it possible we have the date wrong by 100,000 years? (June 28, 2017).

* Why the bear used the overpass to cross the highway (May 11, 2014).

Google tracks the flu -- follow-up

April 11, 2014

Original post: Google tracks the flu (April 30, 2009).

In that post we noted that Google was using their records of what people searched for to predict the incidence of influenza. The idea is clear. The question is, how well have they done?

Here are some data...

The graph shows the percent of doctor visits due to influenza-like illnesses (ILI) over time, as estimated by four methods.

Look at two of those curves. The dark blue line is the official estimate from the Centers for Disease Control (CDC), based on surveillance reports; the orange line is the Google Flu Trends prediction. (We will briefly note the other two curves at the end.)

For the first two years, the two curves are quite close. After that, the Google Flu curve is high; that is particularly obvious during the last peak (Winter 2013 flu season).

A recent article in Science, presented as a Policy Forum, addresses that discrepancy. What went wrong? It's an interesting read, with implications beyond this particular issue.

The figure above is the top part of the main figure from the article. (The bottom part of that figure plots the same data as a percent error from the CDC estimate.)


At the heart of the problem is the underlying philosophy: that we can generate useful information by simply collecting lots of numbers. This is the "Big Data" issue, though it is fair to note that not everyone means the same thing by that term.

Among the points the authors make... What Google does is not transparent; thus it is not subject to the usual scrutiny of scientific analyses. Further, Google has said that they have tuned their method, and they will presumably continue to do so. Importantly, this includes tuning of not only the Google Flu algorithms but also Google search, which provides the raw data. In one sense, that's fine, but it also reminds us of their lack of transparency. What Google Flu does is to give an answer, but with little explanation or understanding. Is that good? It might be if it were correct. But we now know it may not be correct.

It's an interesting story. I encourage people to read it, whether they care much about tracking the flu or not. The article comes down rather hard on Google. Perhaps they overdo that, so let's not get distracted by trying to judge Google. The bigger issue is how we learn to use Big Data. Google is a pioneer there; that in itself makes them open to criticism.

News story: When big isn't better: How the flu bug bit Google. (Terra Daily, March 18, 2014.)

The article: The Parable of Google Flu: Traps in Big Data Analysis. (D Lazer et al, Science 343:1203, March 14, 2014.) Check Google Scholar for a freely available copy of the final published article.

What about the other two curves in the graph? One, labeled "lagged CDC", is for making predictions based on the most recent CDC data available, which is typically about 2-3 weeks old. The curve labeled "Google Flu + CDC" is based on a combination of the lagged CDC numbers with the Google Flu numbers. The general pattern is that this combined indicator is better than the individual indicators. Using more information is good. See the lower graph in the article, where the data is plotted as errors.
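For readers who want to see what "plotted as errors" could mean in practice, here is a minimal sketch. It assumes the simplest reading of a percent error relative to the CDC figure; the function name and the example numbers are made up for illustration and are not taken from the article.

```python
# Sketch: express a flu estimate as a percent error relative to the CDC figure.
# This is one natural reading of "percent error", not necessarily the article's exact method.

def percent_error(estimate: float, cdc_value: float) -> float:
    """Signed percent error of an estimate relative to the CDC value (positive = overestimate)."""
    if cdc_value == 0:
        raise ValueError("CDC value must be non-zero")
    return 100.0 * (estimate - cdc_value) / cdc_value


if __name__ == "__main__":
    # Made-up numbers for illustration only; they are NOT taken from the article.
    print(percent_error(estimate=6.0, cdc_value=4.0))
```

A positive value means the method overestimated the percent of doctor visits; a negative value means it underestimated.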

* Previous post on the flu: Face masks and flu virus transmission on airplanes: an analysis of a flight (August 27, 2013).

* Next: Transparency of clinical trials -- Is the flu drug Tamiflu worthless? (May 4, 2014).

Many posts on various flu issues are listed on the supplementary page: Musings: Influenza.

More about Google:

* In what year was the word "slavery" most used in books? (February 23, 2011).

* elgooG (October 12, 2009).

April 9, 2014

When lightning strikes a tree...

April 8, 2014


What's left of a redwood tree in a residential neighborhood less than a mile from the UC Berkeley campus, after a lightning strike last week. It's now about 25 feet high -- about a third of what it had been.


News story: Lightning strikes Berkeley tree, sends wood chunks flying. (Berkeleyside, March 31, 2014.) Includes several nice photos of the area. The photo shown above is reduced from the top photo in this story.

Apparently there was some similar damage in the Sausalito area during the storm. No injuries or major property damage. It was a noisy time -- in an area where thunderstorms are not common.

More about lightning:

* What's the connection: ships and lightning? (October 14, 2017).

* A story of ball lightning and burning earth (February 4, 2014).

There is more about energy on my page Internet Resources for Organic and Biochemistry under Energy resources. It includes a list of some related Musings posts.

Methane leaks -- relevance to use of natural gas as a fuel

April 7, 2014

Methane (natural gas, CH4) is playing an interesting role in current discussions of fuels and greenhouse gases. Burning methane leads to much less greenhouse gas emission than does burning coal. That is because much of the fuel value of CH4 comes from burning the hydrogen in it. Therefore, there is less CO2 emitted for a given amount of energy released from the fuel. By this argument, switching from coal to natural gas is a good move. On the other hand, methane itself is a greenhouse gas -- a potent one, about 30 times more potent than CO2. Even fairly small leakages of unburnt methane to the atmosphere could more than negate its advantage as a clean fuel.
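The trade-off can be put into rough numbers. The sketch below is a back-of-envelope estimate, not a calculation from the article. It uses the combustion stoichiometry (burning 1 kg of CH4 gives 44/16 = 2.75 kg of CO2) and the potency factor of about 30 noted above. Two round values are assumptions of this sketch: about 50 MJ of heat per kg of methane, and about 95 kg of CO2 per GJ of heat from coal. It asks what fraction of the methane produced would have to leak before gas is no better than coal.

```python
# Back-of-envelope sketch: how much methane leakage cancels the advantage of gas over coal?
# Constants marked ASSUMED are round illustrative values, not numbers from the article.

CO2_PER_KG_CH4 = 44.0 / 16.0   # kg CO2 from burning 1 kg CH4 (stoichiometry)
CH4_POTENCY = 30.0             # kg CO2-equivalent per kg CH4 leaked (factor quoted in the text)
CH4_ENERGY_MJ_PER_KG = 50.0    # ASSUMED heating value of methane
COAL_CO2_KG_PER_GJ = 95.0      # ASSUMED CO2 emitted per GJ of heat from coal


def gas_co2e_per_gj(leak_fraction: float) -> float:
    """kg CO2-equivalent per GJ of heat from gas when leak_fraction of produced CH4 escapes unburnt."""
    kg_burned = 1000.0 / CH4_ENERGY_MJ_PER_KG  # kg CH4 burned per GJ of heat
    kg_leaked = kg_burned * leak_fraction / (1.0 - leak_fraction)
    return kg_burned * CO2_PER_KG_CH4 + kg_leaked * CH4_POTENCY


def break_even_leak_fraction() -> float:
    """Leak fraction at which gas and coal give the same CO2-equivalent per GJ."""
    kg_burned = 1000.0 / CH4_ENERGY_MJ_PER_KG
    # Solve kg_burned * (CO2_PER_KG_CH4 + CH4_POTENCY * x) = COAL_CO2_KG_PER_GJ, with x = f / (1 - f)
    x = (COAL_CO2_KG_PER_GJ / kg_burned - CO2_PER_KG_CH4) / CH4_POTENCY
    return x / (1.0 + x)


if __name__ == "__main__":
    print(f"gas, no leaks: {gas_co2e_per_gj(0.0):.0f} kg CO2e per GJ")
    print(f"break-even leak fraction: {break_even_leak_fraction():.1%}")
```

With these assumed values the break-even point is a leak of only a few percent of production. A different potency factor or coal figure would move it, which is part of why the actual leakage rate matters so much.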

Terminology confusion... We often hear the term carbon emission. Of course, not all C is equal. As noted above, CO2 and CH4 are both greenhouse gases, of quite different potencies. The term carbon emission in common usage typically means emission of CO2. Emissions of other greenhouse gases are sometimes converted to the equivalent amount of CO2.

Comment... Above I framed the issue in terms of a trade-off between coal and natural gas. You might wonder if that is the proper question. The point here is that it is one relevant question. We do use natural gas; we need to learn how to use it as cleanly as possible. There is a choice between coal and natural gas for some people; we need to address it as best we can. Climate change is complex. Looking at one piece at a time is one way to approach it. A corollary is that we need to be clear what the question is. Simply asking if natural gas is good is a poorly framed question; the question should be, good compared to what?

It would thus seem desirable to have a good understanding of the nature and magnitude of methane emissions into the atmosphere. A recent article in Science discusses this; the article attempts to take everything that has been reported about methane emission and integrate it into a coherent picture. Adam Brandt, the lead author, visited UC Berkeley in late March and gave a talk on the subject.

The main conclusion from the article is that we have a very poor understanding of methane emissions. In fact, the major role of the article may well be to help define the question rather than to reach a conclusion.

Nevertheless, the data that is available does suggest some conclusions. Even though they may be tentative, it is worth noting some of them...

Current estimates of methane emissions by the US Environmental Protection Agency (EPA) are likely to be low, perhaps by 50% or so. Among possible reasons for that are some procedural biases, and not taking into account some biological sources of methane, such as livestock and wetlands.

Despite the uncertainties, it is unlikely that the magnitude of methane emissions from natural gas operations negates its clean-fuel advantage over coal. (We should caution that some of the news media accounts of this report mis-represent this point.)

An issue of much current concern is production of natural gas by hydraulic fracturing (fracking). They find that methane leaks from this process are not large. This seems plausible. It's a more modern industry, largely developed during a time of greater consciousness about the problem of methane leakage. Further, methane is the product. Those whose business is natural gas have an economic incentive to minimize methane leakage. (In contrast, the petroleum producer may not have that incentive.)

An important finding that comes from analyses of individual units is that most emissions come from a fairly small number of very leaky units. For example, they note (p 734 middle), " ... one study measured ~75,000 components and found that 58% of emissions came from 0.06% of possible sources." This is good news, in a sense. It suggests that the industry can operate with a fairly low methane leakage rate. Programs to find and fix what they call "super-emitters" would be worthwhile; there is effort to develop such monitoring.
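To get a feel for how lopsided that quoted result is, here is a small arithmetic sketch. It uses only the three numbers in the quotation (the component count is approximate); the function name is hypothetical, and the "average of the rest" comparison is my own way of framing it, not a figure from the article.

```python
# Arithmetic on the quoted study: ~75,000 components, 58% of emissions from 0.06% of them.

N_COMPONENTS = 75_000           # approximate count quoted from the article
TOP_SHARE_OF_SOURCES = 0.0006   # 0.06% of possible sources
TOP_SHARE_OF_EMISSIONS = 0.58   # 58% of emissions


def super_emitter_summary(n=N_COMPONENTS, src=TOP_SHARE_OF_SOURCES, emi=TOP_SHARE_OF_EMISSIONS):
    """Return (number of super-emitters, ratio of their average leak to the average of the rest)."""
    n_top = round(n * src)
    avg_top = emi / n_top                 # share of total emissions per super-emitter
    avg_rest = (1.0 - emi) / (n - n_top)  # share of total emissions per ordinary component
    return n_top, avg_top / avg_rest


if __name__ == "__main__":
    n_top, ratio = super_emitter_summary()
    print(f"{n_top} components; each leaks roughly {ratio:,.0f} times the average of the rest")
```

In other words, more than half of the measured emissions trace back to only about 45 components, each leaking on the order of a couple of thousand times more than a typical one.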

An interesting concern is the role of abandoned wells (for either natural gas or oil). In the old days, wells that were no longer productive were simply abandoned. In many cases there is little or no record of where they are, much less any monitoring for possible leakage. (The number of dead wells is several times the number of active wells. p 29 of the Supplement.)

The analysis is about methane in North America. (The 16 authors are all in the USA and Canada.) The question of global methane emissions came up at the talk. It was beyond the scope of this report, but the questions would be the same.

How does one study methane emissions? There are two general approaches. One is to study what individual units (such as wells or associated processing equipment) emit; this is referred to as "bottom up". The other is to measure the CH4 in the atmosphere, and try to understand what affects it; this is "top down". Both of these are far more complex than they sound here. (Much of the talk was about the methodology.) For now, the two approaches do not agree well; the books don't balance!

This is an interesting and important topic. The new work is an admirable contribution. Getting the issues out on the table, with some preliminary suggestions, is good. But this needs more work. If you want more than my brief summary above, try the news story listed below. The article itself is rather dense, and is for those with a serious interest in either the policy or science sides.

News story: America's natural gas system is leaky and in need of a fix, new study finds. (Stanford University, February 13, 2014. Now archived.) From the lead institution. Good overview.

The article, which is presented as a Policy Forum: Methane Leaks from North American Natural Gas Systems. (A R Brandt et al, Science 343:733, February 14, 2014.) Check Google Scholar for a freely available copy. The Supplement is freely available at the journal web site.

Other posts on methane emissions include:

* Los Angeles leaked -- big time! (April 29, 2016).

* Boston is leaking (February 13, 2015).

* Space-based observation of atmospheric methane -- and the Four Corners methane hotspot (December 29, 2014).

* Quality of oil and gas wells -- fracking and conventional (August 18, 2014).

* Svalbard is leaking (March 7, 2014).

* Shale gas recovery using hydraulic fracturing (fracking) (October 7, 2013).

Related... Emissions from gas stoves, even when 'off' -- air pollution implications (February 21, 2023).

The following post addresses another aspect of the trade-off between CO2 and CH4: Climate change: Should we focus on methane? (March 24, 2012).

More on fracking... Fracking: Implications for energy usage and for greenhouse gases (October 26, 2014). This focuses on how less expensive gas might impact patterns of energy usage.

Also see:

* More about air pollution: The SF6 story: an emerging greenhouse gas? (August 25, 2020).

* National contributions to global warming (June 25, 2014).

There is more about energy on my page Internet Resources for Organic and Biochemistry under Energy resources. It includes a list of some related Musings posts.

Interfering with prion propagation?

April 5, 2014

There is no known method for preventing or treating prion diseases, such as bovine spongiform encephalopathy (BSE) in cows and Creutzfeldt-Jakob disease (CJD) in humans. A recent article hints at a lead.

Prion diseases involve misfolded proteins. A normal cellular protein takes on an abnormal shape, and induces other copies of the protein to do so, too. The abnormal protein, which aggregates, is toxic. Exactly how this all happens is not entirely clear. However, it is now possible to carry out this prion conversion in the lab. A team of scientists using that procedure found a substance that inhibits the prion conversion.

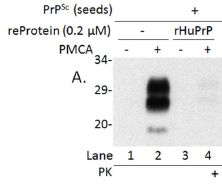

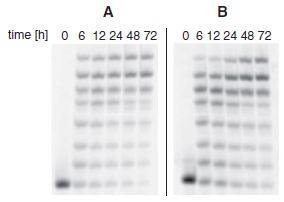

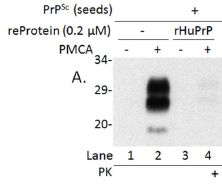

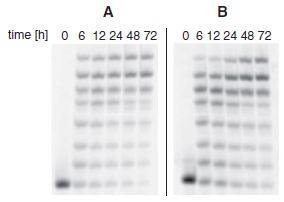

The following figure shows the results of a key experiment that demonstrates the inhibitor.


The general procedure here is to convert the prion in the lab, using a method called protein misfolding cyclic amplification (PMCA). (We use the terms convert and amplify interchangeably. The amount of abnormal protein is amplified, by converting normal protein to abnormal.) The product of the PMCA conversion is analyzed on an electrophoresis gel, to measure the amount of the abnormal protein that was made.

There are four lanes showing results. These differ in all possible combinations of two variables. One is whether the inhibitory substance (a protein) was added; this is labeled "reProtein 0.2 (µM)". The other is whether amplification was actually run; it is labeled "PMCA".


Lane 2 shows the result of a normal PMCA amplification. It is + for PMCA and - for the inhibitory protein. You can see that there is a big black band at about 29 on the scale. (The 29 means the molecular weight of the protein is about 29,000.)

Lane 1 is a control, in which amplification was not done. (Note the - for lane 1 in the row labeled PMCA.)

Lane 4 is the important one. In this case, the extra protein (called rHuPrP) was added -- and it inhibited amplification. That's the key point.

Lane 3 has the inhibitory protein but there is no amplification. Not surprisingly, there is no product.

This is part of Figure 1 from the article. (The full figure includes more controls.)


What is this magic stuff -- the substance that inhibits prion conversion in the lab? It's actually a form of the normal human protein. The scientists show that it binds to the abnormal protein, apparently preventing it from doing its usual harm. Is this useful? They do one experiment with mouse cells, which suggests that it might be. I would be very cautious about this new finding; it's a long road from these novel findings to a useful treatment. However, for a serious disease with no mode of prevention or treatment, any lead is worth following up.

News story: Recombinant Human Prion Protein Inhibits Prion Propagation. (Science Daily, October 9, 2013.)

The article, which is freely available: Recombinant Human Prion Protein Inhibits Prion Propagation in vitro. (J Yuan et al, Scientific Reports 3:2911, October 9, 2013.)

Previous post on prions: Prion diseases -- a new concern? (March 19, 2012).

The assay used here to measure prion propagation has been adapted for use with Alzheimer's disease. An early-detection system for Alzheimer's disease? (June 28, 2014).

For more about prions, see my page Biotechnology in the News (BITN) - Prions (BSE, CJD, etc). It includes a list of related Musings posts.

Also see: A better way to un-boil an egg -- and why it might be useful (March 20, 2015).

YY in the mouth?

April 4, 2014

How appetite is controlled is the subject of much study. Appetite is one component of the broader topic of obesity. Understanding these is good basic science. Of course, there is also interest in the possible development of drugs, but let's emphasize the underlying science.

A hormone of interest is peptide YY (PYY). (The YY denotes that two consecutive amino acids are tyrosine; Y is the one-letter code for tyrosine.) PYY is a natural hormone; it's made in the gut, in response to digesting food, and circulates via the blood. It binds to receptors, inducing satiation -- the feeling of being full. That is, PYY suppresses appetite and leads to reduced food intake. It also induces what is called taste aversion; when given to humans systemically (by injection into the bloodstream), that manifests as a severe response politely called visceral sickness. Clinical trials of PYY as an appetite suppressant were short-lived.

A new article reports that giving PYY to mice directly in the mouth, as a spray, induces the desired appetite loss, without the taste aversion side effect. Of course, that has immediate implications for possible use as a drug, but it also raises the question of what is going on.

The scientists analyze what is going on by studying the receptors for PYY and what their effect is. Overall, what they find is a satisfying explanation for the observed results. The key point is that PYY receptors in the mouth selectively signal the region of the brain involved in satiety. In fact, it's now known that PYY is present in saliva as well as in the blood.

The article is quite detailed. But overall it offers some interesting results. We see that a hormone can have different effects depending on how it is administered, and we can understand that in terms of how the body is "wired". The article is part of our increasing understanding of what determines how much we eat -- a process not well controlled by many people.

News story: UF researchers use oral peptide spray to stimulate weight loss in animals. (University of Florida, December 19, 2013.) From the lead institution.

The article: Salivary Peptide Tyrosine-Tyrosine 3-36 Modulates Ingestive Behavior without Inducing Taste Aversion. (M D Hurtado et al, Journal of Neuroscience, 33:18368, November 20, 2013.)

More on appetite...

* What if one gave appetite-suppressing pills to mosquitoes? (March 15, 2019).

* Fructose and your brain (January 28, 2013).

Other parts of the obesity story...

* Could we treat obesity with probiotic bacteria? (August 5, 2014).

* Why exercise is good for you, BAIBA (March 10, 2014).

A post that is not relevant to the current topic... Y-Y: the first (May 5, 2019).

PYY is one of several hormones that affect, in one way or another, body weight. The long list of such hormones is confusing, but is perhaps to be expected. Body weight homeostasis is a big issue -- for your body; it shouldn't be surprising that multiple regulatory circuits are involved. Understanding them all opens the possibility of more specifically diagnosing why a particular person may not be controlling body weight satisfactorily. One of the very first of these hormones to be discovered was leptin, made by fat tissue. There is some information about leptin and some of the others in the section of my page Organic/Biochemistry Internet resources on Lipids.

April 2, 2014

Agriculture: the Swiss spaghetti harvest

April 1, 2014

At the right... A Swiss lady harvests the spaghetti.



For more, see the video, which is from a BBC telecast: Spaghetti video, at YouTube. (3 minutes. There is sound, but it doesn't start for about a half minute.) You should, of course, not read any of the text material or labeling on the page.

What does the BBC have to say about this, in retrospect? On this date. (BBC, MMVIII.) The figure above is from this page; it is presumably a still from the video.

This is rather old, and I couldn't find any recent articles on the topic. But perhaps it is appropriate for the day.

Next pasta post: Briefly noted... Pasta that is stronger than steel (January 16, 2019).

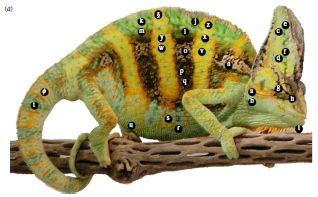

Why chameleons change color (and get thin)

March 31, 2014

It may be common knowledge that chameleons change color as camouflage. However, that may be only part of the story. A new article provides evidence that one type of chameleon changes color to signal its status in a fight. The article is accompanied by a wonderful video; check it out regardless.

To set the stage -- literally...


The arena.

For what? For a contest between two chameleons.

This is Figure 1 from the "Supplementary Information" posted at the journal web site for the article.


What did the scientists do? They staged "contests" between pairs of chameleons, and made video recordings. Using the videos, they then analyzed the color patterns of the contestants over time, and correlated the colorations with the outcome. In doing the analysis, they took into account how the chameleon visual system works; that is, they tried to see what the chameleons saw. The analysis allowed the scientists to reach some conclusions about the role of chameleons changing color.

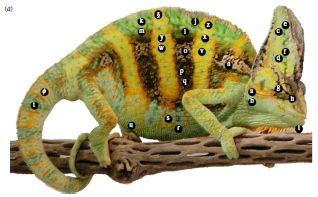

One of the animals used in the study.

The chameleons studied here are male veiled chameleons, Chamaeleo calyptratus.

The labels show the regions that the scientists measure.

This is reduced from Figure 1a from the article.



For example, brightness of body stripes was a good predictor of the willingness of a chameleon to fight. Interestingly, the animals turn sideways during the approach phase, as you can see in the video. On the other hand, brightness of the head was the best predictor of victory; the speed of changing head color was also predictive. Victory was generally recognized when the loser walked away; this usually occurred prior to much physical encounter. (The scientists intervened in one case, out of 45, where injury seemed likely.)

What do we learn from this? Animals -- including humans -- fight. Some aspects of the fighting have become ritualized, and fights are resolved during the preliminaries: the animal who knows he will lose walks away, with little or no physical damage. The current work gives some insight as to how these chameleons convey their status and resolve their disputes. We also learn that the color-changing ability of the chameleon is an active part of social communication, not simply about hiding.

Of course, many questions remain. For example... What is the physiology of the signals to change color? Do some chameleons send deceptive signals? If this kind of color changing is so good at resolving fights, why isn't it more common in the animal kingdom?

Video. There is a short video available with the article (article web site; choose Supplementary Information). It is also available at YouTube. (2 minutes; no sound.) The video shows the activities of four chameleons, using the arena shown above. You can see color changes. Even more strikingly, look for the changes in thickness of an animal when you have an end-on view -- which is when they are displaying sideways. (Does this give new meaning to the term "flat panel display"?) You may want to focus on a single quadrant of the video; the upper left is a good place to start the first time you watch it. A must see!

News stories:

* Study shows male chameleons fighting prowess tied to color changing abilities. (Phys.org, December 11, 2013.)

* Chameleons Convey Different Info With Different Body Parts. (Ed Yong, Not Exactly Rocket Science (National Geographic blog), December 10, 2013.) Now archived.

The article, which is freely available: Chameleons communicate with complex colour changes during contests: different body regions convey different information. (R A Ligon & K J McGraw, Biology Letters 9(6):20130892, December 23, 2013.)

More about animals that change color... Deceiving a rival male (August 28, 2012).

More about lizards... When should the eggs hatch? (June 11, 2013).

The Heartland virus -- follow-up

March 30, 2014

Original post: The Heartland virus (October 2, 2012).

In that post we briefly noted reports of a new virus associated with disease in humans. Only two cases were known, but both were serious. What has happened since? Has the virus become common? Has it faded away? Neither, it seems.

We now have a new article that gives a brief update on the Heartland virus. Over the past two years, six additional cases have been reported. A vector for the virus has been identified -- a tick, as had been suspected. That's about it. It's a short article (two pages).

What's the point? A new disease is emerging. It might turn out to be important; it might not. How do we tell? What should we be doing? It's likely that new viruses are emerging regularly; some will turn out to be important. HIV and SARS are recent examples that did turn out to be important. (The jury is still out on MERS, which is a "cousin" of SARS.) A regular stream of new influenza viruses also belongs here. And what about Ebola? We have new tools to help us recognize and study new viruses, but we don't really know how to tell which are going to be important. Do we wait until a virus has killed some number of people before we pay attention to it? Or do we develop early-warning systems that reveal anything new, whether it will turn out to be important or not?

The article, which is freely available: Notes from the Field: Heartland Virus Disease - United States, 2012-2013. (D M Pastula et al, Morbidity and Mortality Weekly Report 63:270, March 28, 2014.) Includes a picture of the Lone Star tick, the likely vector for this virus.

More about emerging diseases is on my pages for Biotechnology in the News (BITN): Emerging diseases. That discusses some general issues, and also links to some specific diseases that have emerged in recent decades, including all those mentioned above.

Cooperation: a key to separating gases?

March 28, 2014

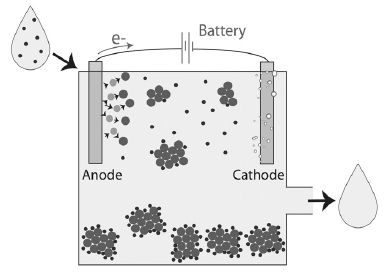

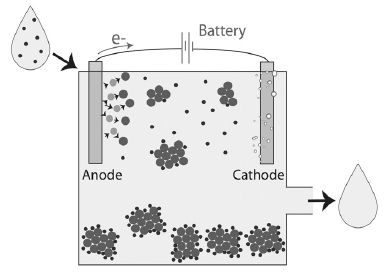

Separating chemicals that are very similar can be challenging. An example of industrial importance is separating the gases carbon monoxide, CO, and dinitrogen, N2. A new article offers some progress on separating these two gases.

The key is that the scientists develop a material that CO binds to cooperatively. That is, the more CO binds, the easier it is for more to bind. That is an unusual behavior. More commonly, one molecule does not know that another has bound. Or, perhaps, if there is a limited number of binding sites, then one molecule binding makes it harder for the next to bind; it's harder to find a vacant binding site if most are occupied -- just as with parking spots for the car. With cooperative binding, one molecule makes it easier for the next to bind. It's as if one molecule binding opens up new binding sites. In fact, that is exactly what the scientists think is happening. Think about... how might parking one car make more parking spots available?

A classic example of cooperative binding is in your body: the binding of dioxygen, O2, to hemoglobin in your blood cells. The first O2 that binds changes the shape of the hemoglobin so that it is now easier for a second O2 to bind. The cooperative binding of O2 promotes delivery of the gas to tissues and its release there.
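To make the idea of cooperative binding a bit more concrete, here is a small sketch using the Hill equation, a standard textbook description of cooperativity. It is not the model used in the article. The function name, the half-saturation pressure of 10, and the Hill coefficient of 4 are illustrative assumptions.

```python
def fraction_bound(pressure, half_saturation, hill_n=1.0):
    """Hill equation: fraction of binding sites occupied at a given pressure.

    hill_n = 1 describes simple (non-cooperative) binding;
    hill_n > 1 describes cooperative binding.
    All parameter values used below are illustrative, not from the article.
    """
    p_n = pressure ** hill_n
    return p_n / (half_saturation ** hill_n + p_n)


# Compare simple and cooperative binding over a range of pressures.
for p in (1.0, 5.0, 10.0, 20.0, 100.0):
    simple = fraction_bound(p, 10.0, hill_n=1.0)
    cooperative = fraction_bound(p, 10.0, hill_n=4.0)
    print(f"pressure {p:6.1f}: simple {simple:.3f}, cooperative {cooperative:.3f}")
```

Both curves pass through half occupancy at the same pressure. Below that pressure the cooperative curve lags behind the simple one, and above it the cooperative curve rises much more steeply.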

Let's look at some of their results for how CO binds to the new material.


The graph shows how much CO binds to the material as a function of the CO pressure.

The x-axis shows the pressure of CO added, in kilopascals (kPa). Note the log scale. There are two y-axis scales -- two scales for showing the same thing: how much CO binds. Both scales say "amount absorbed"; it's expressed two ways. The left scale is the simpler one: volume of gas bound per gram of material. We'll leave the right scale for now.


Look at how the amount of CO bound (y-axis) increases as CO is added (x-axis). The first part of the curve is rather simple: add more CO, get more bound. And then... At about 10 (10^1) kPa, the curve dramatically changes; it turns upward. It gets easier to bind more CO! Much easier. That's the cooperativity.

Just look at the solid symbols. (The open symbols are for gas release; we'll skip that.)

This is Figure 2C from the article.


What about N2? They do the same kind of binding curve with N2. The whole curve for N2 looks like that first part for CO. Nothing special happens to the N2 curve as more N2 is added. That is, the binding curves for both CO and N2 are similar at low pressures. However, at high pressures, CO shows cooperative binding, but N2 does not.

The real test is what happens with mixtures. Here are their results...


The graph summarizes the results for separating mixtures of CO and N2.

The x-axis shows the composition of the input ("feed") gas. The y-axis shows the composition of the output ("absorbed") gas. Both are labeled with the percentage of CO.

For example, the first point has 10% CO in the input. The output, based on absorption to the new material, is about 40% CO. That's a pretty good enrichment step. In fact, for all points, the output gas has higher CO than the input gas. (The dotted diagonal line shows what would happen if the output were the same as the input; all points are above the dotted line.)
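The arithmetic behind "a pretty good enrichment step" can be sketched as follows. The 10% and "about 40%" figures are the ones read from the graph above. The two function names, and the choice of a CO/N2 ratio-of-ratios as a measure of selectivity, are assumptions for illustration; the article may quantify the separation differently.

```python
def enrichment_factor(feed_co, absorbed_co):
    """How many times richer in CO the absorbed gas is than the feed gas."""
    return absorbed_co / feed_co


def selectivity(feed_co, absorbed_co):
    """CO:N2 ratio in the absorbed gas divided by the CO:N2 ratio in the feed.

    Assumes a two-component mixture, so the N2 fraction is 1 minus the CO fraction.
    """
    absorbed_ratio = absorbed_co / (1.0 - absorbed_co)
    feed_ratio = feed_co / (1.0 - feed_co)
    return absorbed_ratio / feed_ratio


feed = 0.10      # 10% CO in the input gas (from the text)
absorbed = 0.40  # about 40% CO in the absorbed gas (approximate reading)

print(f"enrichment factor: {enrichment_factor(feed, absorbed):.1f}")
print(f"CO/N2 selectivity: {selectivity(feed, absorbed):.1f}")
```

A point on the dotted diagonal line would give a value of 1 for both measures; anything above 1 means the material prefers CO.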

This is Figure S12 from the Supplementary materials accompanying the article.


Earlier I suggested you think about how parking one car might make more parking spots available. We might imagine that parking one car knocks out a garage wall, revealing a new area that is available for parking. If you prefer a gentler scenario, the car might actuate a switch that opens a door to a new area for cars; that's more likely to be a reversible system. What's going on with the CO may be like that. The material includes copper ions, Cu2+. The CO binds to the copper ions; when enough are bound, the material changes shape -- revealing more binding sites. N2 does not bind to the copper ions, thus does not open up new spaces.

That explanation is not sufficient to explain why the separation works, but we'll stop here. The article has more about the nature of the cooperativity, and why it leads to separation.

The nanoporous polymer they develop here is an example of a metal-organic framework, or "MOF" material.

Back to the first figure for a moment... The right-hand scale, which we ignored earlier, is the ratio of CO bound to the Cu2+. You can see that the curve turns upward at a ratio of 0.76. For now, we simply note that this scale reflects the importance of the Cu2+ to the story.

Bottom line... A team of scientists has designed a new material to address a problem that is important to the chemical industry. In lab scale tests, it seems to work, and they have at least some understanding of why. Whether it is practical, either in its present form or as developed further, is open.

News story: Adaptable crystals allow quick, efficient separation of carbon monoxide from gas mixtures. (Nanowerk News, February 5, 2014.)

The article: Self-Accelerating CO Sorption in a Soft Nanoporous Crystal. (H Sato et al, Science 343:167, January 10, 2014.) Supplementary materials at the article web site include a short video (one minute; no sound; freely available), with a cartoon version of the separation process.

More about MOFs:

* Separating isotopes of hydrogen using a MOF (April 18, 2022).

* Capturing NO2 from polluted exhausts (December 7, 2019).

* A novel device for measuring fluoride in water (March 1, 2019).

* Harvesting water from "dry" air (July 1, 2017).

* Doing X-ray "crystallography" without crystals (September 18, 2016).

* Zhemchuzhnikovite: a natural MOF (August 19, 2016).

More about porous materials:

* Liquids with holes (January 30, 2016).

* Upsalite: a novel porous material (September 6, 2013).

Other posts that mention carbon monoxide...

* A treatment for carbon monoxide poisoning? (January 13, 2017).

* Garlic or rotten eggs? (February 8, 2010).

* Seeing molecules under a microscope (September 19, 2009).

March 26, 2014

pH and the color of petunias

March 26, 2014

The color of petunias is due to a type of pigment called an anthocyanin. The color of anthocyanin pigments depends on the acidity. In fact, it has long been known that the usual red color of petunias is due to the vacuole -- the cellular compartment where the pigment is -- being quite acidic. Blue petunias arise when that compartment is less acidic.

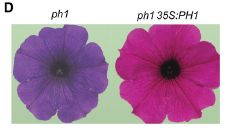

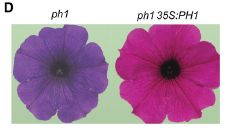


Blue and red petunias.

Explanation later.

This is Figure 1D from the article.


A recent article adds to our understanding of how acidity of a cellular compartment is controlled. It has been generally understood that there are proton pumps. The proton is the hydrogen ion, H+; acidity is the amount of that ion present. The pH is a number that represents the amount of H+; it is thus a measure of acidity. The new finding is that petunias have two proton pumps for the vacuole. Blue petunias arise when one of them is mutated, leading to vacuoles that are still acidic, but less so than "normal". Interestingly, this second proton pump seems to be a novel type.
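For those who like to see the numbers: pH is defined as the negative base-10 logarithm of the H+ concentration, so each pH unit is a ten-fold change in H+. A minimal sketch follows. The function names are hypothetical, and the example pH values are made up for illustration; they are not measurements from the article.

```python
import math


def ph_from_concentration(h_molar):
    """pH is the negative base-10 logarithm of the H+ concentration (mol/L)."""
    return -math.log10(h_molar)


def concentration_from_ph(ph):
    """Inverse of the above: H+ concentration (mol/L) for a given pH."""
    return 10.0 ** (-ph)


def fold_more_acidic(ph_a, ph_b):
    """How many times more H+ is present at ph_a than at ph_b."""
    return 10.0 ** (ph_b - ph_a)


# Illustrative values only: a "more acidic" and a "less acidic" compartment.
more_acidic, less_acidic = 5.5, 6.0
print(f"H+ at pH {more_acidic}: {concentration_from_ph(more_acidic):.2e} mol/L")
print(f"H+ at pH {less_acidic}: {concentration_from_ph(less_acidic):.2e} mol/L")
print(f"fold difference: {fold_more_acidic(more_acidic, less_acidic):.2f}")
```

The point is that even a modest shift in pH, well under one unit, corresponds to a several-fold change in the amount of H+.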

In the figure above, the petunia on the left, the blue one, is labeled ph1. This means that this petunia carries a mutation in the ph1 gene; that's the new proton pump gene the scientists found. The right-hand petunia, the red one, is labeled ph1 35S:PH1. The first part of that, the lower case ph1, means it carries the same ph1 mutation as the left-hand petunia. However, the scientists have added to it a copy of the normal (wild type) PH1 allele, denoted by capital letters. Adding back the suspect gene is a good way to verify that a defect was actually due to what you thought it was.

To elaborate on that last point... The first petunia carries a ph1 mutation. The scientists think that the ph1 mutation is responsible for the color. However, it is hard to know for sure; perhaps there is some other problem with this strain. Adding back the suspect gene tests that. If the blue color were due to some other problem, rather than the ph1 gene, then adding back a good PH1 gene would not rescue it. This is a common approach to test that the cause of a genetic defect has been properly identified.

News story: Roses are red -- why some petunias are blue. (e! Science News, January 3, 2014.)

The article, which is freely available: Hyperacidification of Vacuoles by the Combined Action of Two Different P-ATPases in the Tonoplast Determines Flower Color. (M Faraco et al, Cell Reports 6:32, January 16, 2014.)

Among many posts on flowers...

* A "flower" that bites -- and eats -- its pollinator (December 27, 2013).

* Did the earliest dinosaurs like flowers? (October 14, 2013).

* Better enzymes through nanoflowers (July 7, 2012).

Another example of plants using these proton pumps: Why some citrus fruits are so sour (April 22, 2019).

Also see: Pumping tin (January 12, 2018).

More plant pigments: What is folium? (July 7, 2020).

Recovery of live, infectious virus from 30,000 year old permafrost

March 25, 2014

This is a story that has gotten considerable news attention.

A team of scientists has taken a sample of 30,000 year old arctic permafrost, thawed it out in the lab, and inoculated a portion into a culture of amoebae. Viruses grew. That is, they claim to have resurrected a virus that had been frozen away in the permafrost for 30,000 years.

Claims of resurrecting material that old are not new; Musings has noted a couple before [links at the end]. Viruses, being small and chemically simple, should be simpler to resurrect than more complex organisms. Further, proving that what was found really was as old as claimed is difficult. Perhaps the important test is whether others can replicate this type of work. Nevertheless, the claim is made, and at least catches attention.

It is of some interest that it is an amoebavirus -- of the general type of giant amoebaviruses that has been getting quite a bit of attention lately, including a Musings post [link at the end]. Interestingly, the permafrost-derived virus looks very similar to one of the modern viruses reported earlier. However, upon closer examination, it has an unusual combination of genome and life cycle properties. The whole story of these giant viruses is rather new and obviously quite incomplete.

So we note the story. The significance will become clearer with further work.

News story: 30,000-year-old virus from permafrost is reborn. (Phys.org, March 3, 2014.)

The article: Thirty-thousand-year-old distant relative of giant icosahedral DNA viruses with a pandoravirus morphology. (M Legendre et al, PNAS 111:4274, March 18, 2014.)

Background posts...

* A 30,000 year-old plant, with an assist from a squirrel (March 10, 2012).

* Life at age 34,000? (October 8, 2011).

* The largest known virus (August 5, 2013). The new virus looks similar to the one shown in this post.

Other Musings posts that start with permafrost...

* Mercury pollution from Arctic melting (February 19, 2019).

* Inuk, a 4000 year old Saqqaq from Qeqertasussuk (March 1, 2010). This one yields genome information, but not a living organism.

More about the Arctic: What if your compass pointed south? (October 24, 2014).

There is more about the large viruses of amoebae on my page Unusual microbes in the section A huge virus.

More about amoebae: Trogocytosis -- How an amoeba chews its food (May 16, 2014).

The mass of an electron

March 23, 2014

A new article presents the latest measurement. The scientists report that the mass of the electron is 0.000548579909067 atomic mass unit (amu). More precisely, they report the mass as 0.000548579909067(14)(9)(2) amu. And precision is indeed the issue. Those three terms in parentheses at the end are three types of uncertainties in their measurements; each number in parentheses is the uncertainty in the last digits of the reported measurement. The mass they found is quite similar to previous measurements, but the uncertainties here are much lower.
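The parenthetical notation can be unpacked with a short sketch. It splits the reported value into the number and its three uncertainties, each expressed in units of the last quoted digit. Combining the three in quadrature (square root of the sum of squares) is my assumption here; it treats the three as independent, which the article may or may not do. The function names are hypothetical.

```python
import math
import re
from decimal import Decimal


def parse_concise(text):
    """Split 'value(u1)(u2)...' into the value and a list of absolute uncertainties.

    Each parenthesized number is read as an uncertainty in the last digits of the value.
    """
    match = re.fullmatch(r"(\d+\.\d+)((?:\(\d+\))+)", text)
    if match is None:
        raise ValueError(f"not in value(uncertainty) form: {text!r}")
    value = Decimal(match.group(1))
    # Size of one unit in the last decimal place of the value.
    last_digit_unit = Decimal(1).scaleb(value.as_tuple().exponent)
    uncertainties = [Decimal(u) * last_digit_unit
                     for u in re.findall(r"\((\d+)\)", match.group(2))]
    return value, uncertainties


def combine_in_quadrature(uncertainties):
    """Root-sum-of-squares combination; assumes the uncertainties are independent."""
    return math.sqrt(sum(float(u) ** 2 for u in uncertainties))


value, parts = parse_concise("0.000548579909067(14)(9)(2)")
combined = combine_in_quadrature(parts)
print("value (amu):", value)
print("individual uncertainties (amu):", [str(u) for u in parts])
print(f"combined uncertainty (amu): {combined:.2e}")
print(f"relative uncertainty: {combined / float(value):.1e}")
```

Under that assumption, the combined uncertainty comes out at a few parts in 10^11 of the mass itself, which gives a sense of how precise the measurement is.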


"History of electron mass measurements." That's their title for this figure, which is Figure 4 from the article. It summarizes measurements of the electron mass over the last 20 years.

The graph shows the electron mass vs year -- with the axes perhaps reversed from what you might expect.